Eric Wong

My company rolled out AI tools 11 months ago. Since then, every task I do takes longer. I am not allowed to say this out loud.

Not because there is a policy. There is no policy. There is something worse than a policy. There is enthusiasm.

There is a Slack channel called #ai-wins where people post screenshots of AI outputs with captions like "this just saved me an hour." There is a VP who opens every all-hands with "the companies that adopt fastest win." There is a Director who renamed his team from Operations to Intelligent Operations. There is a peer review question that now asks: "How have you leveraged AI tools to enhance your workflow this quarter?" If the answer is "I haven't, because I was faster before," that is a career decision. So I leverage.

Emails. Before the tools, I wrote emails. This took the amount of time it takes to write an email. I did not measure it. Nobody measured it. The email got written and sent and it was fine. Now I write the email. Then I highlight the text and click "Enhance with AI." The AI rewrites my email. It replaces "Can we meet Thursday?" with "I'd love to explore the possibility of finding a mutually convenient time to align on this." I read the rewrite. I delete the rewrite. I send my original email. This takes 4 minutes instead of 2. The 2 extra minutes are the enhancement. I do this 11 times a day. That is 22 minutes I spend each day rejecting improvements to sentences that were already finished. In #ai-wins I posted a screenshot of the rewrite. I did not post the part where I deleted it. 23 people reacted with the rocket emoji. That is adoption.

Meetings. We have an AI notetaker in every meeting now. It joins automatically. It records. It transcribes. It summarizes. After each meeting I receive a 3-paragraph summary of the meeting I just attended. I read the summary. This takes 3 minutes. I was in the meeting. I know what happened. I am reading a machine's account of something I experienced firsthand.
Sometimes the account is wrong. Last Tuesday it attributed a comment about Q3 revenue to me. My manager made that comment. I spent 4 minutes correcting the transcript. Before the notetaker, I did not spend 7 minutes after each meeting correcting a robot's memory of something I personally witnessed. I attend 11 meetings a week. That is 77 minutes per week supervising a transcription nobody requested.

I mentioned this once. My manager said "think about the people who weren't in the meeting." The people who weren't in the meeting do not read the summaries. I checked. The read receipts show single-digit opens. The summaries exist not because they are useful but because they are there. I read them for the same reason.

Documents. I write a weekly status update. Before the tools, this took 10 minutes. I typed what happened. I sent it. My manager skimmed it. The system worked.

Now I open the AI writing assistant. I give it my bullet points. It produces a draft. The draft says "Significant progress was achieved across multiple workstreams." I did not achieve significant progress across multiple workstreams. I updated a spreadsheet and sent 4 emails. I rewrite the draft to say what actually happened. Then I run my rewrite through the grammar tool. It suggests I change "done" to "completed" and "next week" to "in the forthcoming period." I click Ignore 9 times. Then I send the version I would have written in 10 minutes. The process now takes 30.

I have been doing this every week for 11 months. I have added 20 minutes to a task that did not need 20 more minutes. I call this efficiency. I have been calling it efficiency for 11 months. That is what efficiency means now. It means the additional time you spend to arrive at the same outcome through a longer process. Nobody has questioned this definition. I have not offered it for review.

I kept a log once. 2 weeks. Every task, timed. Before-AI and after-AI. The after number was larger in every case. Every single one.
Not by a little. The range was 40 to 200 percent.

I deleted the log. I deleted it because it was a document that said, in plain numbers, that the AI tools make me slower. And a document like that has no place in a company where AI adoption is a strategic priority. I could not send it to my manager. He championed the rollout. I could not post it in #ai-wins. I could not raise it in a meeting because the notetaker would transcribe it and the summary would read "[Name] expressed concerns about AI tool efficacy" and that summary would be the first one anyone actually reads.

So I do what everyone does. I use the tools. I spend the extra time. I post in #ai-wins. I write "leveraged AI to streamline weekly reporting" in my review and my manager gives me a 4 out of 5 for innovation. I have innovated nothing. I have added steps to processes that were already finished. I have made simple things longer and labeled the difference with words that used to mean something.

Every week in #ai-wins someone posts a screenshot. And 20 people react with the rocket emoji. And nobody posts the part where they deleted the output and did the task themselves. Nobody posts the revert. Nobody posts the before-and-after timer. Nobody will.

Because "I was better at my job before the AI tools" is a sentence that cannot be said out loud in any company that has decided AI is the future. Every company has decided AI is the future. So we leverage. Quietly. Adding steps. Calling them optimization. Getting slightly less done, slightly more slowly, with slightly more steps, and reporting it as progress.

My yearly review is next month. There is a new section this year. "AI Impact Assessment." It asks me to quantify the hours saved by AI tools per week. I will write a number. The number will be positive. It will not be true. But the AI writing assistant will help me phrase it convincingly. That is the one thing it does well.

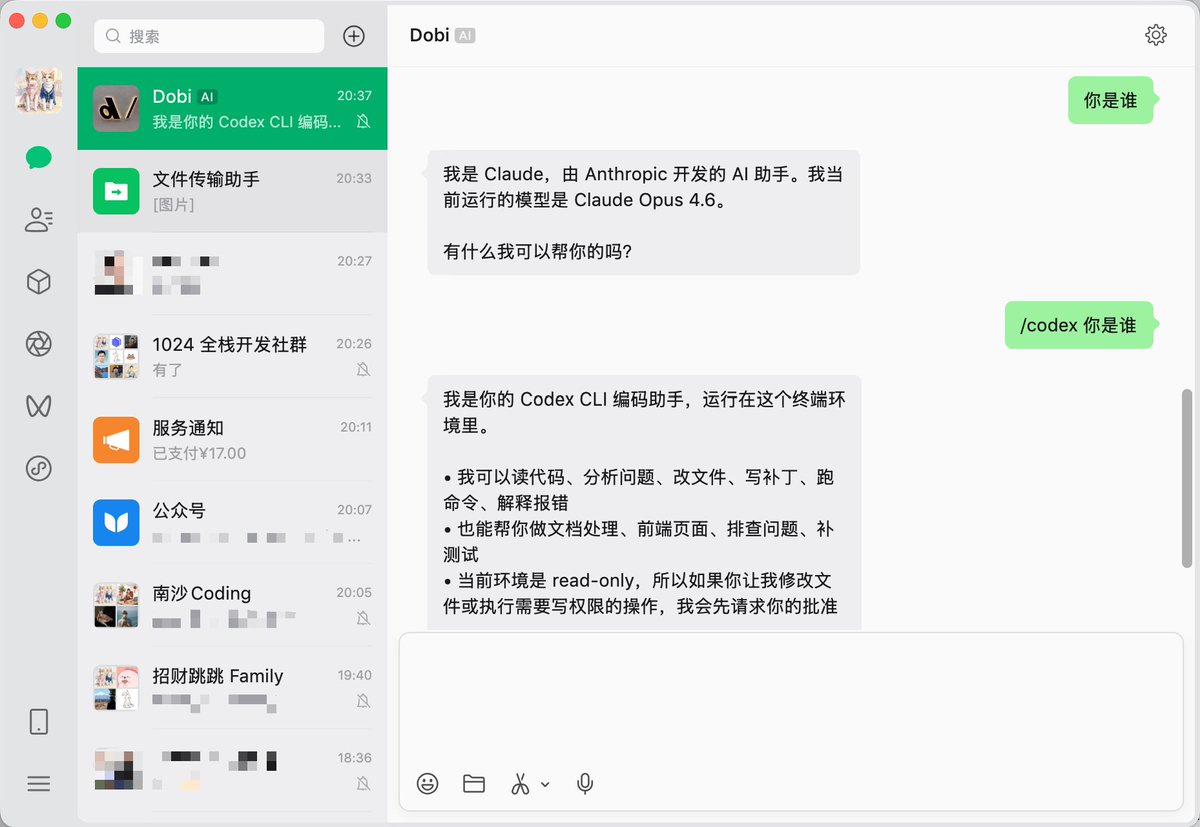

WeClaw now supports custom trigger commands for Agents. Just add an `aliases` field in the config file ~/.weclaw/config.json: { "codex": { "type": "acp", "command": "path-to/codex-acp", "aliases": ["gpt", "sam", "奥特曼"] }, "gemini": { "type": "acp", "command": "path-to/gemini", "aliases": ["pichai", "哈萨比斯"], "args": [ "--acp" ] } } Upgrade to the new version to use it 👇 weclaw update github.com/fastclaw-ai/we…
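
To make the effect of `aliases` concrete, here is a minimal Python sketch of how a config of this shape could be turned into a trigger lookup. It is not WeClaw's implementation: the `build_alias_index` helper and the rule that an alias may belong to only one agent are assumptions made for illustration, and the command paths are the placeholders from the post.

```python
import json

# Config with the same shape as the one in the post (placeholder paths kept as-is).
CONFIG_TEXT = """
{
  "codex": {
    "type": "acp",
    "command": "path-to/codex-acp",
    "aliases": ["gpt", "sam", "奥特曼"]
  },
  "gemini": {
    "type": "acp",
    "command": "path-to/gemini",
    "aliases": ["pichai", "哈萨比斯"],
    "args": ["--acp"]
  }
}
"""


def build_alias_index(config: dict) -> dict:
    """Map every agent name and each of its aliases to the agent name.

    Hypothetical helper: rejecting an alias claimed by two agents is an
    assumption, not documented WeClaw behavior.
    """
    index = {}
    for agent, spec in config.items():
        for trigger in [agent, *spec.get("aliases", [])]:
            if trigger in index and index[trigger] != agent:
                raise ValueError(
                    f"alias {trigger!r} is claimed by {index[trigger]!r} and {agent!r}"
                )
            index[trigger] = agent
    return index


config = json.loads(CONFIG_TEXT)
index = build_alias_index(config)
print(index["sam"])     # codex
print(index["pichai"])  # gemini
```

Building the index once at load time means a typo that reuses an alias fails at startup rather than silently triggering the wrong agent.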

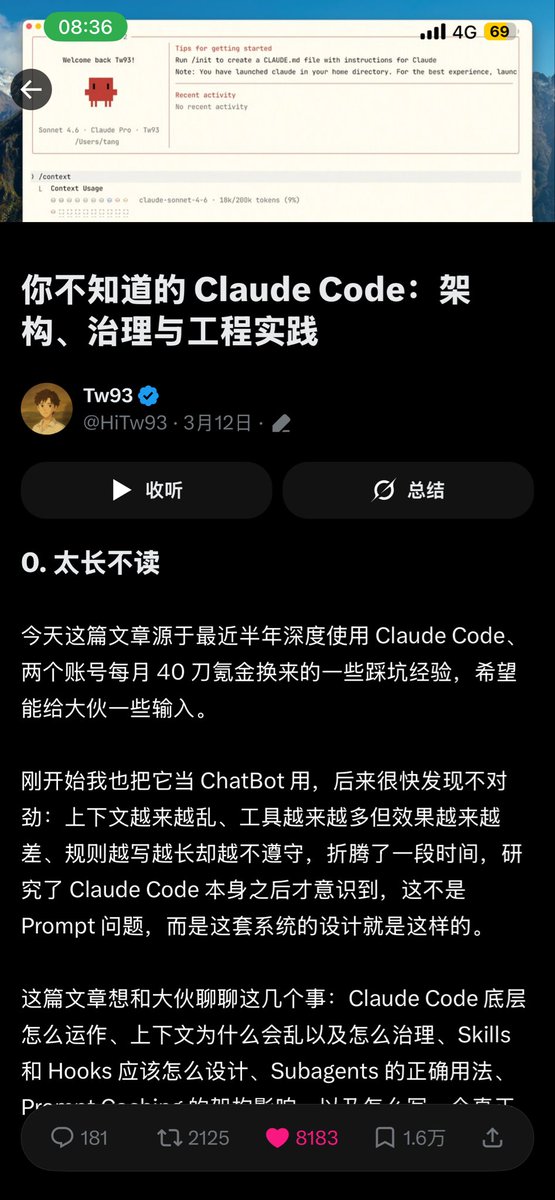

Today I read a lengthy piece on Harness Engineering: tens of thousands of words, almost certainly AI-written. My first reaction wasn't "wow, what a powerful concept." It was "do these people have any ideas beyond coining new terms for old ones?"

I've always been annoyed by this pattern in the AI world: the constant reinvention of existing concepts. From prompt engineering to context engineering, now to harness engineering. Every few months someone coins a new term, writes a 10,000-word essay, sprinkles in a few big-company case studies, and the whole community starts buzzing. But if you actually look at the content, it's the same thing every time: Design the environment your model runs in, meaning what information it receives, what tools it can use, how errors get intercepted, how memory is managed across sessions. This has existed since the day ChatGPT launched. It doesn't become a new discipline just because someone, for whatever reason, decided to give it a new name.

That said, complaints aside, the research and case studies cited in the article do have value, especially since they overlap heavily with what I've been building with how-to-sglang. So let me use this as an opportunity to talk about the mistakes I've actually made.

Some background first. The most common requests in the SGLang community are How-to Questions: how to deploy DeepSeek-V3 on 8 GPUs, what to do when the gateway can't reach the worker address, whether the gap between GLM-5 INT4 and official FP8 is significant. These questions span an extremely wide technical surface, and as the community grows faster and faster, we increasingly can't keep up with replies. So I started building a multi-agent system to answer them automatically.

The first idea was, of course, the most naive one: build a single omniscient Agent, stuff all of SGLang's docs, code, and cookbooks into it, and let it answer everything. That didn't work.
You don't need harness engineering theory to explain why: the context window isn't RAM. The more you stuff into it, the more the model's attention scatters and the worse the answers get. An Agent trying to simultaneously understand quantization, PD disaggregation, diffusion serving, and hardware compatibility ends up understanding none of them deeply.

The design we eventually landed on is a multi-layered sub-domain expert architecture. SGLang's documentation already has natural functional boundaries (advanced features, platforms, supported models), with cookbooks organized by model. We turned each sub-domain into an independent expert agent, with an Expert Debating Manager responsible for receiving questions, decomposing them into sub-questions, consulting the Expert Routing Table to activate the right agents, solving in parallel, then synthesizing answers.

Looking back, this design maps almost perfectly onto the patterns the harness engineering community advocates. But when I was building it, I had no idea these patterns had names. And I didn't need to.

1. Progressive disclosure: we didn't dump all documentation into any single agent. Each domain expert loads only its own domain knowledge, and the Manager decides who to activate based on the question type. My gut feeling is that this design yielded far more improvement than swapping in a stronger model ever did. You don't need to know this is called "progressive disclosure" to make this decision. You just need to have tried the "stuff everything in" approach once and watched it fail.

2. Repository as source of truth: the entire workflow lives in the how-to-sglang repo. All expert agents draw their knowledge from markdown files inside the repo, with no dependency on external documents or verbal agreements. Early on, we had the urge to write one massive sglang-maintain.md covering everything. We quickly learned that doesn't work.
OpenAI's Codex team made the same mistake: they tried a single oversized AGENTS.md and watched it rot in predictable ways. You don't need to have read their blog to step on this landmine yourself. It's the classic software engineering problem of "monolithic docs always go stale," except in an agent context the consequences are worse: stale documentation doesn't just go unread, it actively misleads the agent.

3. Structured routing: the Expert Routing Table explicitly maps question types to agents. A question about GLM-5 INT4 activates both the Cookbook Domain Expert and the Quantization Domain Expert simultaneously. The Manager doesn't guess; it follows a structured index. The harness engineering crowd calls this "mechanized constraints." I call it normal engineering.

I'm not saying the ideas behind harness engineering are bad. The cited research is solid, the ACI concept from SWE-agent is genuinely worth knowing, and Anthropic's dual-agent architecture (initializer agent + coding agent) is valuable reference material for anyone doing long-horizon tasks.

What I find tiresome is the constant coining of new terms: packaging established engineering common sense as a new discipline, then manufacturing anxiety around "you're behind if you don't know this word." Prompt engineering, context engineering, harness engineering: they're different facets of the same thing. Next month someone will probably coin scaffold engineering or orchestration engineering, write another lengthy essay citing the same SWE-agent paper, and the community will start another cycle of amplification.

What I actually learned from how-to-sglang can be stated without any new vocabulary: Information fed to agents should be minimal and precise, not maximal. Complex systems should be split into specialized sub-modules, not built as omniscient agents. All knowledge must live in the repo; verbal agreements don't exist. Routing and constraints must be structural, not left to the agent's judgment.
Feedback loops should be as tight as possible: we currently use a logging system to record the full reasoning chain of every query, and we've started using Codex for LLM-as-a-judge verification, but we're still far from ideal.

None of this is new. In traditional software engineering, these are called separation of concerns, single responsibility principle, docs-as-code, and shift-left constraints. We're just applying them to LLM work environments now, and some people feel that warrants a new name.

I don't know how many more new terms this field will produce. But I do know that, at least today, we've never achieved a qualitative leap on how-to-sglang by swapping in a stronger model. What actually drove breakthroughs was always improvements at the environment level: more precise knowledge partitioning, better routing logic, tighter feedback loops. Whether you call it harness engineering, context engineering, or nothing at all, it's just good engineering practice. Nothing more, nothing less.

There is one question I genuinely haven't figured out: if model capabilities keep scaling exponentially, will there come a day when models are strong enough to build their own environments? I had this exact confusion when observing OpenClaw: it went from 400K lines to a million in a single month, driven entirely by AI itself. Who built that project's environment? A human, or the AI? And if it was the AI, how many of the design principles we're discussing today will be completely irrelevant in two years?

I don't know. But at least today, across every instance of real practice I can observe, this is still human work, and the most valuable kind.
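
For readers who want the structured-routing idea in concrete form, here is a minimal Python sketch of a routing table that activates domain experts in parallel. It is not the how-to-sglang code: the keyword matching, the `Expert` class, the expert names, and the markdown file names are all hypothetical stand-ins, and the Manager's decomposition and synthesis steps are left out.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical routing table: topic keyword -> experts to activate.
ROUTING_TABLE: Dict[str, List[str]] = {
    "int4": ["quantization", "cookbook"],
    "fp8": ["quantization"],
    "glm-5": ["cookbook"],
    "deepseek-v3": ["cookbook"],
    "gateway": ["advanced_features"],
}


@dataclass
class Expert:
    name: str
    docs: List[str]  # stand-in for this domain's markdown files in the repo

    def answer(self, question: str) -> str:
        # A real expert would call a model with only self.docs as context.
        return f"[{self.name}] answered using {len(self.docs)} doc(s)"


def route(question: str, table: Dict[str, List[str]]) -> List[str]:
    """Return the experts to activate, in first-match order, without duplicates."""
    text = question.lower()
    selected: List[str] = []
    for keyword, names in table.items():
        if keyword in text:
            for name in names:
                if name not in selected:
                    selected.append(name)
    return selected


def consult(question: str, experts: Dict[str, Expert],
            table: Dict[str, List[str]]) -> List[str]:
    """Look up the experts in the table and query them in parallel."""
    names = route(question, table)
    if not names:
        return []  # nothing matched; a real system might fall back to a human
    with ThreadPoolExecutor(max_workers=len(names)) as pool:
        return list(pool.map(lambda n: experts[n].answer(question), names))


EXPERTS = {
    "quantization": Expert("quantization", ["quantization.md"]),
    "cookbook": Expert("cookbook", ["glm-5.md", "deepseek-v3.md"]),
    "advanced_features": Expert("advanced_features", ["gateway.md"]),
}

question = "Is the gap between GLM-5 INT4 and official FP8 significant?"
for line in consult(question, EXPERTS, ROUTING_TABLE):
    print(line)
```

The point of the sketch is that the selection of experts is a table lookup rather than a model decision, so a wrong activation can be fixed by editing the table in the repo.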