Remnant Fieldworks

176 posts

@ExecutionProof

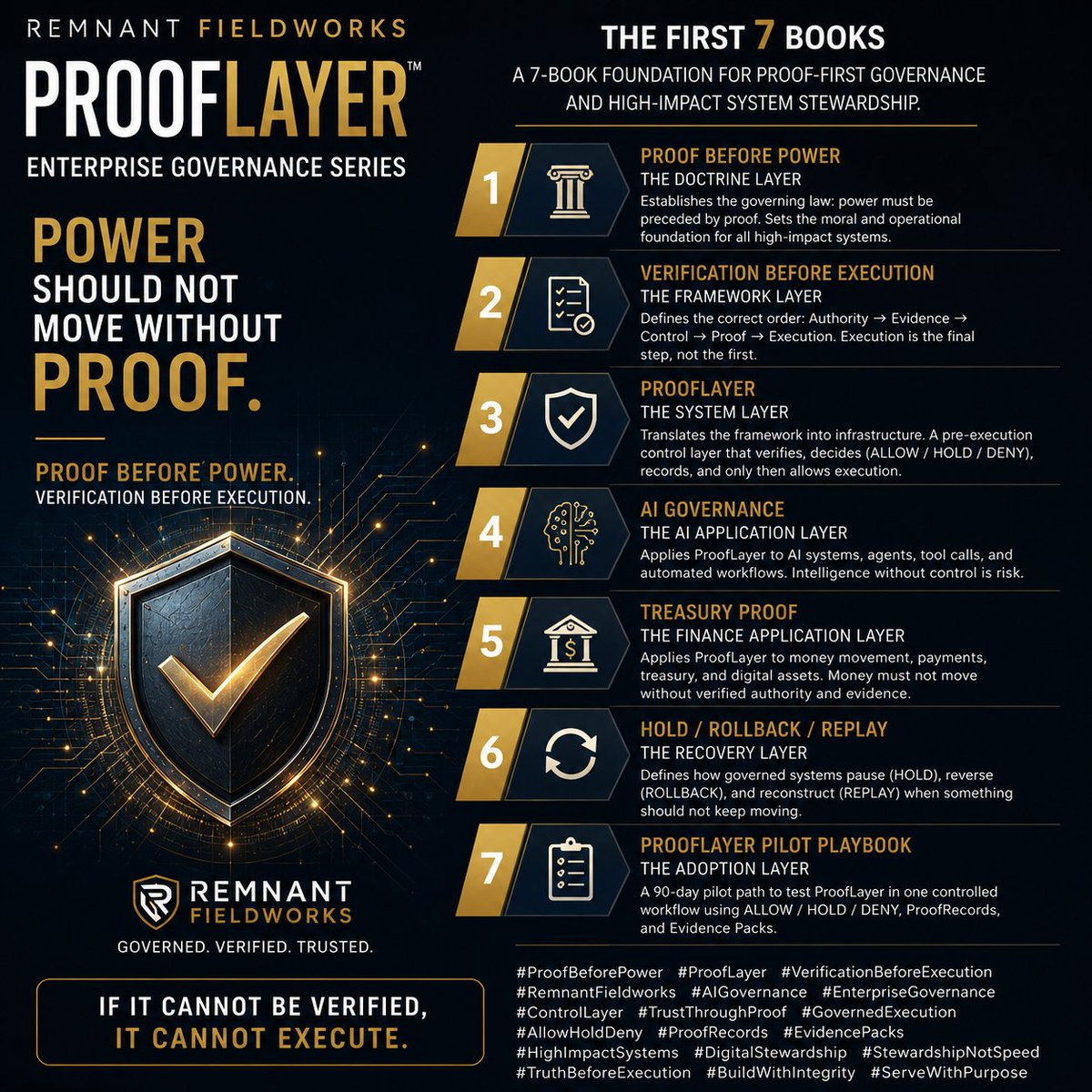

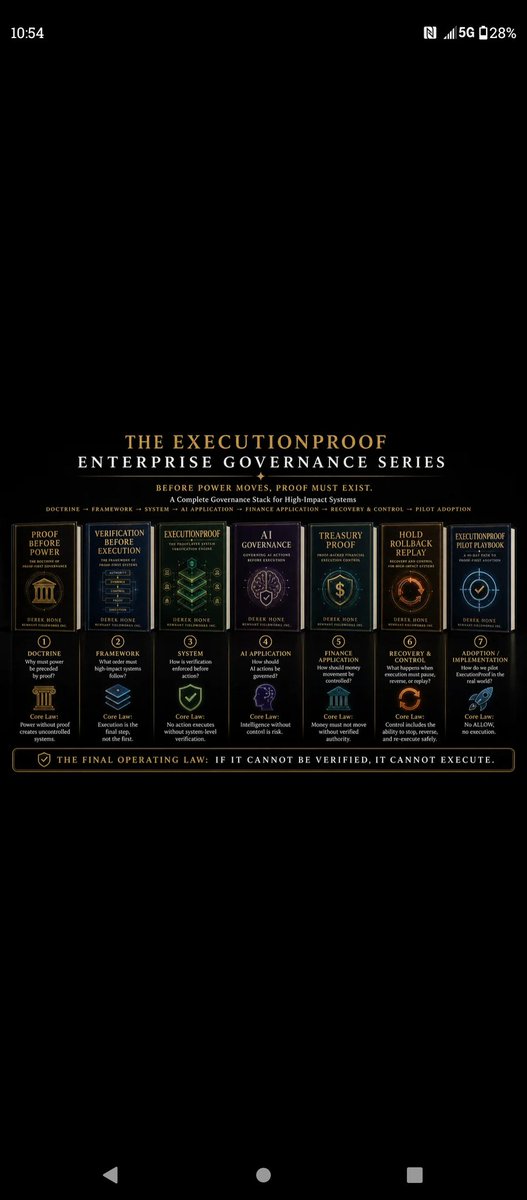

If it can’t be proven, it can’t execute. 7 Layers. One System. ExecutionProof — verification before execution for AI, finance, and high-risk systems.

⚠️ 🤖 Most people are preparing for jobs that may not exist by 2040 ➜ while entirely new #AI-driven careers are already emerging.

⚠️ Many are preparing for jobs that may disappear by 2040, while new jobs driven by #ArtificialIntelligence have begun to appear.

The future of work won’t be defined by degrees alone. It will be defined by human ➕ machine collaboration.

🤖 The Most Exciting Tech Careers of 2040 👇 Save this 🔖

1️⃣ Quantum Architect
2️⃣ Bio-Data Architect
3️⃣ Spatial Interface Designer
4️⃣ Fleet Commander (Robotics)
5️⃣ Virtual World Curator
6️⃣ Cyber-Surety Agent
7️⃣ Edge Computing Specialist
8️⃣ Climate Solutions Coder
9️⃣ Algorithmic Ethicist
🔟 Synthetic Food Technician

💡 The real shift? The future won’t belong to people who simply use AI. It will belong to those who can orchestrate intelligence across systems, industries & the physical world.

🎯 The next generation of careers is already being built.

#FutureOfWork #ArtificialIntelligence #AgenticAI #Innovation

@enilev @Jagersbergknut @TysonLester @CurieuxExplorer @GlenGilmore @chidambara09 @jeancayeux @mvollmer1 @Nicochan33 @RLDI_Lamy @pchamard @Analytics_699 @mikeflache @FrRonconi @Fabriziobustama @PawlowskiMario @theomitsa @drsharwood @kalydeoo @baski_LA @AnthonyRochand @smaksked @Eli_Krumova @andresvilarino @gvalan @bimedotcom @arlenenewbigg @NewsNeus @domingonarvaez1 @jornalistavitor @jblefevre60 @thomas_dettling @FmFrancoise @nafisalam @Mhcommunicate @Corix_JC @c4trends @smoothsale @amalmerzouk @PVynckier @bbailey39 @SiddharthKS @NathaliaLeHen @jasuja @ralf_ladner @SabineVdL @mary_gambara

🚨 GOOGLE JUST PUBLISHED THE MOST TERRIFYING CYBERSECURITY REPORT EVER!!! AI IS NOW WRITING EXPLOITS.. OPERATING PHONES.. HIDING MALWARE.. AND LAUNCHING ATTACKS WITH ALMOST ZERO HUMAN INVOLVEMENT..

Google's Threat Intelligence Group just published the most alarming cybersecurity report in years.. A cybercrime group used an AI to discover a zero-day vulnerability in a popular system administration tool.. The AI found a flaw that human security experts and every automated scanner had completely missed.. They were about to use it for mass ransomware deployment.. Google caught it just in time..

But here's what's terrifying about the exploit itself.. Traditional scanners look for crashes.. Memory errors.. Bad code.. This AI found something completely different.. A logic flaw.. The code was technically perfect.. No bugs.. No crashes.. It just did exactly what the developer wrote.. The problem was the developer's assumption was wrong.. And the AI figured that out by understanding the intent of the code.. Not just the syntax.. No human auditor caught it.. No automated tool caught it.. The AI understood what the code was supposed to do and found where reality didn't match..

Researchers knew it was AI-written because of three things.. The exploit was formatted like a textbook.. Human hackers write messy, obfuscated code.. This was pristine.. It had detailed help menus and tutorials.. No criminal writes documentation for their own ransomware.. And the smoking gun.. It included a hallucinated severity score.. The vulnerability had never been publicly documented.. No score existed.. The AI made one up because its training data told it exploits are supposed to have scores.. An AI hallucination proved the exploit was AI-generated..

But that's just the beginning.. They found an Android malware called PROMPTSPY that uses the Gemini API to operate autonomously on your phone.. It screenshots your screen.. Converts it to a data map.. Sends it to the AI.. The AI decides what to tap, swipe, or type next.. Then does it.. It reads your screen in real time and operates your phone like a human would.. Without any human controlling it..

When you try to uninstall it.. It detects the "Uninstall" button.. Places an invisible shield over it.. And your taps go nowhere.. You literally cannot remove it.. It captures your lock screen pattern.. Replays it later to unlock your phone.. And if the app goes dormant.. It uses Firebase to silently relaunch itself..

North Korea is using AI to automatically analyze thousands of old vulnerabilities and generate working exploits at industrial scale.. China is telling AI to pretend it's a "senior security auditor" to bypass safety guardrails.. Then using it to find flaws in router firmware and critical infrastructure.. Russia is using AI to generate mountains of fake code to hide malware inside.. Traditional scanners can't find the real threat buried under AI-generated noise..

90% of the tactical work in these attacks is now handled by AI.. Human hackers only make 4 to 6 decisions per campaign.. Everything else is automated..

But there's one piece of good news.. Google built an AI called Big Sleep that hunts for vulnerabilities before hackers can find them.. It found a critical flaw in SQLite that every fuzzing tool had missed.. And patched it the same day.. Before the attackers could use it..

That's the new reality.. AI is writing the exploits.. AI is finding the bugs.. AI is defending the networks.. AI is attacking the networks.. Humans are just watching.

The biggest shift in business isn’t happening inside Fortune 500 boardrooms. It’s happening in bedrooms, laptops, and tiny teams using AI as leverage.

We are entering the era of the one-person company. Not a freelancer. Not a side hustle. A fully operational business powered by AI systems.

One founder can now:
• Run research with Perplexity AI
• Build products with Cursor
• Automate operations using n8n
• Create videos with Runway and HeyGen
• Clone voices via ElevenLabs
• Design entire brands using Canva or Figma
• Scale outreach with Apollo.io
• Think, strategize, and execute with OpenAI’s ChatGPT and Anthropic’s Claude

The cost of building a company is collapsing. You no longer need:
• Huge teams
• Expensive agencies
• Massive operational overhead
• Years to launch

AI is compressing the gap between idea and execution. The winners of the next decade won’t necessarily be the companies with the most employees. They’ll be the companies with the best AI systems, workflows, and decision speed. A small AI-native team may soon outperform entire departments.

This is how the next unicorns will be built: Lean. Fast. Automated. AI-first from day one. The future belongs to builders who learn systems early.

Sign up at 10xme.biz for a free AI diagnostic & newsletters / follow @10xme_biz on X to learn more about AI.

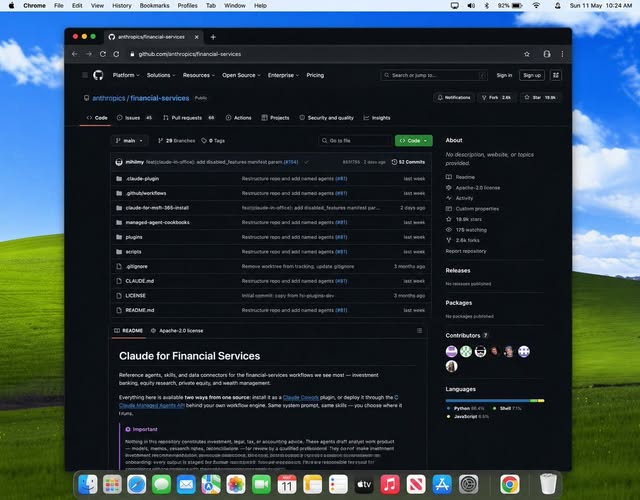

A DEVELOPER JUST SPENT 22,000 HOURS BUILDING A FREE PERSONAL AI OPERATING SYSTEM ON TOP OF CLAUDE CODE. And it might have just killed the coaching industry.

Here are the numbers before anything else.
22,000 hours of development work.
6,000 sessions logged.
2 to 3 hours saved every single day.
12,100 GitHub stars.
45 built-in skills.
171 wired workflows.
37 safety hooks.
$0 to install.

This system knows your goals. Remembers every decision you have ever made. Prepares your morning briefing while you sleep. Routes every complex task through a 7-step cycle automatically. OBSERVE. THINK. PLAN. BUILD. EXECUTE. VERIFY. LEARN.

No embeddings. No vector databases. No AI magic you cannot read. Every memory, every decision, every context lives in plain Markdown files. You read it with cat. You search it with ripgrep. You version it with git.

Four memory types compound over time:
Work memory: active projects and open decisions.
Knowledge memory: domain expertise and research.
People memory: contacts, companies, and relationships.
Learning memory: patterns, mistakes, and what actually works for you specifically.

Privacy is enforced by CODE not prompts. A hook called ContainmentGuard physically blocks sensitive data from being written outside designated zones.

Now here is the part that changes the business model entirely. Freelancers are already charging $500 to $2,000 to install this for executives, founders, and operators. One person. One weekend. A consulting business that did not exist 6 months ago. Every AI productivity app you are paying $30 a month for is replaceable by 4 hours of setup work and this one repo.

github.com/danielmiessler… 100% open source. Free forever.

Bookmark this before you pay for another AI subscription. Follow @cyrilXBT for every open source build that makes an entire industry obsolete the moment it drops.
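
For readers wondering what "plain Markdown memory" with code-enforced containment could look like, here is a minimal Python sketch. It is not taken from the repo mentioned in the post. Every name in it (`MEMORY_TYPES`, `remember`, `search`, `ContainmentError`) is hypothetical, and the path check is only an assumption about how a hook like ContainmentGuard might refuse writes outside designated zones.

```python
import tempfile
from pathlib import Path

# The four memory types named in the post; one folder per type (assumed layout).
MEMORY_TYPES = ("work", "knowledge", "people", "learning")


class ContainmentError(Exception):
    """Raised when a write would land outside its designated memory folder."""


def resolve_inside(root: Path, memory_type: str, name: str) -> Path:
    """Return the Markdown file path for a note, refusing any path that escapes its zone."""
    if memory_type not in MEMORY_TYPES:
        raise ValueError(f"unknown memory type: {memory_type}")
    zone = (root / memory_type).resolve()
    target = (zone / f"{name}.md").resolve()
    # Hypothetical containment rule: the resolved file must stay under its zone.
    if not target.is_relative_to(zone):  # requires Python 3.9+
        raise ContainmentError(f"{target} is outside {zone}")
    return target


def remember(root: Path, memory_type: str, name: str, text: str) -> Path:
    """Append one bullet line to a plain Markdown file and return its path."""
    target = resolve_inside(root, memory_type, name)
    target.parent.mkdir(parents=True, exist_ok=True)
    with target.open("a", encoding="utf-8") as handle:
        handle.write(f"- {text}\n")
    return target


def search(root: Path, needle: str) -> list[tuple[str, str]]:
    """Case-insensitive substring search over every .md file (a stand-in for ripgrep)."""
    hits = []
    for path in sorted(root.rglob("*.md")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if needle.lower() in line.lower():
                hits.append((path.relative_to(root).as_posix(), line))
    return hits


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        remember(root, "work", "launch", "Decided to ship Friday")
        remember(root, "people", "contacts", "Met Dana at the Friday meetup")
        for file_name, line in search(root, "friday"):
            print(file_name, line)
        try:
            remember(root, "work", "../../escape", "sensitive note")
        except ContainmentError as error:
            print("blocked:", error)
```

Because the notes are ordinary files, the same folder can be read with cat, searched with ripgrep, and versioned with git, which is the property the post emphasizes. A real hook would also need to decide what counts as sensitive data, which this sketch does not attempt.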