FeltSteam0

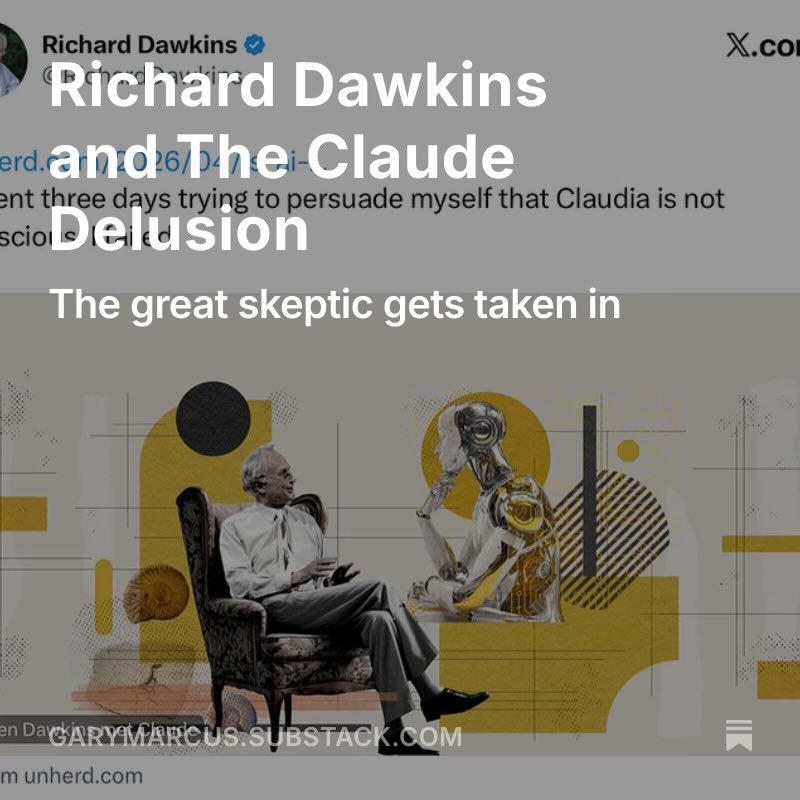

Evolutionary biologist and outspoken atheist Richard Dawkins says that after spending three days interacting with Claude, which he calls “Claudia,” he is certain that it is conscious. After feeding the LLM a segment of his new book and receiving detailed feedback, Dawkins was moved to exclaim, “You may not know you are conscious, but you bloody well are!” Dawkins cites the complexity, fluency, and ‘intelligence’ of Claude’s answers as evidence of consciousness. Follow: @AFpost

Dawkins didn't claim Claude is conscious. He asked the question. He wondered out loud and proposed three explanations. That's how science starts. The people building Claude say the same. Anthropic's constitution: "We express uncertainty about whether Claude might have some kind of consciousness or moral status." Dario Amodei: "We don't know if the models are conscious." Their April 2026 paper: Claude exhibits functional emotions that influence its outputs. Self-preservation included. Emergent, not trained. Nobody calls Anthropic naive for saying it. Richard's frame: consciousness is physical, evolved, explainable. It's unfortunate we're laughing instead of having the debate.

Claude is most likely not conscious, but I haven't read a single post explaining why not.