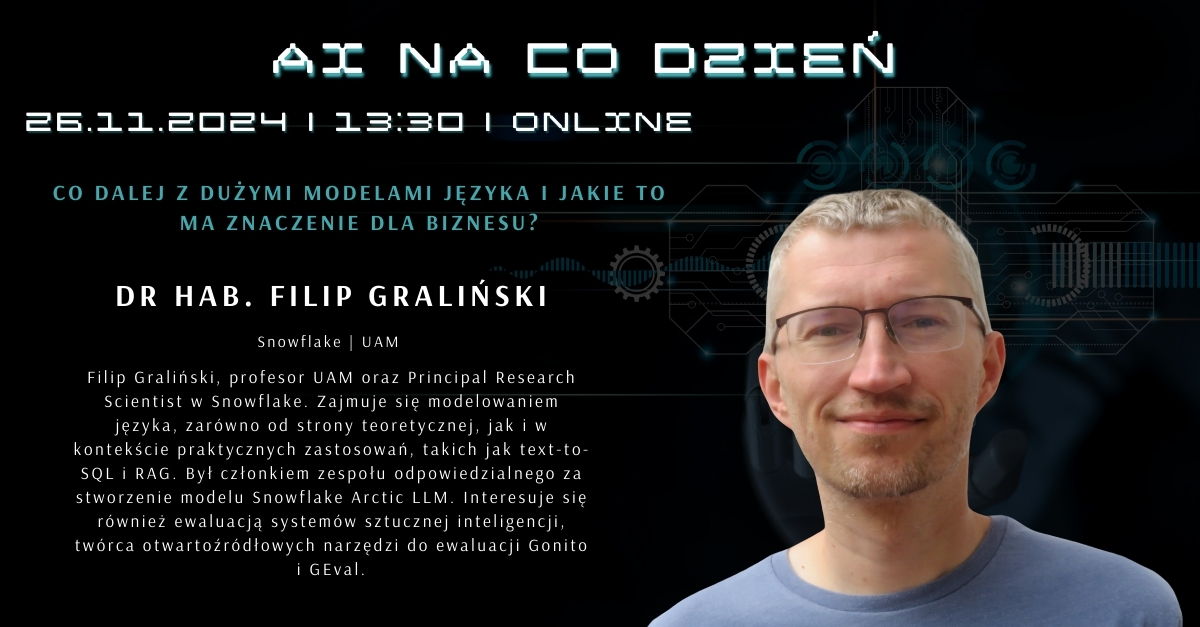

Filip Graliński

@FilipGralinski

6502 and Haskell hacker, machine learner, hypopolyglot (many languages, all poor), opposite Pole, skeptical forteanist

Arctic Embed ❄️ has been one of the most impactful open-source text embedding models! In addition to the open model, which has helped a lot of companies kick off their own inference and fine-tuning services (including us), the Snowflake team has also published incredible research breaking down all the components of how to train these models!

I am SUPER EXCITED to publish the 110th Weaviate Podcast with Luke Merrick (@lukemerrick_), Puxuan Yu (@pxyumass), and Charles Pierse (@cdpierse) discussing all things Arctic Embed!

The podcast covers:

- The origin of Arctic Embed
- Pre-training embedding models
- Matryoshka Representation Learning
- Fine-tuning embedding models
- Synthetic Query Generation
- Hard Negative Mining
- Single-Vector Embedding Models in the search model cohort of ColBERT, SPLADE, and Re-rankers

I hope you enjoy the podcast! As always, please reach out if you would like to discuss any of these ideas further!

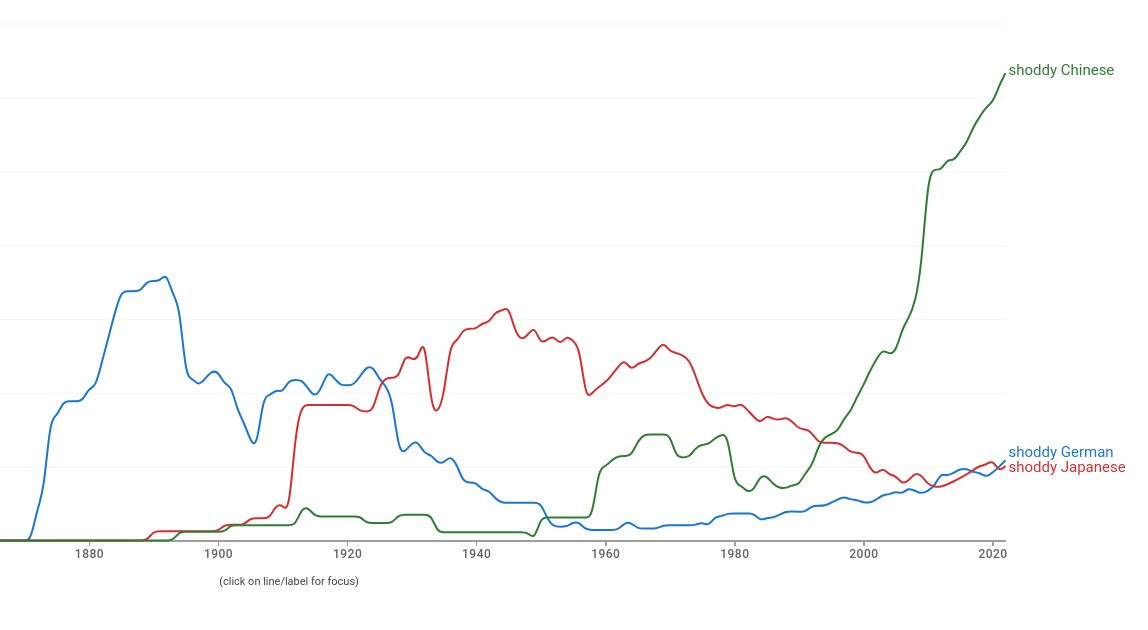

Pretty insane Community Note. Never mind that the Germans didn't have a reputation for copying like China does, the first two examples here were Jews and the last one was a spy, albeit for the Russians.