Pinned Tweet

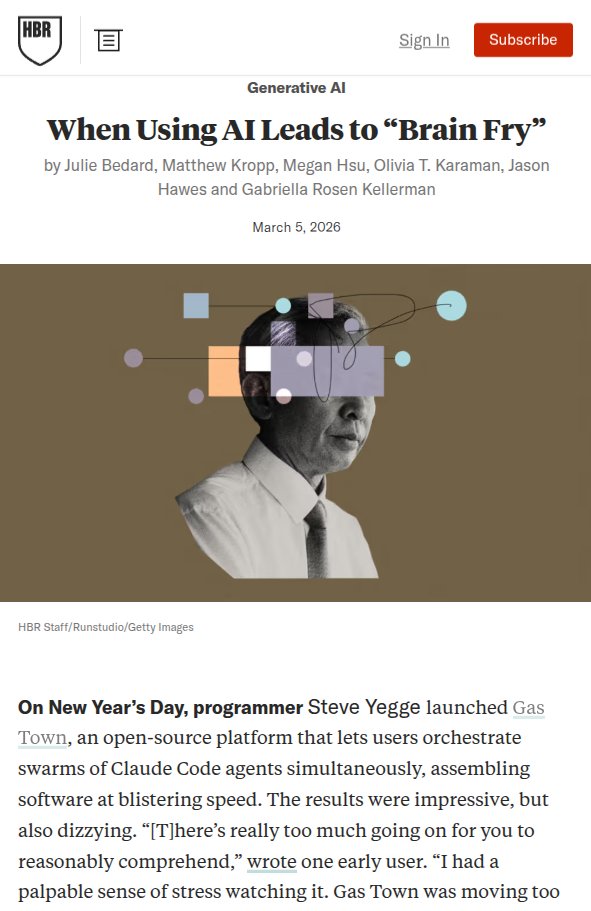

in the last few years and especially the last few months.

Two thoughts that hit me simultaneously every morning:

I need to keep up with AI. There's no point keeping up with AI.

Spent a while thinking about why both feel true at once and what it means for what we should actually be learning right now.

Wrote it up. Honest about where I'm uncertain.

Full piece linked below. Curious what the split looks like in your own work.

Kiran Mohan @KMohan40821
