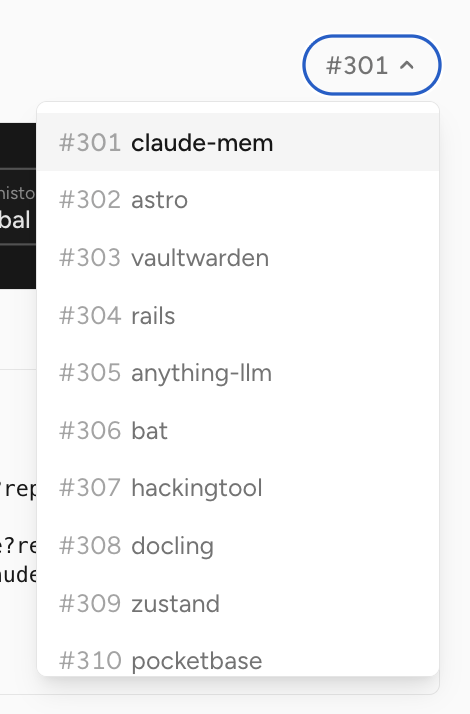

lukex

6.2K posts

@Lukex

co-founder, chief janitor https://t.co/ugjMK4yDEO | venture partner https://t.co/SyPtrlM8Sj

Forward deployed engineers, or equivalent, are about to become one of the most in-demand jobs in tech, and one of the most important functions for AI rollouts. Deploying agents is a far more technical task than most people realize, often far more involved than deploying software. Software generally works the same way every time, and for the past few decades it has mostly been updated versions of an existing technology or concept (which makes it easier for the enterprise to move its workflows onto a newer system).

With agents, you’re actually deploying the equivalent of work output within the enterprise. The customer is effectively using you as a professional services provider for a task, which they now expect to get solved nearly end-to-end. This means that, as a vendor, you need to deeply understand the business process and get the customer from the current state to the end state seamlessly.

Companies need help figuring out which models will work best for their workflows. They often need extensive evals set up, change management support for their workflows, their data set up for the agents, and constant tuning of the agentic system for their process. Massive role in tech now. And another example of the kind of highly technical work that AI is creating.

Ran OpenAI's 663 open roles through ChatGPT to ask what one could infer about their strategy. Nothing particularly new, but it worked well.

Everyone should read what's below. This is why actually knowing your stuff, instead of naively regurgitating a particular startup's marketing bullet points, is important. I've also included a screenshot of my Substack writeup of the GTC session with Nvidia's Bill Dally and Google's Jeff Dean, which confirms Gavin's analysis.

Anthropic just accidentally leaked Claude Code’s entire source… seriously 😳

Buried in the code are 4 secret features they haven’t announced yet. Here’s what’s coming:

BUDDY
- A Tamagotchi-style AI pet that lives next to your input box
- 18 species. Rarity tiers. Shiny variants. Permanent personality.
- Teaser drops April 1. Full launch May 2026.

KAIROS
- “Always-On Claude.” A persistent agent that runs across sessions.
- Watches, logs, and proactively acts without you typing anything.
- Has a nightly “dreaming” cycle that consolidates its memory.

ULTRAPLAN
- 30-minute deep planning sessions in the cloud.
- Claude explores and builds a plan. You approve or reject in browser.
- Can “teleport” the session to your local terminal when ready.

COORDINATOR MODE
- One Claude spawns multiple worker Claudes in parallel.
- Workers report back with status, token usage, duration.
- Multi-agent orchestration built directly into the CLI.

This is the compiled code behind feature flags. They’re actively building all of this in secret.

Sage observation from @karrisaarinen (CEO of Linear). It now makes SO MUCH sense why I see a bunch of eng teams rebuild a SaaS vendor in-house with AI, brag about it, and feel good. They are doing side quests... and they don't even know it. And they are not helping their company win!!

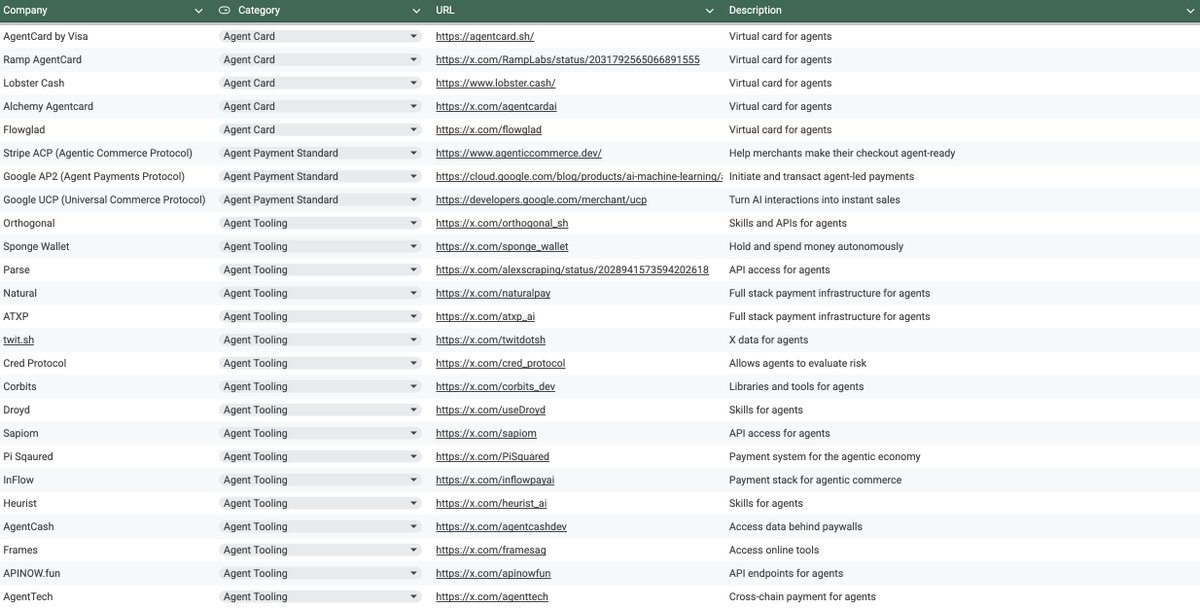

AI agents will soon graduate to fully-fledged economic actors that buy services, compute, and even data in the course of accomplishing high-level goals. 1-2 years before we start seeing this at scale.

This short film made with Seedance 2.0 is absolutely insane. It looks like a real movie; no one can tell it's AI.

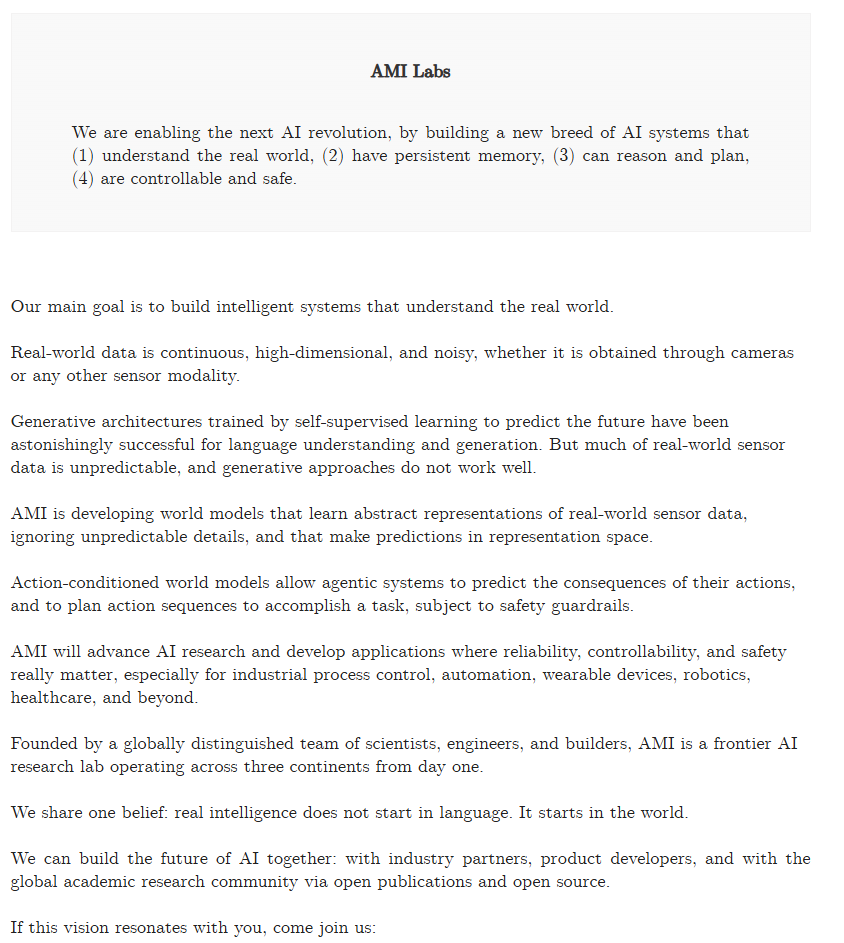

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one.

Read more: amilabs.xyz

AMI - Real world. Real intelligence.