Mathieu


@Mathieu_Rita

Research Scientist @AIatMeta ex: INRIA-MSR | @CoML_ENS | @Polytechnique Llama3 - RL fine-tuning - Emergent communication

With today’s launch of our Llama 3.1 collection of models we’re making history with the largest and most capable open source AI model ever released. 128K context length, multilingual support, and new safety tools. Download 405B and our improved 8B & 70B here. llama.meta.com

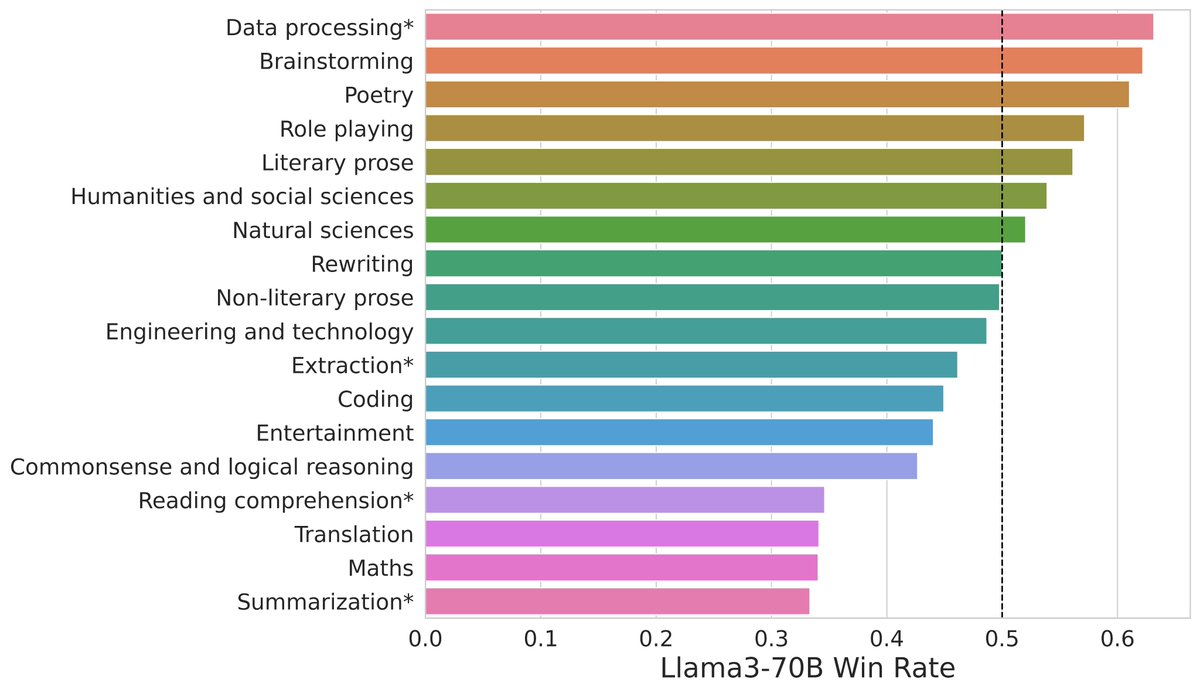

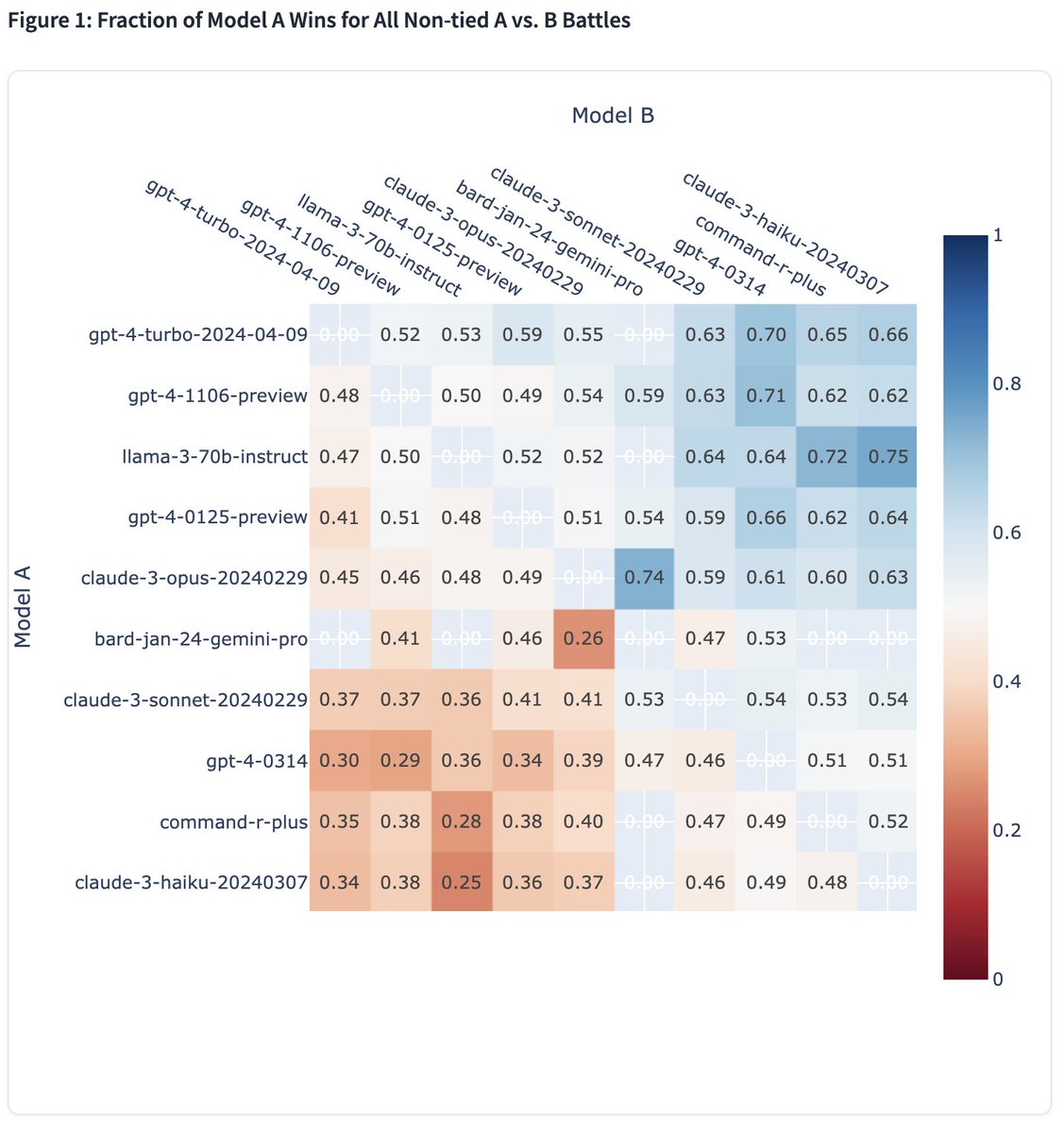

It’s here! Meet Llama 3, our latest generation of models that is setting a new standard for state-of-the-art performance and efficiency for openly available LLMs.

Key highlights
• 8B and 70B parameter openly available pre-trained and fine-tuned models.
• Trained on more than 15T tokens, 7x+ larger than Llama 2's dataset!
• Improved tokenizer with vocabulary of 128K tokens for better performance.
• State-of-the-art performance across industry benchmarks.
• New capabilities, including enhanced reasoning and coding.
• 3x more efficient training than Llama 2.
• New trust and safety tools with Llama Guard 2, Code Shield, and CyberSec Eval 2.
• Integrated into Meta AI, and available in more countries across our apps.
• And, just the beginning with more models and new capabilities coming soon!

Visit the Llama 3 website to read more and download the models. llama.meta.com/llama3

Today we’re releasing Code Llama 70B: a new, more performant version of our LLM for code generation, available under the same license as previous Code Llama models.

Download the models ➡️ bit.ly/3Oil6bQ
• CodeLlama-70B
• CodeLlama-70B-Python
• CodeLlama-70B-Instruct