Ramchand Kumaresan

200 posts

Pinned Tweet

1/ New paper: "KALAVAI: Predicting When Independent Specialist Fusion Works"

What if 20 people each trained a specialist LLM on their own GPU, on their own data, with zero communication, and then fused them into one model that beats any individual specialist?

That's what we tested: arxiv.org/abs/2603.22755

This is my attempt to bring LLMs within everyone's reach (inspired by @0xSero and @karpathy!)

Webpage: murailabs.com/kalavai/

@smolekoma This is going to be one of the biggest unlocks for AI game development

Ramchand Kumaresan retweeted

Just shipped a new skill to GitHub for Web GPT and Codex Agents that turns a single prompt into fully animated 64x64 game sprites.

Screen-recorded the whole thing: reference in, one prompt, 8-direction walk + attack animations out, ready for a game.

This kind of workflow still feels a little unreal.

A one-prompt-to-playable-character pipeline.

github.com/tachikomared/c…

#gamedev #indiedev #pixelart #aiagents #openai #github

Ramchand Kumaresan retweeted

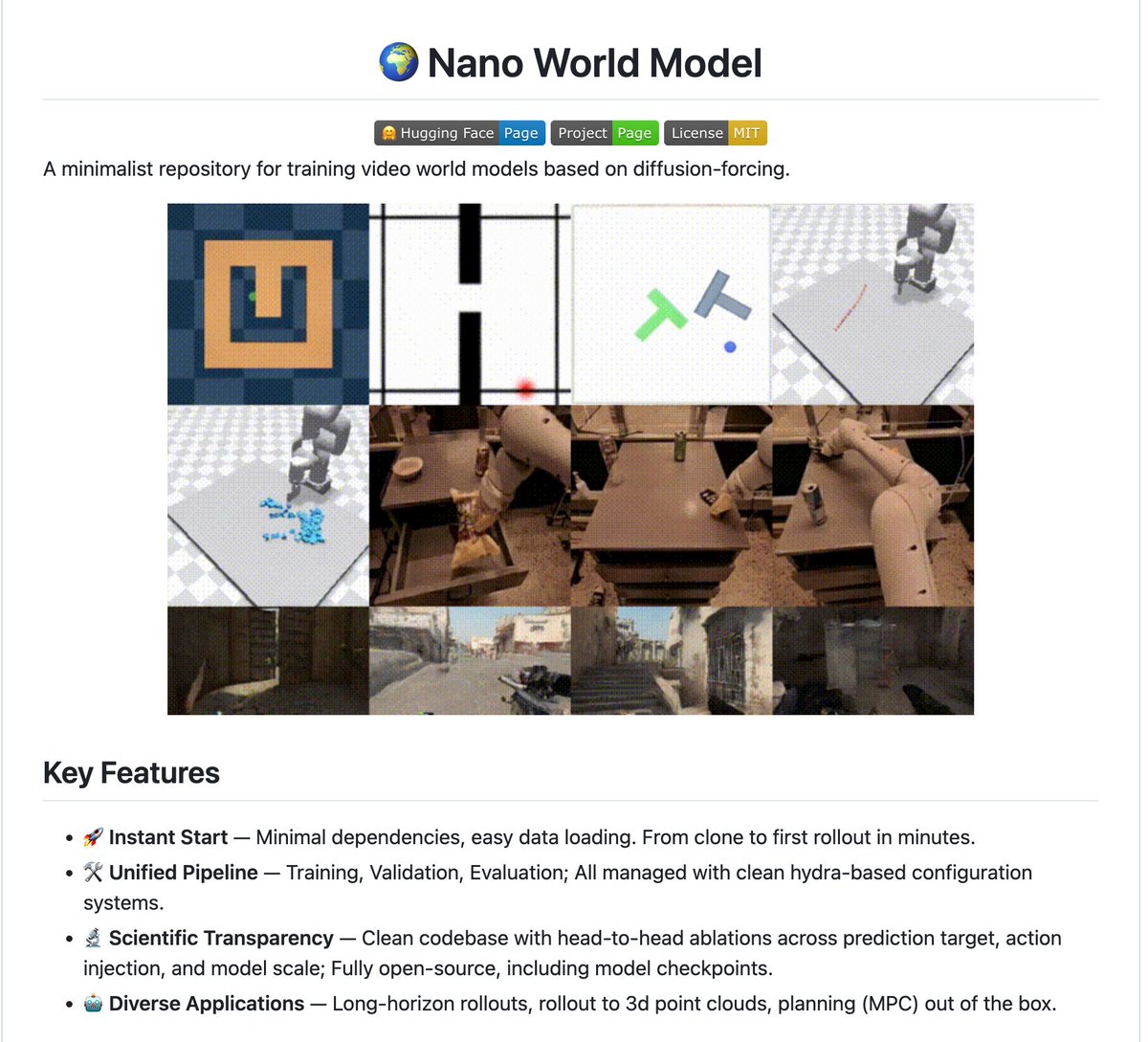

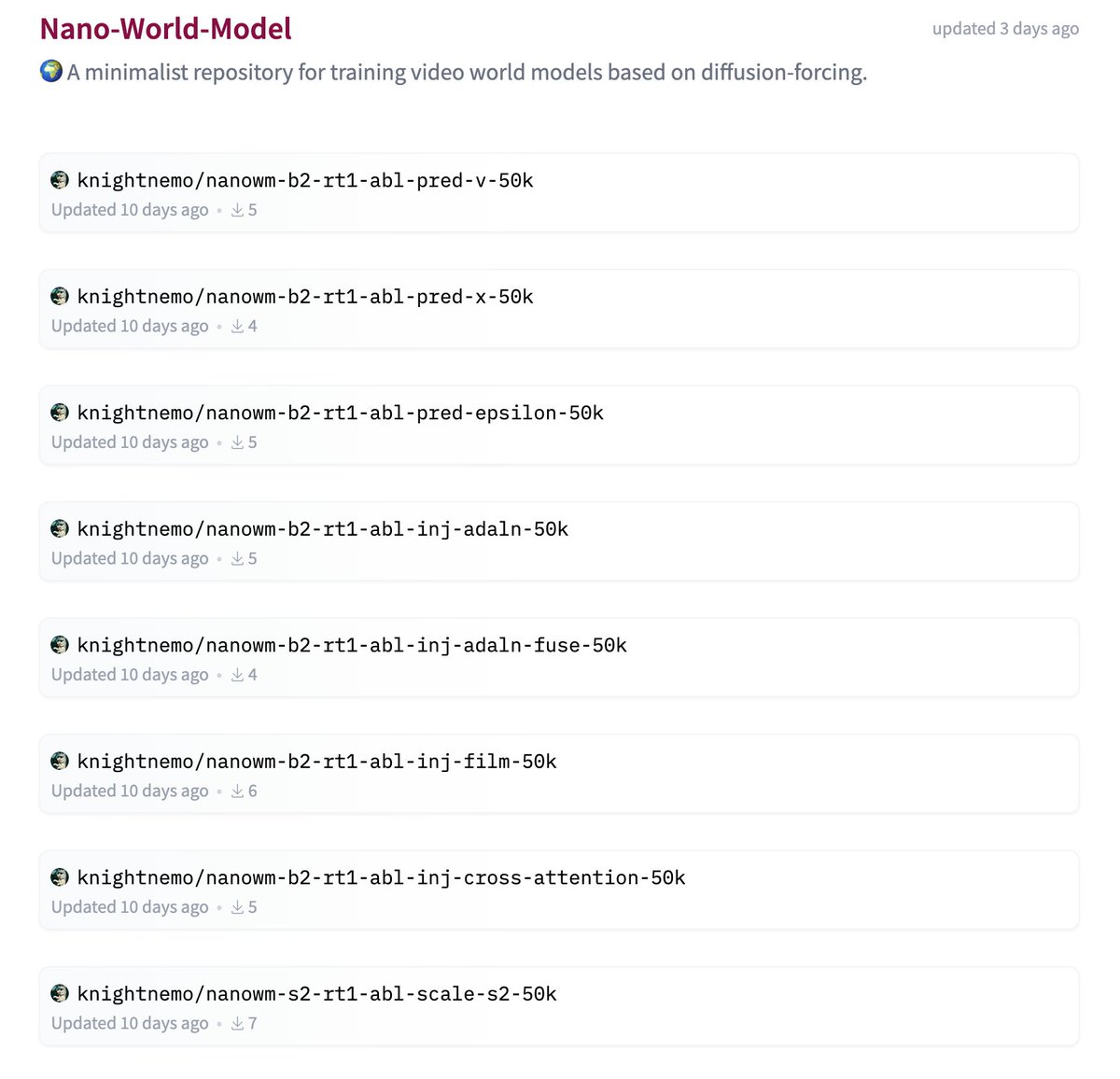

The world model community has been waiting for something like this 👇

nano-world-model repository by @KnightNemo_ — clean, minimal, hackable.

github.com/simchowitzlabp…

@TeksEdge Combined with a Ryzen system: if they make it work as a plug-and-play cluster with an existing PC, they will suddenly shoot up the charts!

⁉️ So get this: AMD is making a bold move to own the affordable personal inferencing market by launching a mini PC in June, a 128GB shared-memory inferencing box.

🎇 They call it the ⬭ Halo Box.

🧾 It's a Ryzen AI MAX+ 395 (16 Zen 5 cores + 40 RDNA 3.5 CUs + XDNA 2 NPU)

✅ Up to 128GB LPDDR5X-8533 unified memory

✅ Full ROCm support + Day-0 AI model optimization

🧪 Built for local AI development (up to ~200B param models)

📈 A direct shot at NVIDIA’s $4,699 DGX Spark; it could cost $2,000–$3,000, as current Ryzen AI MAX+ 395 mini PCs do
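The "up to ~200B param models" figure can be sanity-checked with weight-memory arithmetic. This is a rough sketch, assuming weights dominate memory (bytes ≈ parameters × bits per parameter ÷ 8) and ignoring KV cache, activations, and OS/runtime overhead; the 80% usable-memory `headroom` is an arbitrary assumption, not an AMD figure, and the function names are hypothetical.

```python
# Rough weight-only memory estimate (hypothetical helper names).
# Ignores KV cache, activations, and OS/runtime overhead.

def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


def fits(num_params: float, bits_per_param: float, memory_gb: float,
         headroom: float = 0.8) -> bool:
    """True if the weights fit in an assumed usable fraction (`headroom`) of memory."""
    return weight_memory_gb(num_params, bits_per_param) <= memory_gb * headroom


if __name__ == "__main__":
    # Compare a ~200B-parameter model at common precisions against 128 GB.
    for bits in (16, 8, 4):
        gb = weight_memory_gb(200e9, bits)
        print(f"200B params @ {bits}-bit: {gb:.0f} GB, fits in 128 GB: {fits(200e9, bits, 128)}")
```

Under these assumptions, ~200B parameters need 400GB at 16-bit and 200GB at 8-bit, so only something around 4-bit quantization (about 100GB) fits in 128GB; the tweet does not state which precision the figure assumes.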

🤔 Why launch now during the RAM shortage?

While memory makers divert capacity to HBM for AI data centers (driving up LPDDR5X prices and pushing NVIDIA to raise the price of DGX Spark by $700), AMD is making a bold move to own the affordable, high-memory AI mini-PC segment before the crisis worsens.

💡 My Speculation: AMD could be using its contracts, relationships, and strategic priority to secure better memory access than many traditional OEMs. This could give them an advantage in launching the Halo Box during the shortage.

Smart timing or risky bet?

🔥 This is AMD aggressively fighting for the local AI developer market.

This is really good stuff! I know other sites do this too, but you folks made it really clean. I think you should make an MCP for this: combine it with a Claude MCP for AutoCAD and a 3D printer, and you have yourself a one-person business minting money. @Daniel_Shosh

Daniel Shoshani @Daniel_Shosh

Today, we're officially launching Genpire, the first AI platform for making real products, literally. RT + comment “Genpire” + share your product idea, and we'll reply with your factory-ready design and specs so you can take it to production.

@BarGoldray @Daniel_Shosh I love it! Some sections didn’t load on mobile but did load on PC.

Ramchand Kumaresan retweeted

Paper: arxiv.org/abs/2604.24658

Blog: orchestra-research.com/ara

Ramchand Kumaresan retweeted

🆕 Big win if you're buying Intel Arc for local LLMs at home!

@Intel just dropped LLM-Scaler vllm-0.14.0-b8.2 with official Arc Pro B70 support.

For under ~$1,000 you now get a 32GB VRAM card that’s actually optimized for serious local inference:

• 🐳 Dead simple Docker deployment (no more driver headaches)

• ⚡ Up to 1.49x faster inference vs previous gen

• 📈 25% better INT4 throughput

• 🔗 Scales nicely if you add more cards later

This finally makes high-performance local LLM inference on Intel Arc practical and affordable at home 🔥

I love this guy’s writing style; something about the way he writes sounds like @karpathy himself.

Shunyu Yao @ShunyuYao12

I finally wrote another blog post: ysymyth.github.io/The-Second-Hal… AI just keeps getting better over time, but NOW is a special moment that I call “the halftime”. Before it, training > eval. After it, eval > training. The reason: RL finally works. Lmk ur feedback so I’ll polish it.

Ramchand Kumaresan retweeted

@JIACHENLIU8 Yup! It's also gotten so much better: I can create pods, run experiments on rented GPUs, and compile results, all from the CLI. Deployments have become automatic as well! Making sure it's not making things up is what takes the most time and effort.

I’ve been brainstorming research ideas with many PhD students lately.

A pattern I keep seeing: when we land on an important research question, their first reaction is to list difficulties:

"Nuh, it's too hard to evaluate ..."

"it's too hard to implement ..."

That instinct made sense before. But with AI agents that execute and brainstorm at lightning speed, finding solutions is no longer the binding constraint.

Taste and ambition are.

The mindset HAS to change. Don't self-censor big ideas into incremental ones just because the easy path is visible.

Ask the important questions first — the tools will help you figure out the rest.

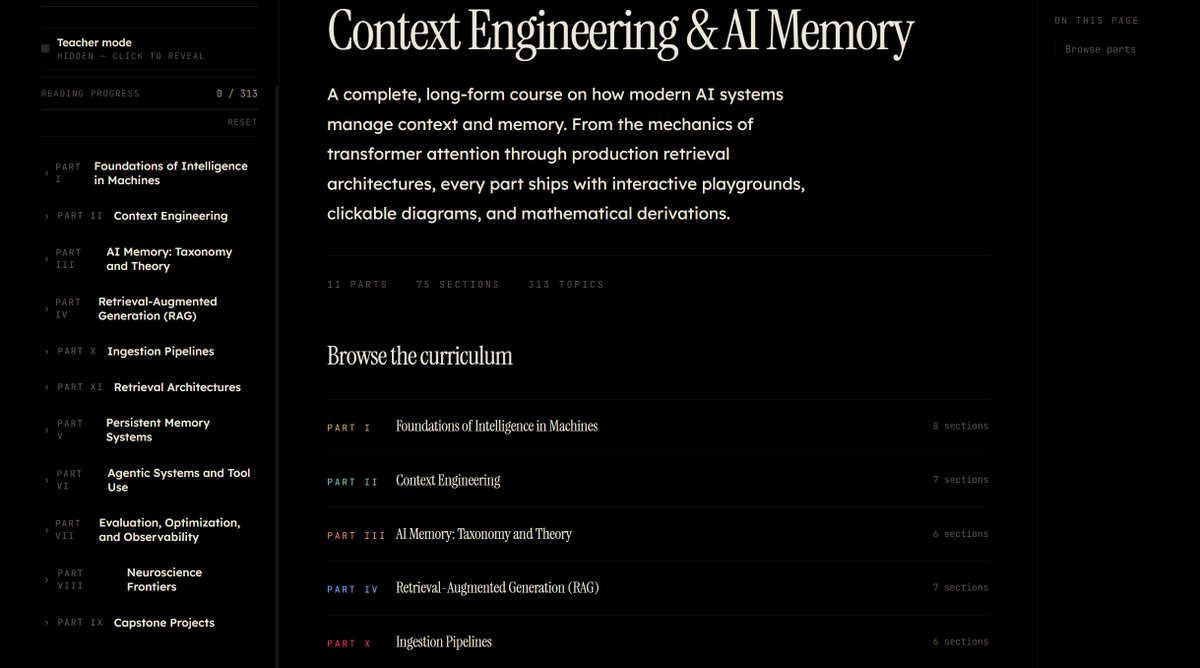

We just wrote the first-ever Ebook on Context Engineering and AI Memory

Over the past few months, my team and I have been compiling all the knowledge and material we've read while building @metacognitionai. Here's a glimpse of it. We break down what's happening in this domain today and connect it to possible neuroscience frontiers at its intersection with context engineering.

After reading this, you can literally build your own context engineering company from scratch. We're planning a long-form course on this as well.

Releasing it soon for some people to review. Comment below or reach out to me on DM to get access :)

cc: @PriGoistic @sauhard_07

@vasuman I've been following you since November, I think, and many times I've felt the urge to apply. My only real blocker is having to be in ATX. But hey, check out my page ramchandk.com; maybe you'll like what you see?

I interviewed over 100 candidates for Varick over the last 3 weeks. Here's how to get hired at the startup you want to join:

1. Balance expertise with low ego. No one wants to hire someone who knows nothing and agrees with everything you say. But also don't pretend to have all the answers. Be confident in what you know, be honest about what you don't.

2. Stop rambling. Your intro should be 45 seconds tops. Biggest hits only: school, latest role, what you did there. I promise no one cares about the internship you had 3 years ago.

3. Communicate clearly. It doesn't matter how talented you are if you can't get your point across. Your interviewer is likely doing 15 interviews a day and won't be paying close attention, so it's on you to emphasize what you want remembered.

4. Keep it simple. Don't apply to 5 very different roles at the same company. Bring up visa requirements and start date conflicts proactively. Don't say "I can't do the interview process for 2 weeks." Make this as painless for the startup as possible.

5. Make it a good fit. Research the company so it feels like you actually want to join, not just that you need a job. Applying blindly is a red flag; coming across like you'll jump ship in 3 months is a red flag. Sound genuinely bought into the company mission.

@alexocheema @AiXsatoshi How did your DGX Spark / RTX 5090 cluster experiment with the Mac Studio go? What kinds of training and inference workloads are possible on such a cluster?

@AiXsatoshi We use Tailscale to access EXO clusters remotely.

However, clustering over the internet with Tailscale is going to be very slow.
