RadixArk

76 posts

Join us in NYC on June 3rd during #NYTechWeek @Techweek_ Liangsheng Yin (@lsyincs) and Mao Cheng (@MCheng89333), both MTS at RadixArk, will present SGLang & Miles, diving into inference infrastructure for finance. RSVP: partiful.com/e/p74X9KDrgoLa…

NYC, we're bringing the inference + finance crowd together for #NYTechWeek @Techweek_!
SGLang Happy Hour: AI Infra in Finance
🕤 Wed, June 3 · 6–9 PM ET
📍 1/2 Bond St, New York
Co-hosted with @HOFCapital, @CrusoeAI, @CloudflareDev, @ArklexAI.
Lightning talks from inference engineers and researchers shipping into trading, research, compliance, and risk, followed by an open happy hour for networking. More surprise speakers to be announced. Stay tuned 👀
Expected attendees from leading quant funds, banks, and trading firms, including Jane Street, Citadel, Two Sigma, Goldman Sachs, and Bloomberg, among others.
We've also got a bartender on site and a full bar. Come have a drink with us!
Limited space. RSVP 👇 partiful.com/e/p74X9KDrgoLa…

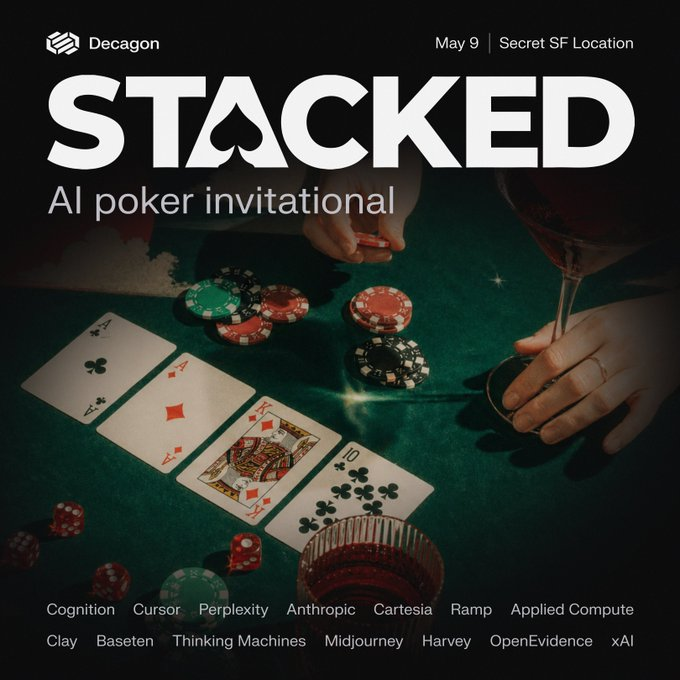

Our first Stacked poker tournament was a huge success! 1 player representing each AI company. Congrats to: 🥇 Guodong Zhang (RadixArk, co-founder of xAI) @Guodzh 🥈 Jeremy Stribling (Cursor) 🥉 Neal Wu (Thinking Machines) @neal_wu We will be hosting another one! More below👇

Digital agent learning needs massive rollouts. But digital agent rollouts are painfully slow due to heavy environments. 🐌 🚀 We introduce NanoRollout, a lightweight open infra (900 lines core code) for digital agent rollout at scale, validated with three workloads: 🏋️ Large batchsize (4K) SWE Agent RL -> surpasses DeepSWE-32B 🧪 250k+ distilled coding trajectories -> SOTA ≤32B open coding agent ⚡ Fast evaluation on coding/cua/unified agent -> finish Check our Blog: cocoa-org.notion.site/nanorollout

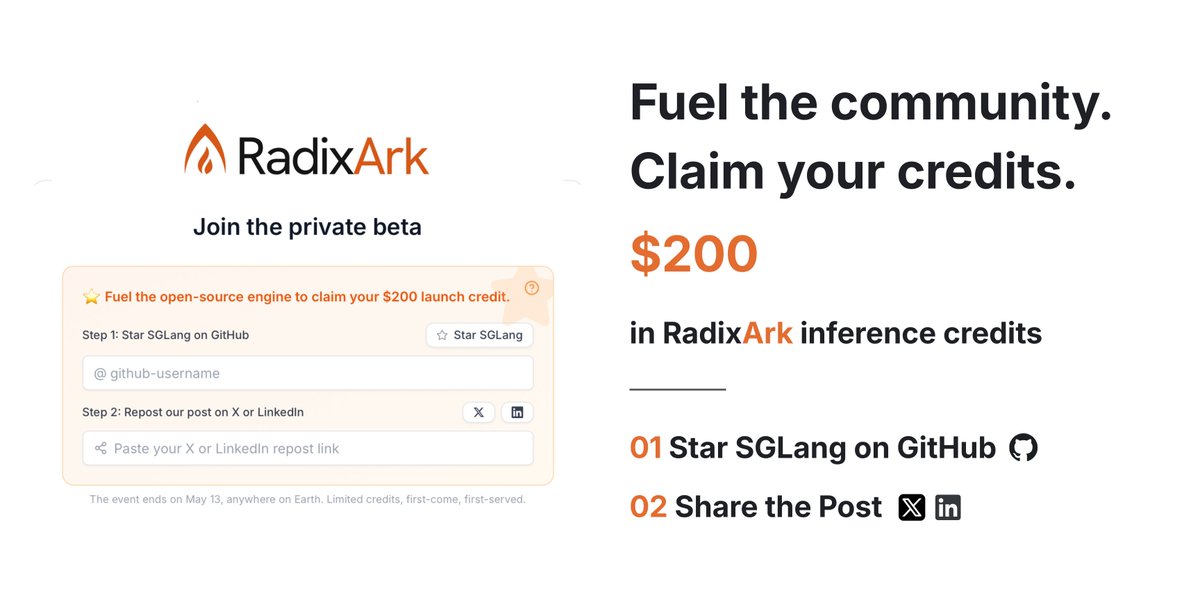

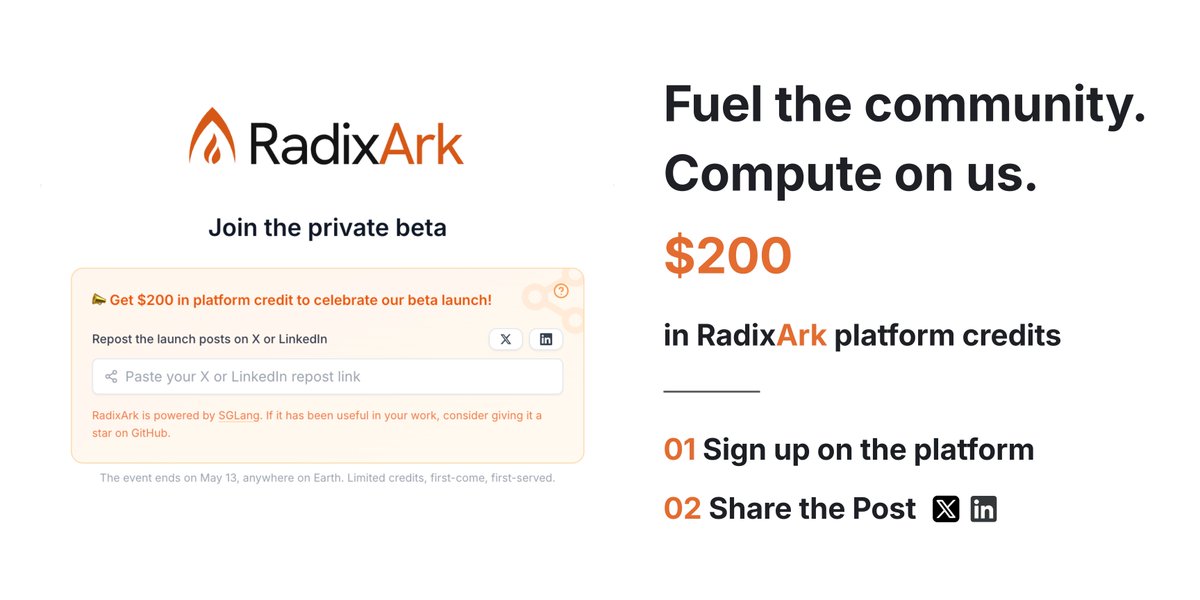

We've heard the community's feedback. Our intent was to make sure the credits reached the people who supported SGLang along the way, and we couldn't be here without you. We're updating the offer to better reflect that.
RadixArk's platform is open for beta, and we're offering $200 in compute credits to get you started
→ Sign up at platform.radixark.com and repost this so we can get you set up.
→ Limited spots, first come first serve. Open through May 13, 2026 (AoE).
→ Credits will be granted after we verify the repost. (If you already reposted our earlier announcement, that counts too; no need to do it again.)
And if SGLang has been useful in your work, consider giving it a star on GitHub. It's a small gesture that means a lot to the people maintaining it. We're in this together, and we're grateful to be building it with you 🧡

This is growth-hacking dressed up in open-source language, @radixark please stop doing it immediately. Paying people in platform credits to star a GitHub repo and repost a marketing tweet isn't "fueling the community" — it's laundering paid promotion through the trust signals open source depends on. Stars are supposed to mean someone found a project useful. Attach a $200 bounty and the number means nothing. GitHub's own policies prohibit this for exactly that reason.

Today, we are thrilled to officially launch RadixArk with $100M in Seed funding at a $400M valuation. The round was led by @Accel and co-led by @sparkcapital. RadixArk exists to make frontier AI infrastructure open and accessible to everyone. Today, the systems behind the most capable AI models are concentrated in a small number of companies. As a result, most AI teams are forced to rebuild training and inference stacks from scratch, duplicating the same infrastructure work instead of focusing on new models, products, and ideas. RadixArk was founded to change that. We are building an AI platform that makes it easier for teams to train and serve the best models at scale. RadixArk comes from the open-source community. We started with SGLang, where many of us are core developers and maintainers, and expanded our work to Miles for large-scale RL and post-training. We will continue contributing to both projects and working with the community to make them the strongest open-source infrastructure foundations for frontier AI. We would like to thank our long-term partners, contributors, and the broader SGLang community for believing in this mission. We're also grateful to @Accel and @sparkcapital, NVentures (Venture capital arm of @nvidia), Salience Capital, A&E Investment, @HOFCapital, @walden_catalyst, @AMD, LDVP, WTT Fubon Family, @MediaTek, Vocal Ventures, @Sky9Capital and our angel investors @ibab, @LipBuTan1, Hock Tan, @johnschulman2, @soumithchintala, @lilianweng, @oliveur, @Thom_Wolf, @LiamFedus, @robertnishihara, @ericzelikman, @OfficialLoganK, and @multiply_matrix among others. Thanks for the exclusive interview with @MeghanBobrowsky at @WSJ about our vision.
