Akshay from Startup Spells 🪄

30.2K posts

Akshay from Startup Spells 🪄

@StartupSpells

I cover 0.1% Marketing Strategies of Startups from The Past, The Present & The Future. Get them daily in your inbox at https://t.co/kk9u7nveNp

The UN projection for South Korean fertility is wildly, insanely, gobsmackingly optimistic. Come on, man.

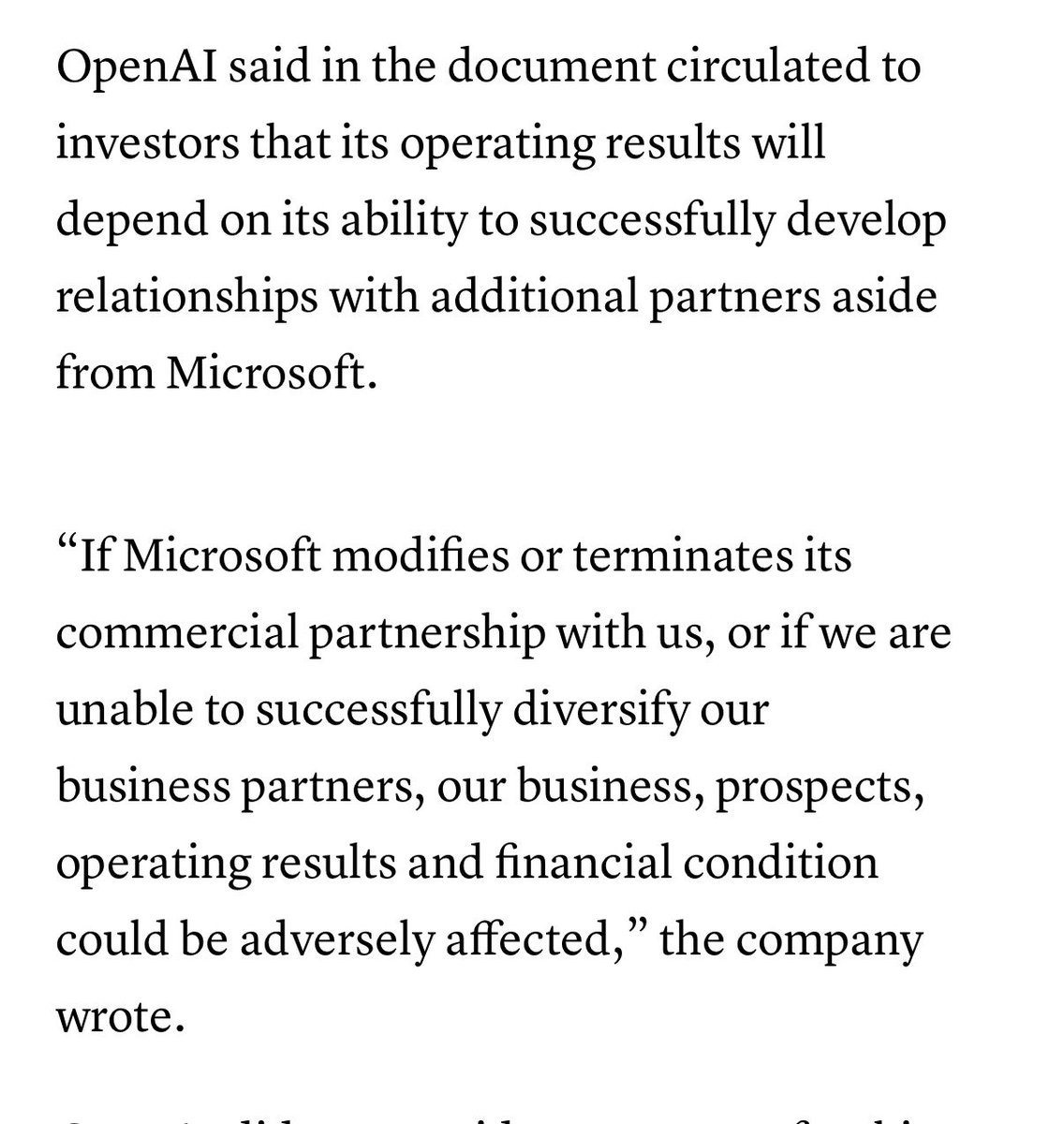

@satyanadella OpenAI is going to eat Microsoft alive

The new Deep Think is very, very smart, but is constrained by the Gemini interface compared to GPT-5.2 Pro. If I can't see actual work in the thinking trace, or get downloadable files as proof of work, or see evidence of the code & statistics it applied, checking results is hard

GPT-5.4 Pro continues to be the only model of its class. For anything really hard & complex, I throw it into the maw with every bit of context I can think of. More often than not, something very useful comes out. I can't get the same results from Codex or Code or anything else.

Roy Lee has done more for Asian American representation than any Olympian or Hollywood star

Icon, the AI Admaker, just went bankrupt They paid $12M for the domain Icon.com and now it's dead

i literally stopped building after i sent the quoted tweet i am just taking some days off and focusing on marketing it started paying off. it's the first time i am seeing this much traffic! my UA cost is too high though, i need to see how this will affect the keyword rankings

Yann LeCun (@ylecun ) explains why LLMs are so limited in terms of real-world intelligence. Says the biggest LLM is trained on about 30 trillion words, which is roughly 10 to the power 14 bytes of text. That sounds huge, but a 4 year old who has been awake about 16,000 hours has also taken in about 10 to the power 14 bytes through the eyes alone. So a small child has already seen as much raw data as the largest LLM has read. But the child’s data is visual, continuous, noisy, and tied to actions: gravity, objects falling, hands grabbing, people moving, cause and effect. From this, the child builds an internal “world model” and intuitive physics, and can learn new tasks like loading a dishwasher from a handful of demonstrations. LLMs only see disconnected text and are trained just to predict the next token. So they get very good at symbol patterns, exams, and code, but they lack grounded physical understanding, real common sense, and efficient learning from a few messy real-world experiences. --- From 'Pioneer Works' YT channel (link in comment)