Adrian Sanchez 🎮

@SunsetLearn

Senior Technical Engineer @ Squanch Games ⚡️https://t.co/ejNReXFIh2 - https://t.co/nWHW3GpMjq ⚡️Using electricity to produce light @SunsetStudioCo

Testing skin details with GPT Image 2 + upscaling (4K). It is really good, but even the tiniest displacement between textures creates very visible artifacts in 3D, as you can see on his ear.
Textures generated with GPT:
- Color
- AO (discarded, useless)
- SS (discarded)
- Normal base layer
- Normal detail layer (mixed both together)
- Roughness
Overall very interesting. If the normal layer displacements weren't bleeding into the surface so hard, it'd be a great result and I could upscale it to 8K. I think skinning with AI is becoming really good now.

I apologize for staying quiet for way too long. Here is a first look at the flow for generating a random realistic landscape in the engine. This time, the demo is done on the live website too :)

🚨 Anthropic CEO says STEM is losing its edge, and what makes you “more human” will decide your future

🚨 Elon Musk says physics has stagnated and AI could overtake scientific research. He says progress has relied on expensive hardware like colliders and telescopes, which slows discovery.

Stories shape our future. Storytellers manifest our destiny. Someone, somewhere, is writing an epic screenplay that is more Star Trek than Terminator: a vision of a compelling and optimistic tomorrow that will shape humanity’s next few decades. The cell phone, the internet, humanoid robots, self-driving cars, voice assistants, and Starships were all imagined in science fiction before they were built by engineers. Stories are blueprints. Question: What if we asked storytellers around the world to envision an epic and compelling future for humanity, and then funded them to produce that film? What if we could flood the world with positive visions of the future, rather than dystopian predictions? Announcing the Future Vision XPRIZE 🧵

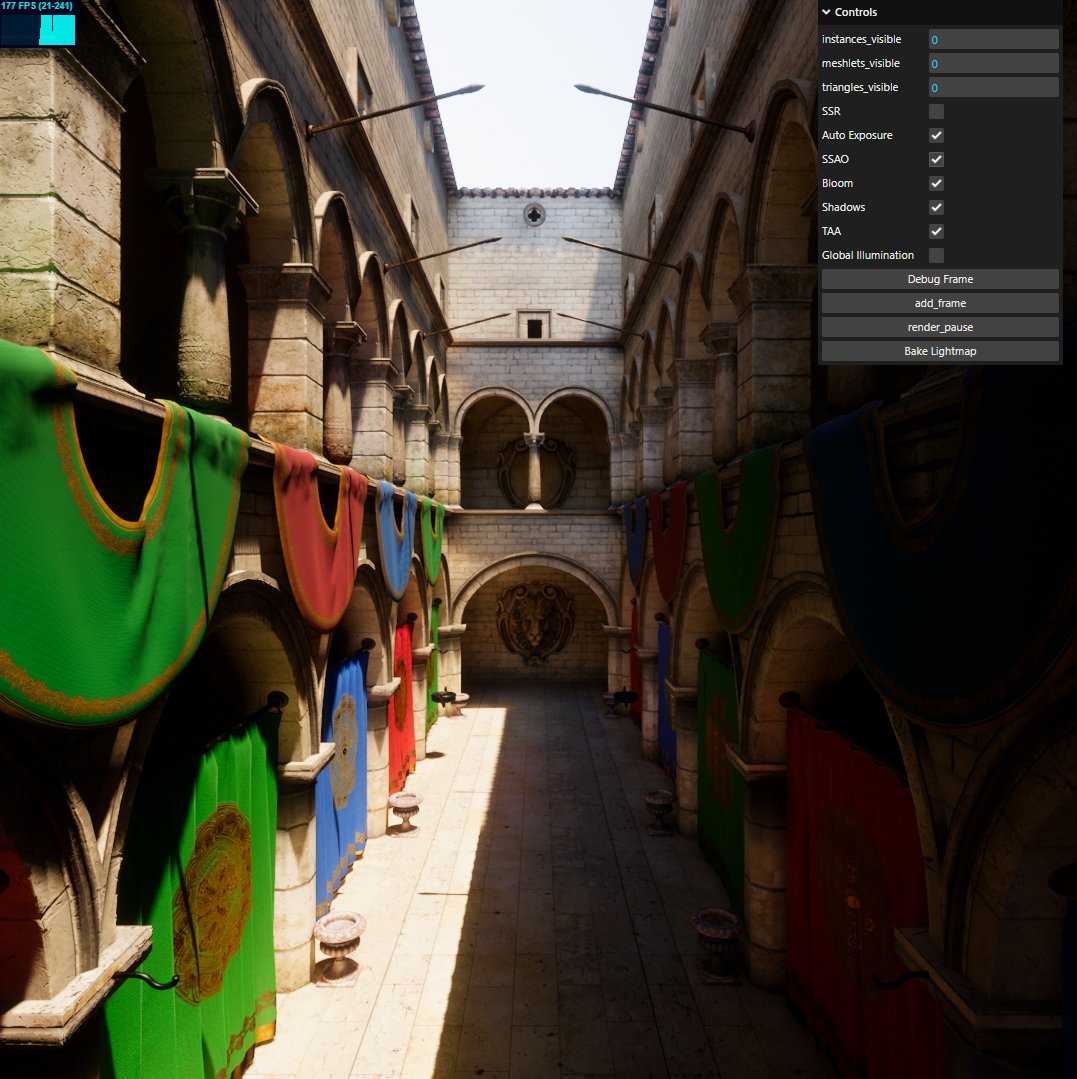

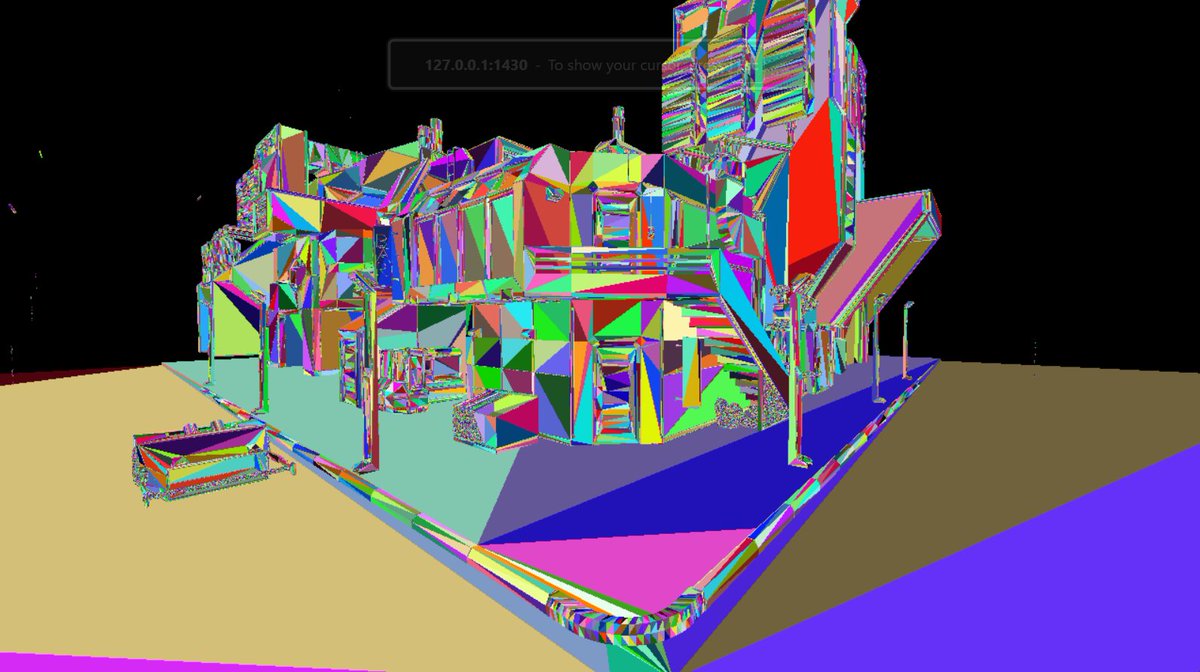

Get ready to see a lot of AI-slop games looking like this in the future.

Announcing NVIDIA DLSS 5, an AI-powered breakthrough in visual fidelity for games, coming this fall. DLSS 5 infuses pixels with photorealistic lighting and materials, bridging the gap between rendering and reality. Learn More → nvidia.com/en-us/geforce/…

This is Grace against her face model. What do you think?

I think this collective feeling of "I don't enjoy coding anymore because it's so easy with AI" is good to talk about and recognize, and I have it too. I miss going to bed with a coding challenge I have to get through, then waking up, getting the answer in the shower and screaming EUREKA!!!!! But then you quickly have to accept that the world has permanently changed and it's not going back, because letting AI code for you is simply so much faster and more effective, and it will only get better with every passing year. So the better mental approach for me is to aggressively embrace it and change myself instead: if the fun in solving the challenges is gone, where else can I find the fun? I'm a bit lucky, because for me the fun has always been building new things in general, not so much the coding part, although the coding challenges were fun for me too. Having ideas and building new things was always the most fun, so I have to double down on that now: making more things, making better things, and making them much faster than before. Especially now that literally everyone in the world has access to the same coding skill (AI), the focus will have to be aggressively on what remains as a differentiator for me as a creator, which is my ideas and the way I execute them, not the coding. So that's what I will try to focus on from now on, I think.