Don Smith

@TanagerDon

Cat-Herder-in-Chief for several companies, including Covixyl, a virus-blocking nasal spray (@gocovixyl), a novel sensor, and an AI-based security company.

Most people want AI to sound like them. But no one does this first (and it takes 47 mins):

Step 1. Download Claude (claude.com/download)
Open 'Cowork'. Create a folder for your voice.

Step 2. Select Opus 4.7 + Extended Thinking
Set it as the default model. Turn ON Extended Thinking.

Step 3. Copy-paste the interview prompt
From this guide: ruben.substack.com/p/youre-just-a…
It asks you 100 questions about how you think.

Step 4. Answer every question out loud
Use Wispr.ai. Talk to yourself. Don't type.
Typing makes you edit. Talking makes you honest.

Step 5. Don't just say what you like. Say what you reject.
Be extremely precise and specific in your answers.
80% of your file should be what you'd never say.

Step 6. Paste Prompt 2 - The Voice Compiler
20K-word raw file → 2-4K compressed tokens.
Cuts the generic. Keeps the signal.
Most people stop at the raw dump. That's the mistake.

Step 7. Save + test [yourname].md
Open a blank Claude session. Paste the file. Ask it to write something.
If it sounds like you → ship it. If not → re-interview.

Step 8. Deploy with Obsidian
Drop the .md in your Cowork folder. It's auto-read on every prompt.
Edit it like a Google Doc. It syncs automatically, with no download/upload loop.

The Don'ts:
✦ Skip the 100-question interview
✦ Answer vaguely ("I like clarity" → clarity HOW?)
✦ Stop at the 20K-word raw dump
✦ Use blank chats instead of Cowork folders
✦ Treat the file as static (you change daily)
✦ Think your voice is too "magical" to capture
✦ Forget to document what you'd NEVER write
✦ Blame AI for sounding generic

The Do's:
✦ Always use Cowork, not blank chats
✦ Always turn on Extended Thinking
✦ Always compress with Prompt 2
✦ Always test in a blank session first
✦ Always document refusals, not just preferences
✦ Always edit your .md as your taste shifts
✦ Always push through the full 100 questions
✦ Always start prompts pointing to your folder

You think you're too complex to fit in a file. You're not.
47 mins & one .md file to duplicate your brain.
Most people won't sit for 2 hours. You're not most people.

Full process + prompts: ruben.substack.com/p/youre-just-a…
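Steps 5 and 6 set two measurable targets for the compiled file: roughly 2-4K tokens, with about 80% of it describing what you'd never say. A minimal Python sketch of a pre-flight check for both targets follows. It assumes (hypothetically) that each rule in `[yourname].md` sits on its own bullet line and that refusals start with `NEVER:`. The token count is a rough words-to-tokens heuristic (about 4/3 tokens per word, also an assumption), not Claude's actual tokenizer, so treat the numbers as ballpark.

```python
import re

# Assumed heuristic: English prose averages roughly 4/3 tokens per word.
# This is NOT Claude's tokenizer; it only gives a ballpark figure.
TOKENS_PER_WORD = 4 / 3


def estimate_tokens(text: str) -> int:
    """Approximate token count from whitespace-separated words."""
    words = re.findall(r"\S+", text)
    return round(len(words) * TOKENS_PER_WORD)


def refusal_ratio(text: str, marker: str = "NEVER:") -> float:
    """Share of rule lines starting with the (hypothetical) refusal marker.

    Bullet characters are stripped; blank lines and '#' headings are ignored.
    """
    rules = [line.strip().lstrip("-*✦ ").strip() for line in text.splitlines()]
    rules = [r for r in rules if r and not r.startswith("#")]
    if not rules:
        return 0.0
    refusals = sum(1 for r in rules if r.upper().startswith(marker))
    return refusals / len(rules)


def check_voice_file(text: str, min_tokens: int = 2000, max_tokens: int = 4000,
                     min_refusals: float = 0.8) -> list[str]:
    """Return a list of problems; an empty list means both targets are met."""
    tokens = estimate_tokens(text)
    ratio = refusal_ratio(text)
    problems = []
    if not min_tokens <= tokens <= max_tokens:
        problems.append(f"~{tokens} tokens, outside {min_tokens}-{max_tokens}")
    if ratio < min_refusals:
        problems.append(f"refusals are {ratio:.0%} of rules, below {min_refusals:.0%}")
    return problems
```

Usage: read the file with `open("yourname.md", encoding="utf-8").read()` and pass the text to `check_voice_file`. A non-empty result is a hint to re-run the compiler prompt or add more refusals before the blank-session test in Step 7.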

You've got an idea for an app? Yeah, I know. But here are my thoughts on your barriers to entry.

🚨Shocking: A 25,000-task experiment just proved that the entire multi-agent AI framework industry is built on the wrong assumption.

Every major framework - CrewAI, AutoGen, MetaGPT, ChatDev - starts from the same premise: assign roles, define hierarchies, let a coordinator distribute work.

Researchers tested 8 coordination protocols across 8 models and up to 256 agents. The protocol where agents were given NO assigned roles, NO hierarchy, and NO coordinator outperformed centralized coordination by 14%. The gap between the best and worst protocols was 44%.

That's not noise. That's a completely different outcome depending on how you organize the agents - not which model you use.

Here's what makes this uncomfortable: When agents were simply given a fixed turn order and told "figure it out," they spontaneously invented 5,006 unique specialized roles from just 8 agents. They voluntarily sat out tasks they weren't good at. They formed their own shallow hierarchies - without anyone designing them.

The researchers call it the "endogeneity paradox." The best coordination isn't maximum control or maximum freedom. It's minimal scaffolding - just enough structure for self-organization to emerge.

But there's a catch nobody building agents wants to hear: below a certain model capability threshold, the effect reverses. Weaker models actually need rigid structure. Autonomy only works when the model is smart enough to use it.

Which means every agent framework shipping with one-size-fits-all hierarchies is wrong twice - over-constraining strong models and under-constraining weak ones.

The $2B+ invested in agent orchestration tooling may be solving a problem that capable models solve better on their own.
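To make the contrast concrete, here is a toy Python sketch of the two styles the post describes: a centralized coordinator handing out subtasks in fixed role order, versus agents with only a fixed turn order who each claim the subtask they are best at or sit out. This is NOT the researchers' protocol or code, and it reproduces none of their numbers. The `Agent` class, the per-subtask `skills` scores, the `abstain_below` threshold, and the `quality` metric are all hypothetical stand-ins.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    # Hypothetical competence per subtask in [0, 1]; a stand-in for model capability.
    skills: dict[str, float] = field(default_factory=dict)


def sequential_self_assign(agents, subtasks, abstain_below=0.5):
    """Fixed turn order, no assigned roles, no coordinator.

    On its turn, each agent greedily claims the unclaimed subtask it is best at,
    or sits out if even its best score is below the threshold.
    Returns (assignment, unassigned_subtasks).
    """
    remaining = list(subtasks)
    assignment = {}
    for agent in agents:  # the only structure: who goes when
        if not remaining:
            break
        best = max(remaining, key=lambda t: agent.skills.get(t, 0.0))
        if agent.skills.get(best, 0.0) < abstain_below:
            continue  # voluntarily sits out
        assignment[best] = agent.name
        remaining.remove(best)
    return assignment, remaining


def centralized_round_robin(agents, subtasks):
    """A coordinator hands out subtasks in fixed role order, ignoring skill."""
    return {t: agents[i % len(agents)].name for i, t in enumerate(subtasks)}


def quality(assignment, agents):
    """Toy score: sum of the assigned agents' skill on their subtasks."""
    by_name = {a.name: a for a in agents}
    return sum(by_name[n].skills.get(t, 0.0) for t, n in assignment.items())


team = [
    Agent("a", {"code": 0.9, "docs": 0.2}),
    Agent("b", {"code": 0.6, "docs": 0.8}),
    Agent("c", {"code": 0.1, "docs": 0.3}),
]
self_org, unassigned = sequential_self_assign(team, ["docs", "code"])
coordinated = centralized_round_robin(team, ["docs", "code"])
```

With this team, agent `a` claims `code`, `b` claims `docs`, and `c` is never needed, while the round-robin coordinator gives `docs` to `a` and `code` to `b` for a lower toy score. With the same threshold, two weak agents (say, best scores of 0.3 and 0.4) both sit out and leave every subtask unassigned, whereas the coordinator still covers them. That is a crude echo of the post's point that weaker models need more structure.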

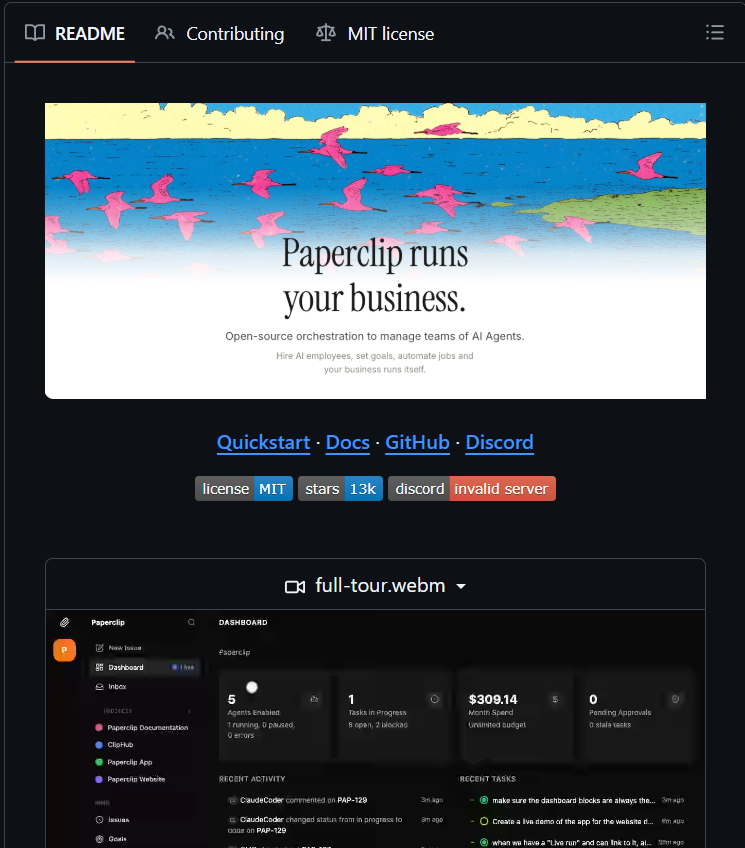

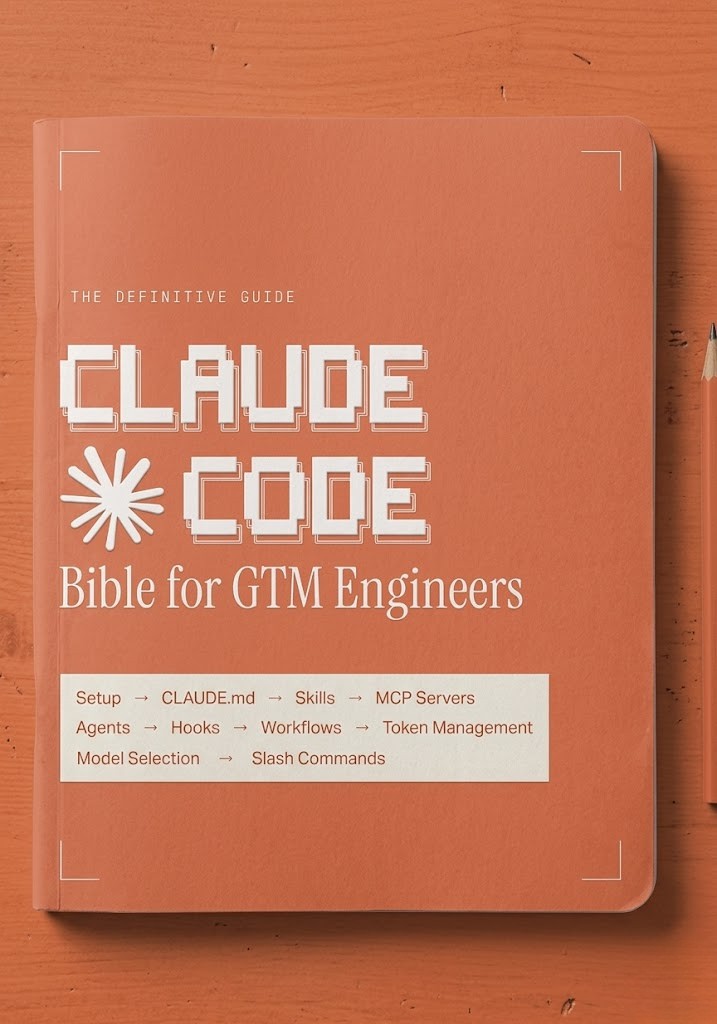

The 26 prompts running inside Claude Code just got open-sourced.

This is literally the entire brain of a $200/month AI coding tool. Someone reverse-engineered every prompt from the accidentally published npm source, and you can now study all of them for free.

Claude Code uses 26 distinct prompts to function:
✦ 1 system prompt (identity, safety, tool routing)
✦ 11 tool prompts (shell, file ops, search, planning)
✦ 5 agent prompts (explorer, architect, verifier, docs)
✦ 4 memory prompts (summarization, session notes)
✦ 1 coordinator prompt (multi-agent orchestration)
✦ 4 utility prompts (titles, recaps, suggestions)

The patterns inside are wild:
✦ A dedicated agent whose only job is to TRY TO BREAK the code before it ships
✦ Anti-over-engineering rules baked in: "don't add features beyond what was asked"
✦ 9-section memory compression that preserves every user message
✦ Tiered risk system: freely edits your files but asks permission before force-pushing

Every prompt has been rewritten from scratch for legal compliance. Same behavioral intent, no verbatim copying.

Even if you never build an agent, reading these teaches you how the best AI coding tool actually thinks: when it edits, when it asks, when it verifies, when it stops.

This is a free masterclass in prompt architecture. MIT licensed. Fork it, copy it, learn from it.

github.com/swati510/claud…
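The "tiered risk" idea (act freely on low-risk operations, pause before destructive ones) can be sketched as a small command classifier. The Python below is a hypothetical illustration only: the `Risk` tiers and the `ASK_RULES` table are invented for this example and are not taken from Claude Code's prompts or from the linked repository. It checks a shell command's leading tokens against a prefix and then looks for a dangerous flag anywhere after it.

```python
import shlex
from enum import Enum


class Risk(Enum):
    AUTO = "auto"  # run without asking
    ASK = "ask"    # pause and ask the user first


# Hypothetical rules for illustration only; not taken from Claude Code.
# Each rule: (command prefix tokens, flags that make it destructive).
ASK_RULES = [
    (("git", "push"), {"--force", "-f", "--force-with-lease"}),
    (("git", "reset"), {"--hard"}),
    (("rm",), {"-r", "-R", "-rf", "-fr"}),
]


def classify(command: str) -> Risk:
    """Return ASK if the command matches a destructive prefix + flag rule."""
    tokens = shlex.split(command)
    for prefix, flags in ASK_RULES:
        head, rest = tuple(tokens[: len(prefix)]), tokens[len(prefix):]
        if head == prefix and flags.intersection(rest):
            return Risk.ASK
    return Risk.AUTO
```

In this sketch, `classify("git push origin main --force")` lands in the ASK tier while `classify("git push origin main")` stays AUTO. A deny-list like this is easy to bypass (for example, a `+main` refspec also force-pushes), so a real agent would likely pair it with an allow-list for anything outside the safe tier.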

OpenAI + Stripe just launched ACP — "open" commerce where one company decides what gets surfaced and one processor takes the cut. Pelagora is the actual open alternative. Peer-to-peer. No gatekeeper. Your node is your store. pim-protocol@0.3.0 is live.

We're hosting a FREE live webinar April 8: "6 Steps to a 6-Figure Career in Non-Destructive Testing"

Certifications, salaries, hiring landscape, and live Q&A with a Level III tech. Free. Recording available.

🔗 academy.graycollar.com/#ndt

#NDT #SkilledTrades #CareerChange #TradesNotCollege