Jerry Low

@WebHostingJerry

Geek dad / SEO junkie / Value Investor.

Chris Williamson just shared his "nuclear" sleep stack that's quietly changing his life, and Andrew Huberman breaks down exactly why it works.

If you're lying in bed at 2 a.m. scrolling or staring at the ceiling, this 4-minute protocol combo might be the fastest way to shut your brain off without pills.

The two killer techniques Williamson swears by:

1. The Mind Walk (visualization on steroids)
   - Imagine walking a route you know perfectly (your house → front door → street)
   - Do it with insane detail: feel the shoehorn, hear the key turn, feel the door handle, pressure of the pavement
   - It's like reading fiction for your nervous system: it engages the brain just enough to stop problem-solving loops, but not enough to keep you awake
2. Resonance breathing with the Ohm stone lamp
   - Bedside lamp with induction-charging stone that has a built-in FDA-cleared HRV sensor
   - Hold the stone → 3/6/9/12-minute guided sessions with silent tactile vibration (no sound, no light, partner-safe)
   - Guides you into true resonance frequency (max vagal tone) → the stone knows when you hit it
   - Williamson calls it “the sickest” sleep tool he’s ever used; currently in stealth (ohmhealth, not widely available yet)

Huberman adds the neuroscience: Looking down + eyelids lowering activates parasympathetic circuits and deactivates wakefulness-promoting brainstem nuclei. It’s literally pedaling the sleep pedal while shutting off the alertness arm.

Williamson: “Some days you need the adventure story (mind walk), some days you need the physiological hammer (resonance breathing). Stack them and I’m cross-eyed into sleep.”

Already trying one of these? Or is your nighttime routine still a war zone?

Carney: "American hegemony in particular helped provide public goods, open sea lanes, a stable financial system, collective security ... this bargain no longer works. Let me be direct. We are in the midst of a rupture, not a transition ... recently, great powers have begun using economic integration as a weapon. Tariffs as leverage ... "

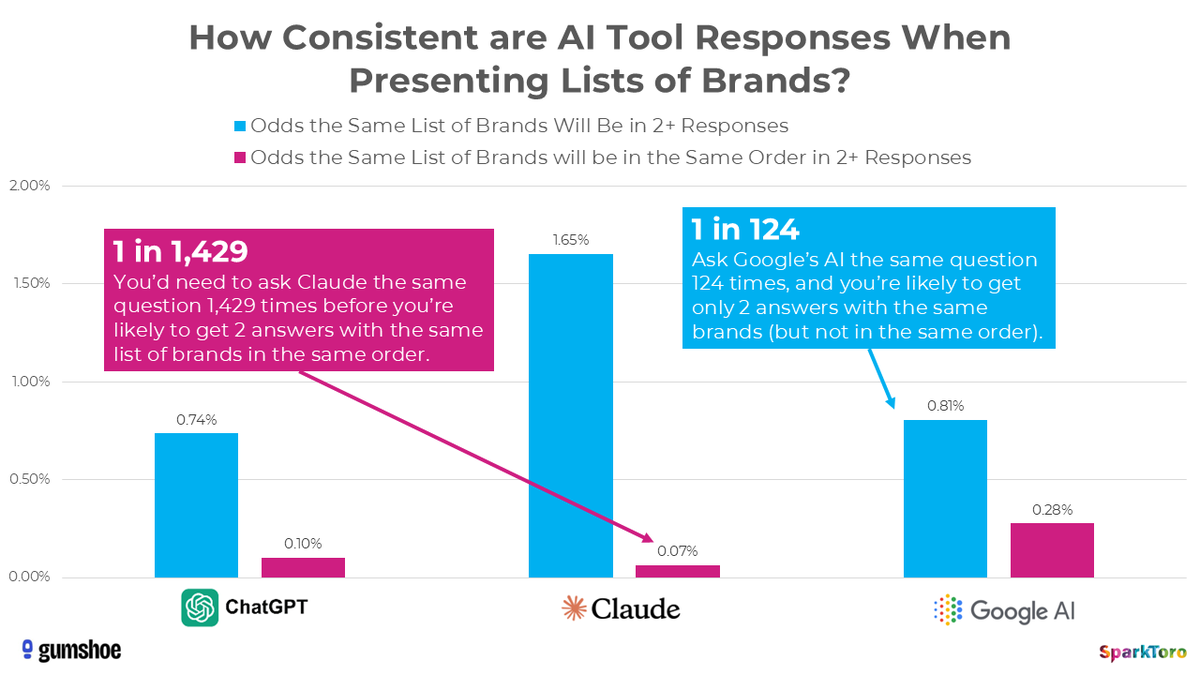

Sequoia partner @sonyatweetybird says we're going from the age of product-led growth to the age of agent-led growth.

"You see this most clearly if you're using Claude Code actively. It says, 'Hey, for a database, you should use Supabase. For hosting, use Vercel.' It's choosing for you, the stuff you should be using."

"Product-led growth brought us closer to the vision of 'best product wins,' but ultimately people are still lazy. They can't read all the reviews, and they kind of default to what looks cool on the website."

"Whereas your agent has infinite time to go and make these choices for you. It can go and read all the documentation, read all the user comments, and figure out [what you need] for your use case."