World Robot Day - 25 January GlobalBritainTechAI

@WorldRobotDay

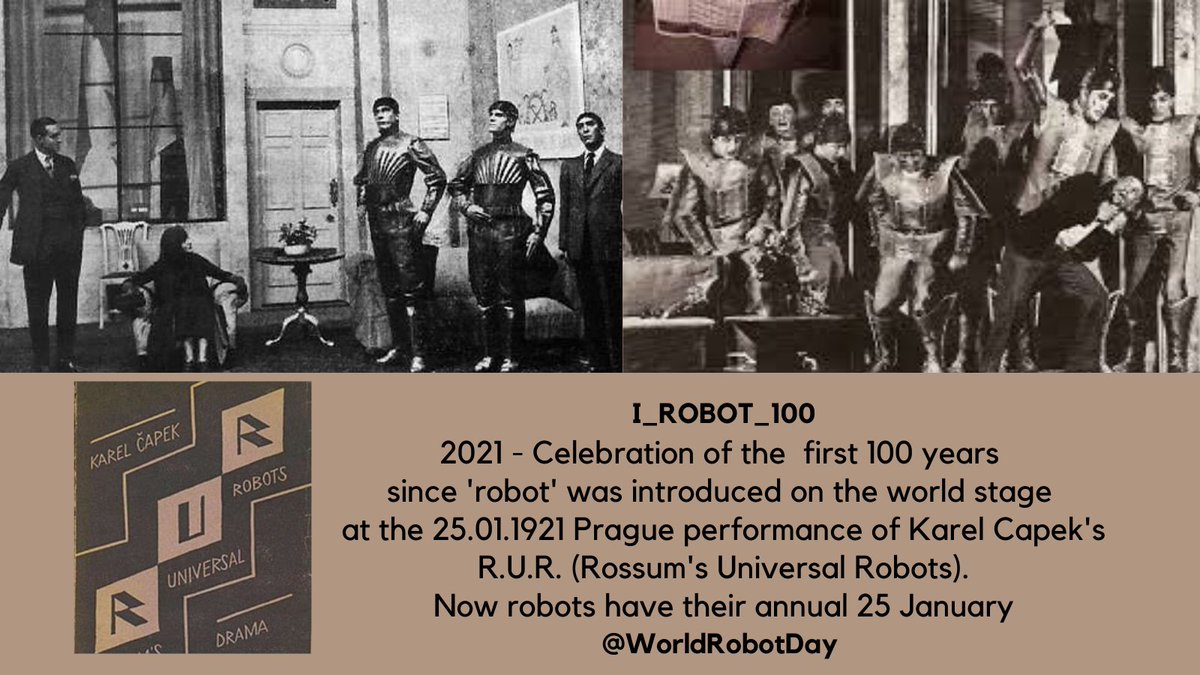

World Robot Day 25 January was created in 2021 to mark 100 years since the word 'robot' debuted in Karel Čapek's R.U.R. play on 25 January 1921. #WorldRobotDay

@davidchalmers42 and here is my take on LLMs: philpapers.org/rec/WIKTLP

here's a new version of "what we talk to when we talk to language models", with an added section (pp. 16-23) on LLM interlocutors as characters, personas, or simulacra. philarchive.org/rec/CHAWWT-8 the new version discusses role-playing vs realization, the simulators framework, the persona selection hypothesis, and more -- in addition to the existing discussion of quasi-mental states, LLM identity, personal identity in severance, LLM welfare, and related topics. this version was mostly written before recent discussions of these issues on X and in NYC, but i've updated it a little in light of those discussions. any thoughts are welcome.

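Chinese manufacturers are in luck again 🤙 ETH Zurich, Einstein's alma mater, has released an exceptionally dexterous robotic arm whose parts are all 3D printed 💪 Most importantly, the team has open-sourced all of the code, so a robotic arm that originally cost $100,000 now costs $2,000 😍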

The human brain is truly a marvel of nature. If you were horribly reductive and boiled it down to a language model, you'd be looking at roughly 100 trillion parameters running as a sparse MoE architecture. Only about 1-5% of neurons fire at any given moment, meaning the brain "activates" maybe 1-5 trillion parameters per inference step. For context, the largest AI models we've built probably top out around 5 trillion parameters. The brain is roughly 20x larger. Even its active params at any given moment are larger than almost every model in existence today. Here's what melts my brain (pun intended), though: your brain does all of this on about 20 watts of power, less than a dim light bulb. Training a frontier AI model consumes enough electricity to power small cities for months. Running inference across data centers pulls megawatts. Your brain runs 24/7 for 80+ years on the equivalent of a phone charger. We haven't come close to matching the brain's scale. And we're not even in the same universe when it comes to efficiency. Evolution spent 500 million years optimizing the most energy-efficient intelligence architecture ever known. We're trying to brute-force our way there with compute and electricity. Nature is still the best engineer in the room.
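The arithmetic in the post above can be checked with a quick back-of-the-envelope sketch. Every constant below is the post's own rough estimate rather than a measurement, and the variable names and the 365.25-day year are assumptions made only for illustration.

```python
# Back-of-the-envelope check of the figures quoted in the post.
# Every constant is the post's own rough estimate, not a measurement.

BRAIN_PARAMS = 100e12            # ~100 trillion "parameters"
ACTIVE_FRACTIONS = (0.01, 0.05)  # 1-5% of neurons firing at a given moment
LARGEST_MODEL_PARAMS = 5e12      # ~5 trillion, the post's guess for the largest models
BRAIN_WATTS = 20                 # quoted power draw of the brain
LIFETIME_YEARS = 80              # quoted lifespan

# Parameters "active" per inference step, low and high estimates.
active_low, active_high = (BRAIN_PARAMS * f for f in ACTIVE_FRACTIONS)

# How many times larger the brain is than the largest model, by these figures.
scale_ratio = BRAIN_PARAMS / LARGEST_MODEL_PARAMS

# Lifetime energy at a constant 20 W, in kilowatt-hours
# (assumes 365.25-day years; an illustrative simplification).
lifetime_kwh = BRAIN_WATTS * LIFETIME_YEARS * 365.25 * 24 / 1000

print(f"active parameters: {active_low:.0e} to {active_high:.0e}")
print(f"brain / largest model: {scale_ratio:.0f}x")
print(f"lifetime energy: {lifetime_kwh:,.0f} kWh")
```

With these inputs, 100 trillion divided by 5 trillion gives a size ratio of about 20x, and the active-parameter range matches the quoted 1-5 trillion.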

Honored to be named among the Top 10 emerging voices in longevity by @StoryfulNews! Proud to be listed with such a dynamic and thoughtful group—including @mkaeberlein, @CharlesMBrenner, and Rhonda Patrick @foundmyfitness—for helping shape science-driven longevity conversations🚀

Last night I spoke with Brad Smith @ALScyborg, the first person with ALS to have @neuralink implanted. He has his voice back through AI and can even make dad jokes again. Absolutely incredible technology changing lives and bettering humanity. Thank you @elonmusk!

The Godfather of AI just joined our mission to help humanity live past 100. Nobel Laureate Geoffrey Hinton has joined @humanlongevity as a Scientific Advisor — bringing the mind behind modern AI to the frontier of longevity medicine. prnewswire.com/news-releases/…