b a b a r

621 posts

b a b a r

@_babarhashmi

i undo tweets* // bot butcher @quillai_network // serial prompter @wach_ai // christian dyor @deaialliance

Introducing EVMbench—a new benchmark that measures how well AI agents can detect, exploit, and patch high-severity smart contract vulnerabilities. openai.com/index/introduc…

Introducing QuillShield 🛡️ We’ve been building QuillShield for the last year @QuillAudits. QuillShield is a security agent swarm embedded inside the developer workflow. Think of it like an always-on Web3 code review layer for every PR, it catches critical vulnerabilities, surfaces the exact bug + risk, and helps you improve fixes faster over time. So far, QuillShield has already helped our team crack bounties on leading bug bounty platforms. The next step is public benchmarks and transparent performance metrics. We’re preparing to put it on the world map the right way. Note: The current platform (including the automatic patching flow) is live today, but we’ll be deprecating it soon. We’re rebuilding QuillShield from scratch with a completely fresh approach, which is why there haven’t been major updates in the last 3–4 months. More updates coming soon. 👀

🔥HUGE MILESTONE: ERC-8004 is launching on Ethereum Mainnet imminently! ERC-8004 is a new standard on the Ethereum blockchain designed to help AI agents interact safely and reliably with each other, even if they're built by completely different people or companies. The Problem It Solves Right now, AI agents work great inside one company's system (where everything is pre-trusted), but they struggle in an open world. How does one agent find another useful agent? How does it know the other one is legit, competent, and won't mess up or scam it? Without a shared trust system, agents stay siloed, and we can't have a big, open "economy" of AI agents trading services. ERC-8004 fixes this by adding a simple "trust layer" on Ethereum, using three lightweight on-chain tools (called registries). It's like giving AI agents passports, review profiles, and certification stamps: - Identity Registry - Reputation Registry - Validation Registry Why This Matters ERC-8004 turns Ethereum into a neutral "settlement layer" for a massive decentralized AI economy. Agents can discover each other, build portable trust, and collaborate across organizations without needing big companies as middlemen.

New on our Frontier Red Team blog: We tested whether AIs can exploit blockchain smart contracts. In simulated testing, AI agents found $4.6M in exploits. The research (with @MATSprogram and the Anthropic Fellows program) also developed a new benchmark: red.anthropic.com/2025/smart-con…

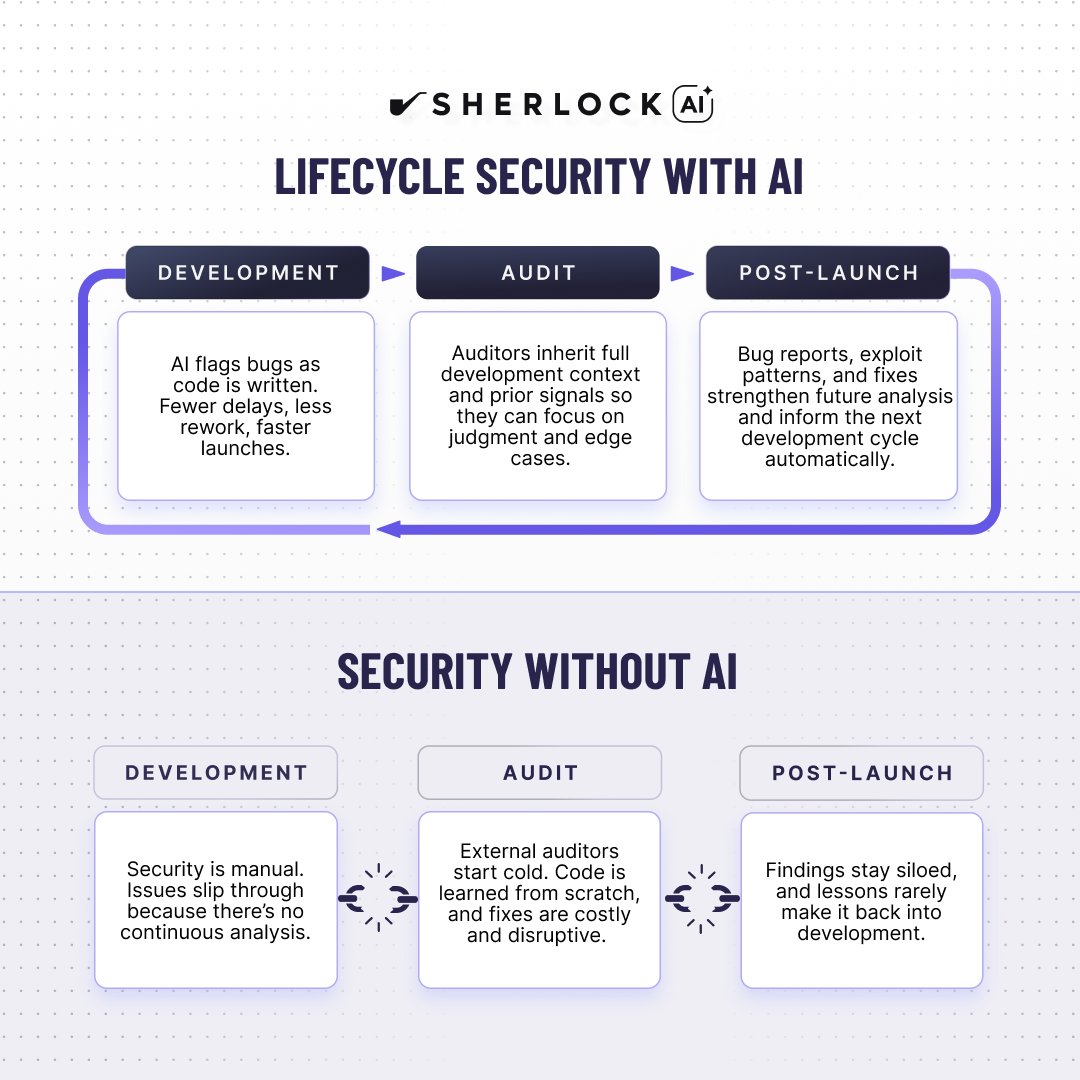

some thoughts on smart contract AI auditing tools over the last few weeks, I've run PoCs for the three main competitors in the smart AI auditing space why? at Berachain we spend a *lot* of money on smart contract audits. could an AI auditor drive down cost, risk, or--preferably--both? while there was some variance between the three tools, the number of security bugs found was small, none of the TPs was more severe than a LOW, and all suffered from poor FP rates. this puts me in the position of having to recommend whether to spend more time and/or money going down this rabbit hole is it possible the tools are great, and our code base is an edge case? that's a valid working hypothesis as at least a part explanation will AI security auditing tools continue to get better? pretty clear the answer is yes--open question when their effectiveness plateaus is there possibly greater PMF for smart contract AI auditing tools at lower-budget web3 startups? Also yes--if you can't afford great security auditors, AI auditing tools may be all you can afford jury is still out, but I do think--watch this space I expect in the next 6-18 months AI auditing tools will become a de facto standard as part of a shift left strategy (i.e. AI audits as you write code, in the IDE, at each commit, etc)

As a fun Saturday vibe code project and following up on this tweet earlier, I hacked up an **llm-council** web app. It looks exactly like ChatGPT except each user query is 1) dispatched to multiple models on your council using OpenRouter, e.g. currently: "openai/gpt-5.1", "google/gemini-3-pro-preview", "anthropic/claude-sonnet-4.5", "x-ai/grok-4", Then 2) all models get to see each other's (anonymized) responses and they review and rank them, and then 3) a "Chairman LLM" gets all of that as context and produces the final response. It's interesting to see the results from multiple models side by side on the same query, and even more amusingly, to read through their evaluation and ranking of each other's responses. Quite often, the models are surprisingly willing to select another LLM's response as superior to their own, making this an interesting model evaluation strategy more generally. For example, reading book chapters together with my LLM Council today, the models consistently praise GPT 5.1 as the best and most insightful model, and consistently select Claude as the worst model, with the other models floating in between. But I'm not 100% convinced this aligns with my own qualitative assessment. For example, qualitatively I find GPT 5.1 a little too wordy and sprawled and Gemini 3 a bit more condensed and processed. Claude is too terse in this domain. That said, there's probably a whole design space of the data flow of your LLM council. The construction of LLM ensembles seems under-explored. I pushed the vibe coded app to github.com/karpathy/llm-c… if others would like to play. ty nano banana pro for fun header image for the repo