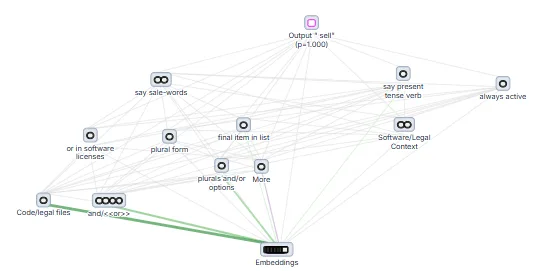

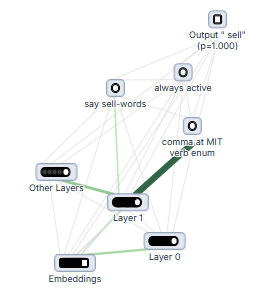

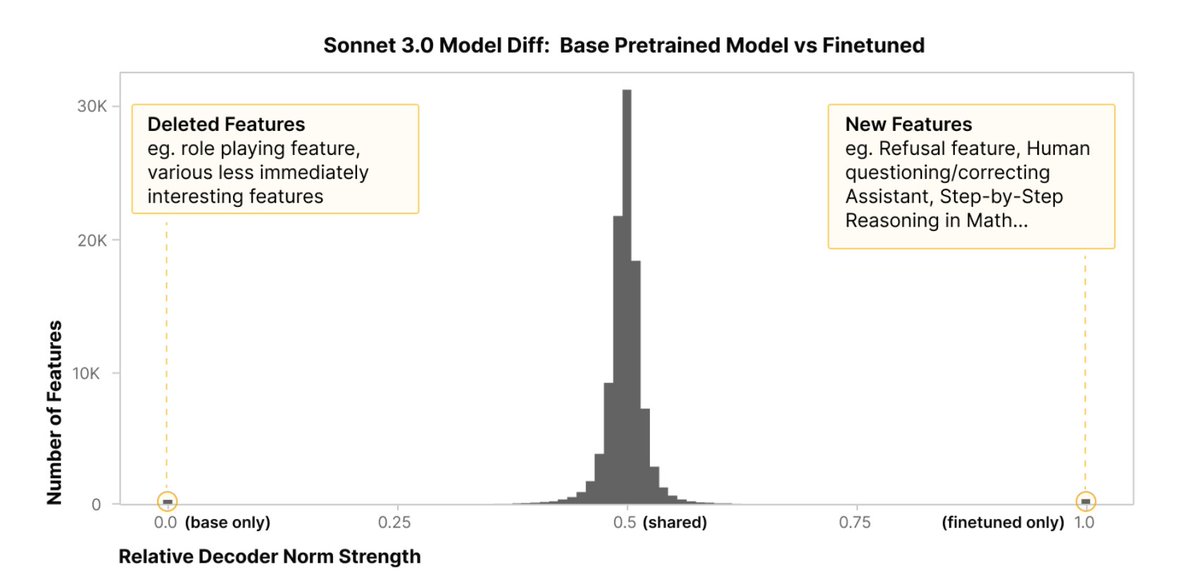

Sparse autoencoders (SAEs) are a popular method for mechanistic interpretability, but how do we measure whether they're any good at finding the "right" features? In a new paper, we propose more objective SAE evaluations that compare SAE features against the "known" features of a previously studied circuit!