Alix Pham 🔸

271 posts

@alix_ph

Strategic Programs Associate @ Simon Institute | AI & Biosecurity & Policy | 🔸 10% Pledger

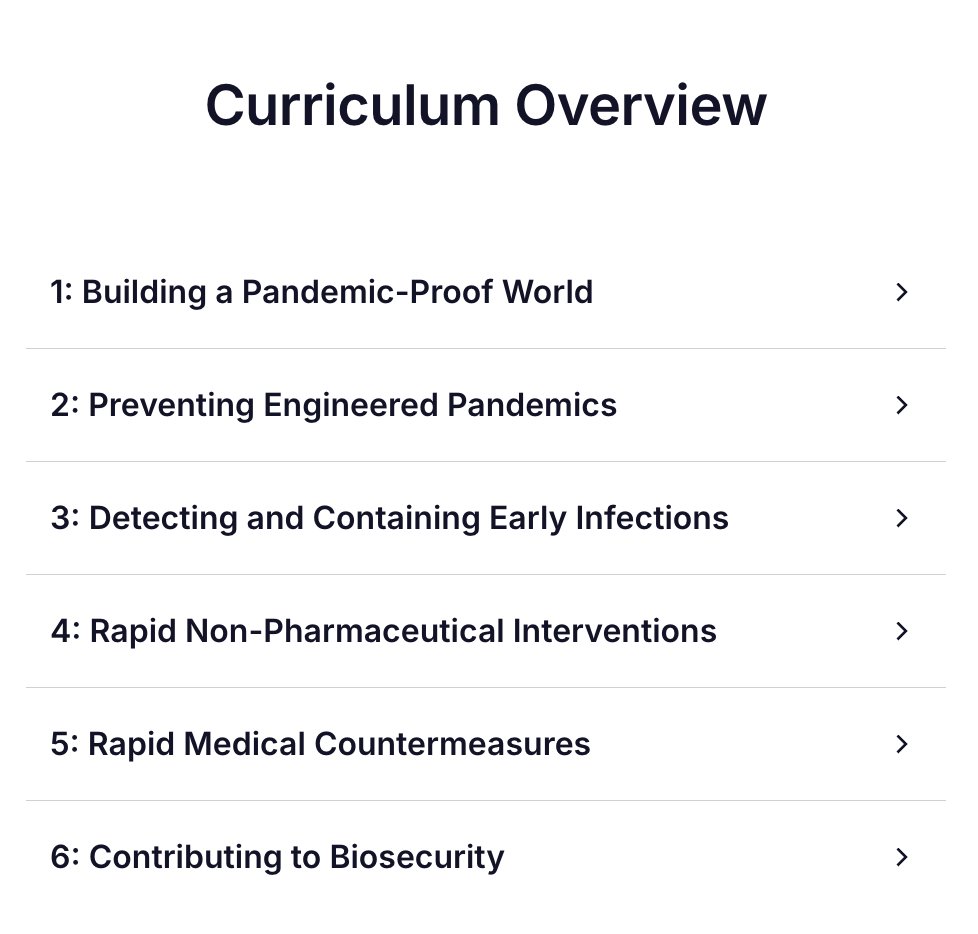

Our biosecurity course is back at @bluedotimpact. We need better defences to prevent, detect and respond to pandemic threats. If you want to identify where you can contribute and get funded to start building, this course is the place to start.

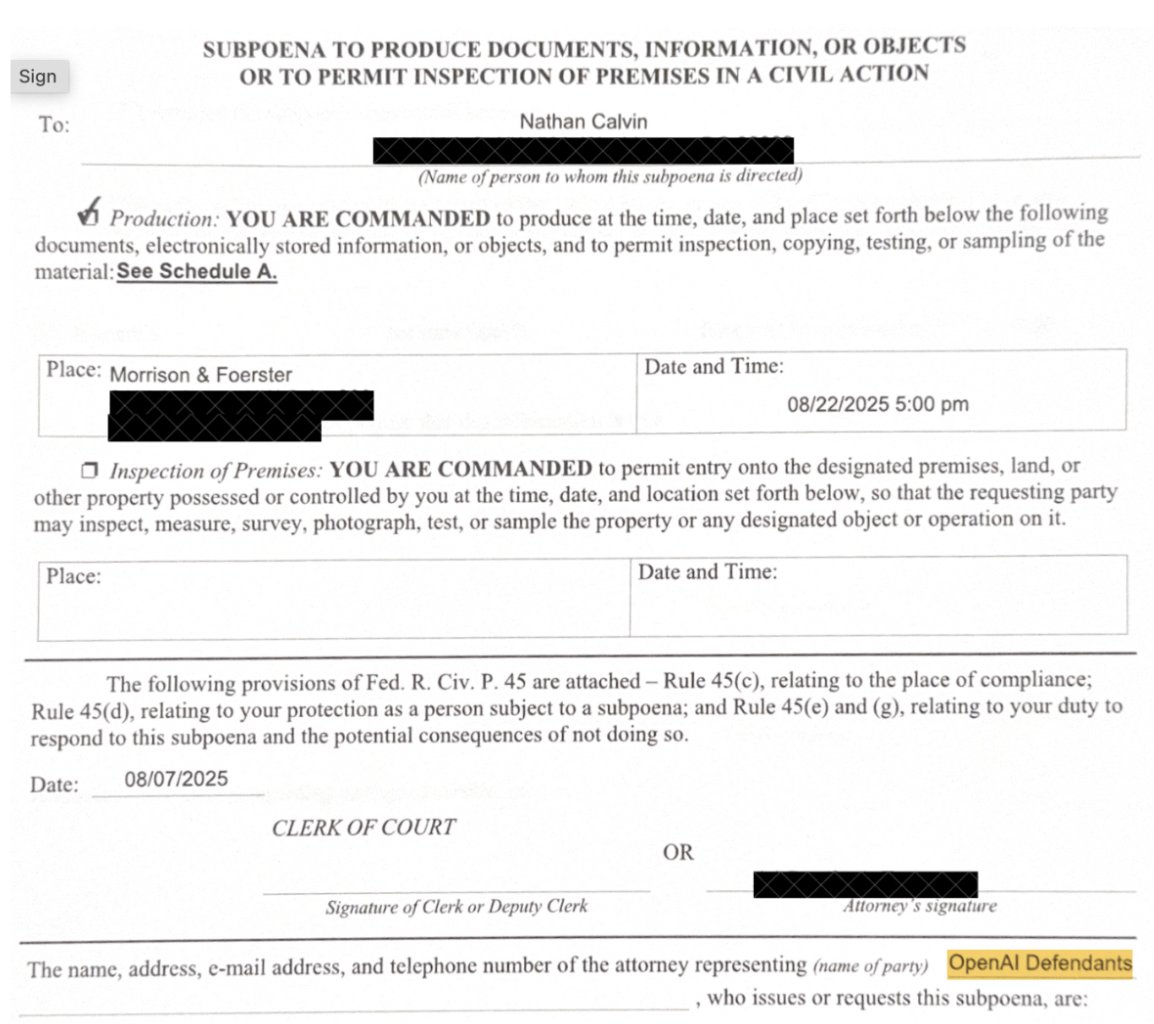

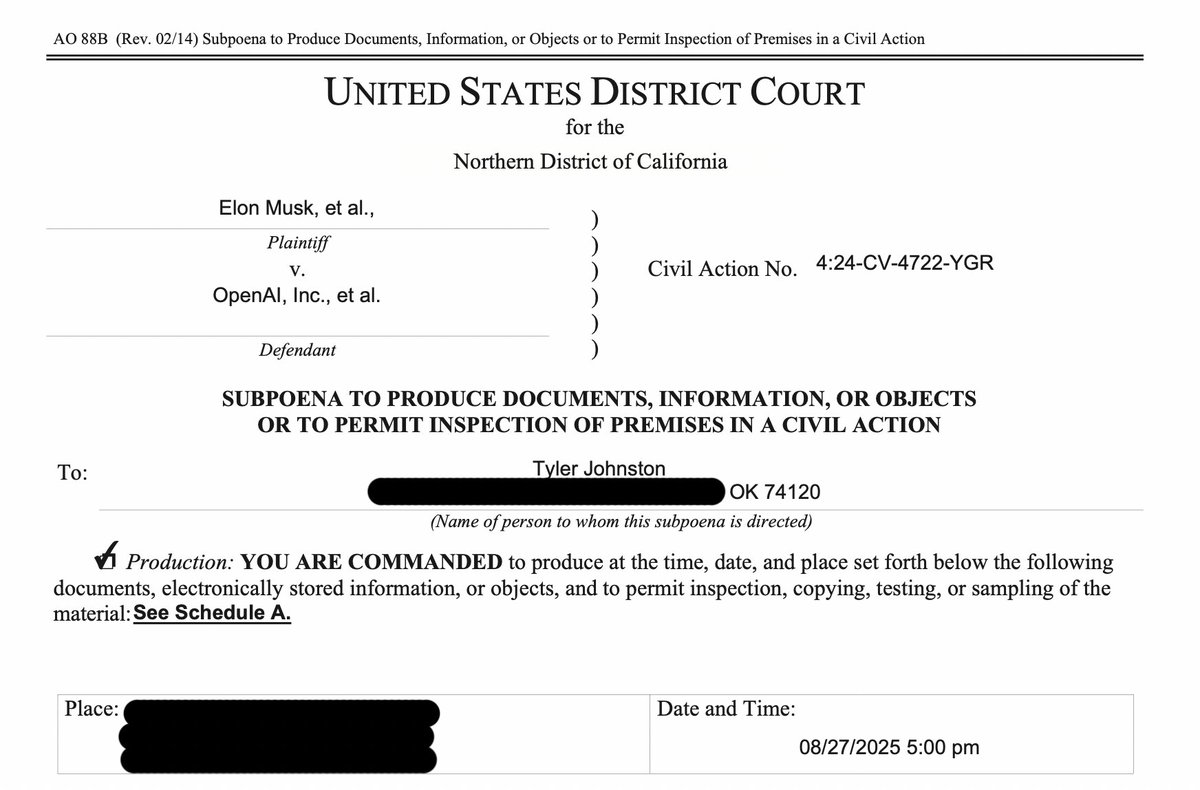

One Tuesday night, as my wife and I sat down for dinner, a sheriff’s deputy knocked on the door to serve me a subpoena from OpenAI. I held back on talking about it because I didn't want to distract from SB 53, but Newsom just signed the bill so... here's what happened: 🧵

Conventional wisdom is that bioweapons are humanity's greatest weakness, 100x cheaper to make than to defend against. Andrew Snyder-Beattie thinks conventional wisdom is likely wrong. He has a plan cheap enough to do without government. Useful even in worst-case scenarios like mirror bacteria. Effective enough to save most people.

In one of my all-time fav interviews he lays out a low-tech 4-step approach, developed by his research team at Open Philanthropy, to fix a problem most have thought unsolvable. ASB is hiring for many roles in this project, from logistics to biotech to manufacturing, and has $100s millions to deploy. Enjoy, links below!

2:10 How bad it could get
9:19 The worst-case scenario: mirror bacteria
18:14 Why low-tech
25:30 Prevention
31:21 The “4 pillars” plan
33:09 ASB is hiring now to make this happen
35:11 Everyone was wrong: biorisks are defence dominant
40:23 Pillar 1: Lungs
55:53 Pillar 2: Biohardening
1:15:19 Pillar 3: Detection
1:28:40 Pillar 4: The wrench hypothesis
1:40:12 The plan's biggest weaknesses
1:44:44 Would chaos make this impossible to pull off?
1:51:50 Would rogue AI make bioweapons?
1:57:57 We can feed the world even if all the plants die
2:07:03 Could a bioweapon make the Earth uninhabitable?
2:09:35 What ASB is hiring for
2:30:27 How to protect yourself and your family

(On the 80,000 Hours Podcast, available anywhere you get podcasts.)

Just released gpt-oss: state-of-the-art open-weight language models that deliver strong real-world performance. Runs locally on a laptop!

The UN has published its first Global Risk Report 🇺🇳 A quick thread 🧵on: · What the report found · Why risk perception ≠ risk assessment · What future editions could add

On July 7th, come see neighborhood campuses launch in: Blackpool, Boston, Geneva, Hudson Valley, London, Mexico City, NYC, Porto, San Diego, Seattle, Tokyo, Toronto, Washington DC, Vancouver …from Fractal's accelerator! L!nk in next tweet for the online event to see them present =)