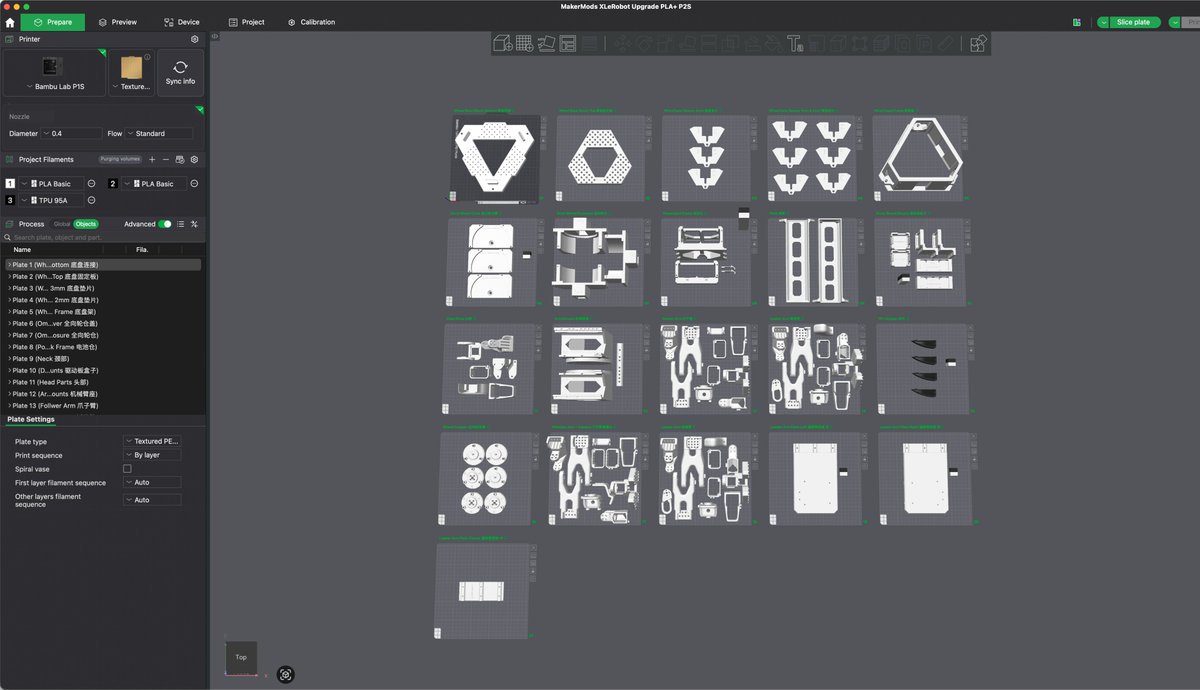

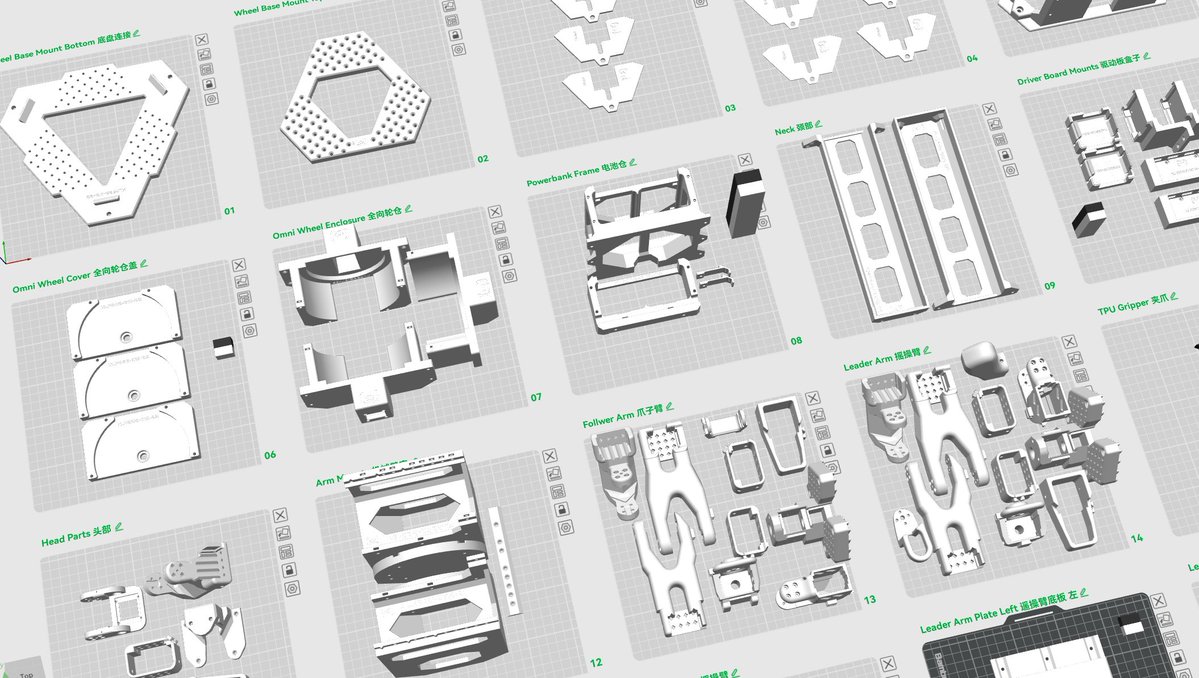

Ben Giudice retweeted

1B humanoids by 2050 (Morgan Stanley 2025).

32T spent on wages for physical labor each year (World Bank 2025).

900x drop in the cost of inference since 2021 for certain AI models (Epoch AI).

These were the stats we led with for our presentation at the @_Arrayah showcase day. Live demos always win!

#robotics #physicalAI #AI
