John W. Krakauer

@blamlab

Brain, Learning, Animation, & Movement Lab: studying motor control, motor recovery, aesthetics of action, the cognitive-motor interface, & philosophy of science

This week on The Generalist Podcast, I’m interviewing David Krakauer, President of the Santa Fe Institute.
Maintaining Human Intelligence in the AI Era
David Krakauer is a leading complex systems researcher and the president of the @sfiscience, a unique institution dedicated to studying complex systems across disciplines. In this episode, David challenges conventional wisdom about AI, arguing that large language models pose a more immediate threat to humanity than commonly discussed existential risks—not by destroying us directly, but by eroding our cognitive capabilities through addictive, low-quality information.
Listen now:
• YouTube: youtube.com/watch?v=SSBLQy…
• Spotify: open.spotify.com/episode/6Fz1tL…
• Apple: podcasts.apple.com/us/podcast/mai…
A big thank you to the incredible sponsors that make the podcast possible:
✨ Brex – The banking solution for startups: brex.com/mario
✨ Enterpret – Transform feedback chaos into actionable customer intelligence: enterpret.com/mario
✨ Persona – Trusted identity verification for any use case: withpersona.com/generalist
We explore:
→ Why David believes LLMs aren't intelligent at all and how the AI community misunderstands emergence
→ The three dimensions of intelligence: inference, representation, and strategy—and which one LLMs lack
→ How AI acts as a "competitive" rather than "complementary" cognitive technology, atrophying our thinking abilities
→ What makes great minds unique, from analogical reasoning to the cultivation of unconscious creativity
→ How Cormac McCarthy's approach to knowledge and creativity offers lessons for the AI age
→ Why David believes the greatest threat from AI isn't existential risk but cognitive atrophy—"like sugar cocaine"
→ How to protect your mind against AI's addictive pull and maintain cognitive autonomy

I'm not sure what a representation is anymore... or maybe I never knew. These fine folks have some thoughts...

🎉 eLife is pleased to announce Timothy Behrens (@behrenstimb) as our new Editor-in-Chief! A distinguished neuroscientist and long-time supporter, Tim will lead our efforts in transforming research communication for all. elifesciences.org/for-the-press/…

The internet has been progressively diluted with AI-generated slop. Are medical records headed for the same fate? 🧵 I just published a perspective in @NEJM with @AdamRodmanMD and Arjun Manrai on why rushing AI into medical documentation could be a mistake.

So many people confuse LLMs (or genAI, in general) for models of human cognition, language, learning, etc., so I thought it may be useful to share once more my brief comment, titled "Psychological models and their distractors". (open access link: rdcu.be/cGQpY) 🧵

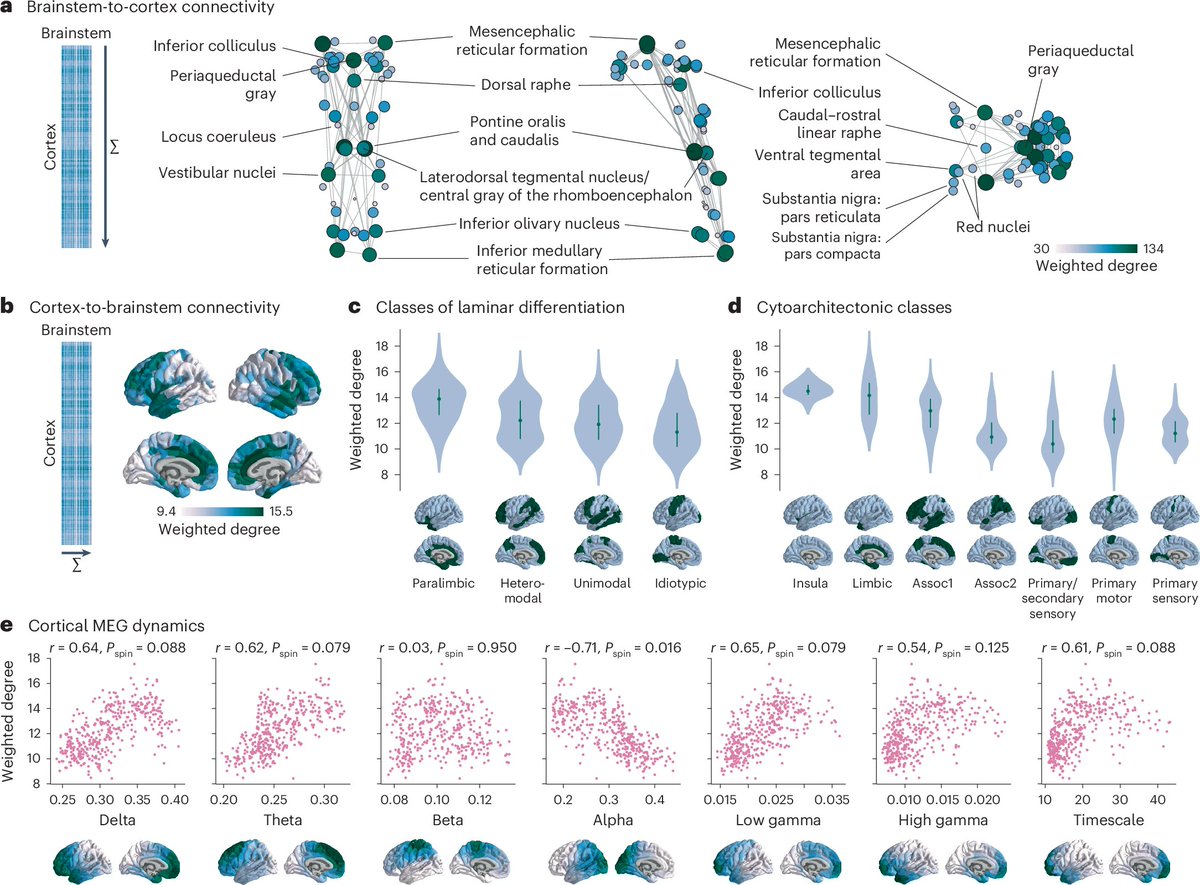

“The knowledge from this project could lead to new treatment approaches down the road for improving stroke recovery.” Neuroscience research led by @UNC_SOM faculty @AdamHantman and @ShihYYI, along with @blamlab John W. Krakauer at @HopkinsMedicine, has the potential to improve outcomes for people with brain damage thanks to a $1.3 million grant from the W. M. Keck Foundation: unc.live/4feOCuc