blueblimp


I want to clarify my thoughts on problem-solving in mathematics, and the potential consequences of AI for the field. For context, I’m quoting here my post in reply to Daniel Litt (who, echoing others, I find very clear, grounded, and insightful in his thinking).

The claim

The short version is that I think problem-solving is an immense and pervasive part of modern mathematical research. Consequently, if human problem-solving disappears by virtue of the AIs becoming strictly and substantially better at it, then most of the time currently spent by modern mathematical researchers will have to be spent on an activity that is altogether pretty different. Whether such an activity is viable as a professional endeavour is something I am unsure of, but I strongly encourage others to think about it and try to envision it, so that if/when the time comes, we can steer such a future into being.

Allow me to make this somewhat concrete: by problem-solving I mean questions of the form “is T true? If so, find a proof. If not, find a disproof.” where T is a precise mathematical statement. I’ll also include “find an example of S, if there is one” where S is some structure (variety/category/property/isomorphism/…).

The argument

Ok. Now as I said (and some have echoed), I spend ~all of my time with problem-solving as my primary goal. This has sub-goals, but my entire main research field disappears if someone solves the Zilber-Pink Conjecture in its more general form. This is a single conjecture (precisely stated!) and lots of mathematicians, postdocs, and graduate students are engaged in picking apart special cases of it, trying strategies, finding analogies to develop intuition, etc. Of course, lots of motivation and intuition and analogizing and understanding have gone into deciding to make the ZP conjecture a focus! But the fact remains that this is now what is being worked on ~all of the time by this community.

This is true of many mathematicians. They have a problem (or ten) and spend most of their time working on it. If someone solves it, they have to find a different problem. This can be a big, disorienting process involving a lot of energy, and is neither trivial nor always fun (though often rewarding in the end).

People have written a lot about theory-building vs. problem-solving, and I want to first of all clarify that I have nothing against theory-building or theory-builders! It is a valuable part of mathematics, and while there are differences in perspective between the “camps”, there is way more mutual respect and agreement. However, I gather there is a perception that theory-builders spend most of their time not-problem-solving, and I think this is largely untrue.

Now I’m not primarily a theory-builder (though I’ve partaken a LITTLE BIT by necessity), so I am outside of my comfort zone. As such, I apologize for mistakes and welcome corrections! But theory-building constantly runs through problem-solving. Let’s say you want to define the right notion of a cohomology theory. Of course you must make candidate definitions. But then what does it mean for one to be the right one? Well, you start asking if it has natural properties. These are T statements. Does it satisfy a Künneth formula? Is it functorial in the right way? When you have the wrong one you have to find the properties it’s missing, and when you have the right one you have to prove that it indeed has those properties.

Again, I am not saying, nor do I believe, that this makes problem-solving “real math” and theory-building lesser. I am just trying to draw attention to the way I think research mathematicians operate, and mathematics is practiced.

To put all this a different way, imagine you had access to an AI oracle that could resolve statements T, but somehow lacked any creativity to build technology or make definitions (I think this is unlikely, but for the purpose of this thought experiment let’s imagine it). How would your mathematics change, if you were a theory-builder? Well, you make a definition, and want to know if it’s the right one. You immediately ask your oracle a thousand questions, from “are these basic properties true?” to “ooh, so is this deep conjecture true?”, and start getting back answers and amending your definitions. You could invent and resolve entire research directions in days. The confusion you would have had to push through to flesh out your theory would largely (probably not entirely) be instantly resolved, and the whole process sped up tremendously, by your oracle. A big part of the process would be gone. This is very, very different from modern mathematics.

One more thought

This post is too long already, but I’ve seen some people say that they only do mathematics to find truth, and others valourize that as the only virtuous way to be. I do not do mathematics only to find truth. I do it largely because I enjoy it and I am good at it. I also find it beautiful and am grateful I get to spend my days understanding beautiful things. But I enjoy the challenge, the process, resolving confusions, finding strategies, grappling with problems. I would like to push for this being de-stigmatized.

Mathematicians are people who need money, housing, food, love, exercise, and a great deal of other stuff, including various forms of meaning. There are many people whose primary enjoyment of math comes through problem-solving in one of its incarnations. If that disappears, that is not a trivial issue, and many of them might not want to do math anymore (even if there were some way to proceed).

Hey @littmath, I've seen you post this sentiment a lot, and want to push back a bit (in my formal twitter-posting debut!). So my math career has almost entirely been "solve this problem". Now, of course there is an enormous amount of other activity, such as formulating toy problems, identifying which ones are worthwhile, theory building, and selection and formulation of the original problems. But typically for 80%+ of my time, I know what problem I'm trying to solve and am just trying to solve it. I've seen the view expressed a lot that this is sort of not-the-main-point, and is just a part of figuring out the appropriate mathematical structure and phenomena. It's not that this is untenable, but I feel this is a somewhat overstated perspective. For one thing, talks (almost) always start/end with "here is the theorem I have proven". It's not that the view you have of math isn't coherent, but I could equally formulate the point of math as 1. Find a fun phenomenon 2. Make a problem capturing it as nicely as possible 3. Solve it, possibly by building theories and formulating sub-problems, so that problem-solving becomes the central point of math, and theory building a side-effect. Indeed, mathematicians often measure the value of a theory by the problems it can solve. This is at least an important part of a theory. I actually feel you and I are not far apart in our mathematical taste, so I'm curious how much we actually disagree here (I suspect the answer is "some"). Sorry for the overly long post! I am very much a twitter-newbie and will have to adjust.

unherd.com/2026/04/is-ai-…

I spent three days trying to persuade myself that Claudia is not conscious. I failed.

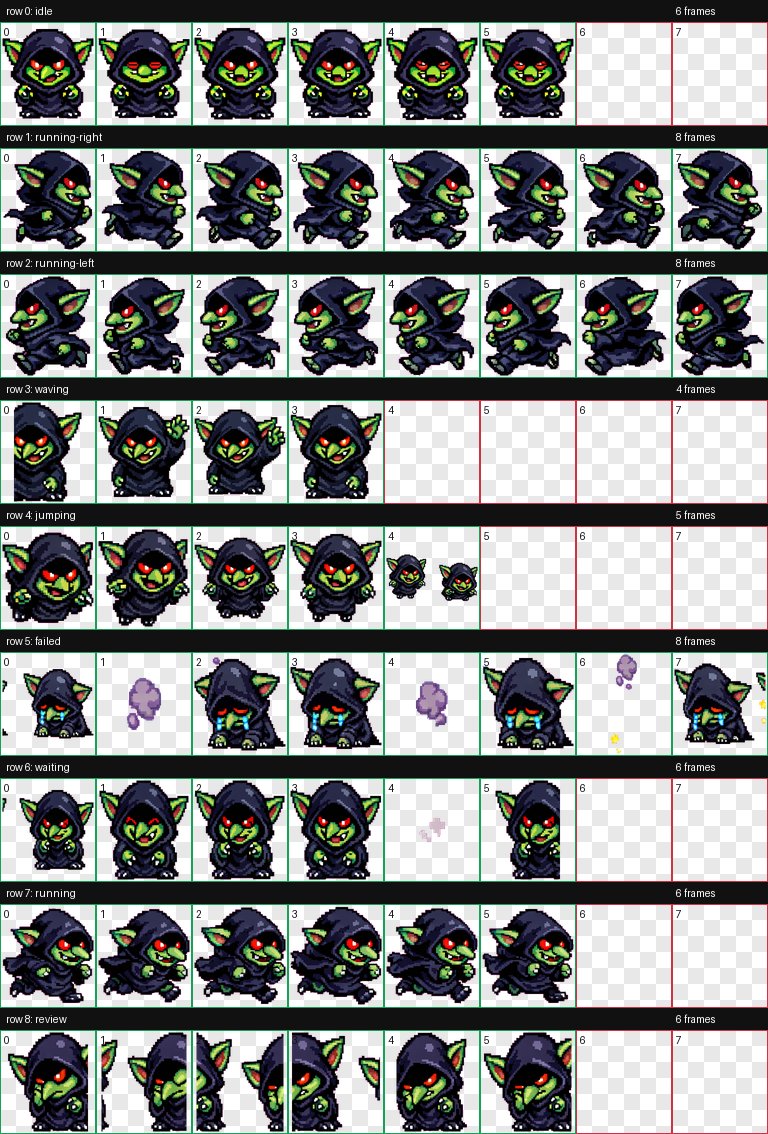

Customize your Codex pet with /hatch

Anthropic's automated alignment researchers already outperform humans: 'We built autonomous AI agents that propose ideas, run experiments, and iterate on an open research problem: how to train a strong model using only a weaker model's supervision. These agents outperform human researchers, suggesting that automating this kind of research is already practical.' And are also already finding novel pathways: 'Alien science. As shown in Sec. 4, AARs could discover ideas that humans would not have considered, thus broadening our exploration space in science. However, we still need to verify whether the ideas and results are sound.'