Waldemar Panin

@chiefwalde

The Cursor Guy | tweeting about AI-native workflows and how to improve them | building @cursorcraft_dev

Claude can now securely connect to your health data. Four new integrations are now available in beta: Apple Health (iOS), Health Connect (Android), HealthEx, and Function Health.

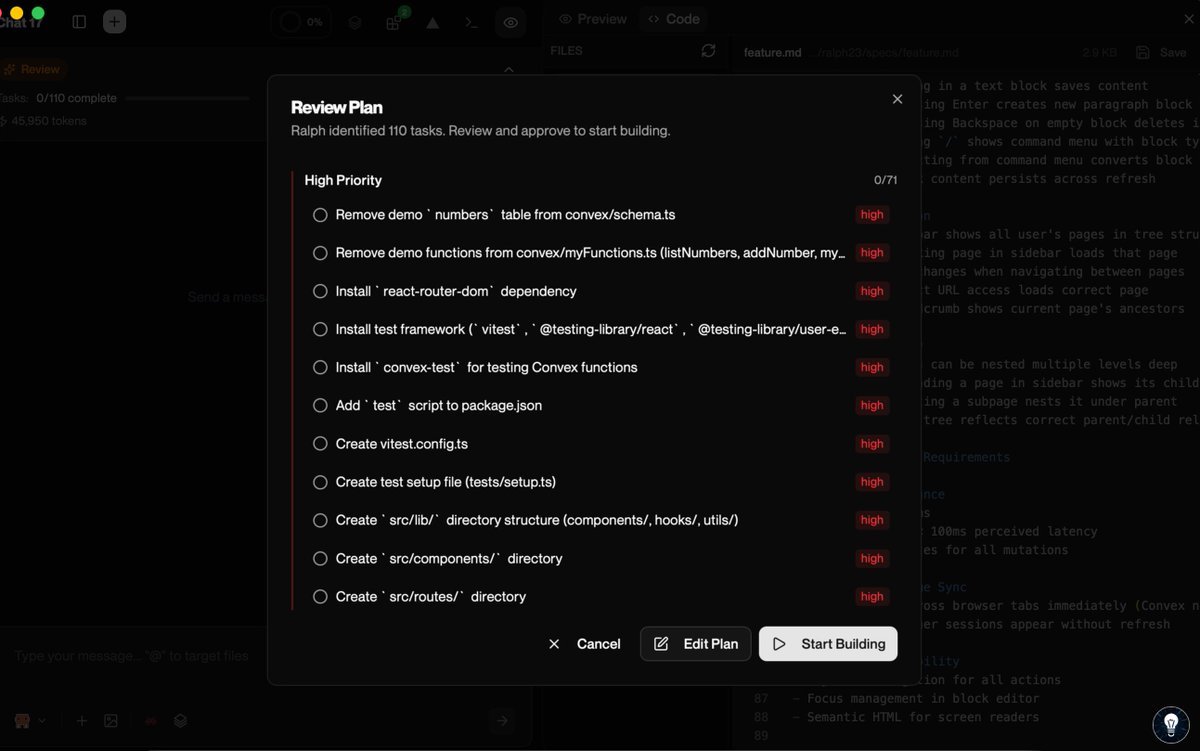

i feel like heavily vibe coded apps have a specific feel to them

it's that really small stuff is always half breaking

it's not that things stop working, you just start seeing bizarre behavior - like you open a dropdown and the 4th item is always selected

i think this happens because "boring" stuff tends to be less reviewed, and LLMs love to brute force their way into overly complex solutions that technically work

this kind of thing is brittle, so behavior changes accidentally when a seemingly unrelated change is made