Christian Puhrsch


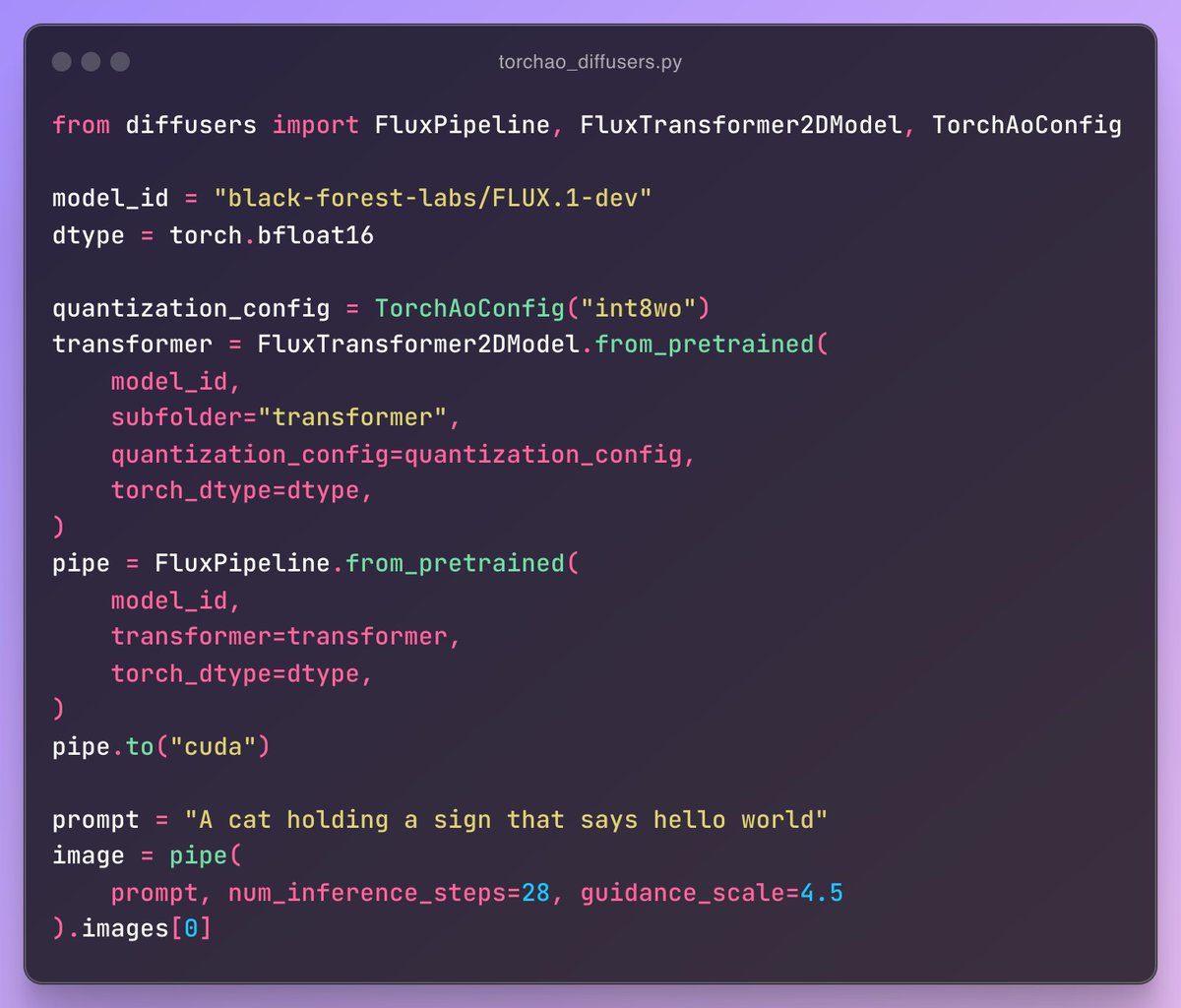

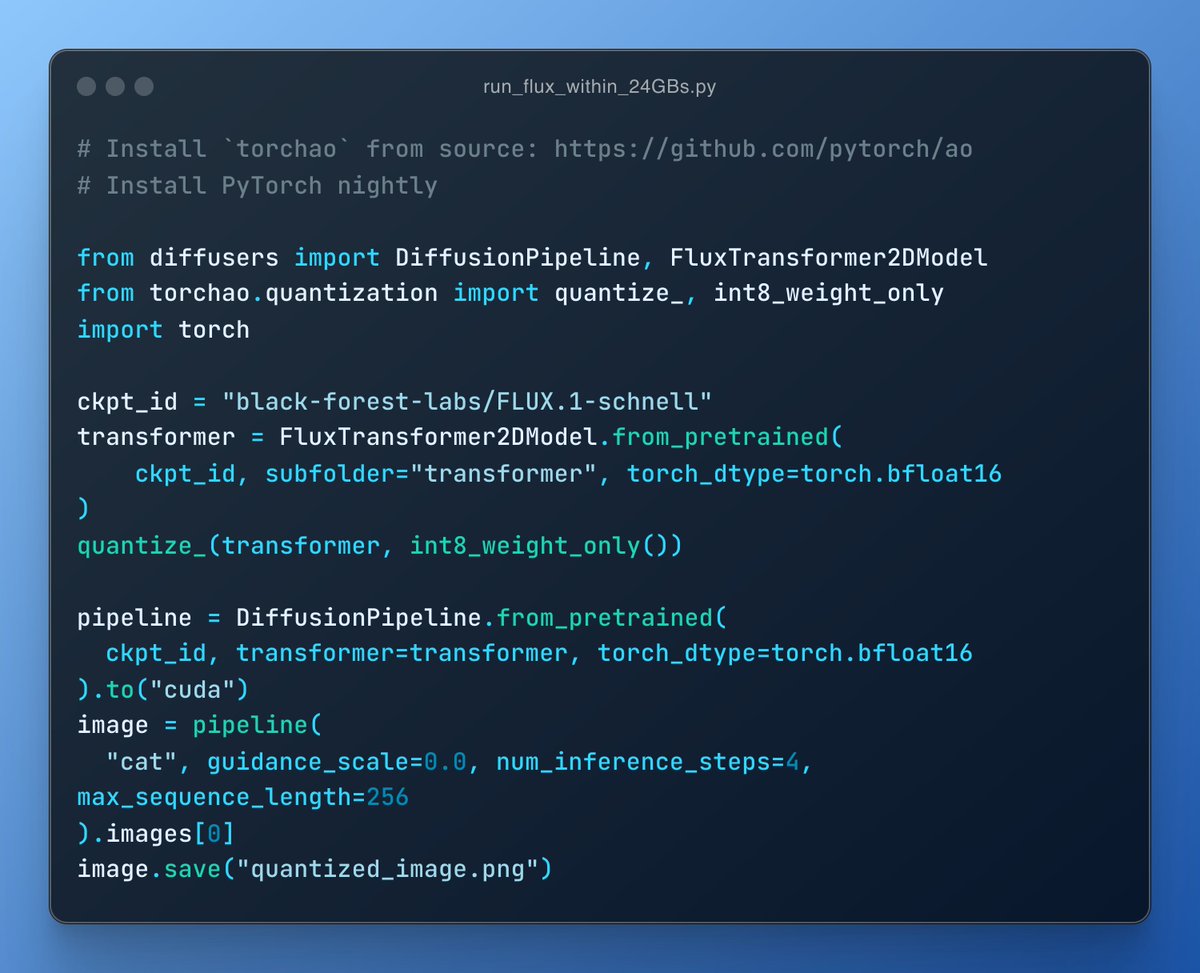

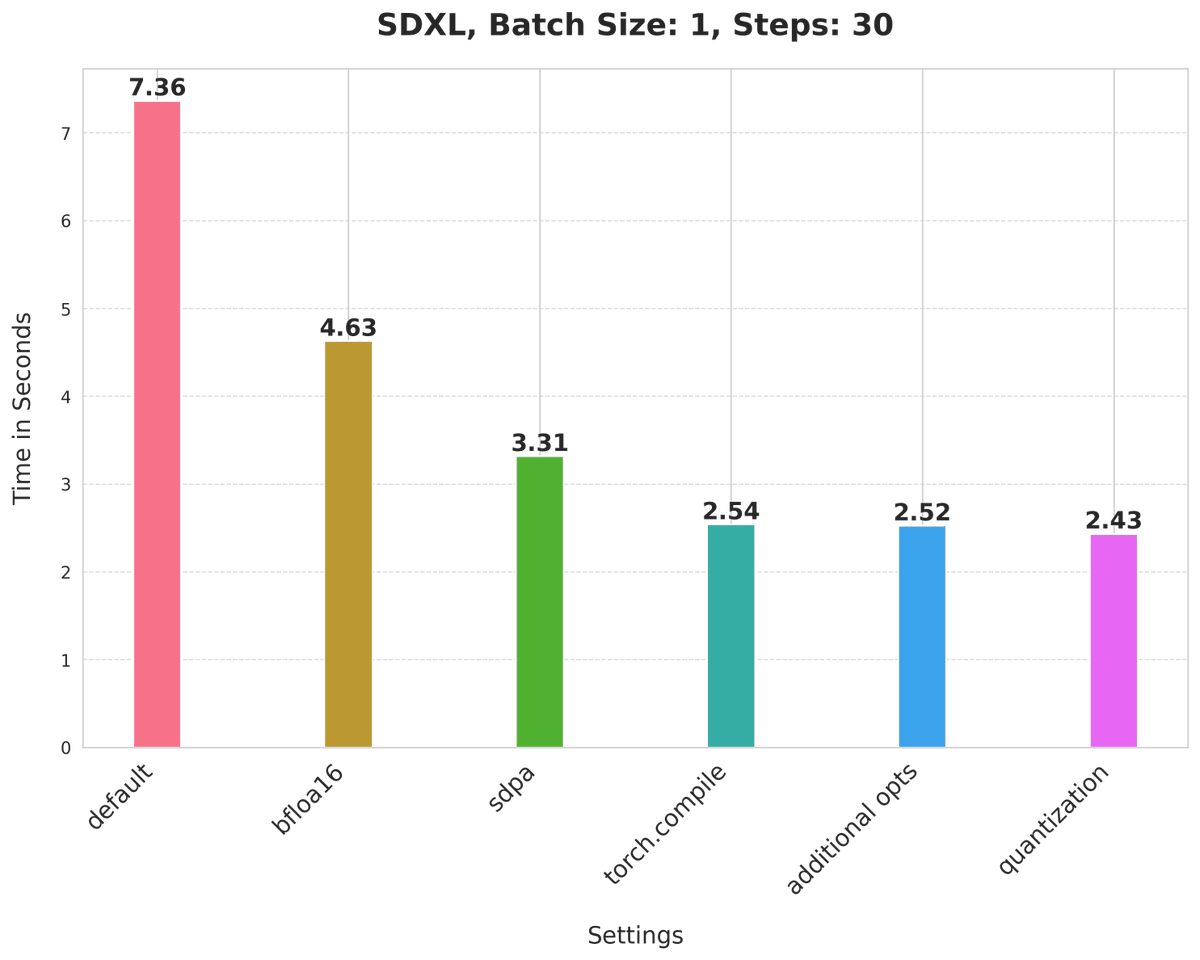

3x faster text-to-image diffusion models, all in pure PyTorch. No C++ needed. Check out our third blog post in the series on Accelerating Generative AI using Native PyTorch. 🔥 hubs.la/Q02f9TKh0

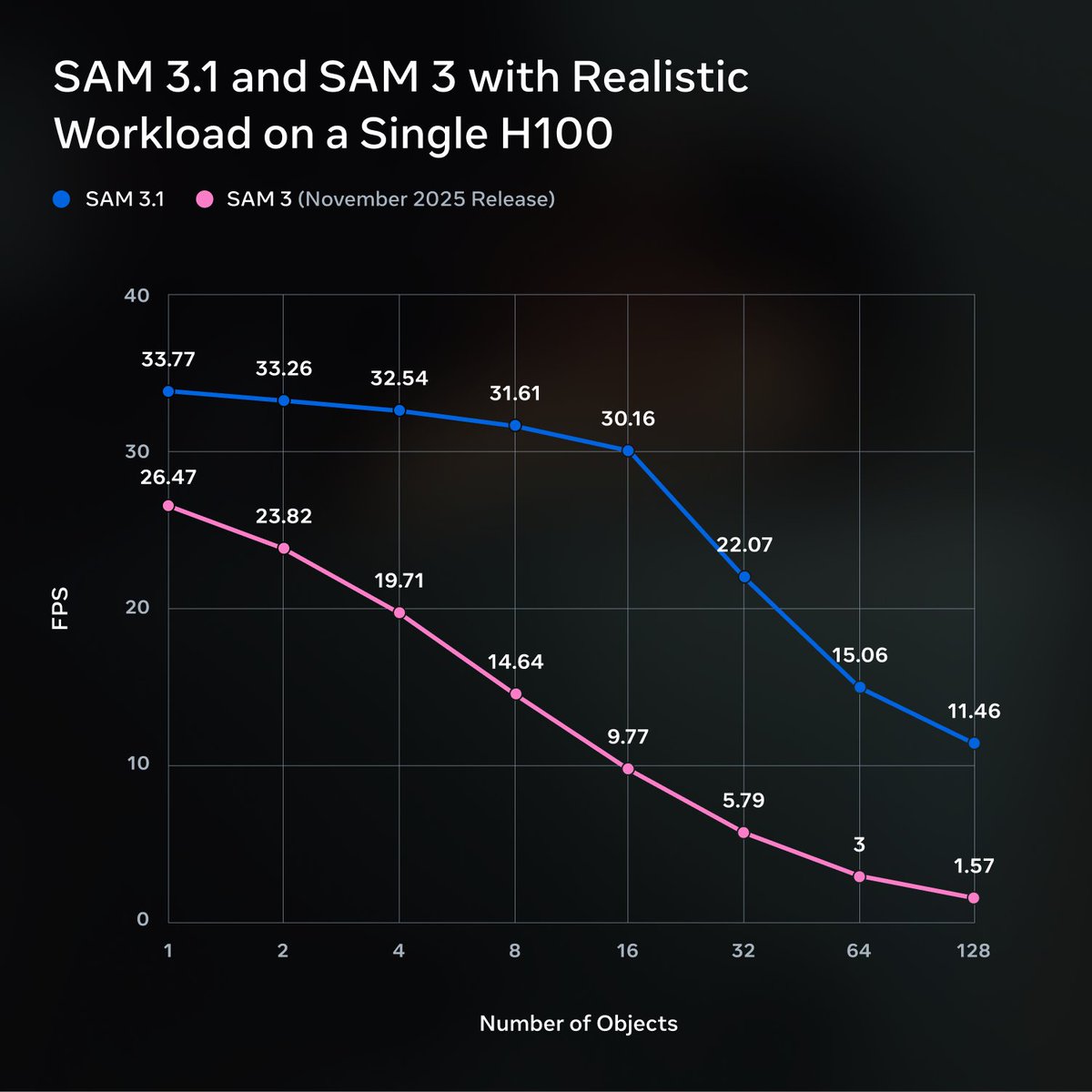

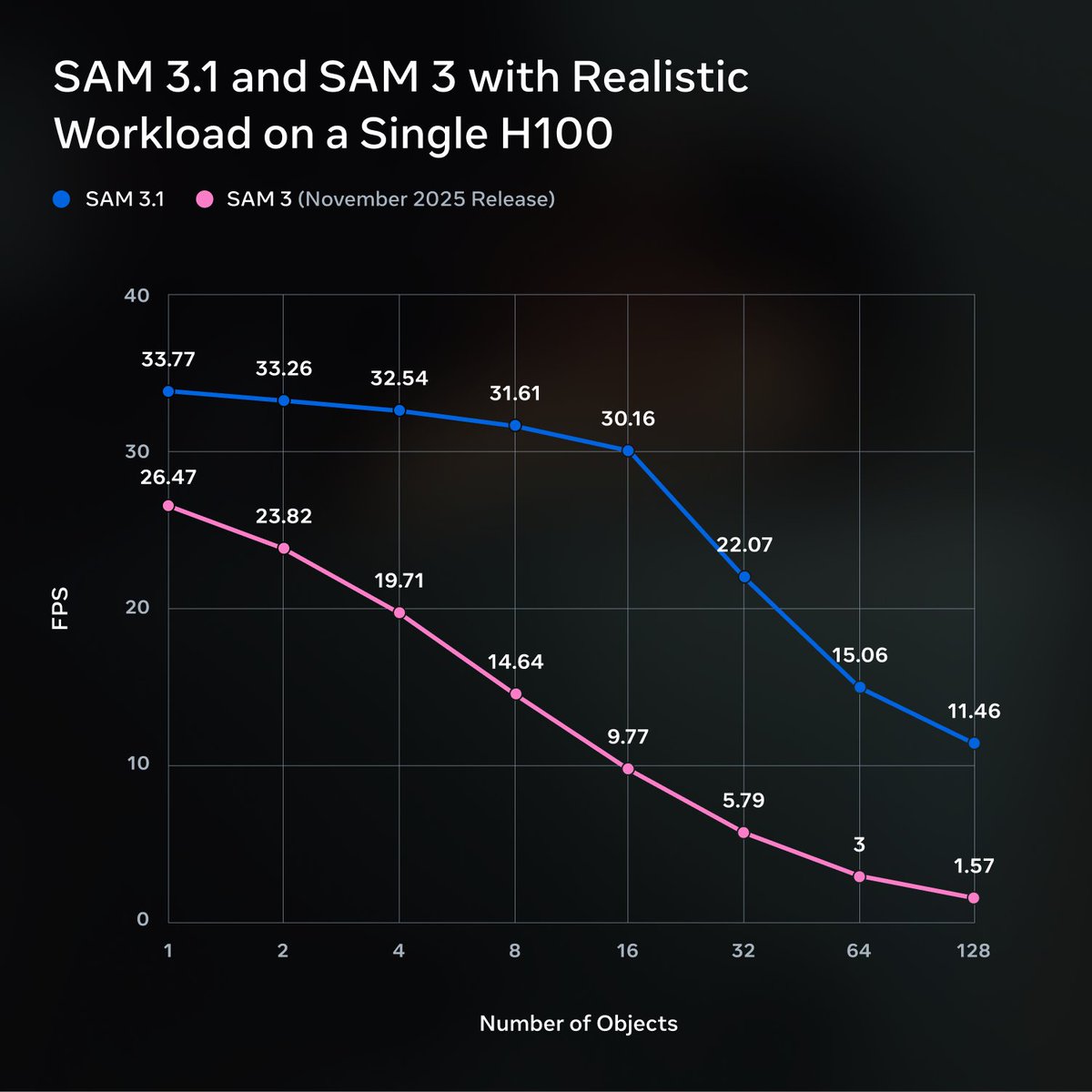

Very pumped that this blog is finally out: pytorch.org/blog/accelerat… 8x perf improvements over SAM in native PyTorch, no C++ needed. The blog is a fantastic case study of the different optimizations that matter:

1. torch.compile, and how to rewrite your graph breaks away
2. Write your quant and dequant APIs in pure torch and torch.compile them
3. SDPA as an easy wrapper to dispatch to either flash attention or memory-efficient attention kernels
4. 2:4 sparsity
5. Nested tensors to batch different-sized images
6. Some custom, trivial-to-integrate Triton kernels

Big thanks to @cpuhrsch for spearheading our v-team
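For readers unfamiliar with item 4: the 2:4 sparsity pattern means that in every group of four contiguous weights, at most two are nonzero. The snippet below is a minimal plain-Python sketch of that pattern only, not the PyTorch implementation from the blog. The function name `prune_2_of_4` is hypothetical, and picking survivors by magnitude is an assumed (though common) heuristic.

```python
def prune_2_of_4(row):
    """Zero the two smallest-magnitude values in each group of four.

    Hypothetical helper for illustration; magnitude-based selection is an
    assumption, not something stated in the post.
    """
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = []
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # Indices of the two largest magnitudes; the sort is stable, so ties
        # resolve to the earlier index.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return pruned


weights = [0.1, -0.9, 0.4, 0.05, 2.0, -0.3, 0.0, 1.5]
sparse = prune_2_of_4(weights)
print(sparse)  # [0.0, -0.9, 0.4, 0.0, 2.0, 0.0, 0.0, 1.5]
```

Because the zeros land in a fixed 2-out-of-4 layout, hardware with sparse matrix-multiply support can skip them, which is where the speedup comes from.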