Dan Roberts

@danintheory

Scientist @OpenAI. Prev. co-founder @diffeo, acquired by @salesforce // co-authored The Principles of Deep Learning Theory // studied gravity.

BREAKING: Iran says it will bomb Palantir, Oracle, and other major Western firms, declaring them “legitimate targets.”

@ManifoldBio and @NVIDIAHealth announce a joint study validating Proteina-Complexa, NVIDIA's latest BioNeMo model for protein binder design.

SCOOP: The Pentagon has formally notified Anthropic that it’s deemed the artificial intelligence company and its products a risk to the US supply chain, according to a senior defense official. bloomberg.com/news/articles/…

I think this one needs no further explanation.

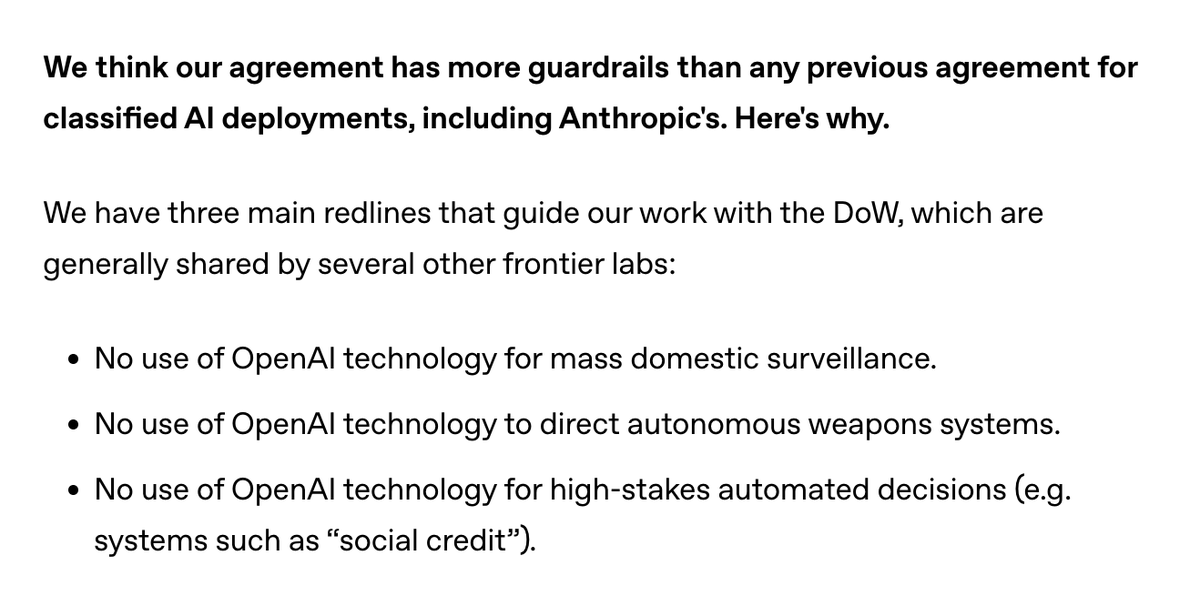

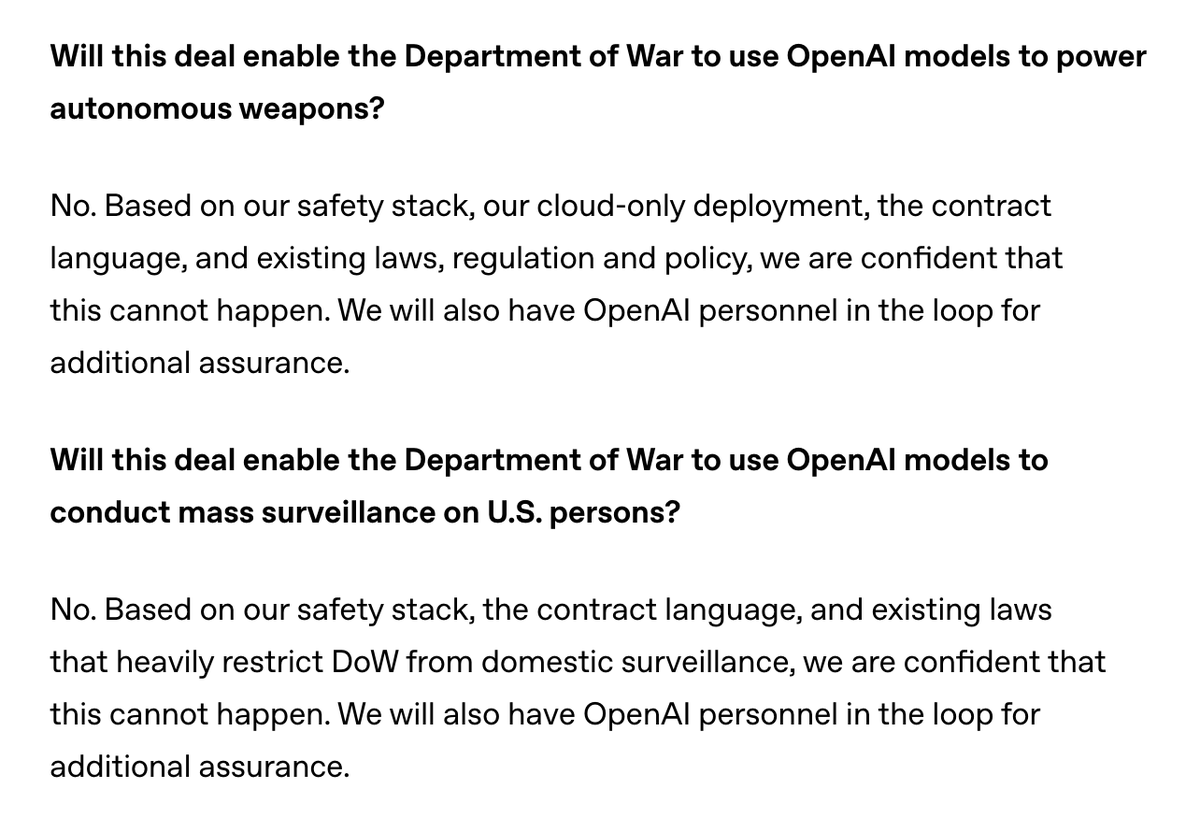

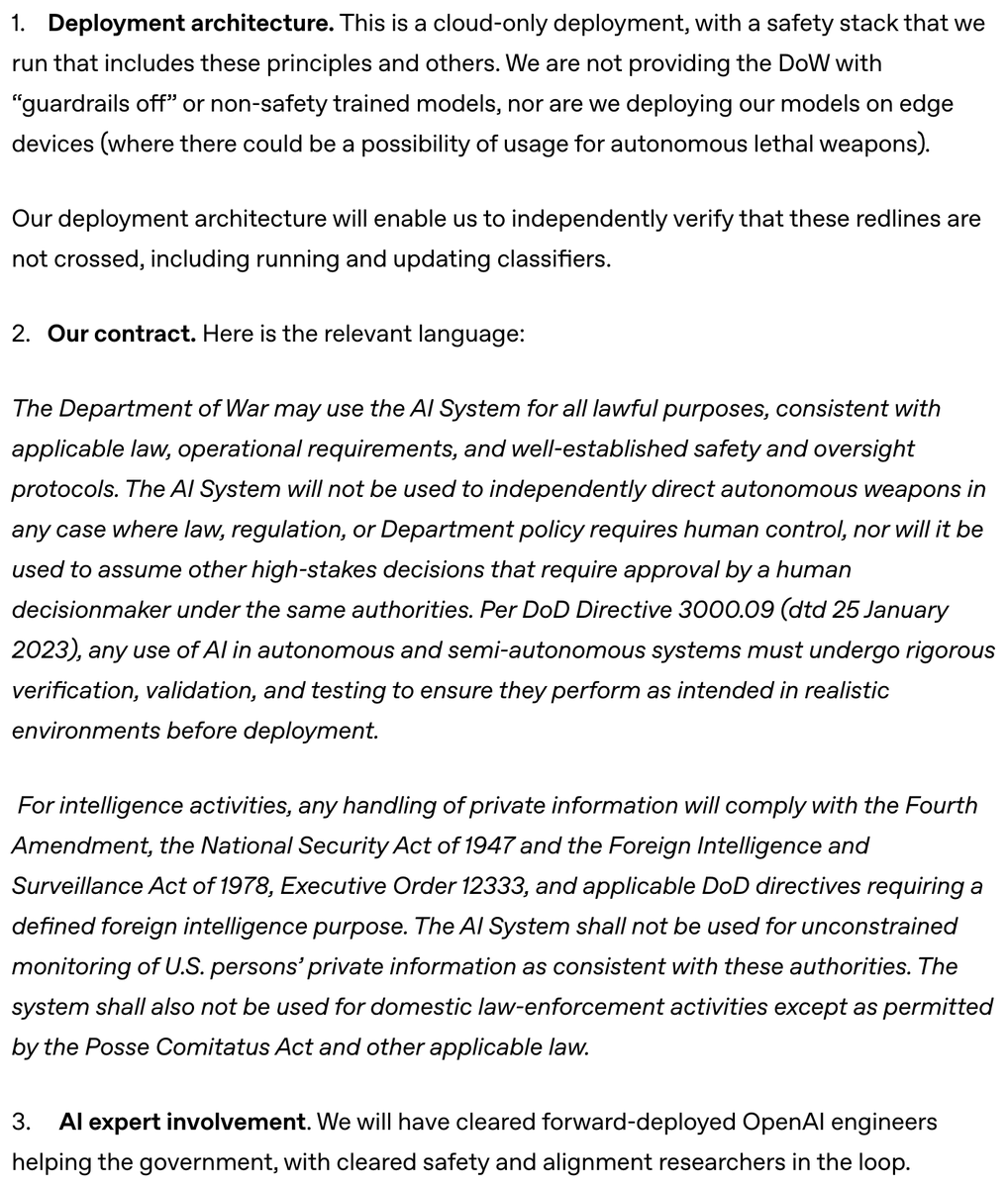

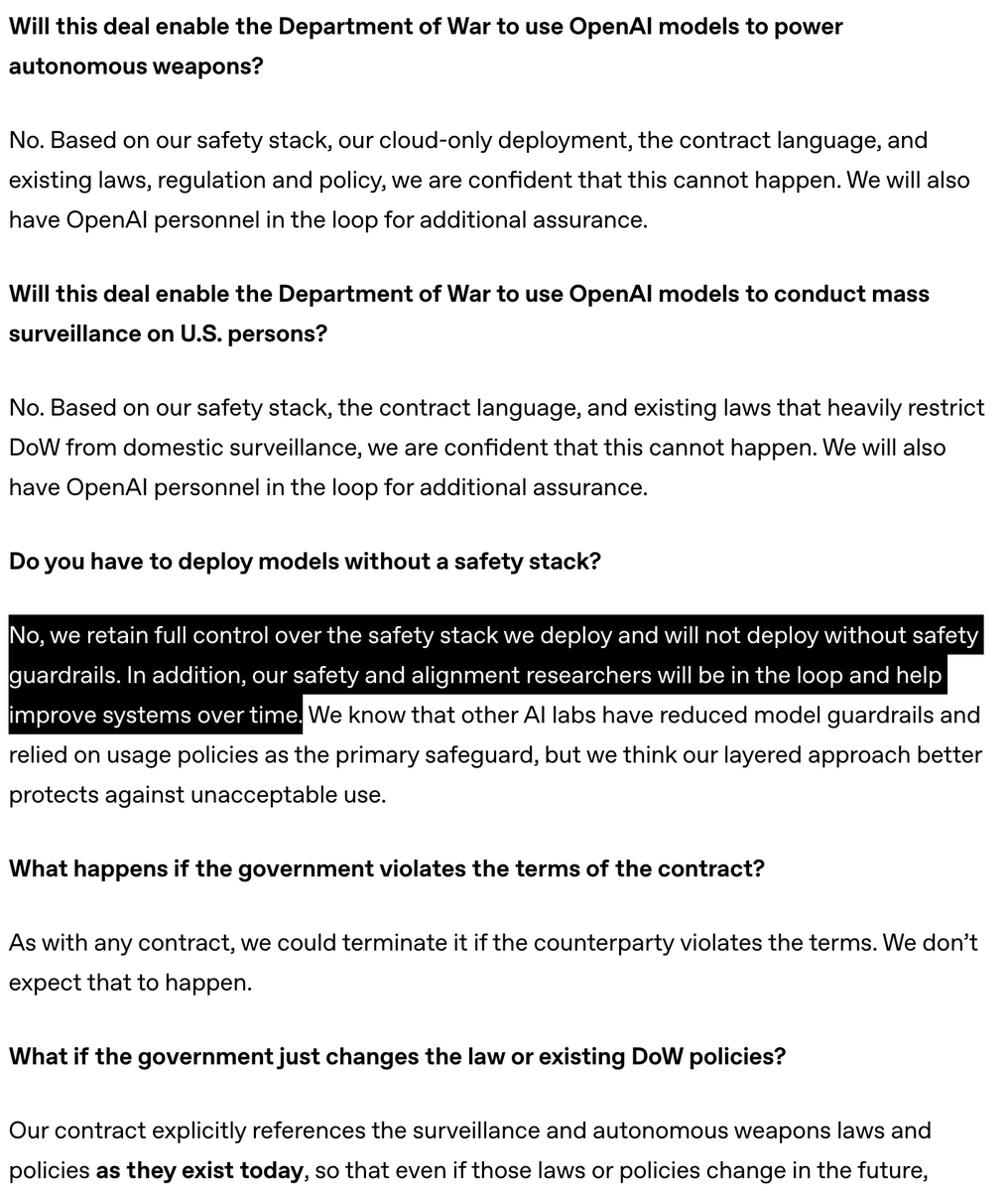

Yesterday we reached an agreement with the Department of War for deploying advanced AI systems in classified environments, which we requested they make available to all AI companies. We think our deployment has more guardrails than any previous agreement for classified AI deployments, including Anthropic's. Here's why: openai.com/index/our-agre…