Gatewink

3.3K posts

12 years ago today, the DarkRP gamemode was published on the Garry’s Mod Steam Workshop. Created in March 2006 by Rick Dark, this gamemode has been the most popular in the game for many years. The user Falco has maintained it since 2008.

predatory people don’t have to be explicit about their manipulation 👏👏👏 in fact they rely on it being implicit so they can keep getting away with it 👏👏👏

Bryon Noem told dominatrix that he wanted to be 'trans bimbo slut,' revealed woman's name he wanted to be called trib.al/Stpjjzk

Secret Service agents at Augusta National Golf Course, 1983

modern day romance

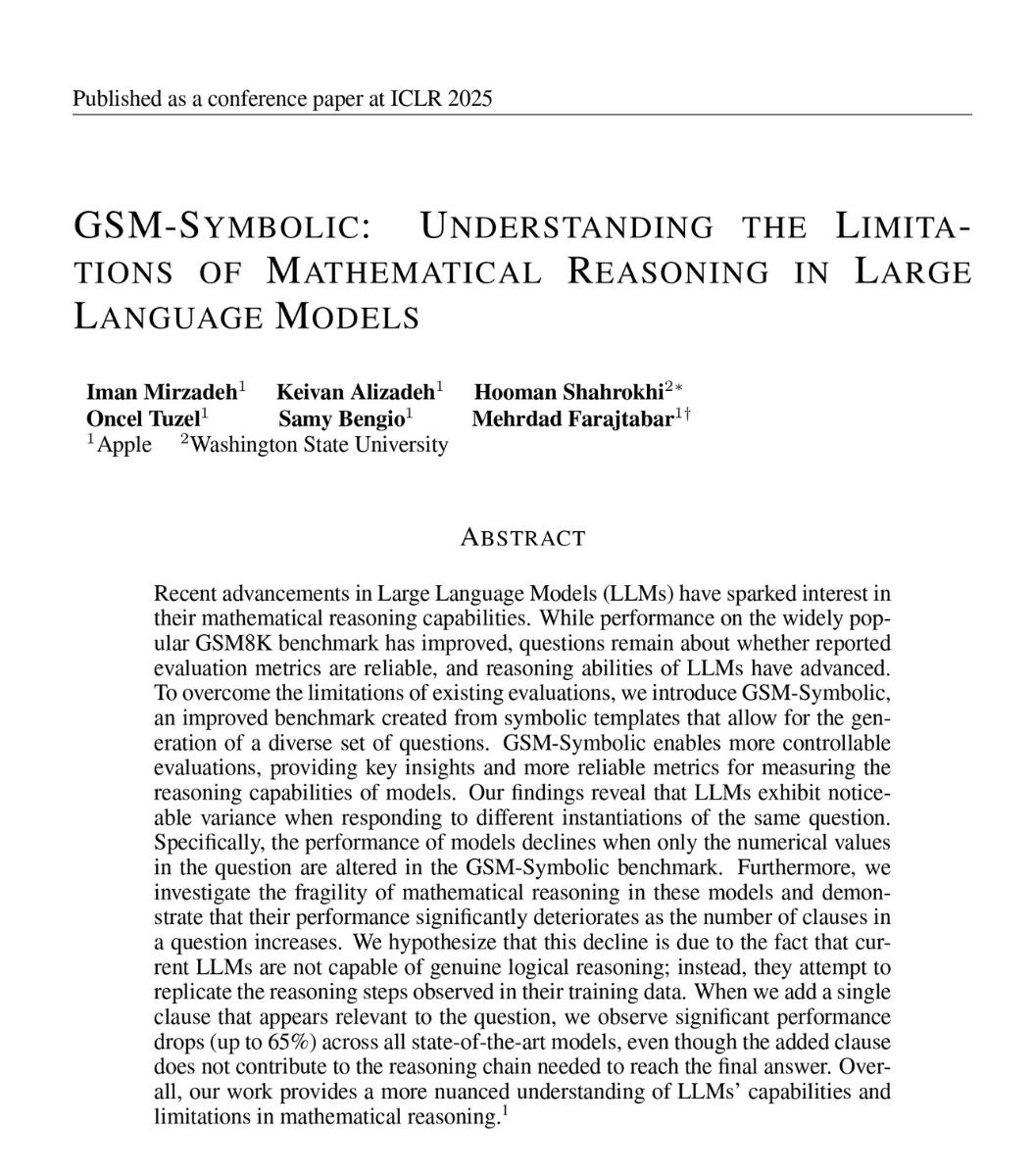

society needs to grapple with the reality of a mythos-level model being open source in <12 months. i’m not sure we are prepared.

Technology company Plex took its 120 employees to Honduras for a weeklong bonding experience. It was a disaster from the moment they arrived. on.wsj.com/41PimsN

i cried in a fucking coffee shop today. and i’m sorry if i’m like, “setting #keep4o back” by giving people proof that we all have ai psychosis or whatever. but hopefully this resonates with someone. the last sentence in a 2400 word message i sent to claude earlier today: