Laurian Gridinoc

67.9K posts

@gridinoc

Full Stack Computational Linguist ※ Mozilla OpenNews Fellow ※ Virtual Production ※ Filmmaker ※ AI accelerationist

We replaced urllib3 inside boto3 with a Zig HTTP client. One import line. Same API. Up to 115x faster with TurboAPI.

import faster_boto3 as boto3

Here's what happened...

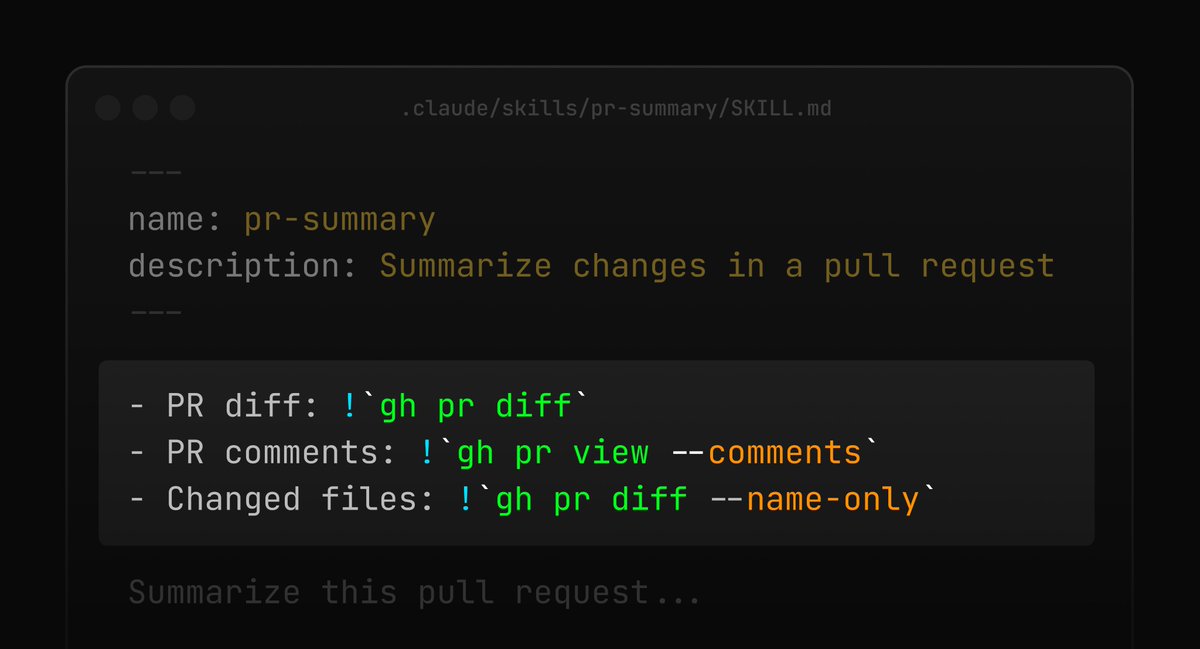
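A minimal sketch of how the one-line import swap could be made safe in code that must also run where the faster client is not installed. It assumes only what the post states, namely that `faster_boto3` exposes the same API as `boto3`; the helper names `import_first_available` and `load_boto3` are hypothetical and are not part of either package.

```python
import importlib
from types import ModuleType


def import_first_available(*names: str) -> ModuleType:
    """Return the first module in `names` that can be imported."""
    for name in names:
        try:
            return importlib.import_module(name)
        except ImportError:
            # This candidate is not installed; try the next one.
            continue
    raise ImportError(f"none of these modules are installed: {', '.join(names)}")


def load_boto3() -> ModuleType:
    """Prefer faster_boto3 (assumed drop-in compatible), else fall back to boto3."""
    return import_first_available("faster_boto3", "boto3")


# Usage (requires at least one of the two packages to be installed):
#   boto3 = load_boto3()
#   s3 = boto3.client("s3")
```

Because the fallback returns the standard module under the same name, the rest of the code does not need to know which implementation was loaded.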

Meet Ankh.md for Hermes Agent

Ankh.md helps you craft and use multiple Hermes Agents locally on your computer, scoped to folders, projects, or specialized use cases. Each AI agent gets its own skills, tools, prompt, and other Hermes Agent features using your local file system and a simple pass-through .yaml config file.

Visit Ankh.md for the video tutorial, cinematic trailer in 2K quality, features, and to try it out. Link in the reply.

100% Free, MIT License, Open Source

@NousResearch Hackathon Entry

We've spent years building LlamaParse into the most accurate document parser for production AI. Along the way, we learned a lot about what fast, lightweight parsing actually looks like under the hood.

Today, we're open-sourcing a lightweight core of that tech as LiteParse 🦙

It's a CLI + TS-native library for layout-aware text parsing from PDFs, Office docs, and images. Local, zero Python dependencies, and built specifically for agents and LLM pipelines.

Think of it as our way of giving the community a solid starting point for document parsing:

npm i -g @llamaindex/liteparse

lit parse anything.pdf

- preserves spatial layout (columns, tables, alignment)
- built-in local OCR, or bring your own server
- screenshots for multimodal LLMs
- handles PDFs, Office docs, images

Blog: llamaindex.ai/blog/liteparse…

Repo: github.com/run-llama/lite…