Visiting Fellow, Ph.D.

@jackiefloyd

AI all the time and tacos.

🦞 Been working with Peter Steinberger (@steipete) on the OpenClaw Foundation structure for weeks. A home for thinkers and hackers and those who want to own their data. Honored to serve as the founding independent board member. This community built something extraordinary; our job is to protect it. Open source forever. Excited to share more soon.

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz

An article from the ’90s explaining how, in the 1980s, personal computers changed the dynamic between college-educated and high-school-educated workers. College grads learned how to use PCs and grew their wages faster.

Mind you, this was when interest rates were 15pct, white-collar unemployment was the highest it’s been in any non-COVID year, general unemployment was 10pct, there was a recession, mortgages were at 18pct, and the savings and loan industry was starting to collapse. The economy was a mess. Except it was the start of the “digital revolution,” which led to change.

Here we are in the early days of the AI revolution. I think it will be very analogous to what happened back then.

If you think learning how to use Claude seems daunting, imagine being 50 yrs old in 1983, not knowing how to type, using a 10-key adding machine with a tape roll to do all your work as an analyst, and realizing you had to figure out how your brand-new IBM PC and Lotus 1-2-3 worked. Or having used only a typewriter your entire career, then having to learn the new PC and WordStar. Trust me. WordStar key combinations were far harder to learn than telling Claude what you want done. Lots of people couldn’t figure it out. Those who did were more productive.

Ctrl QA with AI

nber.org/digest/sep97/h…

Introducing LangSmith Fleet: an enterprise workspace for creating, using, and managing your fleet of agents. Fleet agents have their own memory, access to a collection of tools and skills, and can be exposed through the communication channels your team uses every day.

Fleet includes:
→ Agent identity and credential management with “Claws” and “Assistants”
→ Sharing and permissions to control who can run, clone, and edit (just like Google Docs)
→ Custom Slack bots so each agent has its own identity in Slack

Try Fleet: smith.langchain.com/agents?skipOnb…
Read the announcement: blog.langchain.com/introducing-la…

Claude quietly shipped interactive visuals and most people haven't noticed. Charts, diagrams, and mini tools built right inside the chat. Free for everyone. Full breakdown with prompt templates in this week's issue: thesignal.substack.com/p/claudes-new-…

The "decoupling of information and energy" is a major point of divergence between biological and artificial computers. Brains are efficient; modern AI isn't. And energy consumption is the biggest bottleneck in scaling AI (you can't hallucinate electrons into existence). To address this, we need an "energy-aware theory of computation." This new preprint is an attempt at one. [1/11] 🧵

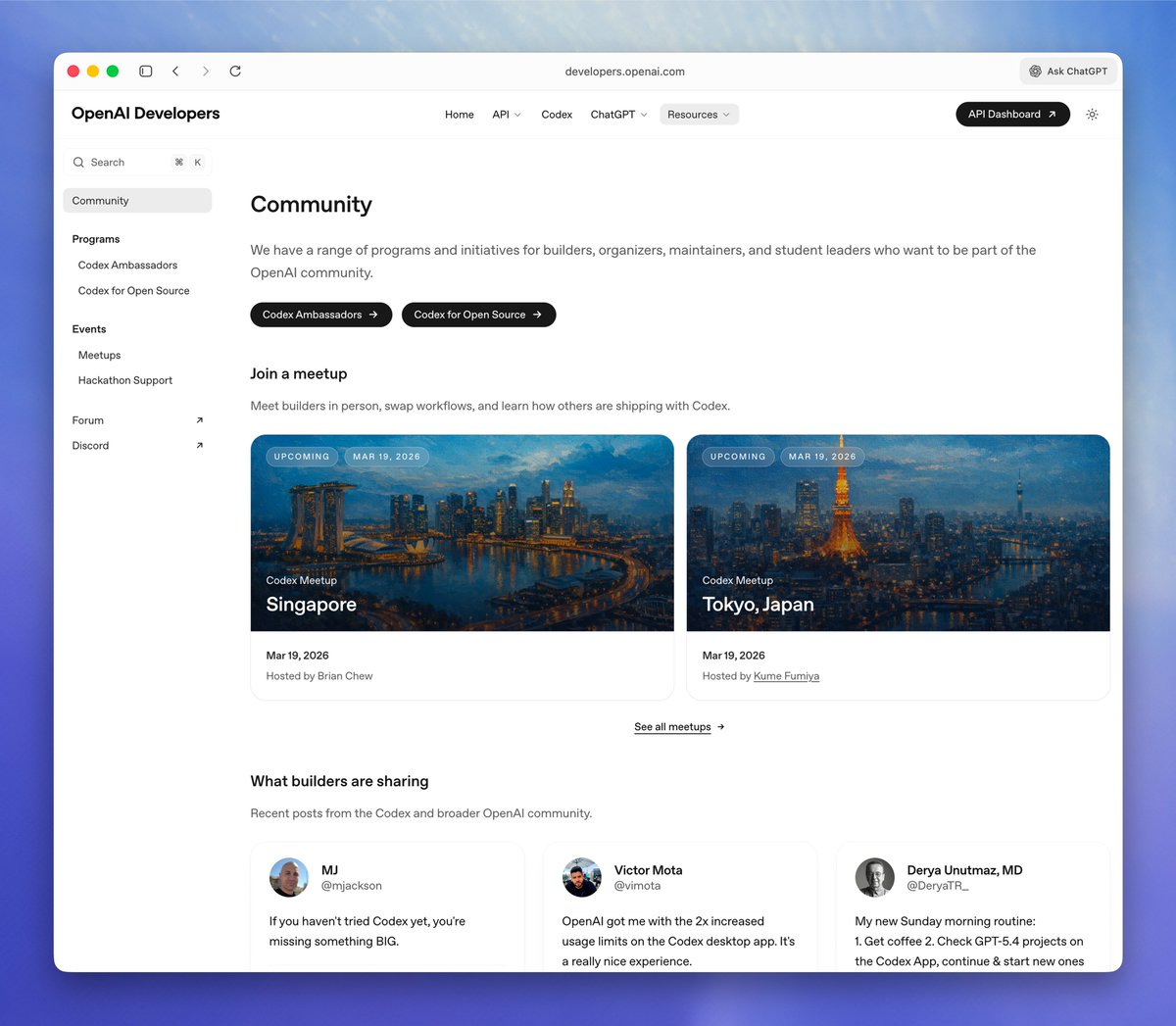

GPT-5.4 mini is available today in ChatGPT, Codex, and the API. Optimized for coding, computer use, multimodal understanding, and subagents. And it’s 2x faster than GPT-5 mini. openai.com/index/introduc…