Josh Robb

@josh_robb

operator, code nanny, product builder, unicorn baiter, @tendnz

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers.
🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth.
🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale.
🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead.
🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains.
🔗 Full report: github.com/MoonshotAI/Att…
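
To make the contrast in the announcement above concrete, here is a minimal toy sketch in plain Python. It is not the implementation from the report: the function names, the single `query` vector, and the scaled dot-product scoring are all assumptions chosen for illustration. It only shows the general idea of swapping a fixed, uniform sum over earlier layer outputs for softmax weights that depend on those outputs.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def uniform_residual(layer_outputs):
    """Standard residual stream: every earlier output is added with weight 1."""
    dim = len(layer_outputs[0])
    return [sum(out[j] for out in layer_outputs) for j in range(dim)]


def attention_residual(layer_outputs, query):
    """Hypothetical attention-style aggregation over earlier layer outputs.

    Assumption: each earlier output serves as both key and value, and
    `query` stands in for a learned per-layer vector.
    """
    dim = len(query)
    scores = [
        sum(q * x for q, x in zip(query, out)) / math.sqrt(dim)
        for out in layer_outputs
    ]
    weights = softmax(scores)
    mixed = [
        sum(w * out[j] for w, out in zip(weights, layer_outputs))
        for j in range(dim)
    ]
    return mixed, weights


# Three made-up 2-d "layer outputs" and a made-up query vector.
outputs = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
query = [1.0, 0.0]

summed = uniform_residual(outputs)
mixed, weights = attention_residual(outputs, query)
```

In this toy, the uniform sum grows with every layer added, while the attention-weighted mix is a convex combination and stays within the range of the individual outputs. That is one way to read the "dilution and hidden-state growth" point, under the assumptions stated here.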

One popular argument from AI accelerationists in the 2010s was that there would be no way an artificial superintelligence could escape the box we would put it in.

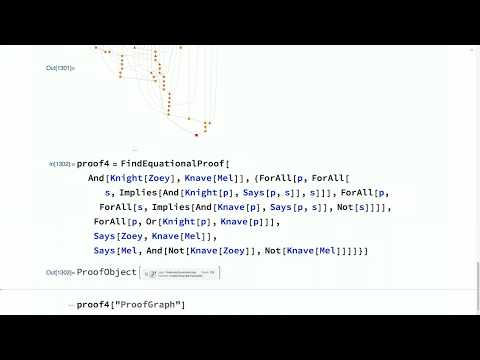

Me: "Hey Claude Code, why don't you just write out the binary executable instead of source+compile?" Claude: "Sure!" And it embarks on a surprising adventure. I highly recommend this.

millennial gamers are the best prepared generation for agentic work, they've been training for 25 years

Geoffrey Hinton says mathematics is a closed system, so AIs can play it like a game. They can pose problems to themselves, test proofs, and learn from what works, without relying on human examples. “I think AI will get much better at mathematics than people, maybe in the next 10 years or so.”

@tech_optimist RLMs are 'just' a new DSPy Module, replacing dspy.CoT, dspy.ReAct, etc over Signatures. They create even stronger opportunities for optimization, because they're extremely structured (even more than CoTs) and can be "compiled" into a frozen program instead of dynamic execution.

Recursive Language Models is now on arXiv. @a1zhang worked hard to catch a Dec 31st, 2025 timestamp! Most people (mis)understand RLMs to be about LLMs invoking themselves. The deeper insight is LLMs *interacting with their own prompts as objects*. Read the thread and paper: