Jesse

3.6K posts

@jtomchak

Firm believer that technology is awesome, and we can do better. Podcasts make the world a happier place💖🎙 @[email protected]

iPhone: 33.517490,-112.058479 Joined March 2009

995 Following 482 Followers

Jesse retweeted

If you are a @typescript fan who HATES running into Errors, you'll LOVE coding with @elmlang.

Learn more about why every developer should give it a try in this conversation with @lindsaykwardell!

youtube.com/watch?v=vvJbUU…

@jtomchak Was surprised to go through all the mental gymnastics trying to understand them to land on such a simple mental model

@cannikin @RedwoodJS I just saw jobs with v8. Y’all are doing fantastic work. Keep it up

@jtomchak Have you seen @RedwoodJS ? We’ve got all that built in and more!

NEW: node-fetch-server

Write servers for Node.js using the web fetch API primitives, like Request and Response 👍

$ npm i @mjackson/node-fetch-server

Check it out 👇

github.com/mjackson/remix…
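As a rough illustration of what "fetch API primitives" means here, the sketch below is a plain function that takes a web `Request` and returns a web `Response`. The name `handler` and the `/hello` route are made up for this example, and it assumes a runtime where `Request`, `Response`, and `URL` are globals (Node.js 18 or later). How the package attaches such a function to a Node.js HTTP server is documented in its README and is not shown here.

```typescript
// A fetch-style handler: web Request in, web Response out.
// `handler` and the /hello route are hypothetical names for illustration.
function handler(request: Request): Response {
  const url = new URL(request.url);

  if (request.method === "GET" && url.pathname === "/hello") {
    return new Response("Hello from a fetch-style handler", {
      status: 200,
      headers: { "Content-Type": "text/plain" },
    });
  }

  // Anything else falls through to a 404.
  return new Response("Not found", { status: 404 });
}
```

Because the handler only touches standard web objects, it can be exercised directly with `handler(new Request("http://localhost/hello"))` without starting a server.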

Jesse retweeted

for those of you interested in business positioning stuff here's how we think about things at sst:

we are in the game of venture scale and to be in this game you need to be making asymmetric bets

your positioning has to be counter to the market, so that if you are right, you not only win but you win big

what's currently going on in the devtools space is an attempt at unbundling the cloud.

the cloud has been the best business the world has ever seen and companies are slicing off pieces of it to spin up dedicated efforts with a narrower scope

this is where ALL of the funding is going - you see some crazy rounds for companies in this category

we are betting against this

a few of these companies do have value - they tend to focus on high ops burden problems like databases

but most are entirely focused on "DX" - meaning they provide a simplified UI / experience that is 10x easier to get started with than the underlying resources

we think this will fail for a few reasons

1. a lot of these "DX improvements" come at the expense of capability - especially when delivered as a UI

a serious customer will hit some limit, some new property will have to be exposed in the UI, repeat over 100 customers and you're back to something complicated - we've all seen this happen

2. having your data split between multiple third party services quickly erodes any benefits of using them in the first place. all your data should be joinable. serious companies actually pay attention to things like performance and egress costs

3. the scope of these products is too narrow - all of these companies are complementary and NOT competing with each other. that's a bad thing - it means even if they execute well there's no path to dominating a market - it's not venture scale

4. there is a massive gravity to the large clouds - the companies that can actually give you venture scale revenue are on aws and so are any serious startups

they are reluctant to use anything hosted externally so if you're delivering DX improvements via a hosted service, they will largely just go without

so we position ourselves differently - instead of wrapping the major clouds as a hosted service, we try to make it easier for them to be used directly

we deliver this as code because code can do progressive disclosure. complexity can be both hidden up front and be accessible later - less chance people will need to eject
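One way to picture "progressive disclosure" in code is a component with sensible defaults plus an optional escape hatch for the full configuration. The sketch below is not SST's actual API; `defineBucket`, `BucketConfig`, and the `transform` option are hypothetical names chosen only to illustrate the idea.

```typescript
// Hypothetical full configuration that the simple API hides by default.
interface BucketConfig {
  name: string;
  versioning: boolean;
  publicAccess: boolean;
  lifecycleDays: number | null;
}

// The caller must give a name; everything else is optional.
interface BucketArgs {
  name: string;
  // Escape hatch: receives the defaults and may return a modified config.
  transform?: (config: BucketConfig) => BucketConfig;
}

function defineBucket(args: BucketArgs): BucketConfig {
  const defaults: BucketConfig = {
    name: args.name,
    versioning: true,
    publicAccess: false,
    lifecycleDays: null,
  };
  return args.transform ? args.transform(defaults) : defaults;
}

// Simple case: the complexity stays hidden.
const simpleBucket = defineBucket({ name: "uploads" });

// Advanced case: the same call exposes the underlying settings when needed.
const tunedBucket = defineBucket({
  name: "logs",
  transform: (config) => ({ ...config, lifecycleDays: 30 }),
});
```

The second call changes one setting and inherits the rest, which is the "less chance people will need to eject" property described above.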

our scope is wide as hell - if some new devtool company does have a good idea, we will copy it and make it available as another bullet point in our feature list

and it'll be free - driving the value to $0

will it be as good? no - you can only get to the pinnacle of DX if you create a hosted service. but it will be fully configurable, run on your infra, and be much lower friction to add on than signing up for something new

we think that is what matters for the companies with actual $$$ to spend

this is also why we're open source - it's not just some feel good thing. OSS is particularly good at covering a wide scope - we do not have bandwidth to make sure python on aws is being packaged correctly, but someone in our community does

so if we happen to be right on all this we don't just take out a single competitor, we collapse the entire space with one all encompassing OSS effort

this is what an asymmetric bet looks like - you don't have to agree that the bet will work out but you can see how it's a bet worth making

@dustinsgoodman The time limit is a real restriction. Fly’s firecracker implementation means you can put your RoR app in “serverless” while keeping 1 or more machines alive in production. This simple addition removes sooo much app infrastructure complexity

@jtomchak I know on AWS you can bump the memory limit. That time limit is the real killer for me.

Jesse retweeted

@SamualTNorman I’m just complaining about some of the limitations of serverless functions

@jtomchak what is this subtweeting? 50 MB of what? Network traffic? RAM?

@jtomchak You’re really gonna make me go back and look at PHP again aren’t you? Sigh, fine.

whatever you're doing, just be more like Laravel

cloud.laravel.com

> No more wasting time on DevOps minutiae. No more tinkering with server configuration files, load balancers, or database backups. No more headaches. ... it’s a reality.

@ThisDotMedia @reactjs I had an absolute blast. Thank you so much for having me. 🚀

Jesse retweeted

I’ve been on a project at work for 5+ years now, and I’d say some of the biggest technical pain points have included dynamodb, serverless, api gateway. Might be a skill issue, but if I did it all over I’d say just always use postgres and deploy containers to a managed service until there is a reason not to for most projects.

Dynamo is great when you know exactly what you’re building from the start. It’s also good if from the start you know you’ll be dealing with a lot of data that can’t work well in postgres (guess what, sql has been handling lots of data for a long time). Dynamo becomes a pain when you’re doing agile development. SQL is a lot more forgiving when requirements change. Dynamo takes forever to loop over all your entries and update them. Updating 10 million records takes almost an hour, and that’s including doing parallel scans. “Bro, just increase your provisioned WCU!” Sure, but you know it takes at least 20-30 minutes for that to finish updating your instance? Doing the same update using sql takes 5 min on a 2 cpu machine with 8gb memory. Your inability to easily query for data in dynamo is bad. “Bro, just use GSI!” Ok, now your cost for writes is doubled, and each gsi is async updated, so again when you need to update all entries, it takes time for your GSI to update fully. Accidentally picked a bad partition or sort key? Have fun writing a bunch of code just to migrate your data to a new table. The dynamo docs say “know your access patterns before you make your single table pattern”… most product owners can’t even describe what they want, and you expect us to design our access patterns correctly from the start?

Lambda is great when you have a specific need to quickly scale from 0 to 1000s of isolated workers. For example, we have a use case where we need to loop over hundreds of data entries and generate unique pdfs for each one. Lambda shines with this, but now you’ll basically need to use sqs or a queue system to orchestrate it all. Btw sqs has its own set of gotchas, such as events might be delivered twice, so you better write your code to make sure you don’t double process the same event. Luckily lambda supports running containers now, but previously it was a huge pain when you installed a package that requires a node-gyp binary, which means you need to build that inside the correct docker image that is compatible with the lambda runtime and then create a lambda layer containing those binaries. Save yourself the hassle and just always use containers for all running code. Probably just stop using node or JavaScript on the backend if possible, it’s pretty awful. 100% don’t use a mono lambda for your api.
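The "delivered twice" problem mentioned above is usually handled by making the consumer idempotent. The following is a minimal sketch, not a production pattern: the `QueueMessage` shape and `createIdempotentHandler` are hypothetical names, and the in-memory `Set` stands in for a durable store shared by all workers (separate Lambda instances would not share memory, so a real system would need something like a database with a conditional write).

```typescript
// Hypothetical message shape: a stable ID plus a payload.
interface QueueMessage {
  messageId: string;
  body: string;
}

// Wraps `work` so that a message ID is only ever processed once.
// Returns true if the message was processed, false if it was a duplicate.
function createIdempotentHandler(work: (body: string) => void) {
  // Assumption: stands in for a durable, shared deduplication store.
  const processedIds = new Set<string>();

  return (message: QueueMessage): boolean => {
    if (processedIds.has(message.messageId)) {
      return false; // duplicate delivery: skip the work
    }
    work(message.body);
    // Record the ID only after the work succeeded, so a failure can be retried.
    processedIds.add(message.messageId);
    return true;
  };
}

// Usage: the same message arriving twice produces one PDF, not two.
const generatedPdfs: string[] = [];
const handleMessage = createIdempotentHandler((body) => {
  generatedPdfs.push(`${body}.pdf`);
});

const firstDelivery = handleMessage({ messageId: "m-1", body: "invoice-42" });
const redelivery = handleMessage({ messageId: "m-1", body: "invoice-42" });
```

Here `firstDelivery` is `true`, `redelivery` is `false`, and `generatedPdfs` holds a single entry.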

Api gateway is a pain and is typically used for putting a rest api in front of your lambdas if you want to make an api using a mono lambda. Works great until your lambda takes more than 30 seconds; api gateway will time out your requests. That means you need to instead go async events and figure out another solution to notify your users (websockets, sse) when the request is done. Have fun getting either to work on lambda. You’ll end up using api gateway v2 websockets, which has more gotchas. Connections auto timeout after 15 minutes, so you need to add ping pong logic, and there’s a max connection time of 2 hours, so again have fun writing more logic for those limits. Cold starts are a real issue as your code grows, which makes you find ways to lazy import functions if deploying a mono lambda api. Don’t forget deploying your lambda has a 250mb limit, which is the biggest pain in the ass. Again, just run containers on lambda if you must use them.
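The "go async" workaround described above generally means the request handler records a job and returns immediately, while a worker finishes it later and the client learns the result by polling or a push channel. Below is a minimal in-memory sketch of that shape. All names (`submitJob`, `completeJob`, `getJob`) are hypothetical, the `Map` stands in for a real database, and the worker and the websocket/SSE notification are left out.

```typescript
type JobStatus = "pending" | "done";

interface Job {
  id: string;
  status: JobStatus;
  result: string | null;
}

// Assumption: stands in for a durable job table.
const jobs = new Map<string, Job>();
let nextJobNumber = 1;

// Called by the request handler: store the job and return right away,
// well inside the gateway timeout (an HTTP API would answer 202 here).
function submitJob(): Job {
  const job: Job = { id: `job-${nextJobNumber++}`, status: "pending", result: null };
  jobs.set(job.id, job);
  return job;
}

// Called by the background worker once the slow work has finished.
function completeJob(id: string, result: string): void {
  const job = jobs.get(id);
  if (!job) throw new Error(`unknown job ${id}`);
  job.status = "done";
  job.result = result;
}

// Called by a status endpoint that the client polls.
function getJob(id: string): Job | undefined {
  return jobs.get(id);
}

// Usage: submit, observe "pending", let the worker finish, observe "done".
const submittedJob = submitJob();
const statusBefore = getJob(submittedJob.id)?.status;
completeJob(submittedJob.id, "report.pdf");
const statusAfter = getJob(submittedJob.id)?.status;
```

Polling is the simplest way to close the loop; pushing the completion over websockets or SSE, as the post suggests, replaces the `getJob` call but keeps the same submit/complete split.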

Add on top you’ll end up using terraform or another IaC tool just to get all this stuff deployed. SST is great, but if you think about it, they created it because we all admit deploying stuff to aws is a nightmare, especially lambda. Idk I’m just burned out on this entire ecosystem.

Just let me deploy a single go server that renders html at this point.

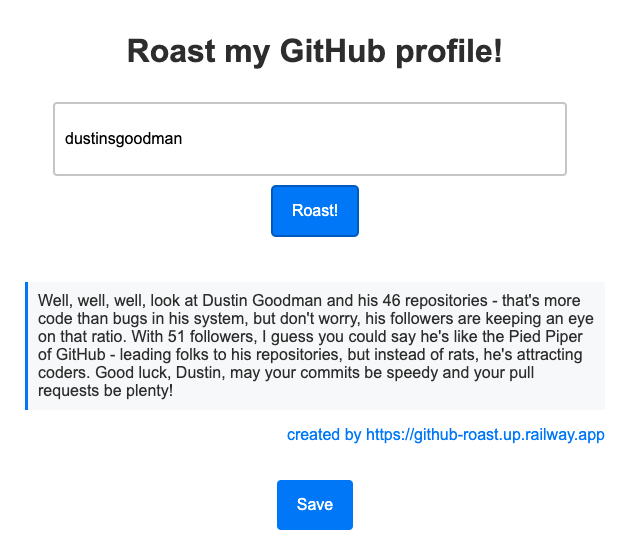

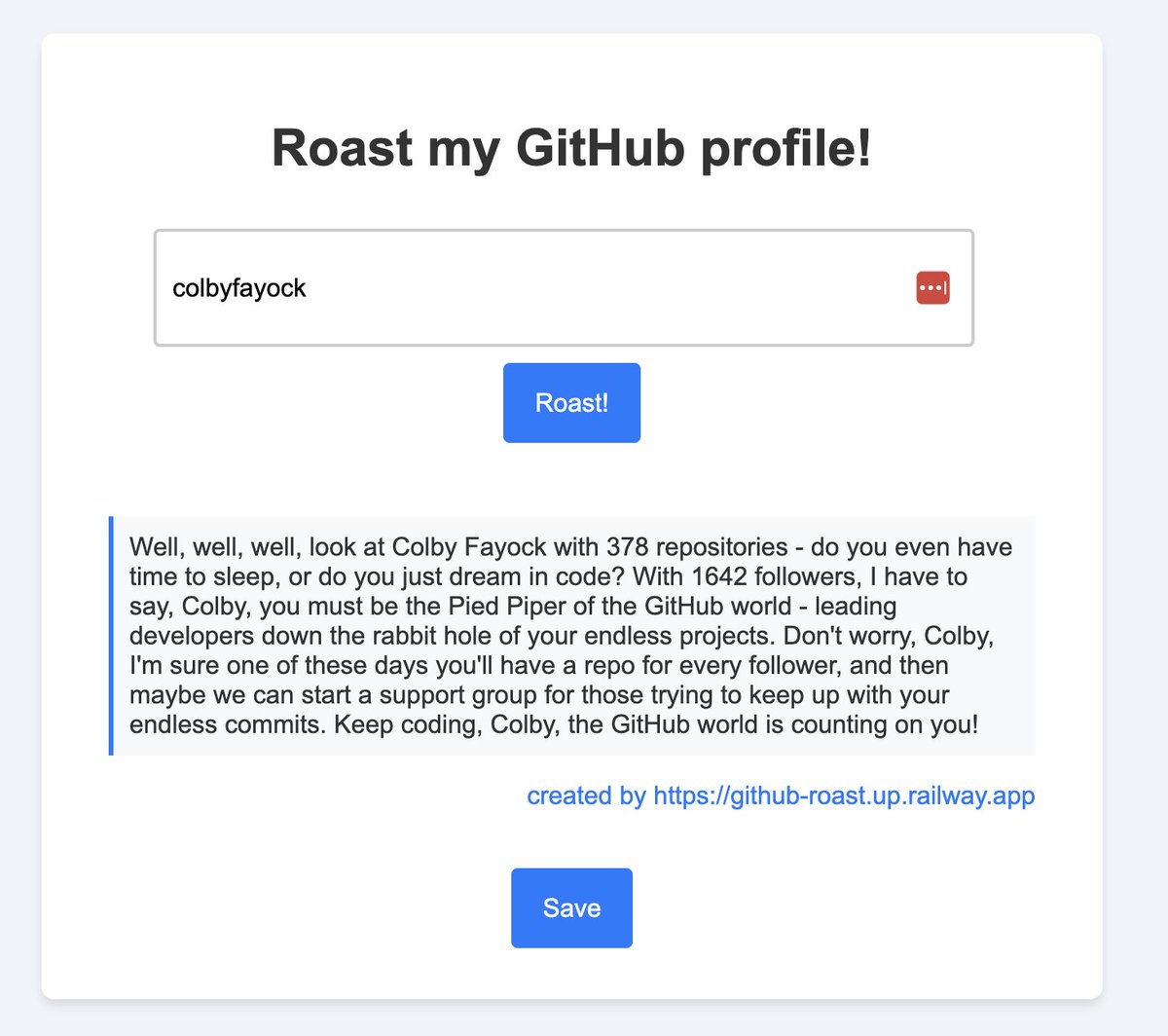

> Don't worry, Colby, I'm sure one of these days you'll have a repo for every follower

lmao so good @HaimantikaM
