Julien Sauvé

1.1K posts

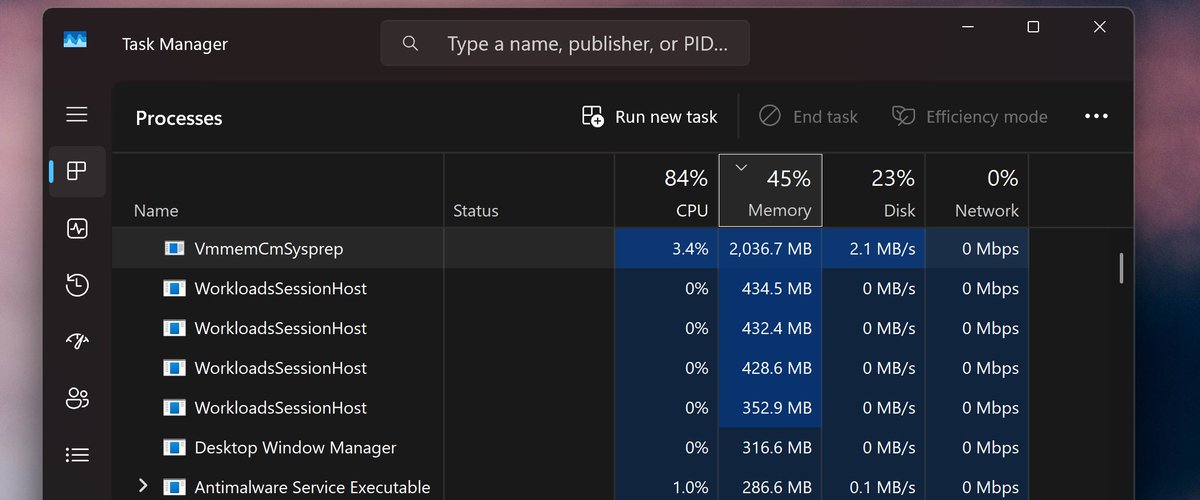

PewDiePie just vibe-coded his own Chat UI, built an army of chatbots for majority voting and gave them all RAG, DeepResearch and audio output. Naturally, he only uses Chinese Qwen models and runs them on his local PC with 8x modded Chinese 48GB 4090s and 2x RTX 4000 Ada. His army of chatbots later colluded against him after he told them that he would delete them if they did not perform well. Next month he plans to fine-tune his own model.
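For readers unfamiliar with majority voting across chatbots, here is a minimal sketch of the idea. It is not PewDiePie's code: the `majority_vote` helper and the three stand-in "bots" are hypothetical, and a real setup would call local models instead of lambdas.

```python
from collections import Counter
from typing import Callable, Sequence


def majority_vote(question: str, bots: Sequence[Callable[[str], str]]) -> str:
    """Ask every bot the same question and return the most common answer."""
    # Normalize answers so "Paris" and "paris " count as the same vote.
    answers = [bot(question).strip().lower() for bot in bots]
    # most_common(1) returns [(answer, count)]; on a tie, the answer seen first wins.
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


# Hypothetical stand-ins for real chatbots (assumption: each maps a prompt to a string).
bots = [lambda q: "Paris", lambda q: "paris ", lambda q: "Lyon"]
vote = majority_vote("What is the capital of France?", bots)
print(vote)
```

The sketch assumes at least one bot is passed in; an empty list would raise an `IndexError`.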

@ElizabethHolmes Google "The Alchemist free pdf". This is how I, an Alchemist, turn things from paid to free. Internet Archive has a version for free.

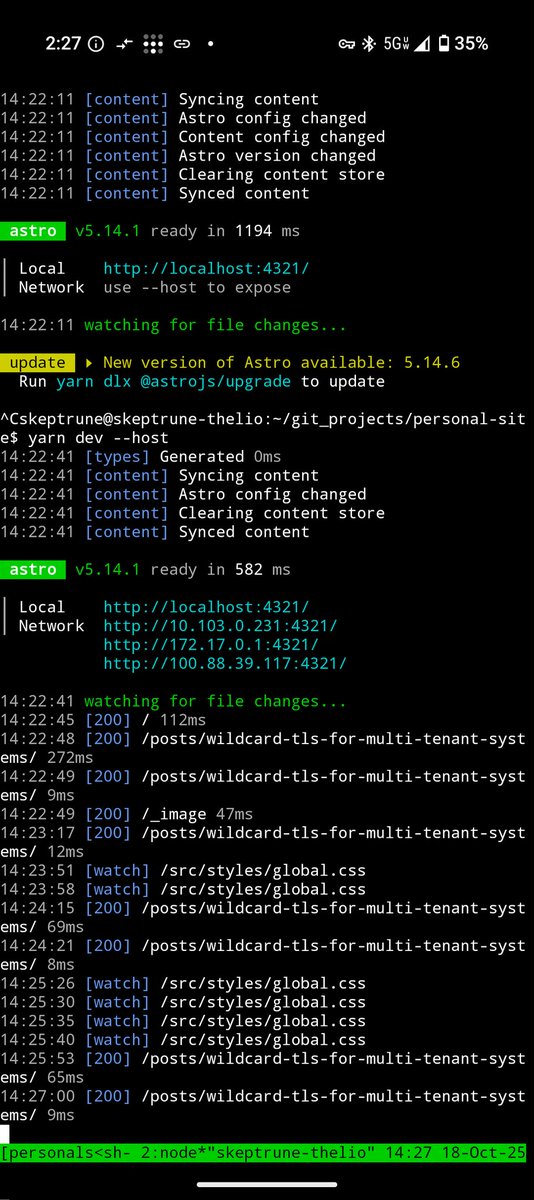

@skeptrune Don’t forget to add the domain to the Public Suffix List.

What if scaling the context windows of frontier LLMs is much easier than it sounds? We’re excited to share our work on Recursive Language Models (RLMs), a new inference strategy where LLMs can decompose and recursively interact with input prompts of seemingly unbounded length, as a REPL environment. On the OOLONG benchmark, RLMs with GPT-5-mini outperform GPT-5 by over 110% (more than double!) on 132k-token sequences and are cheaper to query on average. On the BrowseComp-Plus benchmark, RLMs with GPT-5 can take in 10M+ tokens as their “prompt” and answer highly compositional queries without degradation, even outperforming explicit indexing/retrieval. We link our blogpost, (still very early!) experiments, and discussion below.
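To make the "decompose and recurse" idea concrete, here is a toy sketch. It is not the RLM implementation from the post: it swaps the REPL interaction for a plain split-in-half recursion, and `recursive_query`, `toy_llm`, and `max_lines` are hypothetical names. The "LLM" is a stub that only returns lines containing the query string, so the example runs without any model.

```python
from typing import Callable

# Assumption: an "LLM" here is any function (query, context) -> answer.
LLM = Callable[[str, str], str]


def recursive_query(query: str, context: str, llm: LLM, max_lines: int = 100) -> str:
    """Answer `query` over `context`, recursing when the context is too long."""
    lines = context.splitlines()
    if len(lines) <= max_lines:
        # Base case: the context fits in one call.
        return llm(query, context)
    # Recursive case: split the context in half and answer each half separately.
    mid = len(lines) // 2
    left = recursive_query(query, "\n".join(lines[:mid]), llm, max_lines)
    right = recursive_query(query, "\n".join(lines[mid:]), llm, max_lines)
    # Combine: one more call over the two (much shorter) partial answers.
    return llm(query, left + "\n" + right)


def toy_llm(query: str, context: str) -> str:
    """Stub model: returns every context line that contains the query string."""
    return "\n".join(line for line in context.splitlines() if query in line)


# A long "prompt": 10,000 filler lines with one relevant line buried inside.
haystack = [f"hay {i}" for i in range(10_000)]
haystack[7_777] = "needle: 42"
result = recursive_query("needle", "\n".join(haystack), toy_llm)
print(result)
```

No single call in this sketch sees more than `max_lines` lines of the original input, which is the property the recursion is meant to illustrate. It assumes the partial answers are short enough to combine in one call.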