Mandar Joshi

@mandarjoshi_

Research Scientist at Google DeepMind. Formerly CS/NLP PhD student at the University of Washington, Seattle. Here for cats, NLP, and politics.

🚀 Introducing the Latent Speech-Text Transformer (LST) — a speech-text model that organizes speech tokens into latent patches for better text→speech transfer, enabling steeper scaling laws and more efficient multimodal training ⚡️ Paper 📄 arxiv.org/pdf/2510.06195

AutoGen update! On Feb 25, @bansalg_ will introduce a transformative update that builds on user feedback and redefines modularity, stability, and flexibility to empower the next generation of agentic AI research and applications. Register here: msft.it/6013Umnpl

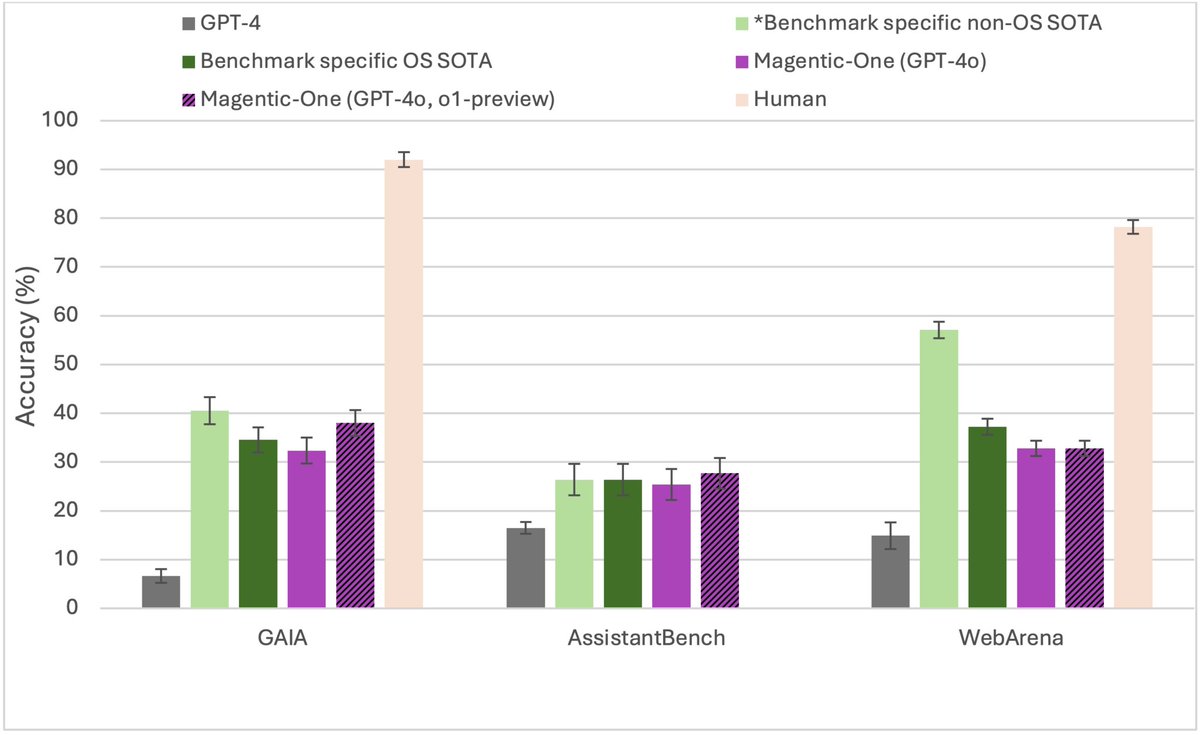

📢Introducing Magentic-One, a generalist 5-agent multi-agent system for solving open-ended web- and file-based tasks. 🤖🤖🤖🤖🤖 Magentic-One represents a significant step towards agents that can complete tasks that people encounter in their daily lives and can achieve strong performance and generalization across THREE challenging agentic benchmarks: GAIA, WebArena, and AssistantBench. We are releasing an open-source implementation in #AutoGen, our popular open-source framework for developing multi-agent applications. Check out the technical report, blog, and implementation below 👇 @MSFTResearch @Microsoft #AutoGen #Agents #opensource 1/