Mark Backman

25 posts

Great to have this repo! thanks @kwindla! Gemini 2 Voice-to-Voice = the fastest voice interface? Pipecat means fast transport. It's clearly hearing non-verbal audio but struggles to describe it, and can't sing or laugh. Great for practical apps. This repo rocks!

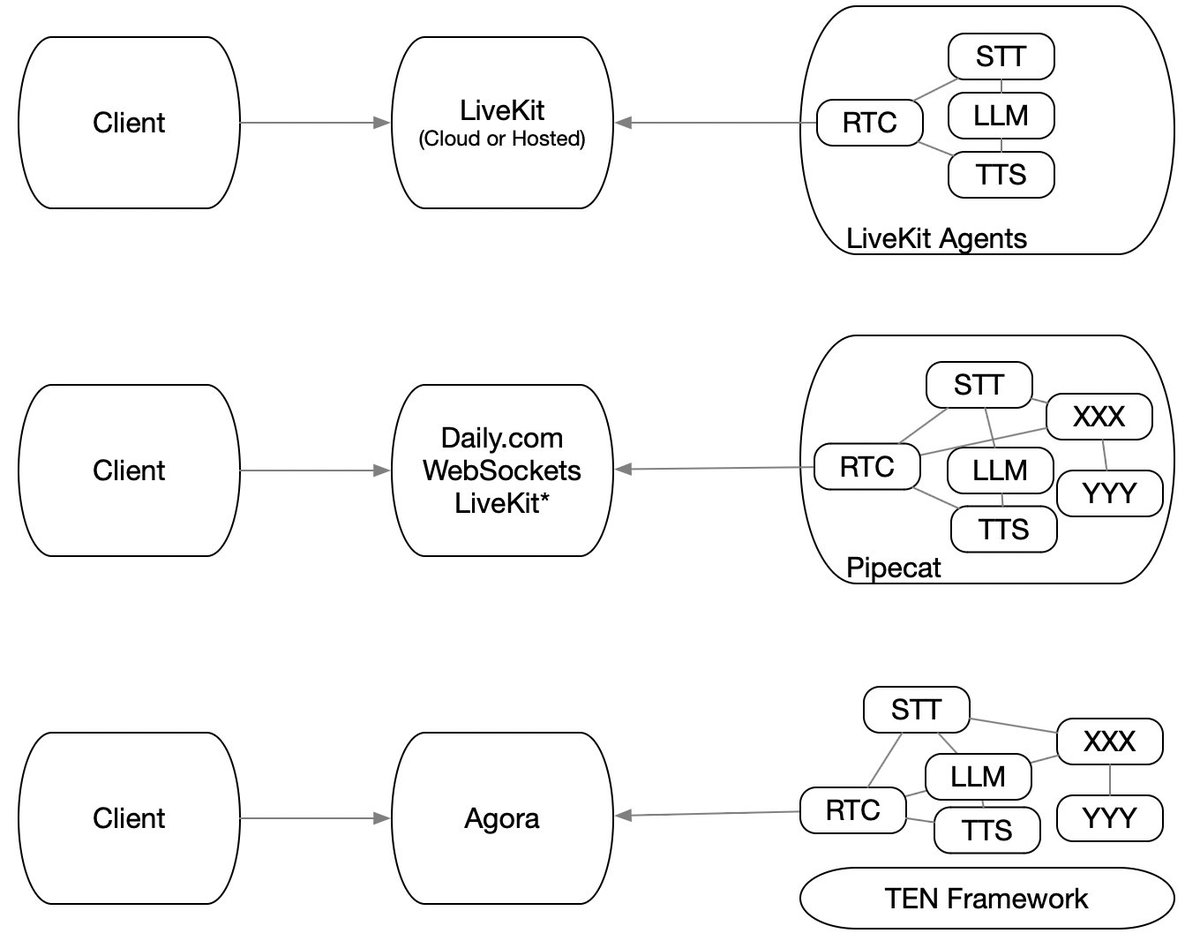

Gemini 2.0 launched today. Amazing multimodal capabilities, long context windows, fast response times, built-in tools, and top-of-the-leaderboards reasoning capabilities. Plus a new API — the Multimodal Live API — for conversational AI applications, like voice agents and multimodal copilots. @Google and Daily have partnered to build Multimodal Live API support into the @pipecat_ai Open Source SDKs for Web, Android, iOS and C++. The Pipecat SDKs come with echo cancellation and noise reduction, device management, event abstractions, React hooks, and more. They support both direct connections to the Gemini WebSocket API, and WebRTC routing on Daily's global ultra-low latency network. Build realtime voice agents with Gemini, Pipecat, and Daily. Links to docs and starter kits in the thread below (1/4)...

Talk to (a bootleg) virtual @benthompson [Meta-note: I recorded this video in a Waymo. So you're watching an AI experience inside an AI experience.] We did an internal voice AI hackathon a couple of weeks ago at @trydaily. Several of us are long-time @stratechery fans; @mark_backman had the idea of creating a "talk to Ben Thompson" toy demo. This kind of project is a really nice testbed for combining RAG with voice. I'll put some notes about building voice + RAG below, but if you just want to jump to a live demo, there's a link further down in this thread. The tech stack here breaks down into two parts: preparing and indexing the data, and running the live experience. There are lots and lots of choices right now for chunking, embedding, and storage/retrieval tooling. Mark used these: - @spacy_io for semantic chunking - @OpenAI text-embedding-3-small - @pinecone to store the embeddings The live app uses: - @OpenAI gpt-4-o mini - function calls trigger a @langchain query - the voice is a @cartesia clone - @pipecat_ai does the low latency phrase endpointing, interruption handling, context management, and orchestration - @trydaily Daily Bots voice transport and Pipecat hosting - the demo app is hosted on @vercel A link to the full source code of the app (but not the copyrighted Stratechery content) is in the thread below. Several things about building this are tricky: - Latency really matters, and it's hard to make function calling + RAG fast. This was an experiment, not a production app, but Mark was still able to get the median total (voice-to-voice) response time down below 1.5s. In general, we aim for ~800ms for conversational voice AI response times, so this is slower than we want these experiences to be. But the median here isn't terrible. The outliers do feel too slow, though. - RAG is complicated to get right. Mark did a lot of experimenting with chunking and embeddings. I think this definitely clears the 80/20 bar of being an interesting demo. I'm interested in what you think if you try it! For a production app, we'd want to do significantly more work on the retrieval subsystem. The quality of the data fetch heavily influences the quality of the conversational output. I'm convinced that talking to an LLM "personality" is going to be a very, very common thing in the near future. Sometimes, we'll talk to personalities that are slices of real peoples' public personals. Like this one. I also think there will be hugely popular personas that are "natively AI," personalities that are not based on a specific, real person. These new apps pose interesting, interrelated questions about copyright, user expectations and desires, and UI design. We trained this app on copyrighted Stratechery content and cloned a real person's voice. This is clearly a copyright violation and of course we'll take the demo down if Ben Thompson objects to it being publicly available. Note that it's not possible to retrieve the copyrighted material, here. It's only possible to get GPT-4o-mini's "remix" of the content. We needed a "behind a paywall" corpus of content to build an interesting RAG demo, because today's large LLMs are trained on much of the freely accessible information on the Internet. There are two things to note about that: 1. You can build a decent "clone" of a public person's personality just by creatively prompting a state-of-the-art LLM. You won't necessarily get the specific content grounding you probably want, but writing style usually shines through pretty nicely. 2. Almost all of the content used for training state-of-the-art LLMs is copyrighted content, even when it's not behind a paywall. Courts haven't yet ruled on whether mixing *a lot* of copyrighted content from many sources together constitutes a legal use of copyrighted content. Perhaps this falls under the category of "fair use." Perhaps not. I went in person to see the Eldred vs Ashcroft oral argument at the Supreme Court in 2002. The court's decision in that case upheld the Digital Millenium Copyright Act. That felt momentous at the time — and wrongly decided. It seems certain that there will be an even more momentous case about how copyright law applies to large model training. Perhaps our highly polarized congress will find a way to pass new laws that extend and clarify copyright for this new era. If so, we should hope that corporate lobbyists aren't the primary authors of that law, as they were with the DMCA. We did not use any of the Stratechery podcast content for this demo, because adding multi-modal, multi-person content was beyond the scope of a hackathon project. But it sure seems like you'd want to add all of that great audio source material to a bigger, production-quality, authorized version of an app like this. It's less obvious to me whether you would want to try to add in non-Stratechery content from Ben Thompson. (NBA commentary and analysis!) Thompson maintains a "no-tech" X account — @NoTechBen. Should this content separation that makes sense on X also port over to the new generative AI personality world? Anyway, this is now a very long post ... so go play with the demo if you're so inclined. Link in the next tweet.

@tranhelen We are hiring remote full stack, front end, devops, video, and support engineers at @trydaily. Roles are described here: daily.co/jobs (but please reach out if you're interested in what we're doing and don't see a role that's an exact fit).