Michael Xu

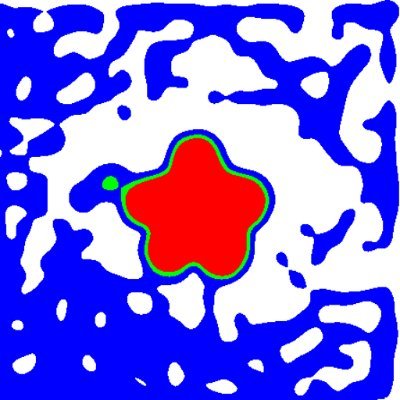

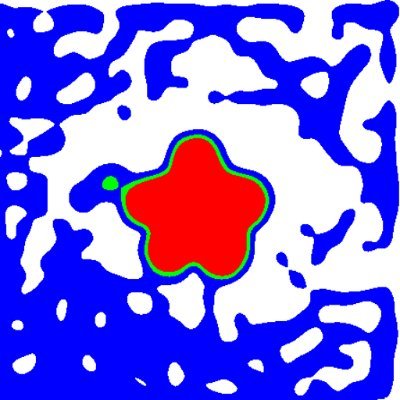

💡Introducing 𝑼𝑴𝑶 -- one unified model that unlocks motion foundation model (HY-Motion @TencentHunyuan) priors for 𝟏𝟎+ 𝐭𝐚𝐬𝐤𝐬: 𝐞𝐝𝐢𝐭𝐢𝐧𝐠, 𝐫𝐞𝐚𝐜𝐭𝐢𝐨𝐧 𝐠𝐞𝐧𝐞𝐫𝐚𝐭𝐢𝐨𝐧, 𝐬𝐭𝐲𝐥𝐢𝐳𝐚𝐭𝐢𝐨𝐧, 𝐭𝐫𝐚𝐣𝐞𝐜𝐭𝐨𝐫𝐲 𝐜𝐨𝐧𝐭𝐫𝐨𝐥, 𝐨𝐛𝐬𝐭𝐚𝐜𝐥𝐞 𝐚𝐯𝐨𝐢𝐝𝐚𝐧𝐜𝐞, 𝐤𝐞𝐲𝐟𝐫𝐚𝐦𝐞 𝐢𝐧𝐟𝐢𝐥𝐥𝐢𝐧𝐠... (1/8) 🌐 Webpage: oliver-cong02.github.io/UMO.github.io/ 📄 Paper: arxiv.org/abs/2603.15975

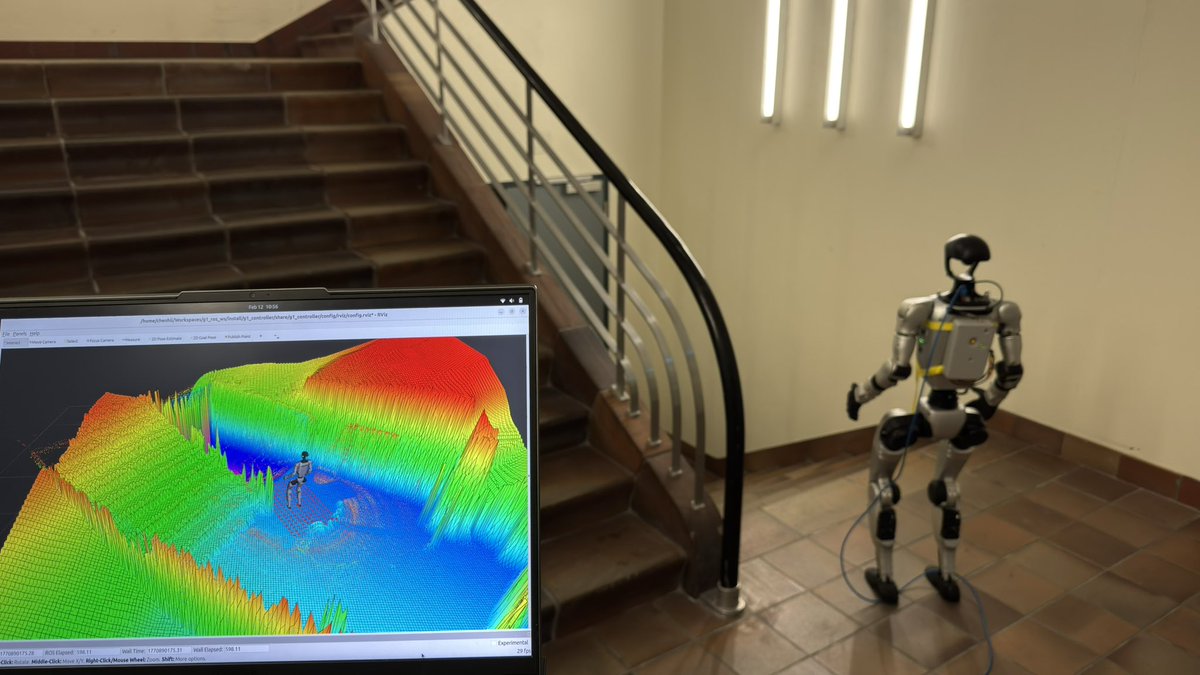

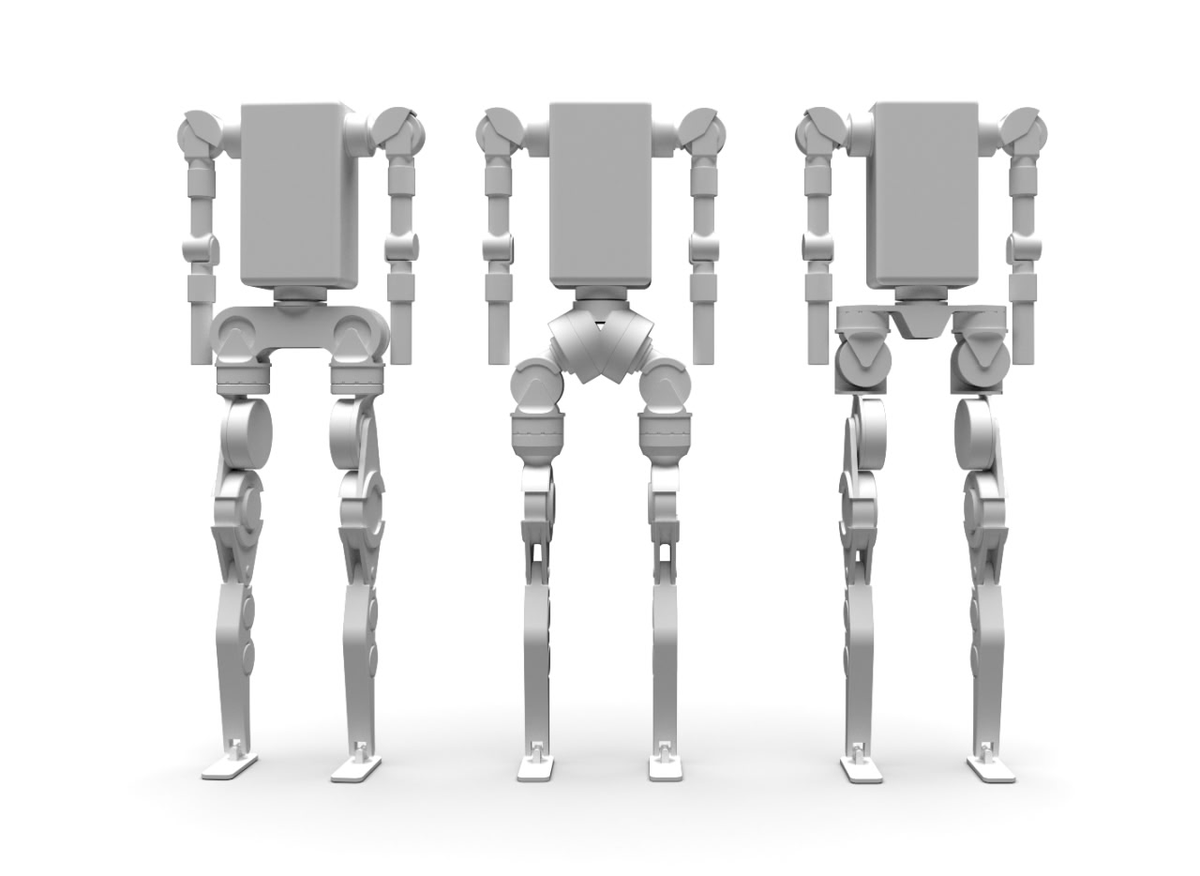

Real-world loco-manipulation demands more than replaying fixed reference motions. We argue that true autonomy requires two capabilities:

1️⃣ flexibly leveraging whatever signals are available: dense references, partial cues, state estimates, or egocentric perception

2️⃣ remaining capable when any of these signals are missing or unreliable

We introduce ULTRA, an all-in-one controller for unified humanoid loco-manipulation 🤖

It supports:

• general reference tracking

• sparse goal following

• execution with motion capture

• execution with egocentric perception

🔗 Project page: ultra-humanoid.github.io

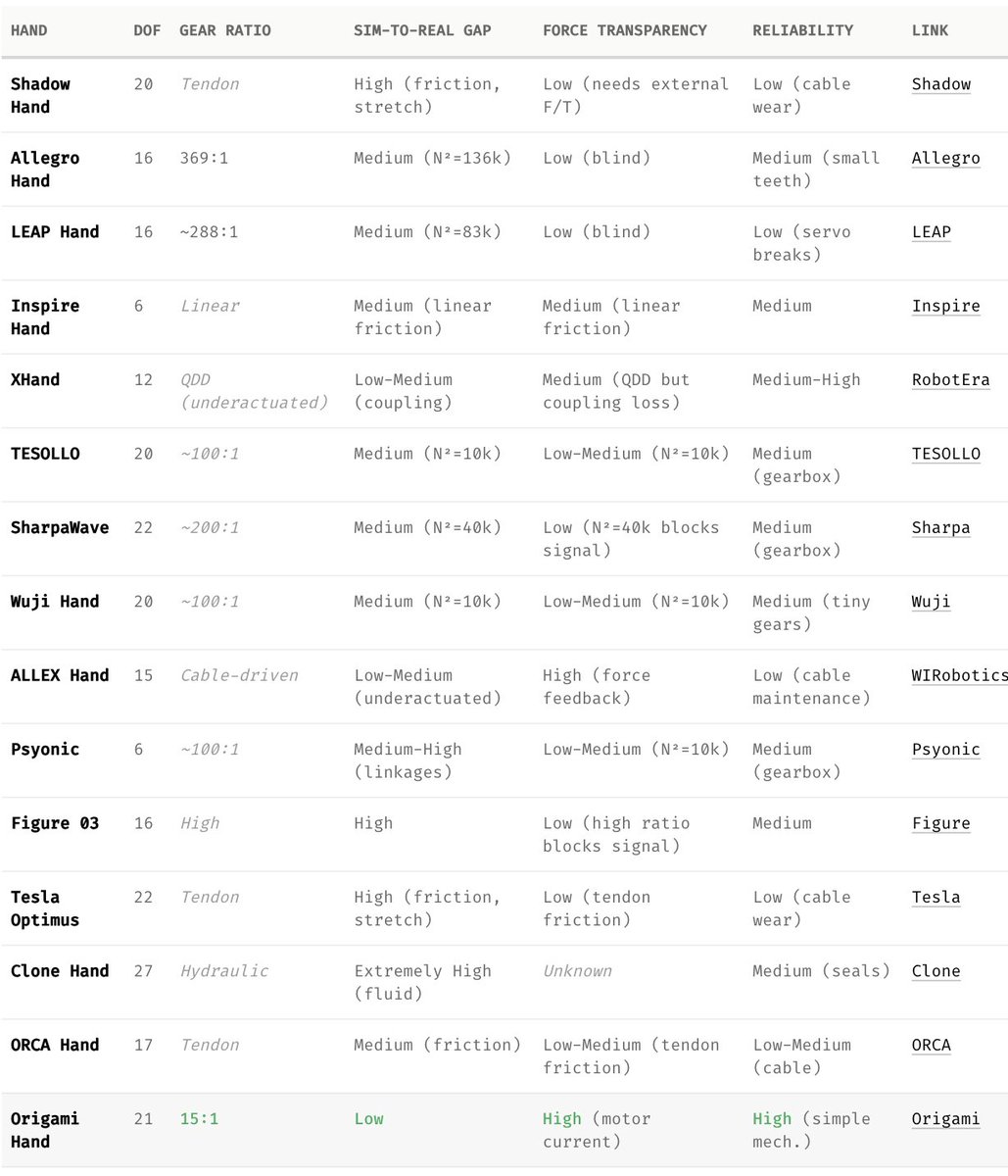

Why does manipulation lag so far behind locomotion? New post on one piece we don't talk about enough: The gearbox.

The Gap

You've probably seen those dancing humanoid robots from Chinese New Year. Locomotion isn't entirely solved, but it's clearly on a trajectory. We haven't seen anything close for manipulation. 𝗪𝗵𝘆?

When sim-to-real transfer fails, the instinct is to blame the algorithm. Train bigger networks. Crank up domain randomization. Those approaches have made real progress; we don't deny that. But we started wondering: are we treating the symptom or the disease?

The Hardware Bottleneck

Fingers are too small for powerful motors, so most hands use massive gearboxes (200:1, 288:1) to get enough torque. But those gearboxes break everything manipulation needs:

• Stiction and backlash are complex to simulate. Policies trained on smooth physics hallucinate when they hit that reality.

• Reflected inertia scales as N², where N is the gear ratio. At a large gear ratio, the finger hits with sledgehammer momentum.

• Friction blocks force information. The hand becomes blind.

And they're the first thing to break.

What we are trying to build

At Origami, we cut the gear ratio from 288:1 to 15:1 using axial flux motors and thermal optimization. The transmission becomes more transparent: backdrivable, low friction, forces propagate to motor current. Early signs are encouraging. Still running quantitative benchmarks.

Why Interactive?

I love how Science Center uses interactive devices to explain complex ideas. I want to borrow this concept and help people understand the hard problems in robotics better, visually. The post has demos where you can toggle friction, slide gear ratios, and watch the sim-to-real gap widen in real time.

What's inside:

• Interactive demos (friction curves, N² scaling, contact patterns)

• Comparison table: 14 robot hands by sim-to-real gap and force transparency

• The math behind why low-ratio matters

Read it here: origami-robotics.com/blog/dexterity…

We're not claiming we've solved dexterity. The deadlock has many pieces. But we think this one's foundational. Curious what you think.
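To put a number on the N² point above, here is a rough sketch of how reflected inertia changes between the two gear ratios mentioned in the post. It assumes the standard relation J_reflected = N² · J_rotor and, purely for illustration, the same rotor inertia at both ratios. The `ROTOR_INERTIA` value and the function name are hypothetical; a real axial flux motor would have a different rotor inertia than the motor it replaces.

```python
def reflected_inertia(rotor_inertia: float, gear_ratio: float) -> float:
    """Rotor inertia as seen at the output side of an ideal gearbox.

    Uses the standard relation J_reflected = N^2 * J_rotor and ignores
    the inertia of the gears themselves.
    """
    return gear_ratio ** 2 * rotor_inertia


# Hypothetical rotor inertia in kg*m^2 (illustrative only, not from the post).
ROTOR_INERTIA = 1e-7

high_ratio = reflected_inertia(ROTOR_INERTIA, 288)  # 288:1 gearbox
low_ratio = reflected_inertia(ROTOR_INERTIA, 15)    # 15:1 gearbox

# With equal rotor inertia, the ratio depends only on the gear ratios.
reduction = high_ratio / low_ratio

print(f"Reflected inertia at 288:1: {high_ratio:.3e} kg*m^2")
print(f"Reflected inertia at 15:1:  {low_ratio:.3e} kg*m^2")
print(f"Reduction factor: {reduction:.1f}x")
```

Under that equal-rotor assumption, the drop from 288:1 to 15:1 works out to (288/15)², roughly 369 times less reflected inertia at the finger.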

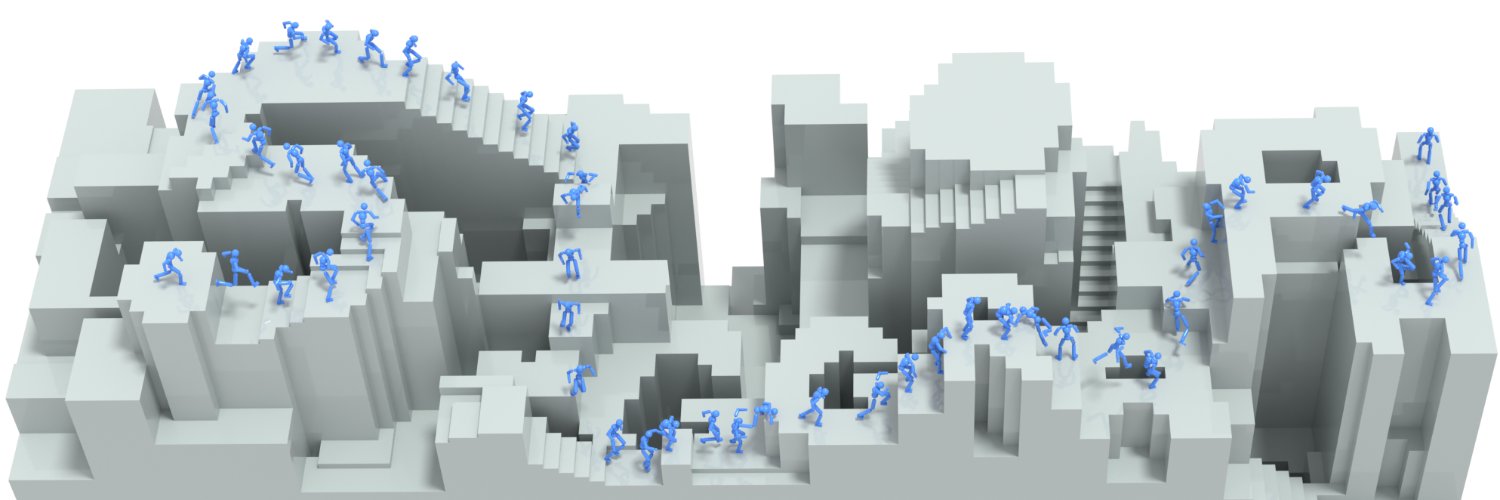

Humanoids need autonomy + versatility + generalization to be truly useful. Loco-manipulation makes that hard. InterPrior is our step toward bridging the gap — one policy, no reference. Could be promising for immersive games 🎮 and real robots 🤖 🔗 sirui-xu.github.io/InterPrior 📜 arxiv.org/abs/2602.06035 [1/9]