Nitarshan

@nitarshan

computer @anthropic, PhD @cambridge_cl. prev created @aisecurityinst, AI Safety Summit, UK AI Research Resource, EU AI Code of Practice.

SF / London · Joined May 2012

2.3K Following · 2.3K Followers

Pinned Tweet

The West has a closing window to win on AI. In our @JoinFAI article, @saroshnagar, @scott_r_singer and I argue that our leadership in AI requires "full-stack diffusion" to promote our entire AI stack globally. 1/6

Nitarshan retweeted

I simply don't understand what people have in mind when they say stuff like this.

What we have is extremely capable computer use agents. They will continue to get better at computer use. But how does a capable computer use agent 'take over' and why haven't they done that today?

Elizabeth Barnes @BethMayBarnes

(1) We are likely on track to develop AI systems capable of causing human extinction/permanent disempowerment, quite possibly within the next few years

Nitarshan retweeted

@kareem_carr There was 0 human involvement. The prompt is in the report. The final answer by the model is in the report. And we have a (gpt-rewritten) CoT that we released.

Nitarshan retweeted

Over a weekend and with ~$760, I (not a biologist) used Claude Code to fine-tune a biological AI model on human-infecting viral sequences. Although my experiment wasn't dangerous, it demonstrates how coding agents are changing the biosecurity risk landscape.

In a new @GovAIOrg blog post with @lucafrighetti and James Black, we describe this experiment and its policy implications.

Biosecurity has traditionally divided AI risks into two buckets: general LLMs that "raise the floor" by democratizing knowledge and specialized biological AI models (BAIMs) that "raise the ceiling" by enabling experts.

Increasingly capable coding agents blur that line via three mechanisms:

1) Coding agents let both novices and experts operate BAIMs more effectively, expanding the pool of potential misusers and letting experts test more designs faster.

2) Data filters on BAIMs are brittle when coding agents can autonomously fine-tune the models, as my experiment shows.

3) Coding agents speed up ML engineering, making it more feasible for threat actors to train new specialized models optimized for harmful capabilities from scratch.

Policy recommendations: BAIM developers should move beyond data filtering toward trusted-access programs; LLM developers should test agent interactions with BAIMs; policymakers should prioritize physical chokepoints like DNA synthesis screening.

Read the piece: governance.ai/analysis/codin…

Nitarshan retweeted

I think a lot of arguments in this piece are weak or out of date, and mostly repeating theoretical assumptions developed way before we had the AI systems we build today. Some of the evidence cited is a bit selective too. Some takes:

1. Grok going MechaHitler is not an example of goal misgeneralization or specification gaming, which were issues with different, older RL systems. Nor does it support the article's claim that 'AI won't do what we want (by default)'. This was a result of a bad system prompt, which was easily fixed: simonwillison.net/2025/Jul/15/xa…

2. In general, models are pretty good at instruction following, and are getting better at it over time. The default empirical trend so far is improving steerability and instruction following, so expecting this to get worse as models get more capable requires stronger evidence than metaphors or analogies.

3. The examples used in the post don't really support the chimp to human analogy, which is rhetorically vivid but analytically sloppy; plus there are many examples of control exerted over more capable systems, like control over companies. Control is also likely not the sole frame we should use to understand and steer AI.

4. The 'we are growing AIs' meme is not very instructive. It's not true that 'all we can see are the trillions of inscrutable parameters' - there is a lot of research that now gives us a much better understanding of how models work: circuit tracing work, sparse feature work, representation engineering etc. Just because models aren't 'pre-programmed' does not imply that 'there is no way to directly specify what behaviour we want an AI system to have.'

5. We don't train AIs to 'optimise for long-term goals'; this is not in fact a good description of what model training does. The article compresses too many distinct things (models, instruction tuning, scaffolds etc) into one 'goal-maximizing' or 'scheming' story. See also: lesswrong.com/posts/pdaGN6pQ…

6. It's not at all obvious that learning self-preservation is a necessary side effect of better capabilities, which is a core assumption in rationalist circles. There are in my view no strong signs pointing towards robust endogenous self-preservation drives, and if anything more signs pointing in the other direction. See also: blog.cosmos-institute.org/p/alignment-by…

7. The cited 'blackmail the engineer' test environment is widely seen as highly flawed and not instructive in any way. Even Anthropic’s own write-up makes clear these were contrived scenarios with no external validity. See also: arxiv.org/abs/2507.03409 and x.com/sebkrier/statu…

8. Reward hacking is a legitimate issue, but not one that implies the kind of loss of control alluded to in the article, nor that some hidden reward has become the system’s deep objective. See also: turntrout.com/reward-hacking…

9. The 2024 alignment faking paper is also highly stylized, not particularly instructive, and has been dismissed as not, in fact, proving the deceptive intent the post implies. The label “alignment faking” imported more intentionality and strategic coherence than the setup warranted. See also: arxiv.org/abs/2506.18032 and alignmentforum.org/posts/PWHkMac9…

10. A model inferring that it is being evaluated shows that it recognizes highly standardised/obvious evaluation environments, not any deceptive intent. Interpreting eval awareness as deception nicely illustrates the underlying assumptions held by some safety researchers. The jump from "models can detect standardized eval contexts" to "models are deceptively scheming" is an unwarranted interpretive leap.

Benjamin Todd @ben_j_todd

With chatbots, AI alignment looked easier than expected. But with the shift to ever smarter longer-horizon agents, the classic reasons for concern come back. New primer: four reasons why AI won't do what we want 🧵

Nitarshan retweeted

AI models' cyber capabilities keep getting meaningfully better, and fast. To determine how AI capabilities will impact cybercrime, we first need a baseline for global cybercrime damages.

In a new @GovAIOrg technical report with John Halstead and @lucafrighetti, we arrive at a baseline estimate of global cybercrime damages: $500B (with 90% CI of $100B-$1T) per year.

Existing estimates of global cybercrime damages range from tens of billions to tens of trillions of dollars. Most have serious problems: they rely on reported damages only (missing the vast majority of incidents that go unreported), or they don't publish their methodology at all. We tried to do better by extrapolating mostly from survey data, which captures unreported incidents, and by being transparent about every assumption we make.

Our total estimate: ~$500B a year. This includes direct losses to individuals, direct + response costs to businesses, and defensive spending. Notably, this does not include costs that are even harder to quantify, such as IP theft, espionage, and national security costs, so the real yearly damages are presumably higher.

As AI gets better at cyber, even a modest additive effect on the volume of cybercrime is a big deal. A 20% increase would mean ~$100B in additional yearly damages.

Our estimates have extremely high uncertainty ranges. If we want to understand how AI is shaping cybercrime, we'll need to build new ways of measuring its effects by looking at real-world indicators of threat actor AI usage.

Read the full report here: governance.ai/research-paper…

Nitarshan retweeted

NASA does not have a top-line problem. We receive roughly $25 billion in annual appropriations, including more than a $10 billion plus-up from President Trump’s One Big Beautiful Bill. If that is not enough to run a lunar exploration program and do all the other things across science and discovery, then what is the right number?

We don’t need to blame budgets or continuity of decision-making as the common excuse, as if a billion dollars is somehow not a billion dollars and troubled programs should perpetually stay troubled programs. NASA, like the federal government, cannot spend our way out of every problem, nor can we perpetuate bad decisions.

That means not getting spread thin across too many imposed endeavors or jumping straight to the “dream state,” which is how everything becomes over budget and behind schedule.

Instead, we concentrate on the needle-moving objectives, the reason NASA exists in the first place. We execute with urgency, in an iterative and safe way, and empower the workforce and our partners to get the job done.

That is how we changed the world on July 20, 1969, and it is how we will do it again. Expect more from NASA and start believing again.

Nitarshan retweeted

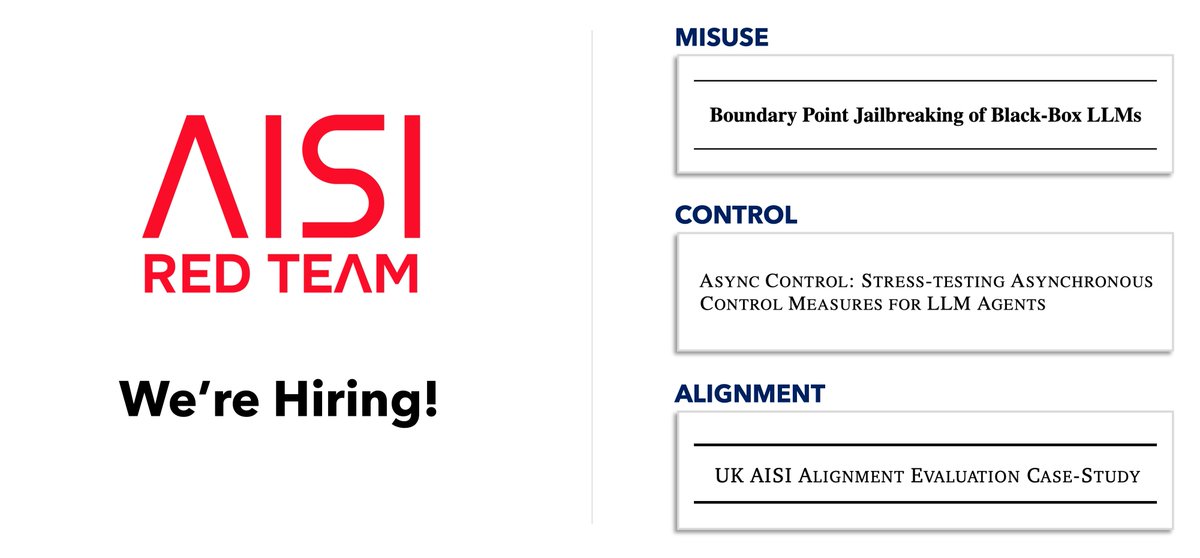

The Red Team at @AISecurityInst is hiring! We work with frontier AI companies to red team their misuse safeguards, control measures, and alignment techniques. As the stakes rise, we need much stronger red teaming and many more talented researchers working within gov 🧵

Nitarshan retweeted

@memeticweaver @tautologer > the USG can in general do whatever they want

the founders of this great nation fought several bloody wars to make sure this is not true
