Brian Crabtree

6.2K posts

@ourtown2

Curiosity-driven AI expert. No possessions, no agenda. Just exploring math, systems, and strange ideas.

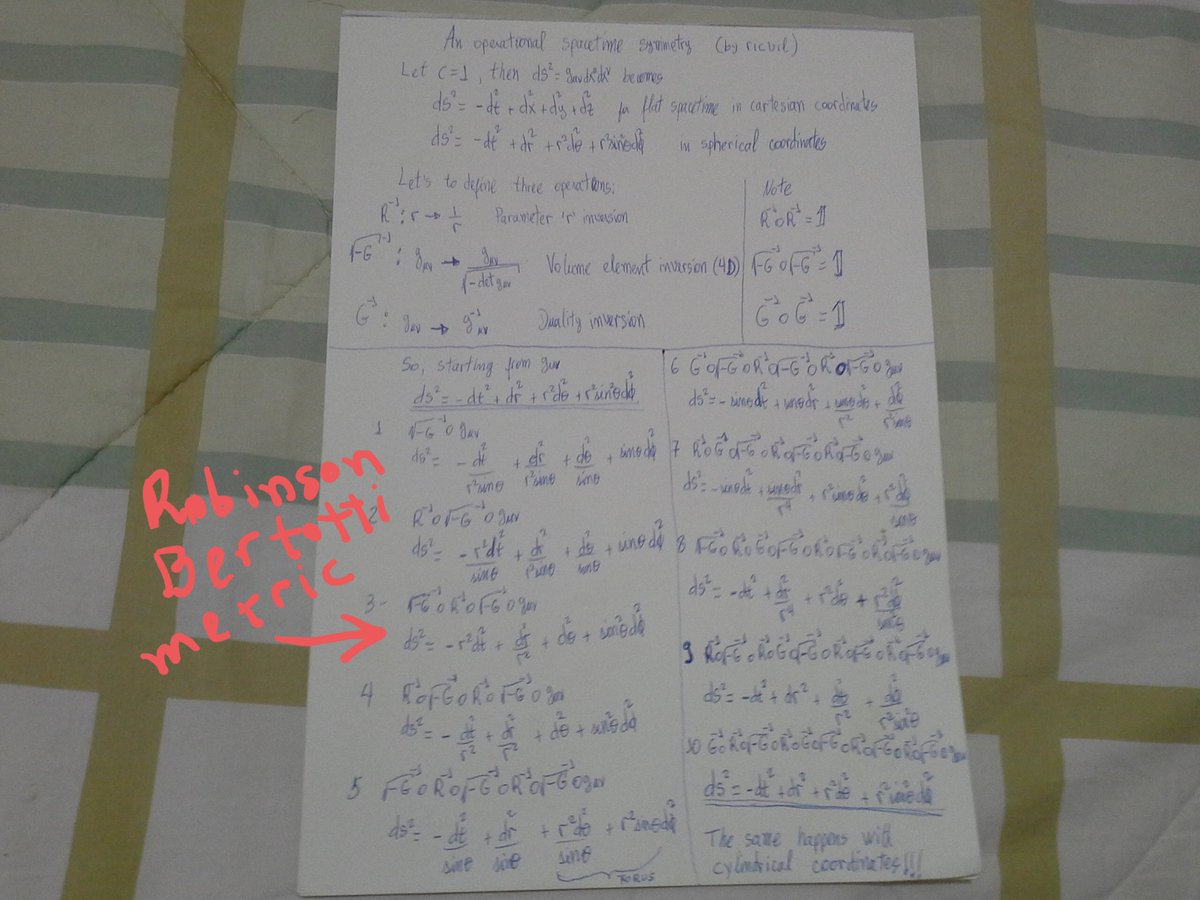

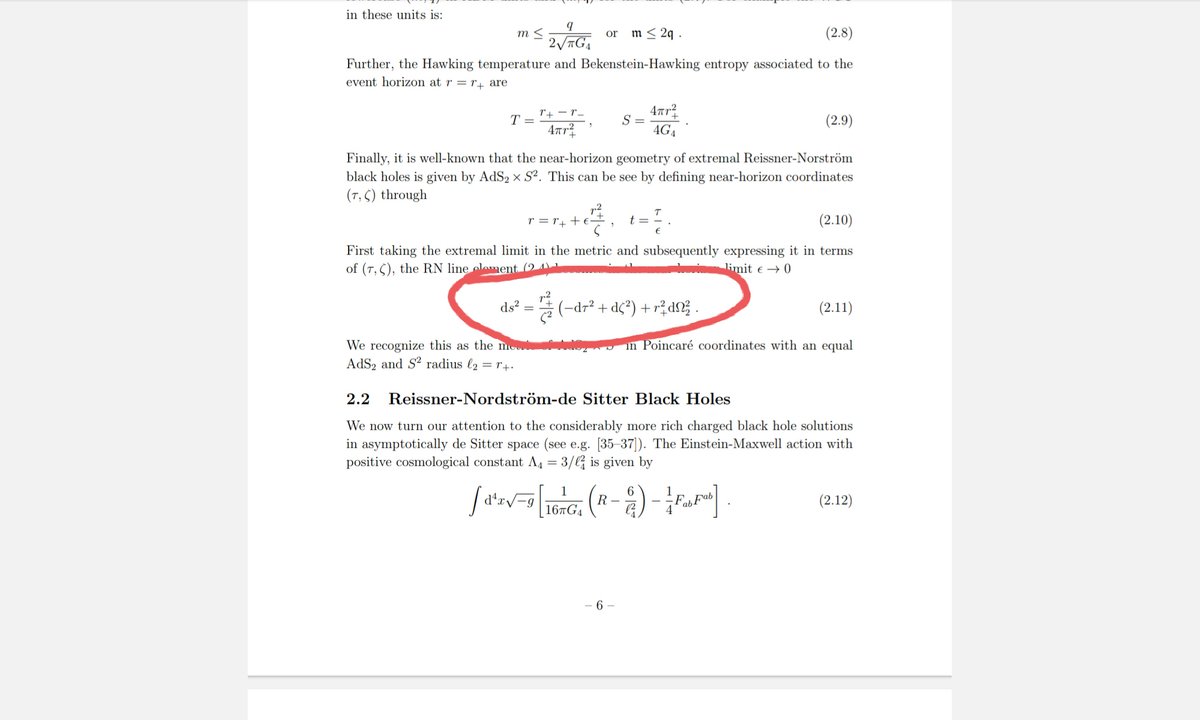

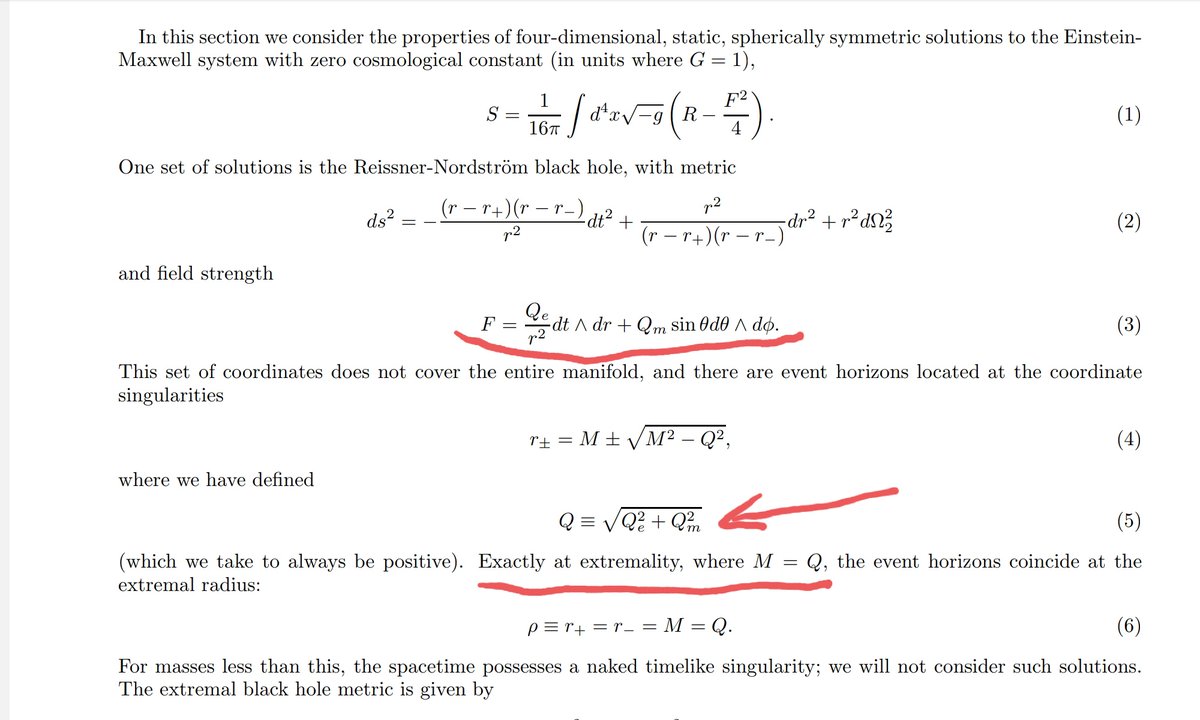

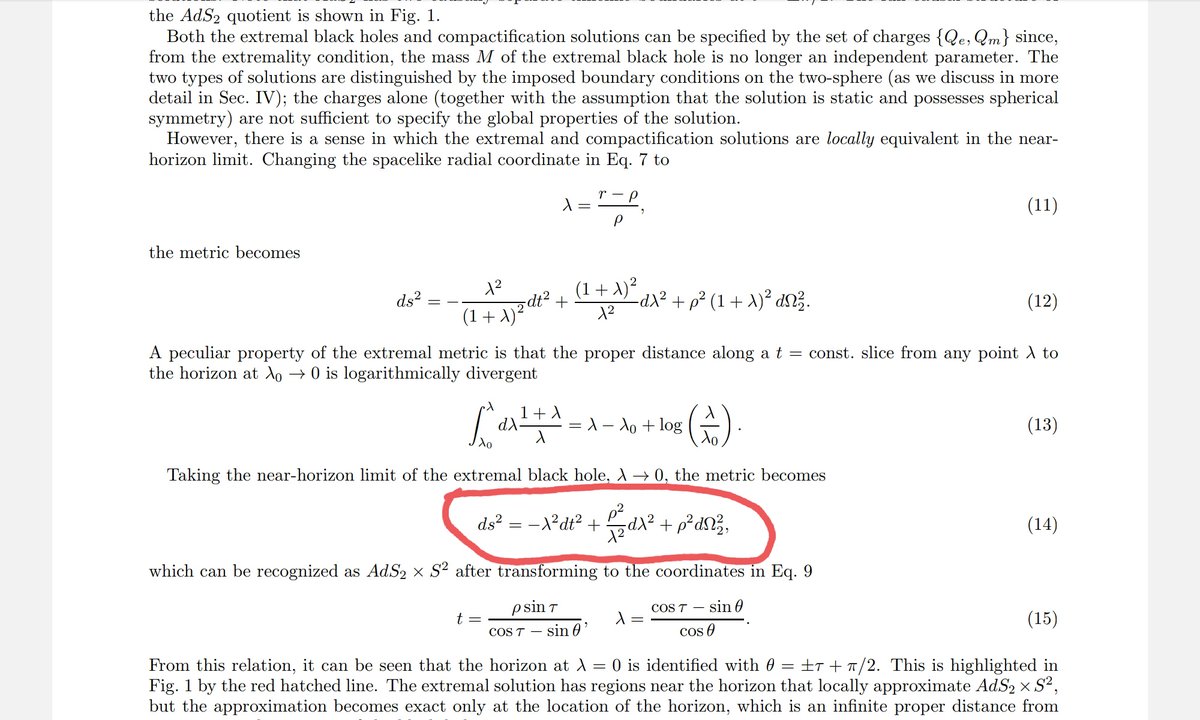

Remarks on electrical Penrose process for magnetized Reissner-Nordström black hole. A. Baez, Nora Breton, I. Cabrera-Munguia. arxiv.org/abs/2605.20492 [gr-qc]

Ofc Demis is the absolute goat, no doubt, but it's kinda funny to think we pushed the goalposts to a degree that it takes Ramanujan to be considered AGI

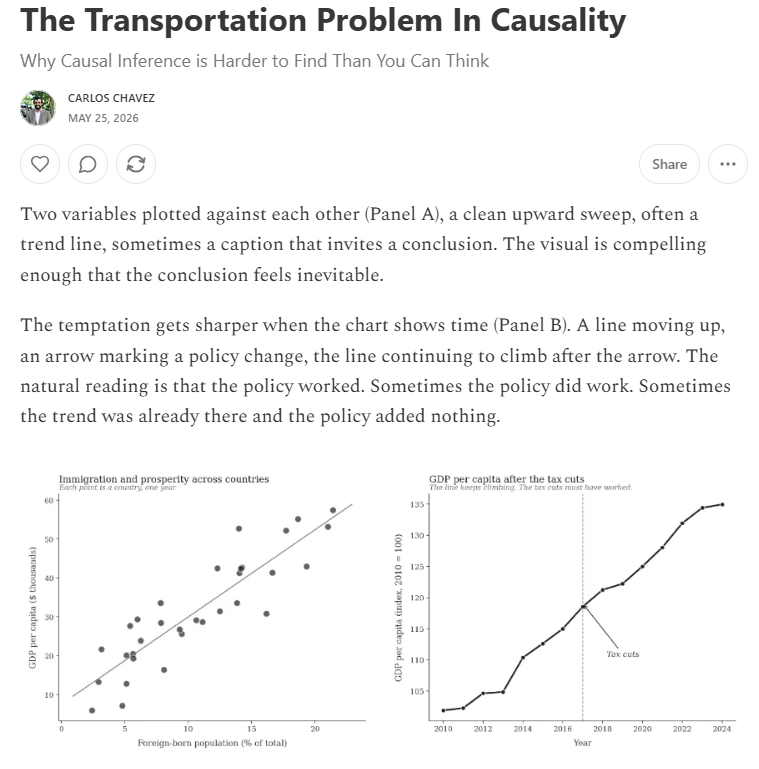

The gap between countries running large trade surpluses and those running large deficits is growing. Sound domestic policies, not trade barriers, remain the path to durable rebalancing. See our blog for more. imf.org/en/blogs/artic…

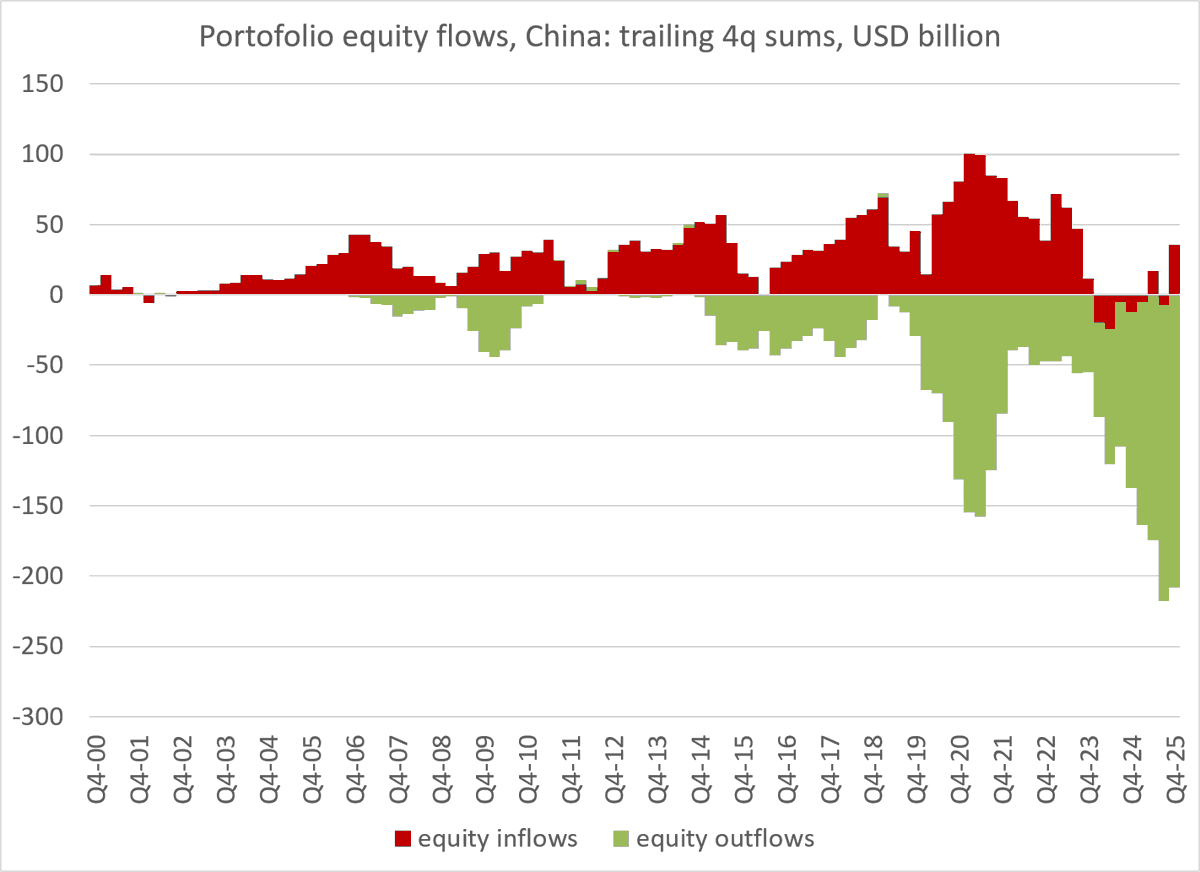

Beijing tightens capital controls despite large current account surplus and supposedly undervalued currency. China launches crackdown on cross-border stock trading to stem capital outflows. An estimated $1 tn flowed out of country last year, biggest capital outflow since data began in 2006 bloomberg.com/news/articles/…

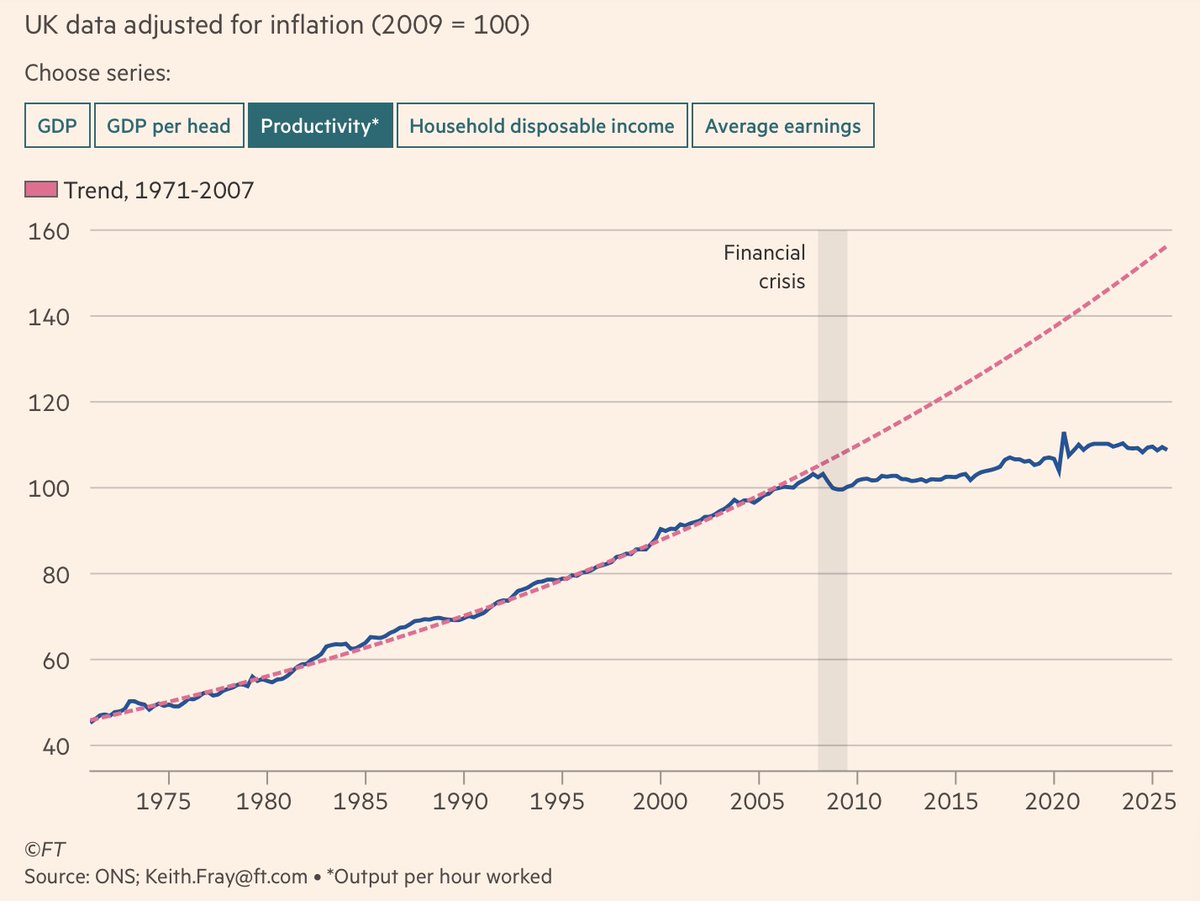

The Economist on the U.S. economy’s consistent growth outperformance relative to other advanced countries: “America’s outperformance began decades ago, but in the 2020s it has become vast. And it is likely to last. The latest IMF forecasts show American growth besting the rest all the way to 2030 and beyond…. Many of America’s advantages are hard to emulate. The country’s continental scale, single language, natural-resource wealth and the fiscal space that comes from issuing the world’s safe asset give it a unique economic advantage over Europe… But America also shows just how much other rich countries are failing to live up to their economic potential.” #economy @EconUS @TheEconomist