NeuralMyth

963 posts

@seoinetru

Unleashing imagination through neural-network-generated fantasy art. Follow for captivating scenes and mystical creatures 🎨 #fantasyart #digitalart

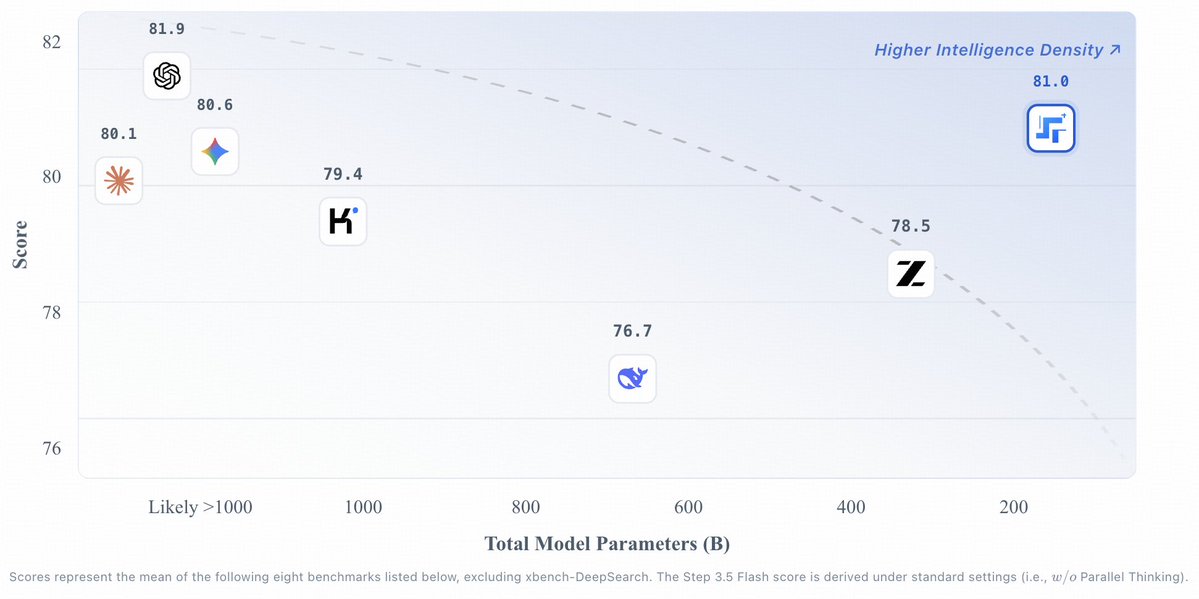

Xiaomi MiMo-V2.5 is now officially open-sourced! MIT License, supporting commercial deployment, continued training, and fine-tuning, with no additional authorization required. Two models, both supporting a 1M-token context window:
• MiMo-V2.5-Pro: built for complex agent and coding tasks, ranking No. 1 among open-source models on GDPVal-AA and ClawEval
• MiMo-V2.5: a native omni-modal model with strong agent capabilities
A model's value isn't measured by rankings alone; it's measured by the problems it solves. Let's build with MiMo now!
🤗 Weights: huggingface.co/collections/Xi…
📄 Blog: mimo.xiaomi.com/index#blog

Seedance 2.0 is now available to everyone without any restrictions! fal.ai/models/bytedan…