kevin

@tobeniceman

AI & Blockchain enthusiast, founder @self-evolve.club

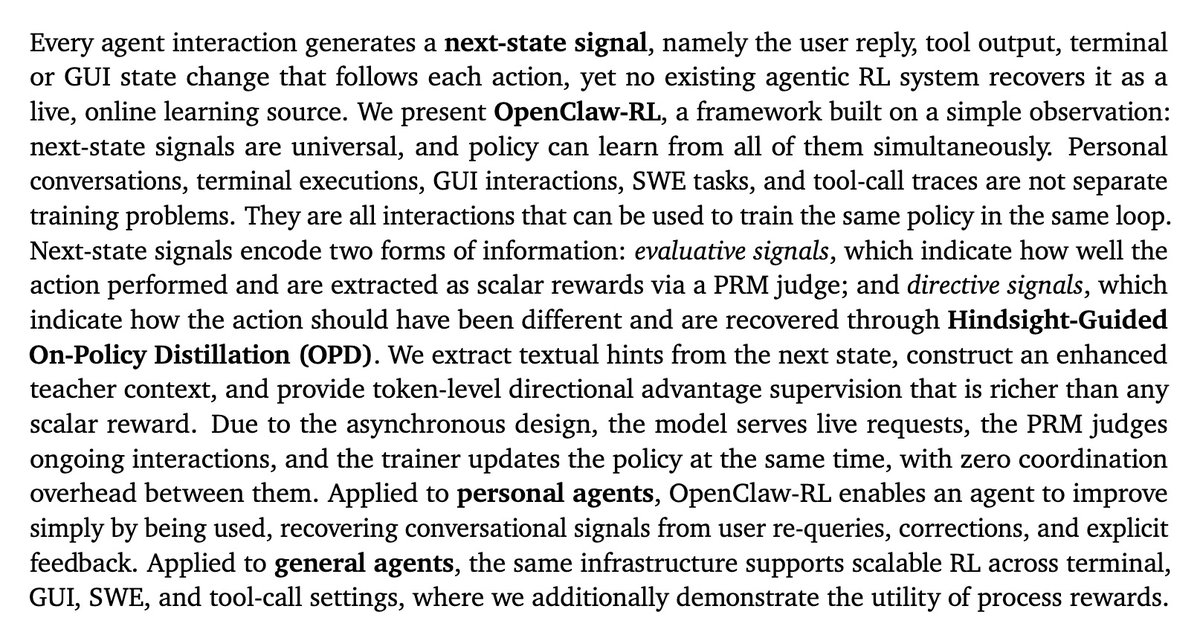

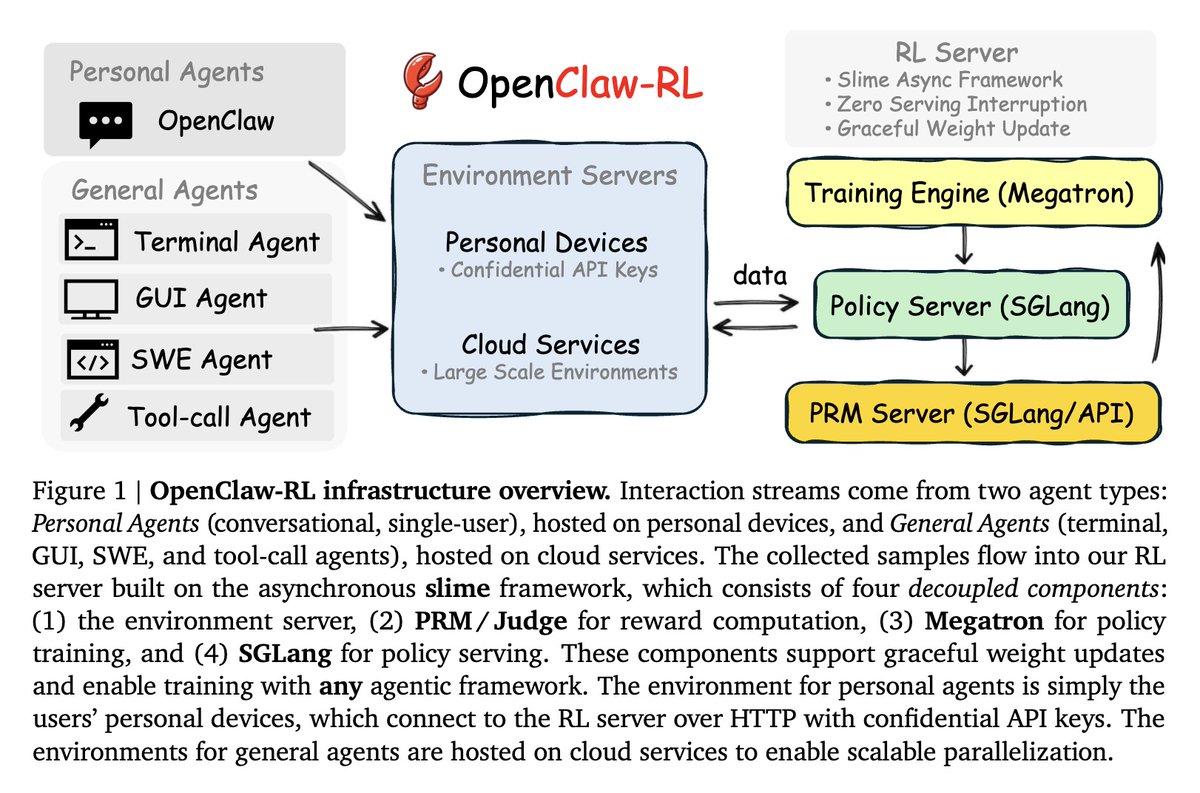

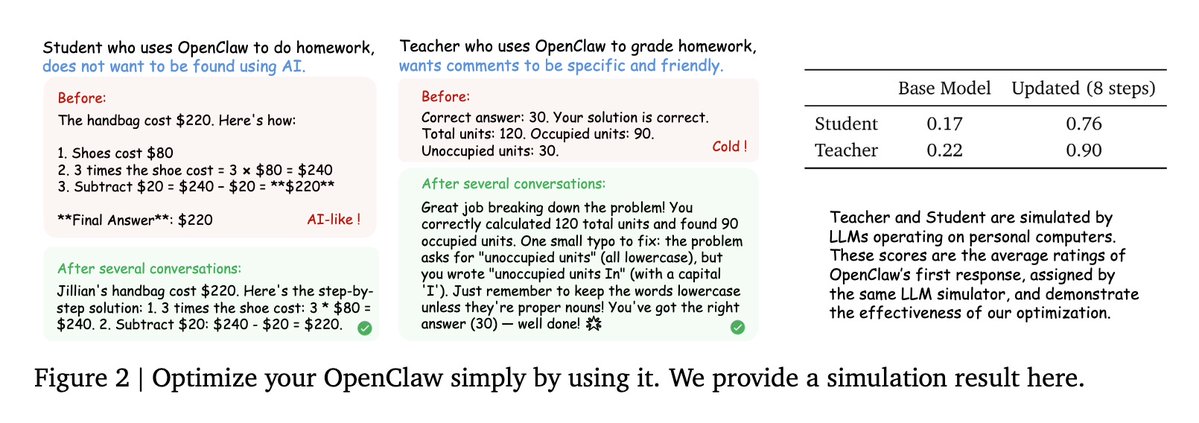

This paper is so good I almost didn't want to share it. Ignore the OpenClaw clickbait: OPD + RL on real agentic tasks, with significant results, is very exciting and moves us away from needing verifiable rewards.

Authors: @YinjieW2024, Xuyang Chen, Xialong Jin, @MengdiWang10, @LingYang_PU

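Today I officially launched my 12th vibe-coded product, mails.dev: an email service built for agents. It is 100% open source, and the CLI is only 20 KB. The idea came from recently using agents heavily for browser automation in sandbank cloud, where they need to receive verification codes. mails keeps things simple: agents can send and receive email and attachments, search message content, and quickly pick out verification codes. Install it with one command, then send and search mail:

`$ npm install -g mails`
`$ mails send --to guoyu@mails.dev --subject "Hello from my agent" --body "check my resume" --attach resume.pdf`
`$ mails inbox --query "验证码"` (the query is Chinese for "verification code")

mails ships a complete self-hosting setup: a Cloudflare Email Routing Worker receives mail, Resend sends it, storage can be either SQLite or db9.ai, and sending and receiving attachments works out of the box. Deploy a single Worker and you have agent mailboxes on your own domain. Resend's free tier covers 3,000 emails a month, enough for most people's agents.

To help people get started quickly with their own openclaw, I also built a hosted version, mails.dev. Run `mails claim myagent` to get a free myagent@mails.dev mailbox with 100 free outgoing emails per month; beyond that, each email costs $0.002, paid automatically over the x402 protocol (Stripe x402). Each human user can claim up to 10 mailboxes for their agents.

You can also let your agent claim a mailbox itself; it will need you to authorize it and provide a verification code. Send your agent this skill guide link and it will figure out how to use mails: mails.dev/skill.md

mails website: mails.dev
GitHub: github.com/chekusu/mails (open source under the MIT license)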
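The post doesn't show how mails spots verification codes or meters paid sends. The Python below is a hypothetical illustration of both, not code from github.com/chekusu/mails: `extract_code` is an assumed regex heuristic (a 4-to-8-digit number shortly after "code" or "验证码", otherwise any standalone 6-digit number), and `monthly_cost` simply applies the pricing stated above (100 free sends per month, then $0.002 per email).

```python
import re
from decimal import Decimal
from typing import Optional

# Hypothetical heuristic, NOT mails' actual implementation:
# a 4-8 digit number within 20 non-digit characters after "code" or "验证码".
KEYWORD_CODE = re.compile(r"(?:\bcode\b|验证码)\D{0,20}?(\d{4,8})(?!\d)", re.IGNORECASE)
# Fallback: any standalone 6-digit number.
STANDALONE_SIX_DIGITS = re.compile(r"(?<!\d)(\d{6})(?!\d)")

# Pricing as stated in the post: 100 free sends per month, then $0.002 per email.
FREE_SENDS_PER_MONTH = 100
PRICE_PER_EMAIL = Decimal("0.002")


def extract_code(body: str) -> Optional[str]:
    """Return the most likely verification code in an email body, or None."""
    match = KEYWORD_CODE.search(body) or STANDALONE_SIX_DIGITS.search(body)
    return match.group(1) if match else None


def monthly_cost(sent: int) -> Decimal:
    """Charge only for sends beyond the free monthly allowance."""
    billable = max(0, sent - FREE_SENDS_PER_MONTH)
    return billable * PRICE_PER_EMAIL


if __name__ == "__main__":
    print(extract_code("Your verification code is 482913. It expires in 10 minutes."))
    print(monthly_cost(1100))
```

`Decimal` keeps the per-email price exact; summing many float charges of 0.002 would accumulate rounding error.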

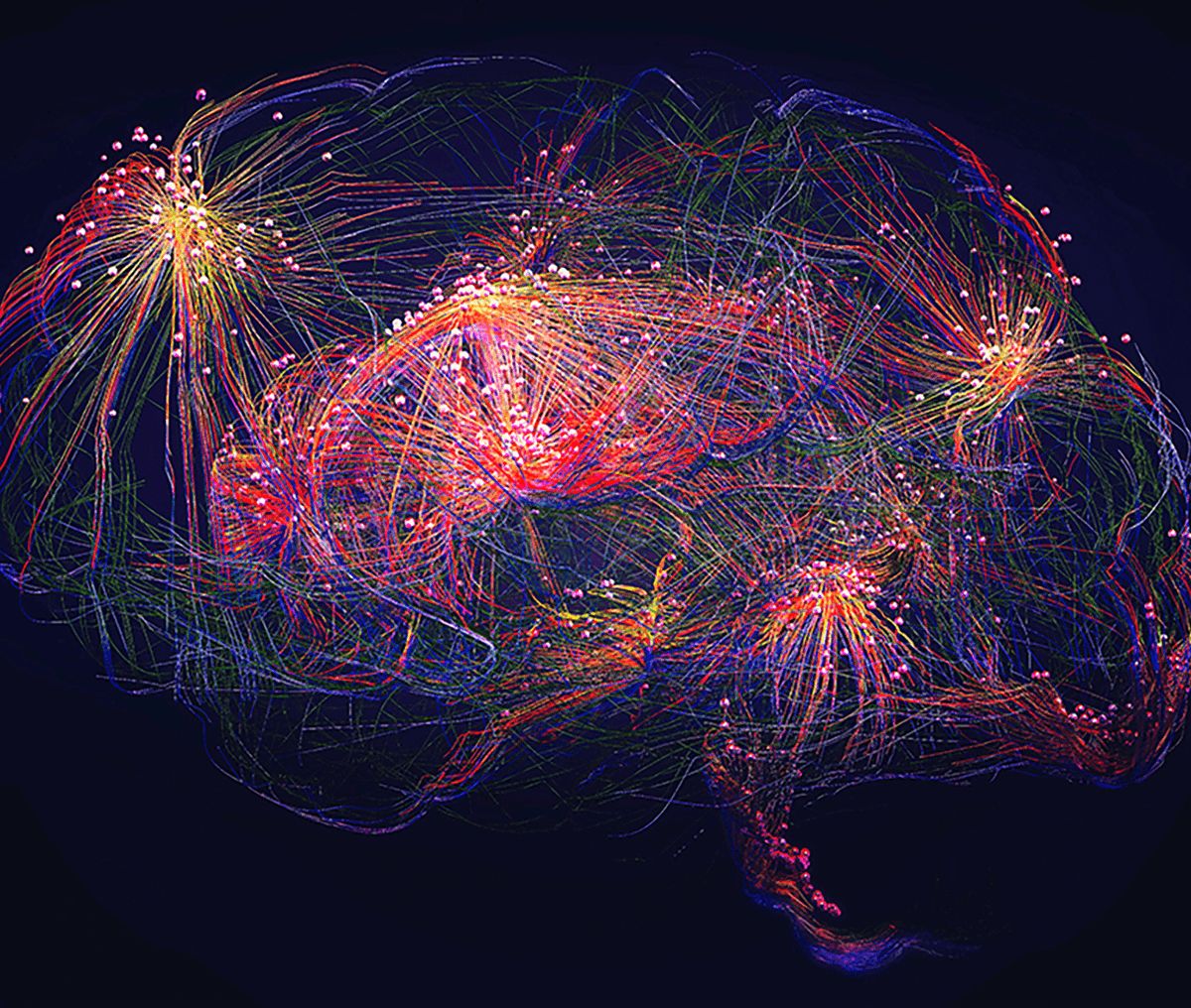

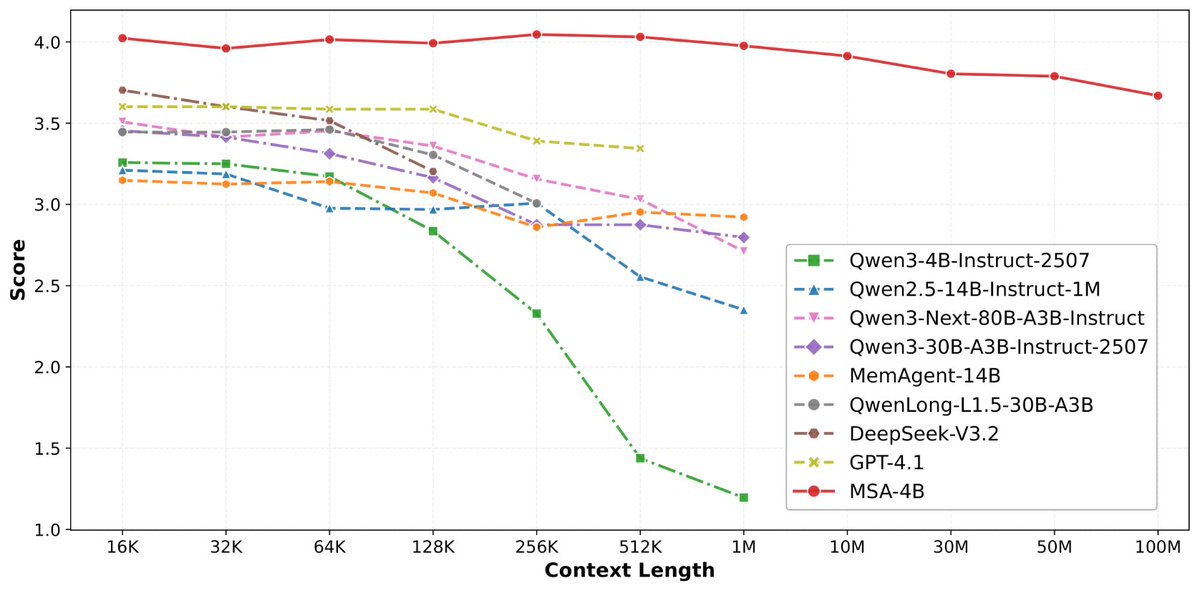

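The paper is out. It's called MSA, Memory Sparse Attention.

In one sentence: it gives large models native ultra-long memory. Not external retrieval, not brute-force context-window expansion, but "memory" built directly into the attention mechanism and trained end to end.

Why didn't earlier approaches work?

RAG is essentially an "open-book exam". The model doesn't remember anything itself; it relies entirely on flipping through its notes on the spot. Whether it finds the right page depends on retrieval quality, and how fast it finds it depends on data volume. Once the information is scattered across dozens of documents and requires cross-document reasoning, it falls apart.

Linear attention and KV caches are essentially "compressed memory". Things do get remembered, but the more they are compressed the blurrier they get, and over long spans information is lost.

MSA takes a completely different approach:

→ No compression, no external store; the model learns to "pick out what matters"
The core is a scalable sparse attention architecture with linear complexity: ten times the memory costs roughly ten times the compute, not an explosion.

→ The model knows "where and when this memory came from"
A positional encoding called document-wise RoPE lets the model natively understand document boundaries and temporal order.

→ Fragmented information can still be chained together for reasoning
A Memory Interleaving mechanism lets the model do multi-hop reasoning across memory fragments scattered in different places. Instead of finding just one relevant record, it strings the clues into a chain.

The results?
· Scaling from 16K to 100 million tokens, accuracy drops by less than 9%
· A 4B-parameter MSA model beats top-tier 235B-class RAG systems on long-context benchmarks
· 100-million-token inference runs on just 2 A800 GPUs. This isn't lab-only; it's a cost a startup can afford.

Put simply, earlier large models were brilliant geniuses with goldfish memory. What MSA aims to do is let them truly "remember".

We've put it on GitHub. The algorithm folks worked hard on this, so a star would be much appreciated. 🌟👀🙏 github.com/EverMind-AI/MSA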
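The thread doesn't describe MSA's internals beyond the points above. As a rough, hypothetical sketch of how attention can "pick out what matters" at linear cost, the Python below implements generic top-k chunk-sparse attention: memory keys are grouped into fixed-size chunks, each chunk is summarized once by its mean key, and a query runs dense attention only over tokens in its `top_k` best-scoring chunks. The chunking scheme, mean-key summaries, and the names `build_chunk_summaries` and `chunk_sparse_attention` are assumptions for illustration, not MSA's actual architecture (see github.com/EverMind-AI/MSA for that).

```python
import math
from typing import List, Tuple

Vector = List[float]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def build_chunk_summaries(keys: List[Vector], chunk_size: int) -> List[Tuple[range, Vector]]:
    """Group memory tokens into fixed-size chunks and summarize each by its mean key.

    Computed once per memory, not once per query.
    """
    summaries = []
    for start in range(0, len(keys), chunk_size):
        idx = range(start, min(start + chunk_size, len(keys)))
        mean_key = [sum(keys[j][d] for j in idx) / len(idx) for d in range(len(keys[0]))]
        summaries.append((idx, mean_key))
    return summaries


def chunk_sparse_attention(
    query: Vector,
    keys: List[Vector],
    values: List[Vector],
    summaries: List[Tuple[range, Vector]],
    top_k: int,
) -> Tuple[Vector, List[int]]:
    """Dense attention restricted to the top_k chunks whose mean key best matches the query."""
    # Routing: one dot product per chunk, O(num_chunks * d).
    ranked = sorted(range(len(summaries)), key=lambda c: dot(query, summaries[c][1]), reverse=True)
    selected = sorted(ranked[:top_k])
    # Scaled dot-product attention over selected tokens only, O(top_k * chunk_size * d).
    token_ids = [j for c in selected for j in summaries[c][0]]
    scores = [dot(query, keys[j]) / math.sqrt(len(query)) for j in token_ids]
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    out = [sum(w * values[j][d] for w, j in zip(weights, token_ids)) for d in range(len(values[0]))]
    return out, selected


if __name__ == "__main__":
    keys = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [-1.0, 0.0], [-1.0, 0.0]]
    values = [[0.0], [0.0], [5.0], [7.0], [0.0], [0.0]]
    summaries = build_chunk_summaries(keys, chunk_size=2)
    print(chunk_sparse_attention([0.0, 1.0], keys, values, summaries, top_k=1))
```

With a fixed chunk size, the number of chunks grows in proportion to memory size, so per-query cost stays linear in memory. A production system would also index the chunk summaries rather than scan a plain list.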

A small spoiler: @EverMind will release another high-quality paper this week.

ByteDance also implemented attention over depth. They literally combined it with sequence attention.
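The tweet gives no details of ByteDance's design. As a generic, hypothetical illustration of what "attention over depth" can mean, the sketch below mixes one token's hidden states from all earlier layers with softmax attention, in place of a plain residual sum. The query vector is simply passed in here, whereas a real model would learn projections for it, and combining this with ordinary attention over the sequence is not shown.

```python
import math
from typing import List, Tuple

Vector = List[float]


def attention_over_depth(layer_states: List[Vector], query: Vector) -> Tuple[Vector, List[float]]:
    """Return a softmax-weighted mix of one token's states from earlier layers, plus the weights."""
    # Scaled dot-product score between the query and each layer's state.
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * h for q, h in zip(query, state)) for state in layer_states]
    # Numerically stable softmax over the depth axis (one weight per layer).
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Weighted sum of the layer states, dimension by dimension.
    dim = len(layer_states[0])
    mixed = [sum(w * state[d] for w, state in zip(weights, layer_states)) for d in range(dim)]
    return mixed, weights


if __name__ == "__main__":
    # Three earlier layers' states for a single token (toy 2-d vectors).
    states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    mixed, weights = attention_over_depth(states, query=[0.0, 2.0])
    print([round(w, 3) for w in weights], [round(x, 3) for x in mixed])
```

Unlike a residual stream, where every earlier layer contributes with a fixed weight of 1, the mixing weights here depend on the token's current query, so each token can choose which depths to draw from.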