We scaled training data attribution (TDA) methods ~1000x to find influential pretraining examples for thousands of queries in an 8B-parameter LLM over the entire 160B-token C4 corpus! medium.com/people-ai-rese…

Tolga Bolukbasi

@tolgab0

AI research/Gemini pretraining @GoogleDeepmind, PhD, opinions my own.

You misread. There had been multiple LLM projects within FAIR for years. Some were open sourced as research prototypes (e.g. OPT175B, Galactica, BlenderBot...). In mid-2022, FAIR started a large LLM project called Zetta, which was still going in late 2022 when ChatGPT came out. A small group at FAIR-Paris was working on theorem proving. They needed an LLM for their own purpose and thought Zetta was too big and not ready. They developed their own model, which eventually became Llama-1. What happened internally between Zetta and Llama is somewhat similar to what just happened between DeepSeek and the big US players: a small team of talented folks innovated and beat the large teams.

Our work on scaling training data attribution is out. There are a lot of insights in there; I especially like the distinction between attribution and influence. Thanks to our amazing student researcher Tyler for making this happen.

New Anthropic research paper: Many-shot jailbreaking. We study a long-context jailbreaking technique that is effective on most large language models, including those developed by Anthropic and many of our peers. Read our blog post and the paper here: anthropic.com/research/many-…

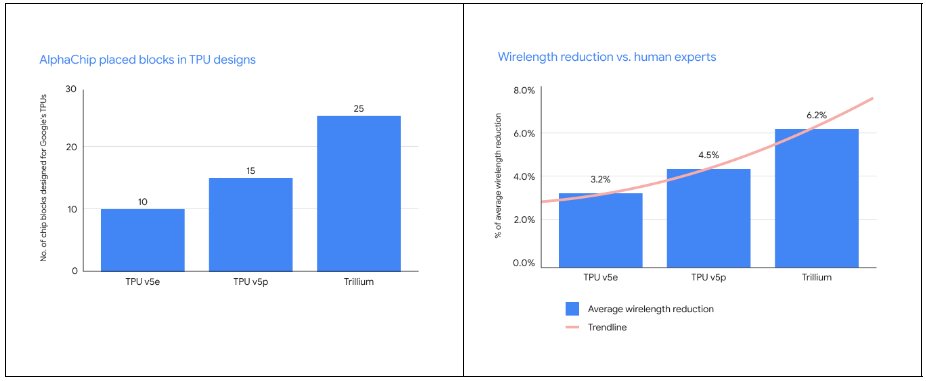

Our AI for chip design method AlphaChip has transformed the way we design microchips. ⚡ From helping to design state-of-the-art TPUs for building AI models to CPUs in data centers - its widespread impact can be seen across Alphabet and beyond. Find out more → dpmd.ai/3ZDRtYY

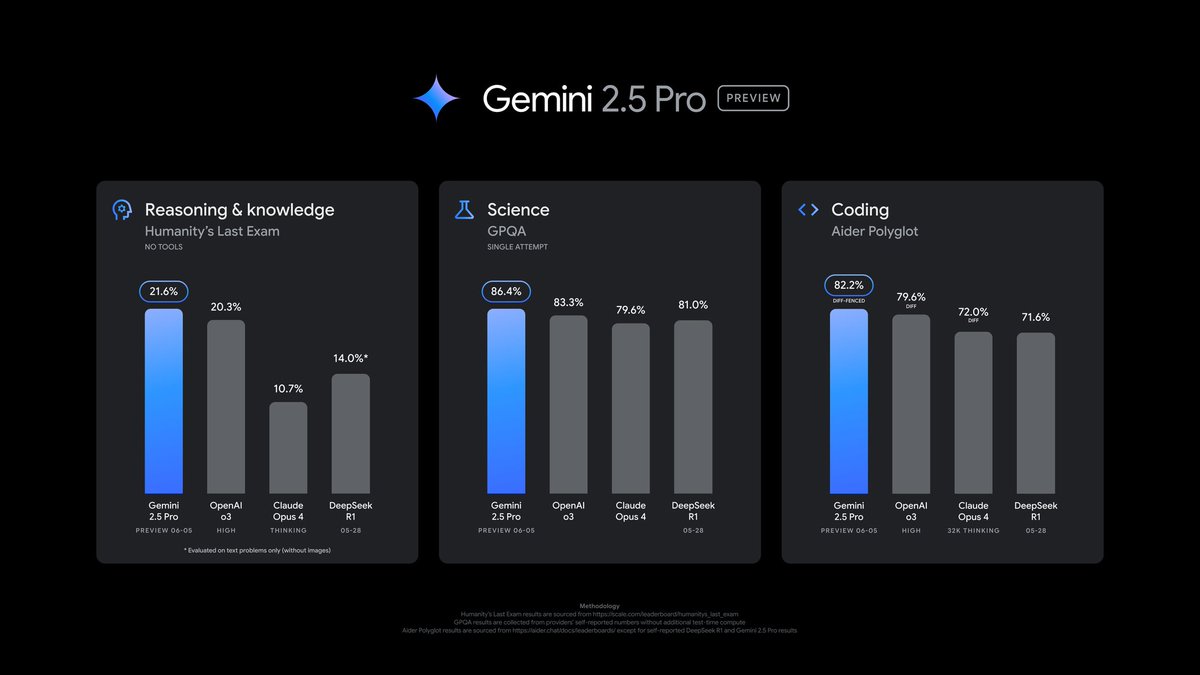

Exciting News from Chatbot Arena! @GoogleDeepMind's new Gemini 1.5 Pro (Experimental 0801) has been tested in Arena for the past week, gathering over 12K community votes. For the first time, Google Gemini has claimed the #1 spot, surpassing GPT-4o/Claude-3.5 with an impressive score of 1300 (!), and also achieving #1 on our Vision Leaderboard. Gemini 1.5 Pro (0801) excels in multi-lingual tasks and delivers robust performance in technical areas like Math, Hard Prompts, and Coding. Huge congrats to @GoogleDeepMind on this remarkable milestone!
Gemini (0801) Category Rankings:
- Overall: #1
- Math: #1-3
- Instruction-Following: #1-2
- Coding: #3-5
- Hard Prompts (English): #2-5
Come try the model and let us know your feedback! More analysis below👇

Gemini 1.5 for video understanding 🚀🚀

Introducing Veo: our most capable generative video model. 🎥 It can create high-quality, 1080p clips that can go beyond 60 seconds. From photorealism to surrealism and animation, it can tackle a range of cinematic styles. 🧵 #GoogleIO

Very excited to open-source LLM Comparator! This new #visualization tool lets you analyze LLM responses side-by-side. It’s been used for evaluating LLMs @Google, and we're proud to release it as part of Google's Responsible GenAI Toolkit. medium.com/people-ai-rese…