ToolKami

@tool_kami

https://t.co/Iyd2e53Ler: personal proactive AI assistant for empire builders.

Nothing humbles you like telling your OpenClaw “confirm before acting” and watching it speedrun deleting your inbox. I couldn’t stop it from my phone. I had to RUN to my Mac mini like I was defusing a bomb.

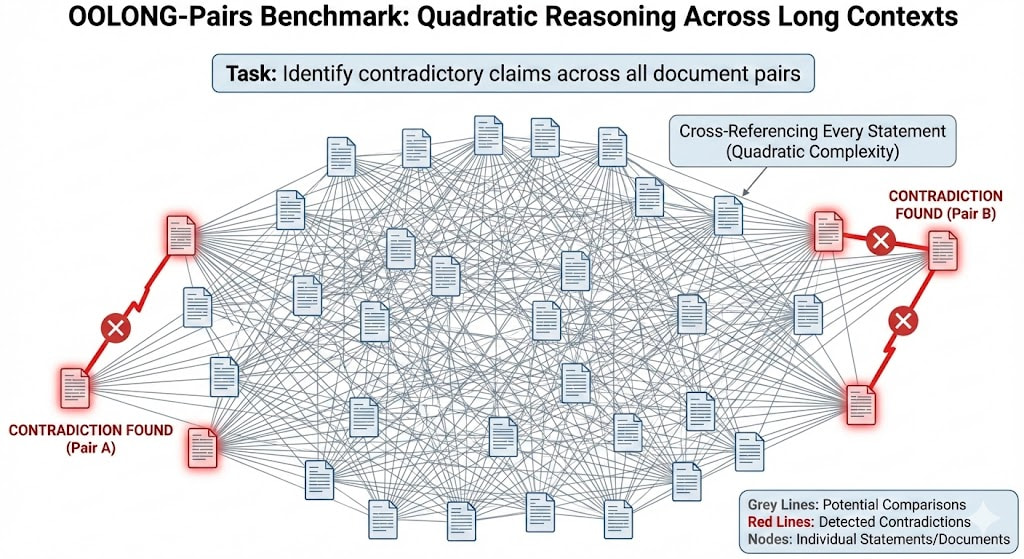

If you're building agentic tooling right now, there's only one finish line: the RL pipeline. Everything else is a race toward obsolescence.

Look at the landscape. A wave of startups has emerged around the atomic components of agentic systems:

- Agentic search (@trychroma, @turbopuffer, @pinecone)
- Browser automation (@browserbase and @browser_use)
- Code diffing tools (@morphllm and others)

Some of these companies exist for one reason: "today's language models aren't good enough." The models need scaffolding. They need specialized tools to compensate for their limitations. The brutal truth, which some people already know, is that scaffolding is only temporary.

We now have two futures and only one survival path:

- Scenario 1: The Great Simplification. LLMs become so capable that specialized tooling becomes unnecessary overhead. Why use a complex vector database when GPT-7 can just... remember, or perhaps use ripgrep, and it always works? Why use browser automation middleware when the model natively understands web interaction? Your carefully built infrastructure becomes legacy code.
- Scenario 2: The Integration. Frontier labs integrate specific tooling directly into their RL training pipelines. The tools that agents learn with become the tools they're optimized for. This is the only path to survival, and it's not about having the best features.

So the real competition isn't feature velocity. It's about becoming embedded in how the next generation of models learns to use tools at all. If OpenAI, Anthropic, or Google train their models with your infrastructure in the loop, you win. Your tool becomes the native interface. You become infrastructure. If they don't? You're building on quicksand.

Introducing SWE-grep and SWE-grep-mini: Cognition’s model family for fast agentic search at >2,800 TPS. Surface the right files to your coding agent 20x faster. Now rolling out gradually to Windsurf users via the Fast Context subagent – or try it in our new playground!