Parallax

87 posts

@tryParallax

build your own ai cluster. run open models across your machines.

Running a 400B model on iPhone! 0.6 t/s. Credit: @danveloper @alexintosh @danpacary @anemll

PinchBench results for Qwen3.5 27B using @UnslothAI K_XL quants, best of 3, thinking enabled. TL;DR: Q3_K_XL (14.5GB) or Q4_K_XL (18GB). While the overall "best" results showed little degradation, if you dig into mean/std, Q4_K_XL was the best overall at ~84% on average. Q3 seems viable, while Q2 is the lowest performing, of course.

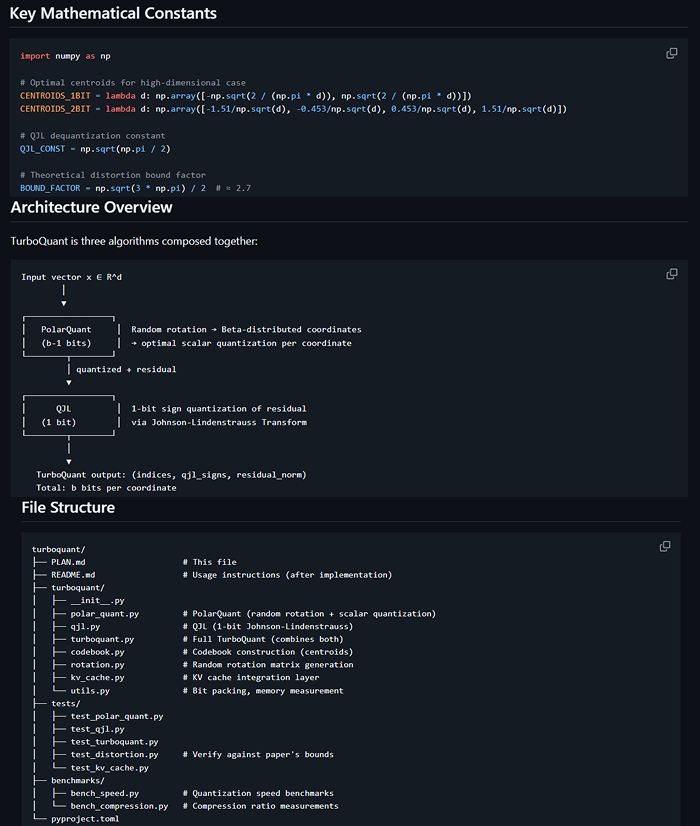

I am continuing my adventure into distributed AI systems with the Parallax scheduling strat from @Gradient_HQ. In this 37-min tutorial I go through:
- the heuristic used to make scheduling tractable
- the dynamic programming formulation
- filling GPUs with water
- shoving them into shelves

Today, we're taking Manus out of the cloud and putting it on your desktop. Introducing My Computer, the core feature of the new Manus Desktop app. It’s your AI agent, now on your local machine.

Beyond improvements in speed and cost, Echo-2 demonstrates a high standard of model performance. Benchmarking data across five math reasoning tasks shows Echo-2 achieving an average score of 35.75, compared to 35.30 for ByteDance’s verl. These results confirm that the architectural efficiencies of the Open Intelligence Stack (OIS) do not come at the expense of reasoning capabilities.
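
For a rough sense of how large that gap is, here is a minimal sketch that uses only the two averages quoted above. The variable names are illustrative. The post gives no per-task scores, so this says nothing about variance or statistical significance.

```python
# Averages across five math reasoning tasks, as quoted in the post above.
echo2_avg = 35.75
verl_avg = 35.30

# Absolute gap in benchmark points.
abs_gap = echo2_avg - verl_avg

# Relative gap, expressed as a percentage of the verl baseline.
rel_gap_pct = abs_gap / verl_avg * 100

print(f"absolute gap: {abs_gap:.2f} points")
print(f"relative gap: {rel_gap_pct:.2f}% over verl")
```

The difference is under half a point, which fits the post's framing that the two systems are roughly on par on these tasks.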