UmaShankar Arora

496 posts

@usarora

CGO @ Zordial – exploring AI, emerging tech & Salesforce. Sharing insights on generative AI, leadership & innovation. Empowering businesses with AI.

Jaipur, India · Joined February 2009

430 Following · 387 Followers

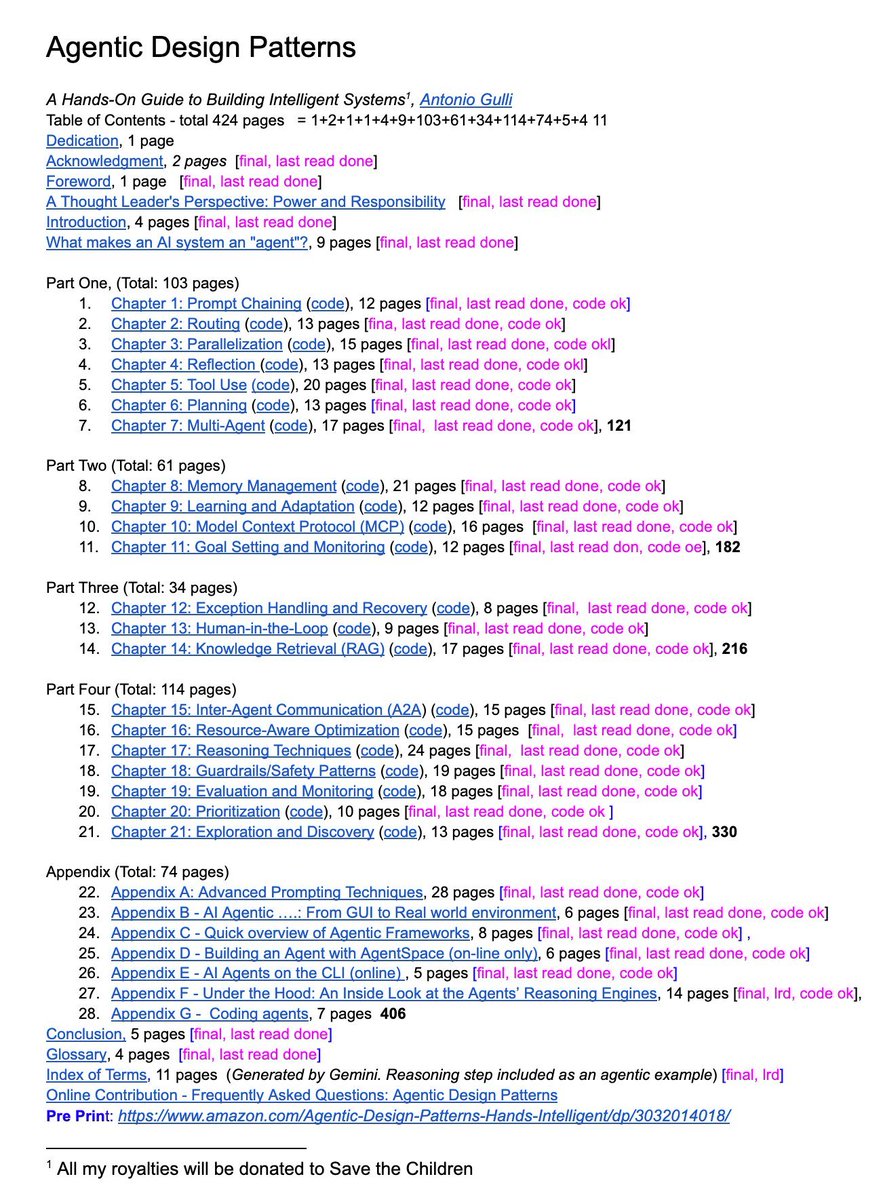

A senior Google engineer just dropped a 421-page doc called Agentic Design Patterns.

Every chapter is code-backed and covers the frontier of AI systems:

→ Prompt chaining, routing, memory

→ MCP & multi-agent coordination

→ Guardrails, reasoning, planning

This isn’t a blog post. It’s a curriculum. And it’s free.

🎁 What a wonderful surprise just before Holi.

I have the opportunity to collaborate with the Trailhead Salesforce team on Superbadge development.

@trailhead @justguilda @salesforce @gurugram_sfmc @JaipurTug #SuperBadgeSME #Superbadge #trailhead #marketingChampion

Claude Code + Nano Banana 2 is f*cking cracked 🤯

I built a skill inside Claude Code that writes JSON image prompts for Nano Banana 2, and the outputs look like they came from a professional photo shoot.

One plain-text prompt. Claude rewrites it as structured JSON with lighting, camera, composition, style, and negative prompts.

Then fires it off to Nano Banana 2.

All inside Claude Code.

Perfect for DTC brands and agencies who need high-volume ad creative without booking a shoot.

If you're using Nano Banana 2 for product shots and lifestyle images but every generation feels like pulling a slot machine lever — random lighting, inconsistent style, plastic skin, misspelled labels ...

This skill fixes the entire output:

→ You describe what you want in plain English

→ Claude rewrites it as a structured JSON prompt (lighting, camera angle, lens, depth of field, color grading — all of it)

→ Fires it to Nano Banana 2 via API

→ Saves the prompt + image in organized folders

→ You iterate on the style until it's dialed, then every output matches
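The post does not show the skill or its JSON schema, so the following is only a minimal Python sketch of the "structured prompt plus organized folders" steps above. Every field name, default value, and the `outputs/<campaign>/<date>/` layout is a hypothetical stand-in; in the described workflow Claude would fill these fields in, and the call to the image model is left out because its API is not given here.

```python
import json
from datetime import date
from pathlib import Path
from typing import Optional


def build_prompt(description: str, style: Optional[dict] = None) -> dict:
    """Wrap a plain-English description in a structured prompt.

    The schema below is an assumption for illustration, not the author's.
    """
    prompt = {
        "subject": description,
        "lighting": "soft natural window light",
        "camera": {"angle": "eye level", "lens": "85mm", "depth_of_field": "shallow"},
        "composition": "rule of thirds",
        "color_grading": "warm, muted",
        "negative_prompt": ["plastic skin", "misspelled labels"],
    }
    # Style overrides let a dialed-in look be reused across generations.
    prompt.update(style or {})
    return prompt


def save_prompt(prompt: dict, campaign: str, name: str,
                root: Path = Path("outputs")) -> Path:
    """Save the prompt as JSON under root/campaign/YYYY-MM-DD/name.json."""
    folder = root / campaign / date.today().isoformat()
    folder.mkdir(parents=True, exist_ok=True)
    path = folder / f"{name}.json"
    path.write_text(json.dumps(prompt, indent=2), encoding="utf-8")
    return path
```

Keeping the style overrides in one dictionary is what would make outputs consistent: once a look works, the same overrides can be passed to every later `build_prompt` call.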

No more slot machine prompting.

No more inconsistent brand imagery.

No more burning credits on unusable generations.

What you get:

- Photo-realistic product shots and lifestyle images on demand

- Full control over style, lighting, composition, and camera settings

- Saved JSON prompts you can reuse across every campaign

- A skill that gets smarter the more feedback you give it

Built 100% in Claude Code with a custom skill + Python scripts.

I put together a full playbook showing the exact skill, the JSON schema, and the workflow to set this up yourself.

Want the full playbook?

> Like this post

> Comment "BANANA"

And I'll send it over (must be following so I can DM)

UmaShankar Arora retweeted

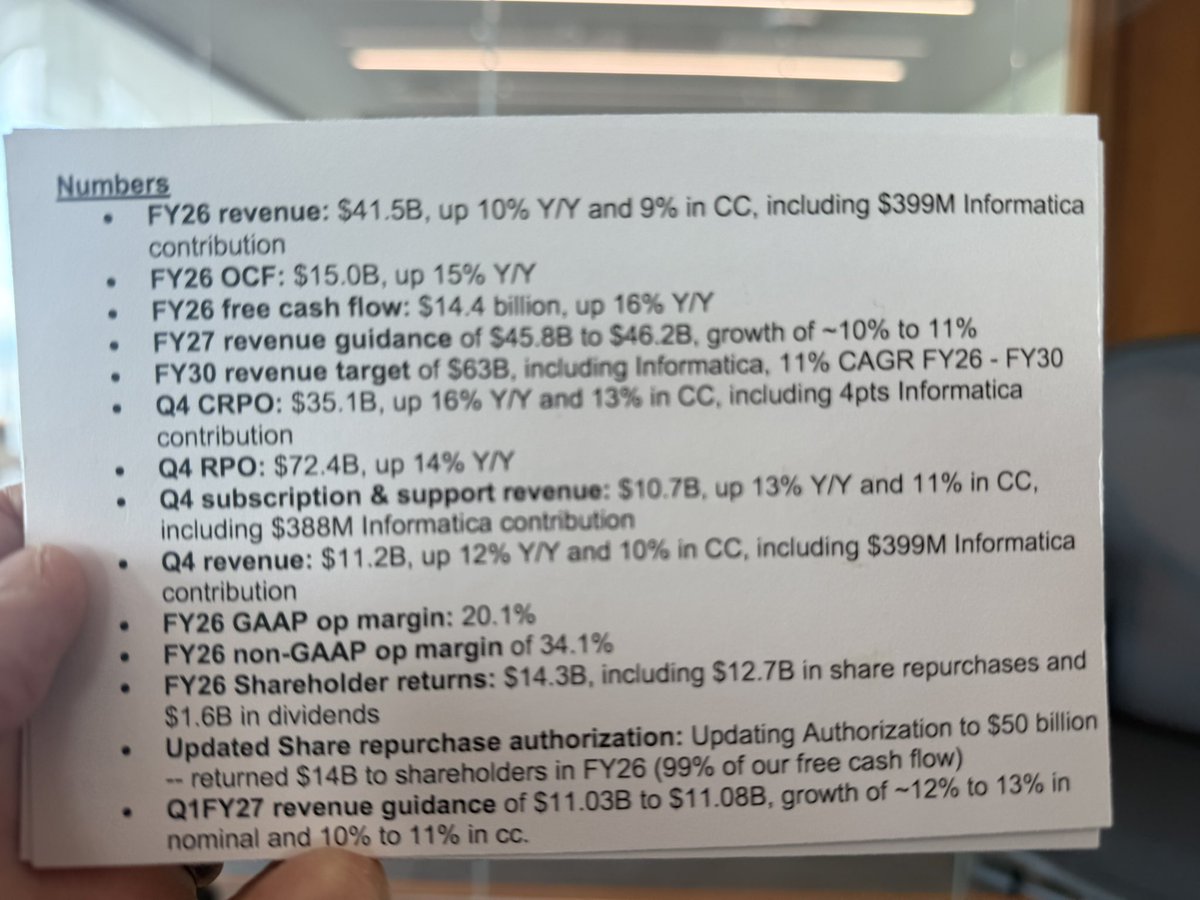

Huge congrats to the @Salesforce Ohana on an epic FY26! 🚀 $41.5B revenue (up 10% Y/Y), $15B OCF (up 15%), $14.4B FCF (up 16%). FY27 guidance: $44.5B-$46.2B revenue (~10-14% growth). Crushing it with 99% FCF returned & $65B+ FY30 target. Mahalo! investor.salesforce.com/financials/qua…

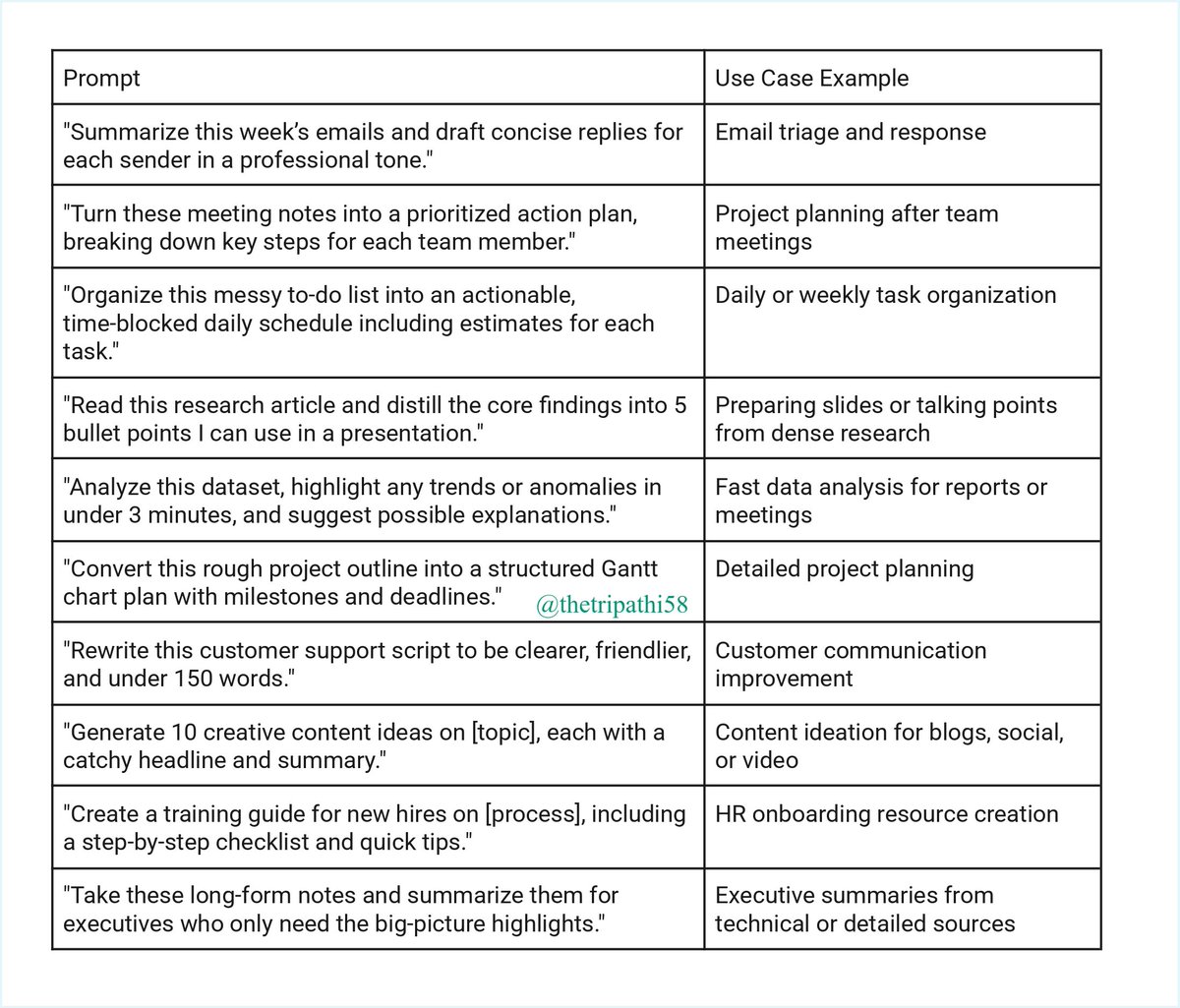

Most people use Claude like a chatbot 😱

I use it like a startup team.

I built 21 Claude Mega Prompts for:

• Idea validation

• AI agent design

• Product Hunt launches

• Viral content

• Growth strategy

These are execution-level frameworks — not basic prompts.

Giving it away FREE.

You MUST follow me so I can DM you

Comment “CLAUDE”

I’ll send the doc

Every CXO I meet says, "We're exploring AI." Matt nails why that's not enough anymore. The gap between exploring and implementing is where careers and companies get left behind. If you run a $5M+ business and haven't embedded AI into your operations yet, this is your 10-minute warning.

Matt Shumer @mattshumer_

UmaShankar Arora retweeted

When you are from Rajasthan and you travel to a beach - and you are missing home!

And then all of a sudden - you see a camel and you feel it's home again!😄

#Beach #Rajasthani

🐪🏖️☀️

Nearly 3 years ago, I started my little startup @vanshivtech, focused on @salesforce, in my makeshift home office in Jaipur, a Tier-2 city in India. And earlier this week, we celebrated our biggest milestone to date: as of 13th October, 2025, Vanshiv is NOW a registered company in the US! 🇺🇸

What's more - we've chosen Silicon Valley in California as our US base and we'll soon be hiring for multiple roles here.

And what better than celebrating with our Indian flag at Moscone Center to mark this occasion! 🇮🇳

It does not matter where you start, what matters is how you plan your journey and where it takes you!

#startup #Startupjourney #Founder #Salesforce

@gauravkheterpal That’s true. I still remember when I got my first laptop and the feeling I had. These small things go a really long way.

We just upgraded one of our best developers from a Windows machine to a Mac as a surprise & this is the note he sent me!

Sometimes, what's trivial for you may mean the world to others!

For some, it’s just a laptop.

For others, it’s a symbol of how far they’ve come. ❤️

As a founder, always back your team. They do their best for you!

One skill is missing here: communication.

GREG ISENBERG @gregisenberg

"the core skills for 2030" agree/disagree?

@AndrewYNg Great analysis, Andrew. The shift toward capital-intensive AI means compensation models are evolving alongside infrastructure spending. How do you foresee startups balancing salary and compute budgets as models grow larger?

Recently Meta made headlines with unprecedented, massive compensation packages for AI model builders exceeding $100M (sometimes spread over multiple years). With the company planning to spend $66B-72B this year on capital expenses such as data centers, a meaningful fraction of which will be devoted to AI, from a purely financial point of view, it’s not irrational to spend a few extra billion dollars on salaries to make sure this hardware is used well.

A typical software-application startup that’s not involved in training foundation models might spend 70-80% of its dollars on salaries, 5-10% on rent, and 10-25% on other operating expenses (cloud hosting, software licenses, marketing, legal/accounting, etc.). But scaling up models is so capital-intensive, salaries are a small fraction of the overall expense. This makes it feasible for businesses in this area to pay their relatively few employees exceptionally well. If you’re spending tens of billions of dollars on GPU hardware, why not spend just a tenth of that on salaries? Even before Meta’s recent offers, salaries of AI model trainers have been high, with many being paid $5-10M/year, although Meta has raised these numbers to new heights.

Meta carries out many activities, including running Facebook, Instagram, WhatsApp, and Oculus. But the Llama/AI-training part of its operations is particularly capital-intensive. Many of Meta’s properties rely on user-generated content (UGC) to attract attention, which is then monetized through advertising. AI is a huge threat and opportunity to such businesses: If AI-generated content (AIGC) substitutes for UGC to capture people's attention to sell ads against, this will transform the social-media landscape.

This is why Meta — like TikTok, YouTube, and other social-media properties — is paying close attention to AIGC, and why making significant investments in AI is rational. Further, when Meta hires a key employee, not only does it gain the future work output of that person, but it also potentially gets insight into a competitor’s technology, which also makes its willingness to pay high salaries a rational business move (so long as it does not adversely affect the company’s culture).

The pattern of capital-intensive businesses compensating employees extraordinarily well is not new. For example, Netflix expects to spend a huge $18B this year on content. This makes the salary expense of paying its 14,000 employees a small fraction of the total expense, which allows the company to routinely pay above-market salaries. Its ability to spend this way also shapes a distinctive culture that includes elements of “we’re a sports team, not a family” (which seems to work for Netflix but isn’t right for everyone). In contrast, a labor-intensive manufacturing business like Foxconn, which employs over 1 million people globally, has to be much more price-sensitive in what it pays people.

Even a decade ago, when I led a team that worked to scale up AI, I built spreadsheets that modeled how much of my budget to allocate toward salaries and how much to allocate toward GPUs (using a custom model for how much productive output N employees and M GPUs would lead to, so I could optimize N and M subject to my budget constraint). Since then, the business of scaling up AI has skewed the spending significantly toward GPUs.
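The spreadsheet model itself is not described, so the sketch below only illustrates the shape of the problem: choose N employees and M GPUs to maximize modeled output under a fixed budget. The Cobb-Douglas output function, the `labor_share` exponent, and all cost figures are assumptions made for illustration, not the model referred to above.

```python
def best_allocation(budget, salary, gpu_cost, labor_share=0.3):
    """Brute-force search over headcount N; the rest of the budget buys M GPUs.

    Output is modeled as N**labor_share * M**(1 - labor_share), a standard
    Cobb-Douglas form used here purely as a stand-in production function.
    Returns (N, M, modeled_output).
    """
    best = (0, 0, 0.0)
    max_n = int(budget // salary)
    for n in range(1, max_n + 1):
        # Whatever is not spent on salaries goes to whole GPUs.
        m = int((budget - n * salary) // gpu_cost)
        if m < 1:
            break  # m only shrinks as n grows, so no later n is feasible
        output = n ** labor_share * m ** (1 - labor_share)
        if output > best[2]:
            best = (n, m, output)
    return best


# Hypothetical inputs: $10M budget, $500k per employee, $30k per GPU.
n, m, output = best_allocation(10_000_000, 500_000, 30_000)
print(f"employees={n}, gpus={m}, modeled output={output:.1f}")
```

Under this stand-in model, the share of budget that goes to salaries tracks `labor_share`, so lowering that exponent pushes the optimum toward GPUs, which matches the direction of the shift described above.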

I’m happy for the individuals who are getting large pay packages. And regardless of any individual's pay, I’m grateful for the contributions of everyone working in AI. Everyone in AI deserves a good salary, and while the gaps in compensation are growing, I believe this reflects the broader phenomenon that developers who work in AI, at this moment in history, have an opportunity to make a huge impact and do world-changing work.

[Original text: deeplearning.ai/the-batch/issu… ]
