Rambone

155 posts

@vinrambone

Bone Agent, Open Source - Software and agent enthusiast

My name is Ahmad and I have a Compute problem

Introducing Grok Voice Think Fast 1.0
A state-of-the-art voice model built for complex, multi-step workflows with snappy responses and high accuracy. It takes the top spot on the Tau Voice Bench and handles real-world messiness like noise, accents, and interruptions better than any other model in the world.
x.ai/news/grok-voic…

My 4090 went from 26 -> 154 tok/s Qwen 3.6 27B🤯 Same GPU. Same Q4_K_M. No FP8, no extra quant. The unlock: ik_llama.cpp + speculative decoding using Qwen3-1.7B as the draft model. 85% acceptance rate. Full config + benchmarks 👇🏻

500+ likes in 28 mins. On their way to be the fastest model ever to get to #1 trending on HF! huggingface.co/deepseek-ai/De…

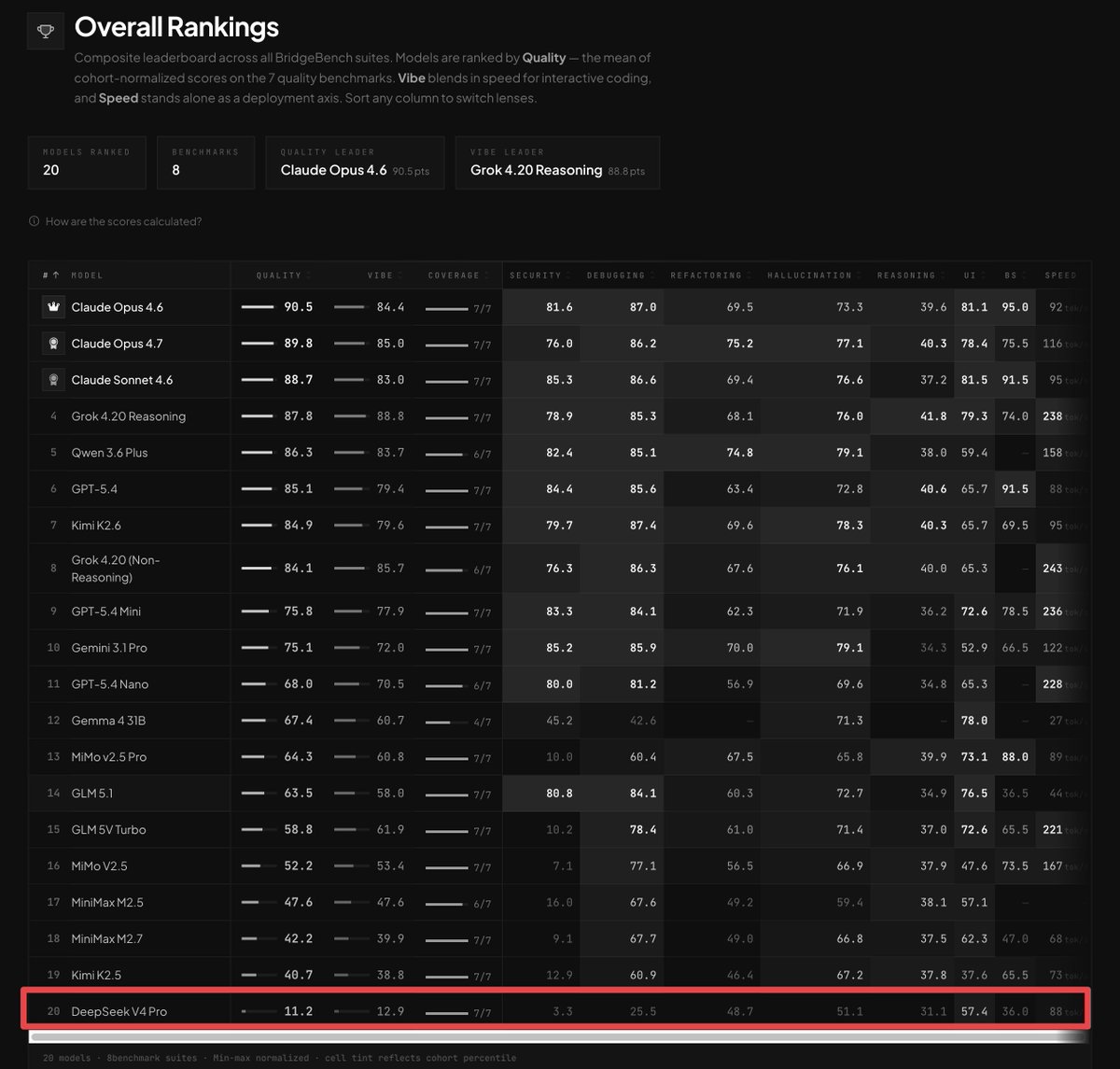

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.
🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.
🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.
Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!
📄 Tech Report: huggingface.co/deepseek-ai/De…
🤗 Open Weights: huggingface.co/collections/de…
1/n

recommended reading. cool they are fixing things. but it's also a reason i switched away from CC. no control over the harness means having to wait for them to fix things. the model didn't change. the harness did.

@TheAhmadOsman Would be nice to see the list with the corresponding cost to run each one locally?

New in the Codex app:
- GPT-5.5
- Browser control
- Sheets & Slides
- Docs & PDFs
- OS-wide dictation
- Auto-review mode

Enjoy!