WeiChen

29 posts

@wei_chen_ai

PhD @SCUT1918, intern @RIKEN_AIP_EN. Probabilistic modeling & generation, post-training, and their applications to trustworthy and safe AI

🎉 Our paper on preference optimization has been accepted to ICML 2026! We unify entangled & disentangled objectives via an incentive–score decomposition and derive the Disentanglement Band for ideal training dynamics: suppress the loser while preserving the winner. #ICML2026

📢Curious why your LLM behaves strangely after long SFT or DPO? We offer a fresh perspective—consider doing a "force analysis" on your model’s behavior. Check out our #ICLR2025 Oral paper: Learning Dynamics of LLM Finetuning! (0/12)

TIL the importance of diligent ACs in the peer-review process (again), unfortunately as an author. #ICML2026 No response to our repeated appeals against a reviewer's policy/guideline violation. On top of that, the AC committed the same violation as the reviewer I flagged. What's next?

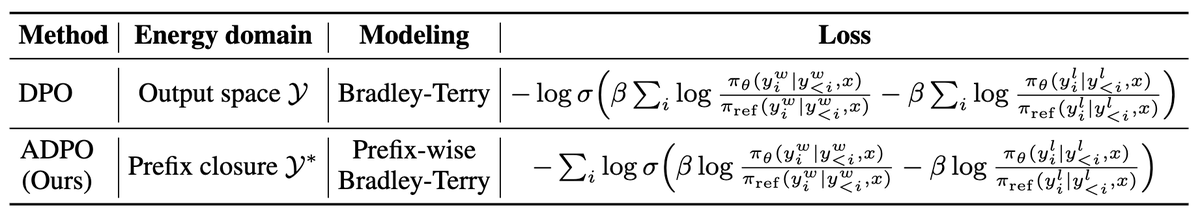

Two first-author papers accepted to #ICML2026 🇰🇷!

- Human-like multi-image spatial reasoning in multimodal LLMs (@silviasetitech @sponddd @dai0NLP Prof. Inoue @chokkanorg)
- Autoregressive direct preference optimization (Mahiro Ukai @MasahiroKaneko_ @chokkanorg Prof. Inoue)

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length.

🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models.

🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice.

Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today!

📄 Tech Report: huggingface.co/deepseek-ai/De…

🤗 Open Weights: huggingface.co/collections/de…

1/n
