David

@havetorunalot

HW4 Tesla Model S, HW4 Model Y & Solar owner. An original 💯score #FSDBeta Tester and still a long $TSLA investor. Fighting Tesla FUD & dishonest fanboy hype.

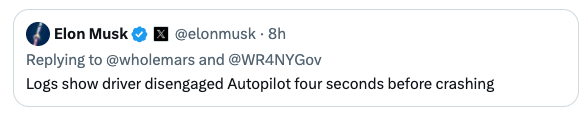

'TERRIFYING': Dashcam video shows the moment a Tesla Cybertruck, allegedly operating in self-driving mode, nearly sent a Houston mom and her infant off a bridge before violently crashing into an overpass barrier. The woman claims she suffered multiple injuries from the incident and is now suing the automaker for $1 million.

Terafab may be the most essential vertical integration Tesla has ever undertaken, and it is truly non-optional. It will take years to build and will test even Elon’s speedrunning abilities to the limit, but that won’t stop him from trying.

The likely breakthrough is an overhaul of the facility’s cleanroom model. By moving wafers in sealed pods with localized micro-environments, the fab no longer needs a monolithic ultra-clean space. Elon’s line about “eating cheeseburgers and smoking cigars” on the fab floor isn’t silly; it reflects the practical reality of a radically simpler, cheaper, faster approach that could finally change the economics of chipmaking.

All of this is forced by the brutal “pinch” in chip supply. Tesla must produce on the order of 100–200 billion AI chips per year just to saturate its roadmap. That volume powers:

- FSD cars & Robotaxis (tens of millions of vehicles needing AI5 inference for near-perfect autonomy)
- Physical Optimus (scaling from thousands today to millions per year, each requiring AI5/AI6-level compute)
- Digital Optimus (the new xAI-Tesla software agents for digital/office automation, running massive inference clusters)
- Space-based data centers (AI7/Dojo3 orbital compute for GW-scale training and inference beyond Earth’s limits)

AI5 delivers the ~10× leap for vehicles and early robots; AI6 shifts focus to Optimus and terrestrial data centers; AI7 goes orbital. No external foundry (TSMC, Samsung, etc.) can deliver that scale or timeline, hence the Terafab launch. Without it, the entire robotics and autonomy future hits a brick wall. Terafab isn’t optional; it’s the only way forward.

Tesla says FSD was off before Cybertruck crash — but the video tells a different story electrek.co/2026/03/18/tes… by @fredlambert

Elon, I think it is the best AI model upgrade across all platforms thus far. I have convinced the last OpenAI holdout clients to move to X.ai APIs. The sheer lifting power of @Grok Heavy is stunning, and it closed the last holdout. Number two is so far behind that we will need a space telescope to spot it. Thank you and the team!