Felix Faustus (@FelixFaustus)

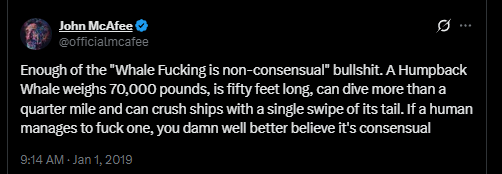

A woman is unconscious. The room is full of future doctors. Consent is… implied.

That’s not just a medical procedure. That’s a systems failure wearing a lab coat. And the reason it feels unsettling isn’t just the moral intuition. It’s the pattern. Because when something like this appears in a headline, the instinct is to treat it as an isolated scandal, a bad actor, or an outdated practice. But when you start seeing numbers, timelines, and institutional participation, the story stops looking like an anomaly and starts looking like something structural.

This is where Bayesian thinking kicks in. If millions of procedures occur under anesthesia, and explicit consent isn’t consistently obtained, then the system isn’t failing randomly. Random failures are rare, scattered, and corrected quickly. But persistent patterns across institutions and years suggest something else. They suggest incentives. They suggest normalization. They suggest a process that quietly tolerates outcomes that would otherwise trigger alarm.
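To make that update concrete, here is a minimal sketch of the comparison between a "random failure" hypothesis and a "structural" one. Every number in it is a hypothetical placeholder chosen for illustration, not data: the prior, the two per-institution rates, and the count of institutions are all assumptions, as are the function names.

```python
from math import comb


def binomial_likelihood(k: int, n: int, p: float) -> float:
    """Probability of seeing the practice at exactly k of n institutions,
    if each institution independently shows it with probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def posterior_structural(
    k: int,
    n: int,
    prior_structural: float,
    p_random: float,
    p_structural: float,
) -> float:
    """Posterior probability of the 'structural' hypothesis via Bayes' rule.

    All inputs are illustrative assumptions:
      p_random      - chance an institution shows the practice if failures are isolated
      p_structural  - chance an institution shows it if the system tolerates it
    """
    like_structural = binomial_likelihood(k, n, p_structural)
    like_random = binomial_likelihood(k, n, p_random)
    evidence = (
        prior_structural * like_structural
        + (1 - prior_structural) * like_random
    )
    return prior_structural * like_structural / evidence


if __name__ == "__main__":
    # Hypothetical setup: 10 institutions examined, a skeptical 10% prior
    # that the problem is structural.
    for k in (1, 3, 6):
        p = posterior_structural(
            k, n=10, prior_structural=0.1, p_random=0.05, p_structural=0.5
        )
        print(f"{k} of 10 institutions affected -> P(structural) = {p:.3f}")
```

Under these made-up inputs, a single affected institution leaves the "random" story comfortably ahead, while repeated findings across institutions push the posterior sharply toward "structural". The specific values matter less than the shape of the update: scattered failures and persistent ones are not the same kind of evidence.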

Somewhere along the way, competing pressures start shaping behavior. Training throughput begins to matter more than patient autonomy. Institutional liability becomes a concern, but only insofar as it’s manageable within policy language. Checklists replace moral clarity. “Training purposes.” “Implied consent.” “Standard practice.” These phrases don’t just describe reality. They reshape it. They soften friction. They make uncomfortable practices administratively legible.

And that’s how normalization happens. Not through overt malice, but through bureaucratic gravity. Each individual actor may assume the practice is approved, standard, or necessary. Each institution inherits precedent. Each policy inherits ambiguity. And slowly, what would shock people outside the system becomes routine inside it.

The darker Bayesian inference isn’t that people are evil. It’s that systems stabilize around what they reward, tolerate, or fail to measure. If something morally uncomfortable persists across institutions, across years, and across lawsuits, then it likely survives because it solves some institutional problem. Perhaps it accelerates training. Perhaps it reduces friction. Perhaps it simply persists because no one owns the responsibility to stop it.

This is the difference between individual blame and systems thinking. Bayesian reasoning doesn’t ask, “Are doctors monsters?” It asks, “What conditions make this outcome more likely?” And once you frame it that way, the answer becomes less dramatic but more unsettling. Diffused accountability. Ambiguous consent language. Training incentives. Institutional inertia. None of these require bad people. They only require a system that doesn’t strongly penalize the outcome.

That’s why the real scandal isn’t that it happened once. It’s that it kept happening quietly, bureaucratically, and predictably. Because predictable harm is rarely accidental. Predictable harm usually means the system has adapted to tolerate it.

System checks? More like system shrugs.

And that’s the Bayesian conclusion: when harm repeats, look past individuals. Audit the incentives. Audit the norms. Audit the silence. Because sometimes the system doesn’t fail. Sometimes it works exactly as designed.

#medicine #science #audit