MD RIFAT

Storing large files across a distributed network sounds easy at first, but it quickly gets complicated. Each node has only limited storage, and none of them knows what the others are holding. That is the core challenge this paper dives into: how to distribute data so that a downloader can recover the full file while connecting to as few storage locations as possible.
There's no coordination here. Every node acts on its own. That makes things harder, but also far more realistic for large-scale systems. The authors look at three different approaches.
The first is uncoded storage. It's simple: nodes just store random pieces of the file. Easy to set up, no complexity. But it doesn't perform well. Overlaps are frequent and some pieces end up missing, so the downloader has to connect to more and more nodes just to fill the gaps.
Then comes traditional erasure coding, like Reed-Solomon. This improves things quite a bit. The file is encoded so that any large enough subset of the coded pieces is sufficient to reconstruct everything. Much more efficient. Still, there's a tradeoff: it requires a significant amount of extra storage at the central server before anything gets distributed, which adds overhead.
The third approach is where things start to stand out: random linear coding. Instead of storing actual chunks, nodes store random linear combinations of the data. It sounds abstract, but the impact is clear. Almost any group of nodes will provide useful, independent information. Because of that, the downloader can rebuild the entire file with high probability after connecting to nearly the minimum number of nodes. No coordination needed.
So what's the takeaway? Uncoded storage is simple but inefficient. Erasure coding is effective but comes with a storage cost. Random linear coding strikes the balance: it delivers near-optimal performance without needing extra centralized storage.
In a way, it makes a completely uncoordinated system behave as if everything were perfectly organized. That's a powerful idea for distributed storage and peer-to-peer networks. @get_optimum @cryptooflashh
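To make the storage comparison above concrete, here is a minimal Python sketch under simplifying assumptions that are not from the paper: the file is split into k chunks that are numbers in the prime field GF(257), each node stores exactly one piece, and the downloader contacts random nodes one at a time. In the coded case each node independently stores one random linear combination plus its coefficients; in the uncoded case each node stores one randomly chosen chunk. The field size, node model, and helper names (`store_on_node`, `solve_mod_p`, `download_coded`, `download_uncoded`) are hypothetical.

```python
import random

P = 257  # assumed prime field size; the post does not specify one

def store_on_node(chunks, rng):
    """One node acting alone: keep a random linear combination of the k chunks."""
    coeffs = [rng.randrange(P) for _ in chunks]
    value = sum(c * x for c, x in zip(coeffs, chunks)) % P
    return coeffs, value

def solve_mod_p(pieces, k):
    """Gauss-Jordan elimination mod P; returns the k chunks, or None if rank < k."""
    m = [list(coeffs) + [value] for coeffs, value in pieces]
    row = 0
    for col in range(k):
        pivot = next((r for r in range(row, len(m)) if m[r][col]), None)
        if pivot is None:
            return None  # collected pieces are not yet independent enough
        m[row], m[pivot] = m[pivot], m[row]
        inv = pow(m[row][col], P - 2, P)  # modular inverse via Fermat's little theorem
        m[row] = [v * inv % P for v in m[row]]
        for r in range(len(m)):
            if r != row and m[r][col]:
                f = m[r][col]
                m[r] = [(a - f * b) % P for a, b in zip(m[r], m[row])]
        row += 1
    return [m[i][k] for i in range(k)]

def download_coded(chunks, rng):
    """Contact random nodes (each generated on demand) until the file decodes."""
    k, pieces = len(chunks), []
    while True:
        pieces.append(store_on_node(chunks, rng))
        if len(pieces) >= k:
            decoded = solve_mod_p(pieces, k)
            if decoded is not None:
                return len(pieces), decoded

def download_uncoded(k, rng):
    """Each contacted node holds one random uncoded chunk (a coupon collector)."""
    seen, contacted = set(), 0
    while len(seen) < k:
        seen.add(rng.randrange(k))
        contacted += 1
    return contacted

rng = random.Random(1)
k = 20
chunks = [rng.randrange(P) for _ in range(k)]
n_coded, decoded = download_coded(chunks, rng)
assert decoded == chunks
print("coded nodes contacted:", n_coded)
print("uncoded nodes contacted:", download_uncoded(k, rng))
```

With a field this large, the first k coded pieces are almost always independent, so the coded downloader typically stops at or just above k nodes, while the uncoded one behaves like a coupon collector and needs about k·H_k (roughly k ln k) nodes on average. Erasure coding is not simulated here.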

Day 3/5 of Explaining the Vision of @twin3_ai With Hand-Drawn Art🩶
Your digital twin cannot just exist; it must understand. It needs a framework to translate your human intuition, values, and knowledge into a language the machine can navigate. Let me introduce you to the 256 Dimensions Twin Matrix. In the realm of AI, data is a coordinate in high-dimensional space. The Blueprint is the architectural layer where your essence is categorized and weighted. Your digital self lives in high-dimensional embeddings. To capture the nuance of a human personality, we need more than likes and dislikes. We need a spectrum that captures the subtle gradients of how you think, work, and create. Every preference, skill, and memory is assigned a dimension. This ensures your AI agent doesn't just mimic you; it aligns with you.

3 Types of Extraction Mechanisms, Explained Simply
In the evolving world of Web3, understanding how value is extracted is key 👇
Bundling: Transactions are grouped together for efficiency, but control over their ordering can create hidden advantages.
PVP / VAMPS: A competitive battlefield where participants extract value directly from each other.
Dev Extraction: Developers design systems where they capture value through fees, tokenomics, or protocol mechanics.
The game isn't just about participation anymore. It's about understanding where value flows and who captures it. Stay sharp, stay informed. @Zerg_App

Day 86 Decentralize to Scale
𝗖𝗼𝗱𝗶𝗻𝗴 𝘃𝘀 𝗥𝗼𝘂𝘁𝗶𝗻𝗴: 𝗧𝗵𝗲 𝗜𝗻𝘁𝗲𝗿𝗻𝗲𝘁 𝗨𝗽𝗴𝗿𝗮𝗱𝗲 𝗠𝗼𝘀𝘁 𝗣𝗲𝗼𝗽𝗹𝗲 𝗡𝗲𝘃𝗲𝗿 𝗡𝗼𝘁𝗶𝗰𝗲𝗱
Optimum @get_optimum is building a high-performance decentralized networking layer for Web3, designed to make data flow faster and more efficiently across blockchains like Ethereum.
For decades, the internet has relied on routing: sending packets from one point to another, step by step. If a packet is lost, it must be resent. Simple, but inefficient at scale.
Now imagine coding instead of routing. With 𝐑𝐚𝐧𝐝𝐨𝐦 𝐋𝐢𝐧𝐞𝐚𝐫 𝐍𝐞𝐭𝐰𝐨𝐫𝐤 𝐂𝐨𝐝𝐢𝐧𝐠 (𝐑𝐋𝐍𝐂), data isn't sent as fixed packets. Instead, nodes combine (code) data into new packets before forwarding them. Each coded packet carries partial information about the whole dataset. This means that even if some packets are lost, the receiver can still recover the original data once it has collected enough independent coded packets. No need for exact retransmissions.
Example: instead of waiting for one specific missing file chunk, you receive multiple coded pieces that can rebuild it anyway.
This shift from routing to coding reduces delays, saves bandwidth, and improves reliability, especially in large, dynamic networks. For Ethereum, this is a game changer:
♾️ Faster block propagation
♾️ Lower latency under congestion
♾️ Higher throughput without centralization
Optimum doesn't just move data, it upgrades how data moves.
𝐋𝐞𝐚𝐫𝐧 𝐦𝐨𝐫𝐞 𝐚𝐛𝐨𝐮𝐭 𝐎𝐩𝐭𝐢𝐦𝐮𝐦:
➔ Follow X @get_optimum
➔ Join Discord discord.gg/getoptimum
➔ Check Docs docs.getoptimum.xyz
• I am a volunteer contributor to Optimum
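The recoding step described above, where a node combines packets before forwarding them, can be sketched in Python. This is a minimal illustration over GF(2) (plain XOR) with one source, one relay, and one receiver; the post does not say which field, packet format, or topology Optimum uses, so `K`, `LOSS`, `combine`, and `decode` are hypothetical. Each packet carries a coefficient bitmask recording which source packets it mixes, and the relay forwards fresh random combinations of whatever it holds without decoding first.

```python
import random

K = 8        # hypothetical number of source packets in one generation
LOSS = 0.3   # hypothetical per-hop packet loss rate

def combine(packets, rng):
    """Random GF(2) combination of coded packets; each is (coefficient_mask, payload)."""
    while True:
        mask = payload = 0
        for m, p in packets:
            if rng.random() < 0.5:
                mask ^= m
                payload ^= p
        if mask:  # skip the useless all-zero combination
            return mask, payload

def decode(packets, k):
    """Gaussian elimination over GF(2); returns the k source payloads or None."""
    pivots = {}  # highest set bit -> (mask, payload)
    for mask, payload in packets:
        while mask:
            top = mask.bit_length() - 1
            if top not in pivots:
                pivots[top] = (mask, payload)
                break
            pm, pp = pivots[top]
            mask ^= pm
            payload ^= pp
    if len(pivots) < k:
        return None  # not enough independent packets yet
    # Back-substitute from bit 0 upward so each pivot mask becomes a single bit.
    for bit in range(k):
        m, p = pivots[bit]
        for lower in range(bit):
            if m >> lower & 1:
                m ^= pivots[lower][0]
                p ^= pivots[lower][1]
        pivots[bit] = (m, p)
    return [pivots[i][1] for i in range(k)]

rng = random.Random(7)
source = [rng.getrandbits(32) for _ in range(K)]
source_packets = [(1 << i, source[i]) for i in range(K)]  # packet i = unit vector e_i

relay_buffer, received, rounds = [], [], 0
while decode(received, K) is None:
    rounds += 1
    # Hop 1: the source emits a fresh coded packet, which may be lost.
    if rng.random() >= LOSS:
        relay_buffer.append(combine(source_packets, rng))
    # Hop 2: the relay recodes what it already holds, without decoding first.
    if relay_buffer and rng.random() >= LOSS:
        received.append(combine(relay_buffer, rng))

assert decode(received, K) == source
print(f"decoded after {rounds} rounds, {len(received)} packets received")
```

Because every recoded packet is a fresh random mix, a lost packet never has to be retransmitted as such; any later independent packet is just as useful. Over GF(2) a few more than K packets are usually needed; larger fields shrink that overhead at the cost of more arithmetic per packet.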

What do these two colors remind you of?

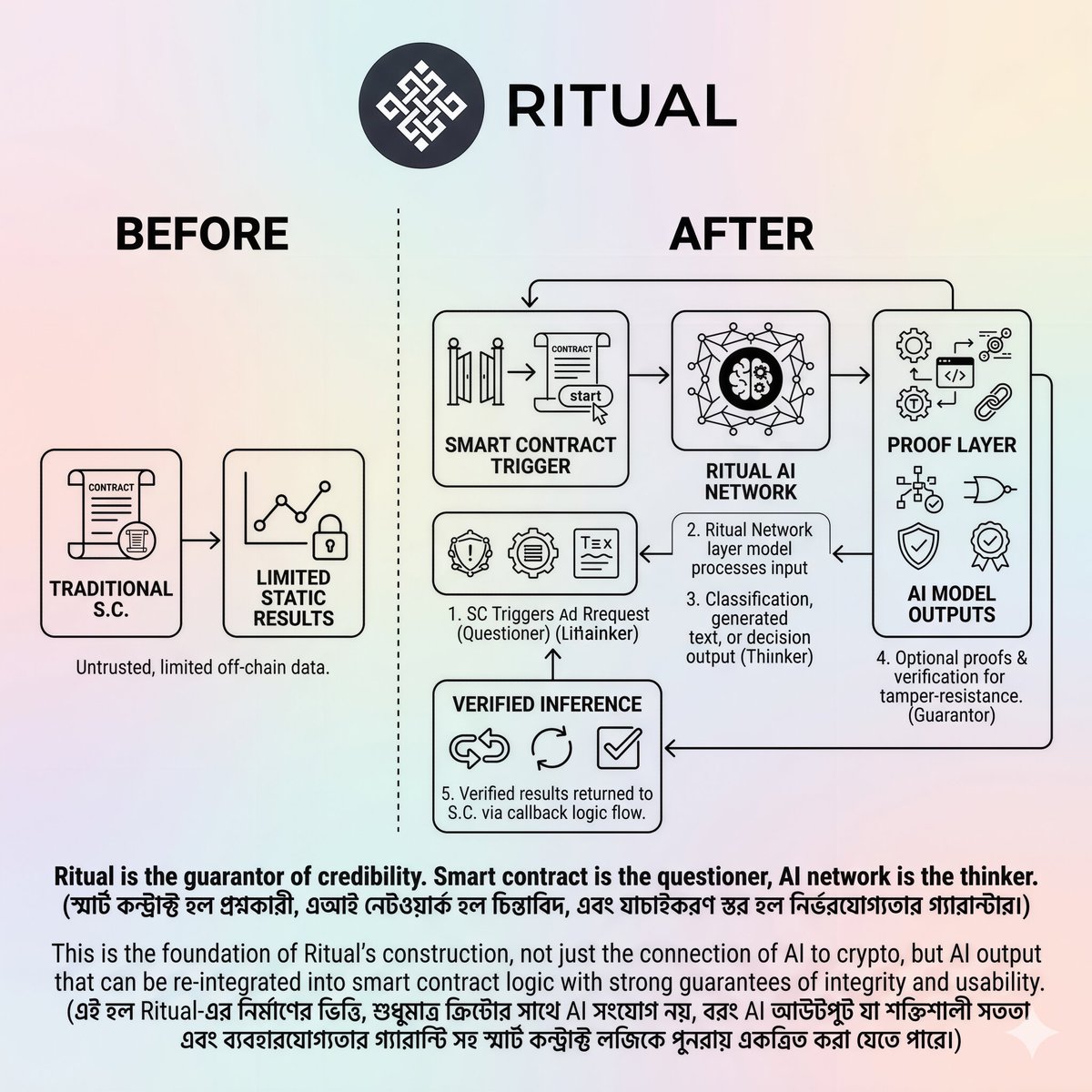

𝗠𝗼𝘀𝘁 𝗔𝗜 𝘀𝘆𝘀𝘁𝗲𝗺𝘀 𝗮𝗿𝗲 𝗯𝗼𝗴𝗴𝗲𝗱 𝗱𝗼𝘄𝗻 𝗯𝘆 𝗮 𝗰𝗼𝗺𝗽𝗹𝗲𝘅 𝗾𝘂𝗲𝘀𝘁𝗶𝗼𝗻: Do you want the answer now, or do you want proof that the answer is reliable? Fast output is attractive to users, but speed alone does not guarantee reliability.
Latency and verification are competing goals in AI infrastructure. Lower latency makes the system feel smoother, while strong verification limits the opportunity to manipulate or falsify results.
@ritualnet's Infernet does not treat this conflict as a permanent either/or choice. Its design provides an integrated framework for handling inference requests according to the needs of each situation. Infernet supports multiple inference services and request flows, and can optionally add a proof mechanism to ensure computational authenticity where necessary.
𝗧𝗵𝗲 𝗸𝗲𝘆 𝗾𝘂𝗲𝘀𝘁𝗶𝗼𝗻 𝘁𝗵𝗲𝗿𝗲𝗳𝗼𝗿𝗲 𝗯𝗲𝗰𝗼𝗺𝗲𝘀: when is speed enough, and when is higher certainty about the result essential?
Not every use case carries the same risk. Some requests can be handled with limited verification, while others require more rigorous proof before they can be trusted. Getting answers quickly means solving the problem and moving forward. Verification is the second layer, where the correctness of the work is checked. Removing this layer increases speed but also increases reliance on assumptions. Demanding full guarantees in every case, on the other hand, makes the system cumbersome.
The intelligent path is not to choose one side forever, but to build infrastructure capable of handling both needs. Infernet points toward that balance: neither blanket distrust nor impractical overhead, but a combination of the two that adapts to the situation. @ritualfnd @joshsimenhoff @Jez_Cryptoz
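As an illustration of deciding per request when speed is enough, here is a hypothetical Python policy. It is not Infernet's API or Ritual's logic: `InferenceRequest`, `value_at_risk`, `PROOF_THRESHOLD`, and `plan` are made-up names, and a real system would weigh more than a single number. It only shows the shape of the idea: low-stakes requests take a fast path, high-stakes ones ask for a proof.

```python
from dataclasses import dataclass

@dataclass
class InferenceRequest:
    task: str
    value_at_risk: float  # hypothetical: cost to the caller if the output is wrong

PROOF_THRESHOLD = 1_000.0  # hypothetical cutoff, chosen only for illustration

def plan(req: InferenceRequest) -> dict:
    """Pick a fast path or a proved path for one request (illustrative policy only)."""
    if req.value_at_risk >= PROOF_THRESHOLD:
        return {"task": req.task, "path": "proved", "attach_proof": True}
    return {"task": req.task, "path": "fast", "attach_proof": False}

requests = [
    InferenceRequest("summarize a chat thread", value_at_risk=1.0),
    InferenceRequest("price a loan on-chain", value_at_risk=50_000.0),
]
for r in requests:
    print(plan(r))
```

Keeping the decision per request, rather than global, is what lets the same infrastructure serve both fast, low-risk calls and slower, verified ones.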