John Lam

7.2K posts

@john_lam

I work on building our AI coding experiences @Microsoft. Accidentally co-created GitHub spec-kit a few months ago (https://t.co/B2UXf4w6Cs).

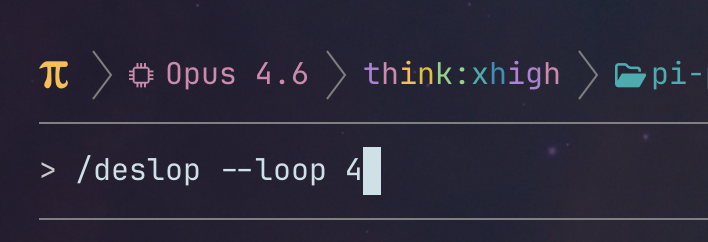

going to try pi-autoresearch to see if we can optimize startup time and memory usage in pi that way. excited to burn some tokens for something worthwhile! github.com/davebcn87/pi-a…

Random thing that improved my life: I got this ring light that I put next to my desk to shine bright light in my eyes early in the morning. I wake up at 4:30am and definitely saw an improvement in morning alertness and sleep quality. Also felt like it helped avoid winter lows.

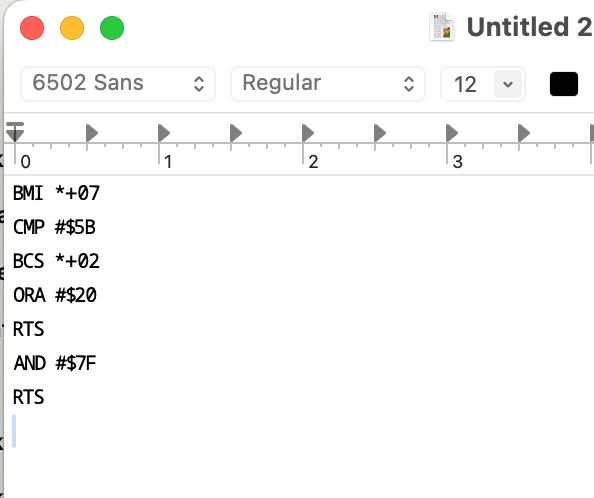

Just added a convenient way to chain prompt templates (slash commands) in Pi coding agent. Each step runs a different prompt template with its own model, skill, and thinking level. Install: `pi install npm:pi-prompt-template-model` github.com/nicobailon/pi-…

🆕 How to Kill The Code Review (latent.space/p/reviews-dead): the volume and size of PRs are skyrocketing. @simonw called out StrongDM’s “Dark Factory” last month: no human code, but *also* no human review (!?). In this week’s guest post, @ankitxg lays out a 5-step layered playbook for how this can come true.

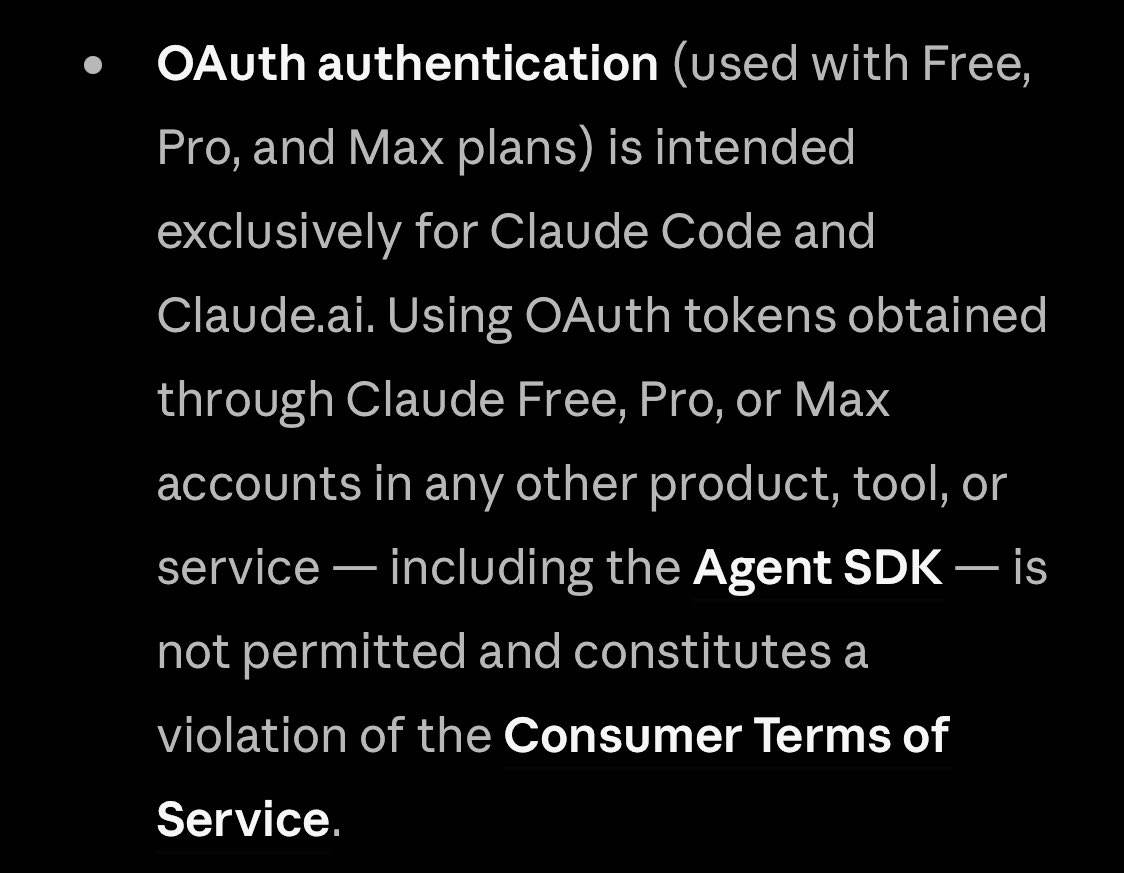

Major Claude Code policy clear up from Anthropic: "Using OAuth tokens obtained through Claude Free, Pro, or Max accounts in any other product, tool, or service — including the Agent SDK — is not permitted"

A super interesting new study from Harvard Business Review. An 8-month field study at a US tech company with about 200 employees found that AI use did not shrink work, it intensified it, and made employees busier. Task expansion happened because AI filled in gaps in knowledge, so people started doing work that used to belong to other roles or would have been outsourced or deferred. That shift created extra coordination and review work for specialists, including fixing AI-assisted drafts and coaching colleagues whose work was only partly correct or complete. Boundaries blurred because starting became as easy as writing a prompt, so work slipped into lunch, meetings, and the minutes right before stepping away. Multitasking rose because people ran multiple AI threads at once and kept checking outputs, which increased attention switching and mental load. Over time, this faster rhythm raised expectations for speed through what became visible and normal, even without explicit pressure from managers.