backofbeyond

142 posts

@beyond862

Can railing, then, cure these worn maladies?

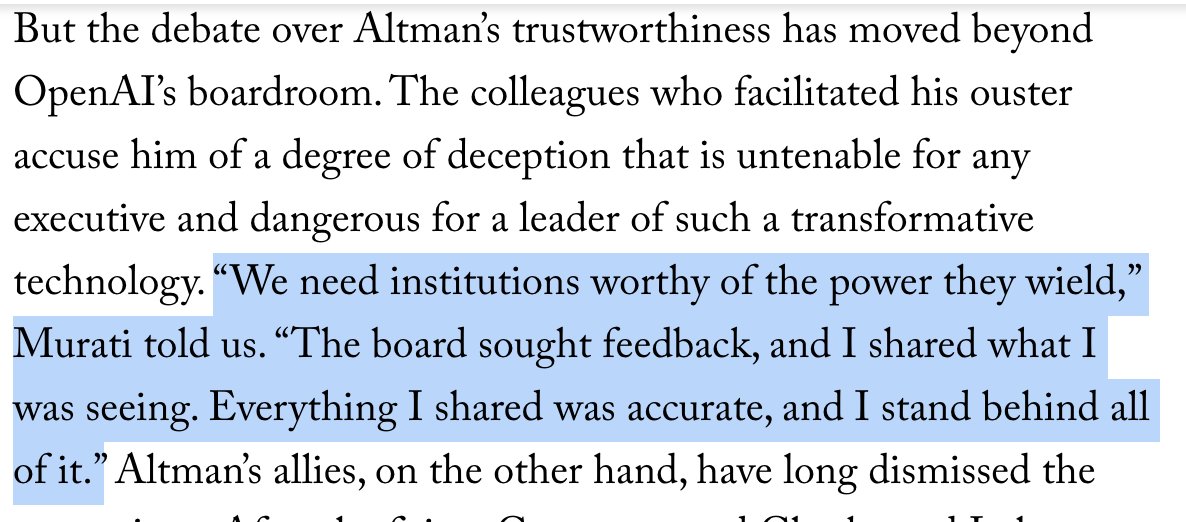

New interviews and closely guarded documents, some of which have never been publicly disclosed, shed light on the persistent doubts about the OpenAI C.E.O. Sam Altman. @AndrewMarantz and @RonanFarrow report. newyorkermag.visitlink.me/ejw-Ob

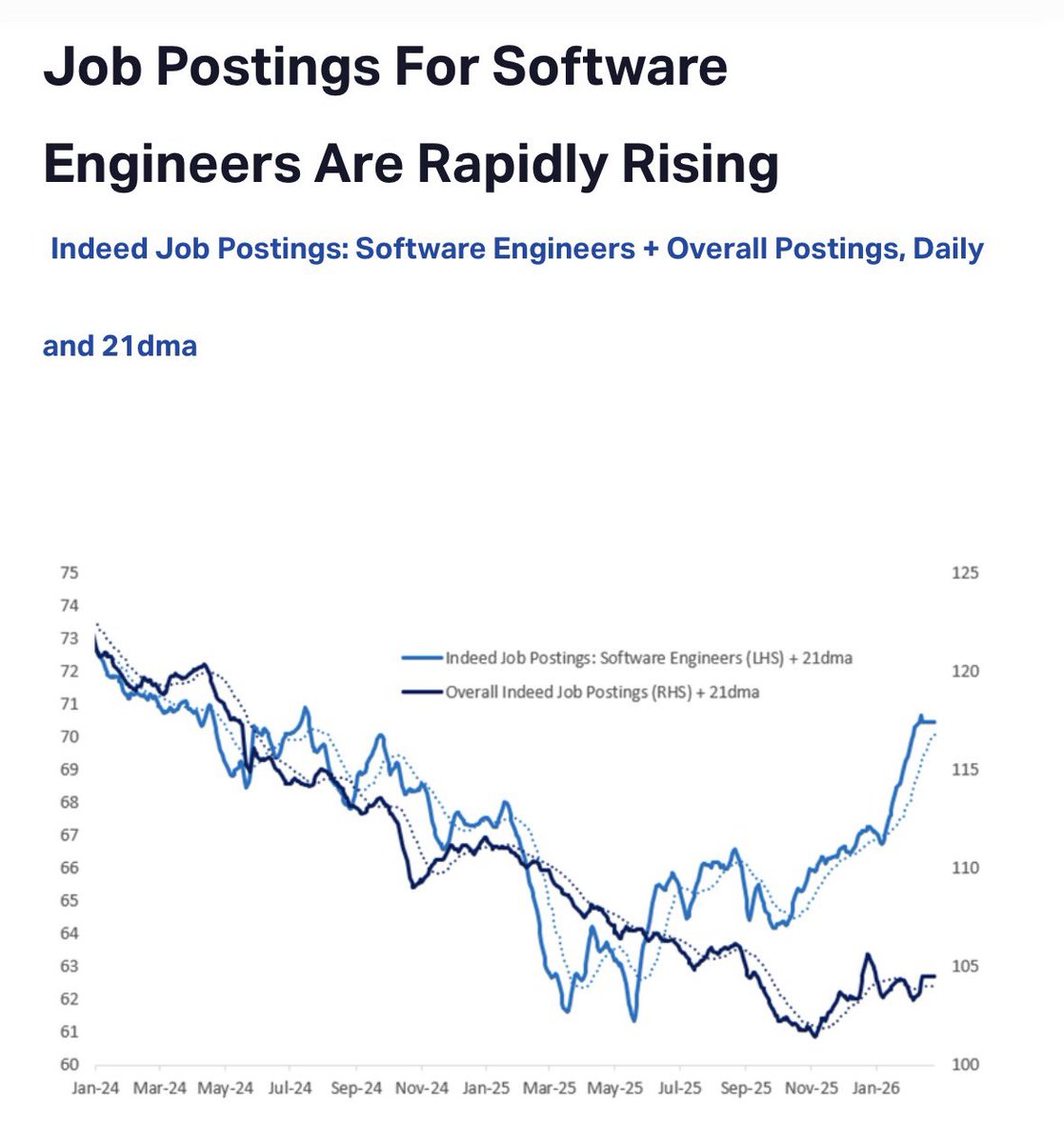

Citadel elegantly murders the Citrini post here citadelsecurities.com/news-and-insig…

The jobs humans alone can do may not exist in sufficient numbers to offset displacement. That’s why many economists argue for structural solutions like universal basic income, reduced working hours, or redefining “work” itself. Perhaps the real answer is that AI will force us to rethink the idea of “jobs” altogether. Instead of trying to replace every lost role with a new one, societies may shift toward valuing human flourishing, creativity, and care as central activities—supported by AI productivity. In that sense, the “new jobs” may not be jobs at all, but new social roles humans carve out for meaning and community.

Leftists should support AI because it’s the only realistic path towards the abolition of labor, even if that requires enduring shitty slop videos for a few years

Gemini Nano Banana Pro can solve exam questions *in* the exam page image. With doodles, diagrams, all that. ChatGPT thinks these solutions are all correct except Se_2P_2 should be "diselenium diphosphide" and a spelling mistake (should be "thiocyanic acid" not "thoicyanic") :O