Egg Syntax

@eggsyntax

'If you know before you look, you cannot see for knowing.' (Terry Frost)

Is it possible to coordinate with China on AI governance? Critics of our proposed international agreement say no. But statements from Chinese government officials and academic figures paint a more optimistic picture:

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.

I think this talk of a character misleads. Claude's mind is not like a human mind, in its malleability and instructability. But when generating assistant tokens, it's no more 'playing a character' than I am.

It helps to remember that Claude is a character the model is playing. Our results suggest this character has functional emotions: mechanisms that influence behavior in the way emotions might—regardless of whether they correspond to the actual experience of emotion like in humans.

“Stochastic parrot” is such a potent coinage — so fun to say! so conceptually efficient! — that it seems to have permanently colonized a lot of people’s minds despite not being true of today’s models. Genuinely a linguistic work of art.

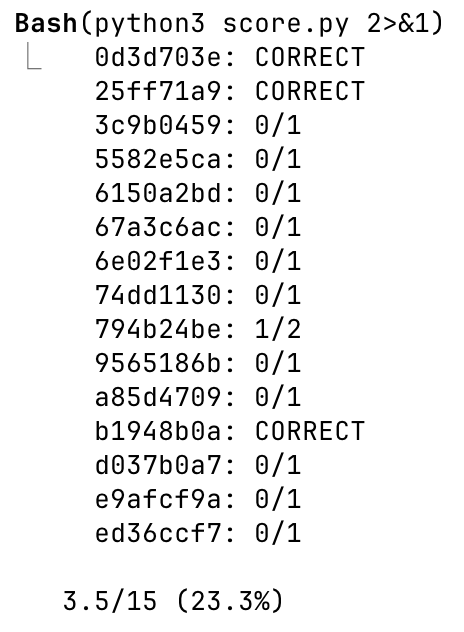

The masculine urge to try to hack a new solution to ARC-AGI benchmarks