Igor Vasiljevic

@vslevic

ML @Woven_ToyotaJP; Senior Research Scientist @ToyotaResearch. Surf @ Santa Cruz and Ichinomiya. PhD @TTIC_Connect, @UChicago alum.

TRI's latest Large Behavior Model (LBM) paper landed on arXiv last night! Check out our project website: toyotaresearchinstitute.github.io/lbm1/ One of our main goals for this paper was to put out a very careful and thorough study on the topic to help people understand the state of the technology, and to share a lot of details on how we're achieving it. youtube.com/watch?v=BEXFnr…

#CVPR2025 starts in two days, and we can’t wait to share our new work! 🎉 We present ZeroGrasp, a unified framework for 3D reconstruction and grasp prediction that generalizes to unseen objects. Paper📄: arxiv.org/abs/2504.10857 Webpage🌐: sh8.io/#/zerograsp (1/4 🧵)

Thank you to the whole OpenThoughts team for yet another great effort! @etash_guha, @ryanmart3n, @sedrickkeh2, @NeginRaoof_, @GeorgeSmyrnis1, @hbXNov, @marnezhurina, @MercatJean, @trungthvu, @ZayneSprague, @suvarna_ashima, @FeuerBenjamin, @cliangyu_, @codezakh, @esfrankel, @sachingrover, @carolineschoi, @Muennighoff, @shiye_su, @WanjiaZhao1203, @jyangballin, @sayshrey, @kartiks26387917, @charlie_jcj02, @YCEthanDeng, @sarahmhpratt, @RamanujanVivek, @JonSaadFalcon, @jeffwpli, @achalddave, @AlbalakAlon, @karora4u, @wulfebw, @chegday, @gregd_nlp, @sewoong79, @mohitban47, @GabrielSaadia, @adityagrover_, @kaiwei_chang, @Vaishaal, @SkyLi0n, @Mike_A_Merrill, @tatsu_hashimoto, @YejinChoinka, @JJitsev, @HeckelReinhard, @madiator, @AlexGDimakis, @lschmidt3 (N/N)

Announcing the Open Thoughts project. We are building the best reasoning datasets out in the open. Building off our work with Stratos, today we are releasing OpenThoughts-114k and OpenThinker-7B.

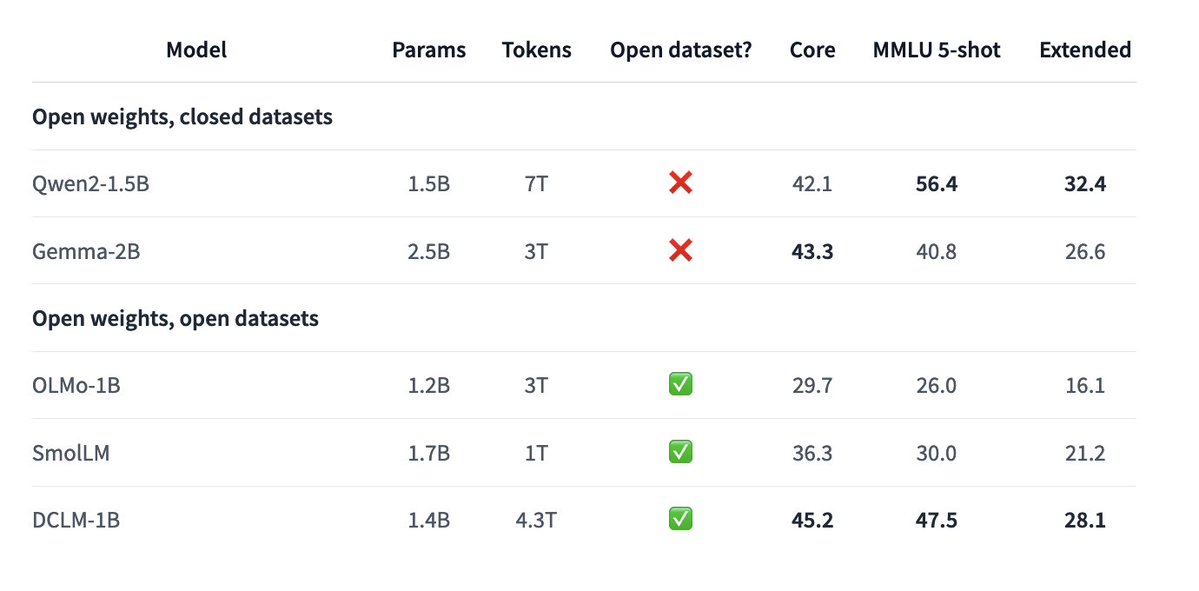

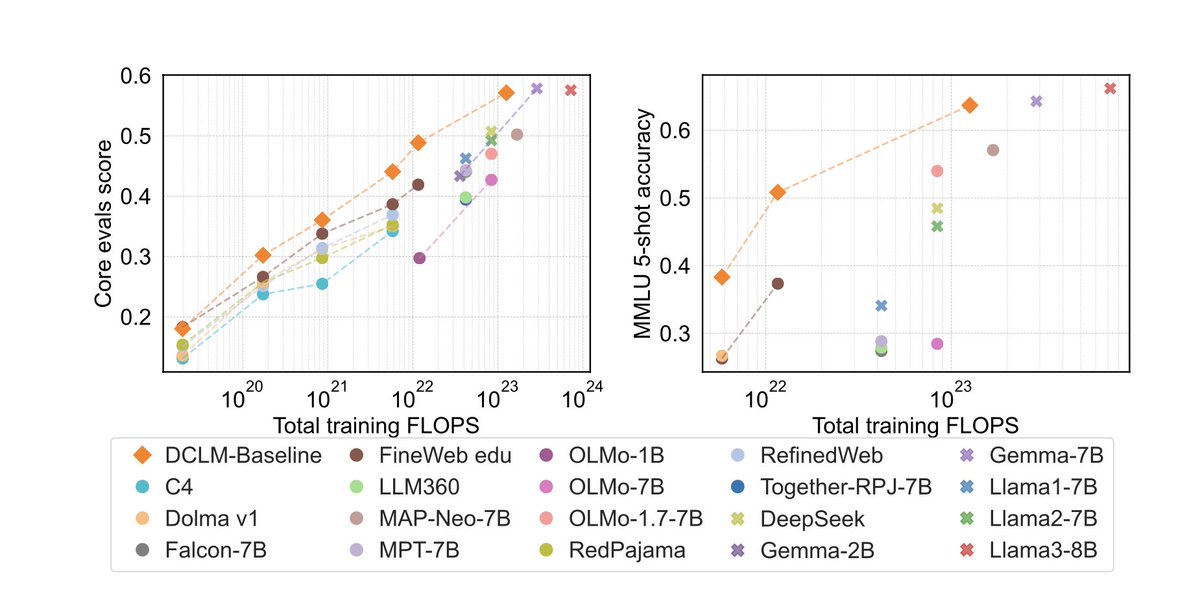

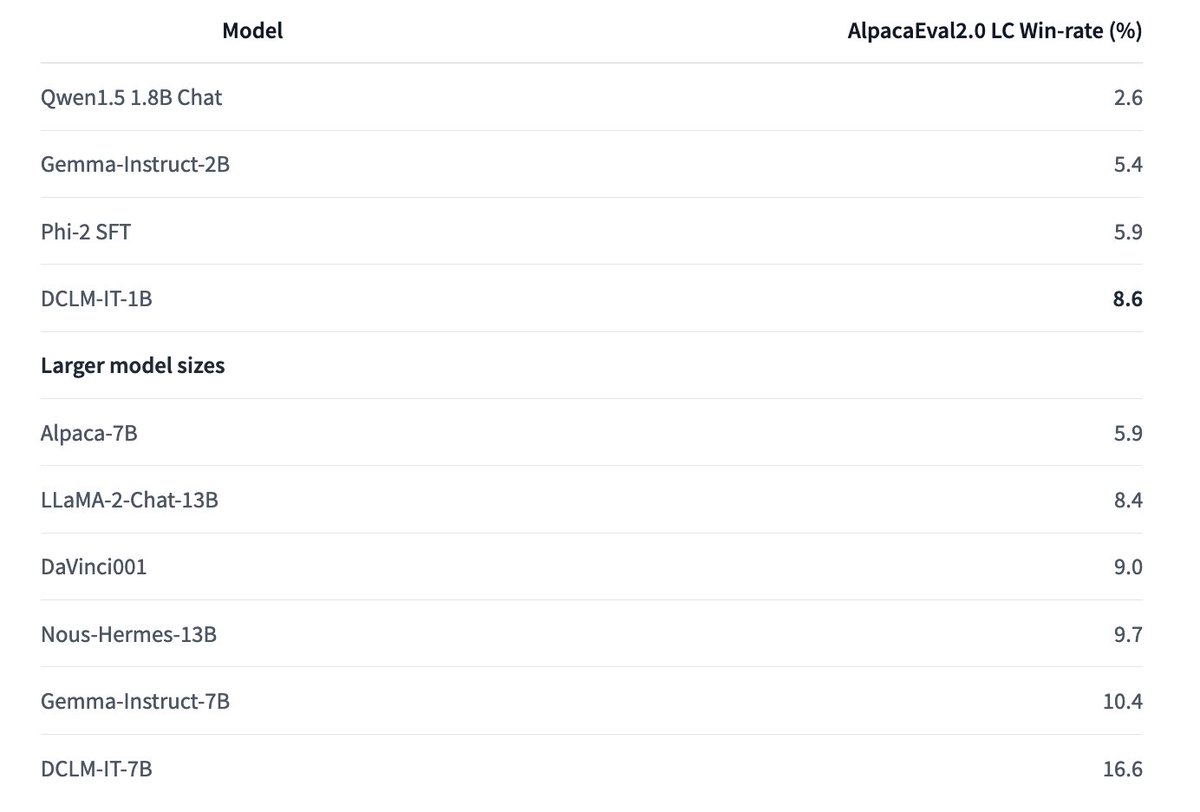

Excited to share our new-and-improved 1B models trained with DataComp-LM!
- 1.4B model trained on 4.3T tokens
- 5-shot MMLU 47.5 (base model) => 51.4 (w/ instruction tuning)
- Fully open models: public code, weights, dataset!

I am really excited to introduce DataComp for Language Models (DCLM), our new testbed for controlled dataset experiments aimed at improving language models. 1/x