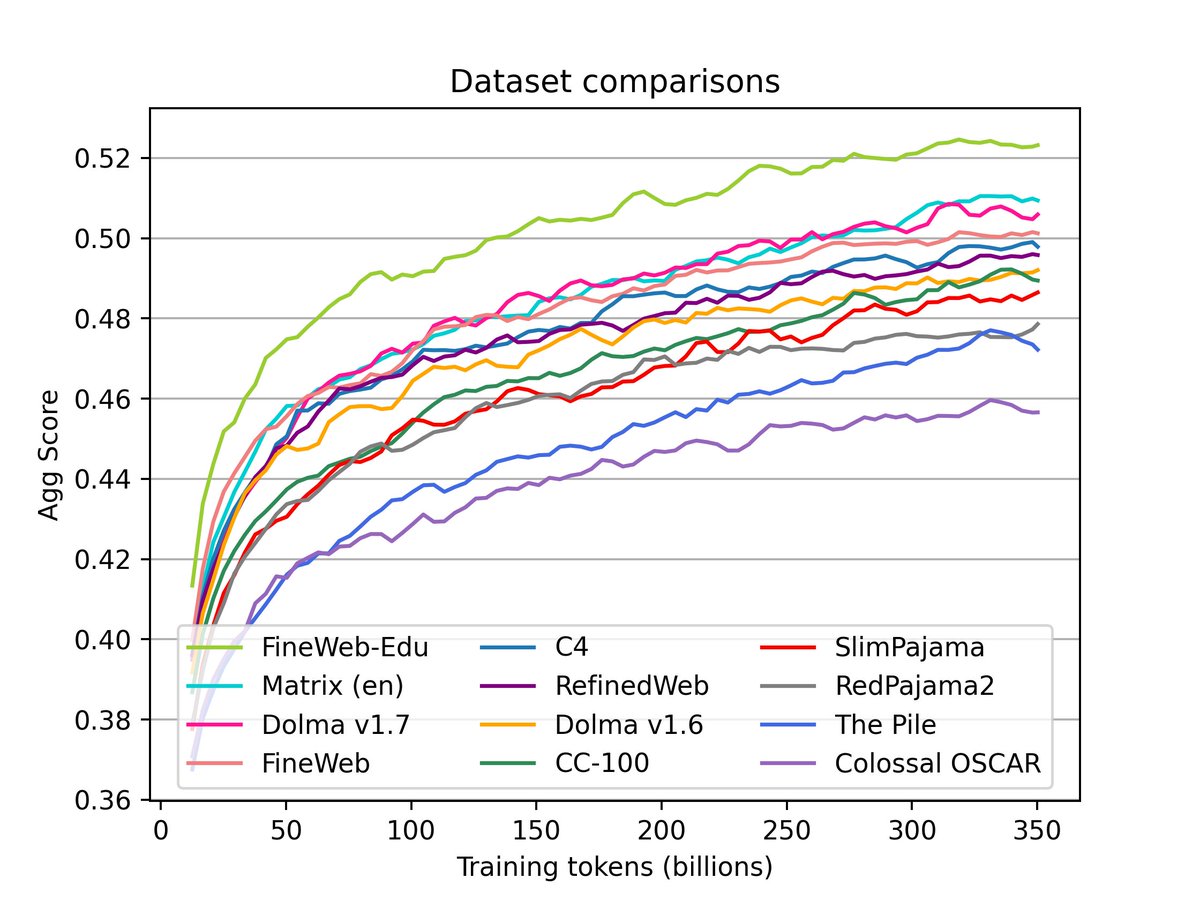

The very first workshop on multilingual data quality signals (WMDQS 🦆) is kicking off tomorrow at #COLM2025

Workshop on Multilingual Data Quality Signals (@wmdqs)

In collaboration with @CommonCrawl @MLCommons @AiEleuther, the first edition of WMDQS at @COLM_conf starts tomorrow in Room 520A! We have an updated schedule on our website, including a list of all accepted papers.
